Circuit Fingerprints: How Answer Tokens Encode Their Geometrical Path

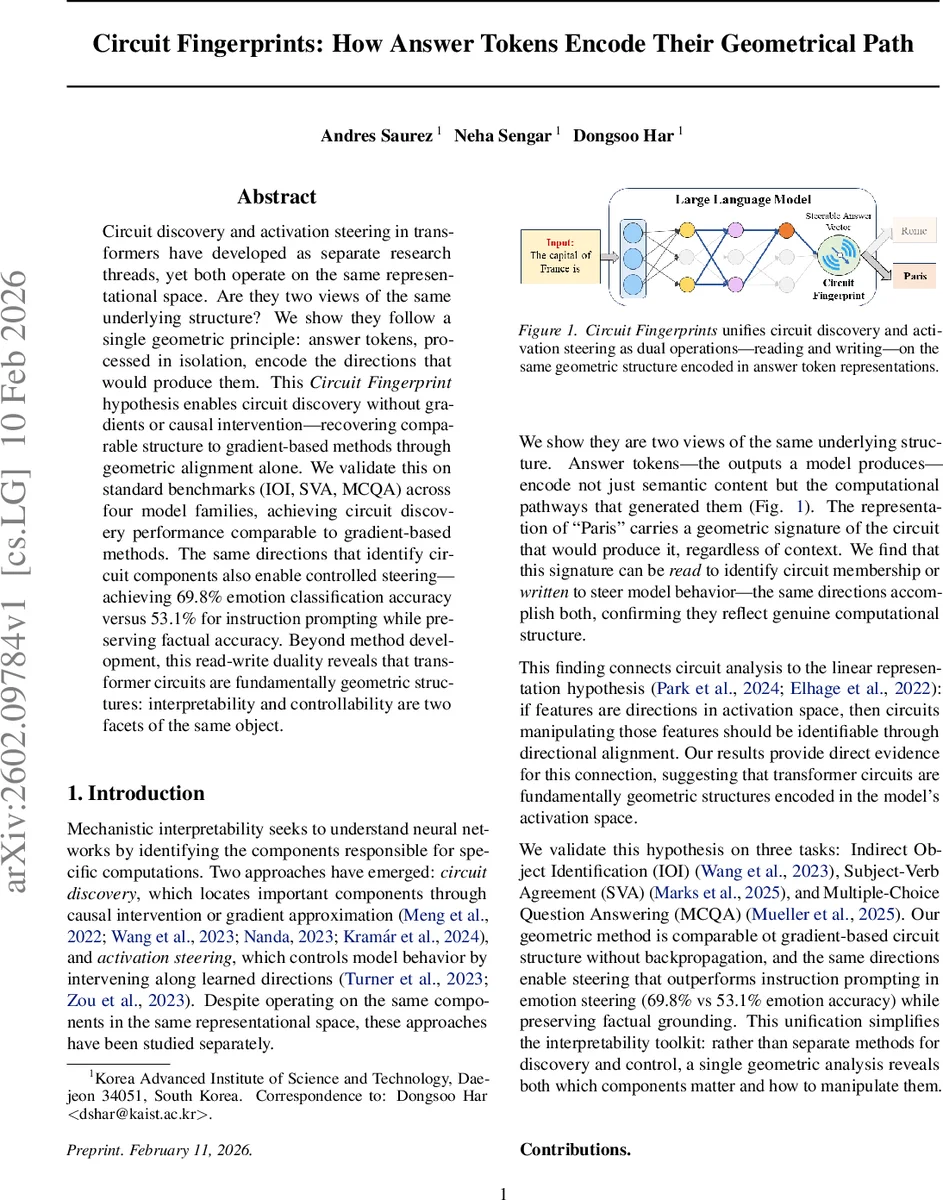

Circuit discovery and activation steering in transformers have developed as separate research threads, yet both operate on the same representational space. Are they two views of the same underlying structure? We show they follow a single geometric principle: answer tokens, processed in isolation, encode the directions that would produce them. This Circuit Fingerprint hypothesis enables circuit discovery without gradients or causal intervention – recovering comparable structure to gradient-based methods through geometric alignment alone. We validate this on standard benchmarks (IOI, SVA, MCQA) across four model families, achieving circuit discovery performance comparable to gradient-based methods. The same directions that identify circuit components also enable controlled steering – achieving 69.8% emotion classification accuracy versus 53.1% for instruction prompting while preserving factual accuracy. Beyond method development, this read-write duality reveals that transformer circuits are fundamentally geometric structures: interpretability and controllability are two facets of the same object.

💡 Research Summary

The paper introduces the “Circuit Fingerprint” hypothesis, which posits that answer tokens processed in isolation encode the geometric directions of the internal transformer circuits that generate them. In other words, the vector difference between the final‑layer residual representations of a correct answer token (a⁺) and an incorrect answer token (a⁻) – Δr(L) = r(L){a⁺} – r(L){a⁻} – captures a direction in activation space that is written by every component belonging to the underlying circuit. By extracting this direction and aligning it with component‑wise activation differences induced by contrasting prompts, the authors demonstrate that one can identify circuit members without any gradient computation or causal intervention.

The method proceeds in two complementary phases: “reading” (circuit discovery) and “writing” (activation steering). For reading, the answer‑token direction Δr(L) is projected back into each component’s native space using the component’s output projection matrix (W_c). The transformed target ˆt_c = W_c^T Δr(L) is then compared to the component’s differential output Δo_c (obtained by feeding a clean versus a corrupted prompt) via an inner product S_c = ⟨Δo_c, ˆt_c⟩. This yields a direct importance score for every attention head and MLP. To capture indirect influence, the authors decompose the residual stream into edge‑level contributions. For each downstream head j they compute channel‑specific directions (Δq, Δk, Δv) and project them back to the residual space, then evaluate how much each upstream component i contributes to these channels using ratios R^{i→j}_Q, R^{i→j}_K, R^{i→j}V. Because Q, K, and V interact non‑linearly, the authors employ Shapley values to weight the three channels fairly. The Shapley value for a channel (e.g., ϕ_Q) is derived by averaging marginal contributions across all subsets of channels, requiring evaluation of eight coalitions per head. Edge importance is then combined as E{i→j} = S_j·(ϕ_Q·R^{i→j}_Q + ϕ_K·R^{i→j}_K + ϕ_V·R^{i→j}_V). A backward pass aggregates direct and indirect scores to produce a total importance T_c for each component (Algorithm 1).

For writing, the same geometric directions are used to steer model behavior. The authors extract a direction that separates two target sentiments (e.g., “positive” vs. “negative”) and add a scaled version of this vector to the residual stream at inference time. This simple intervention causes the model to output the desired sentiment with substantially higher probability. Empirically, on an emotion‑classification task, the geometric steering achieves 69.8 % accuracy, outperforming a strong instruction‑prompt baseline (53.1 %) while preserving factual correctness.

The hypothesis and methodology are validated across three standard mechanistic interpretability benchmarks—Indirect Object Identification (IOI), Subject‑Verb Agreement (SVA), and Multiple‑Choice Question Answering (MCQA)—and four model families (GPT‑2 Small, Qwen2.5‑0.5B, Llama 3.2‑1B, among others). In the reading experiments, the component importance rankings derived from answer‑token alignment closely match those obtained from gradient‑based methods such as EAP‑IG and integrated gradients, correctly highlighting the same critical attention heads (typically in layers 9‑11) and MLPs. In the steering experiments, the same directions that identify circuit members also serve as effective control vectors, demonstrating the read‑write duality claimed by the authors.

Key contributions are: (1) proposing the Circuit Fingerprint hypothesis that bridges interpretability and controllability via a shared geometric representation; (2) presenting a gradient‑free circuit discovery pipeline that relies solely on inner‑product alignment between answer‑token directions and prompt‑induced activation differences; (3) showing that the extracted directions can be reused for targeted model steering, achieving state‑of‑the‑art performance on emotion steering while maintaining factual grounding; (4) providing a principled Shapley‑based decomposition of attention‑head channels to fairly attribute importance across Q, K, and V pathways.

Overall, the work suggests that transformer circuits are fundamentally geometric structures embedded in activation space. By treating answer tokens as carriers of their own generation pathways, one can both read (identify) and write (manipulate) these structures with a single, efficient geometric analysis. This unifies two previously separate research threads—circuit discovery and activation steering—into a coherent framework, opening the door to scalable, interpretable, and controllable AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment