Aligning Tree-Search Policies with Fixed Token Budgets in Test-Time Scaling of LLMs

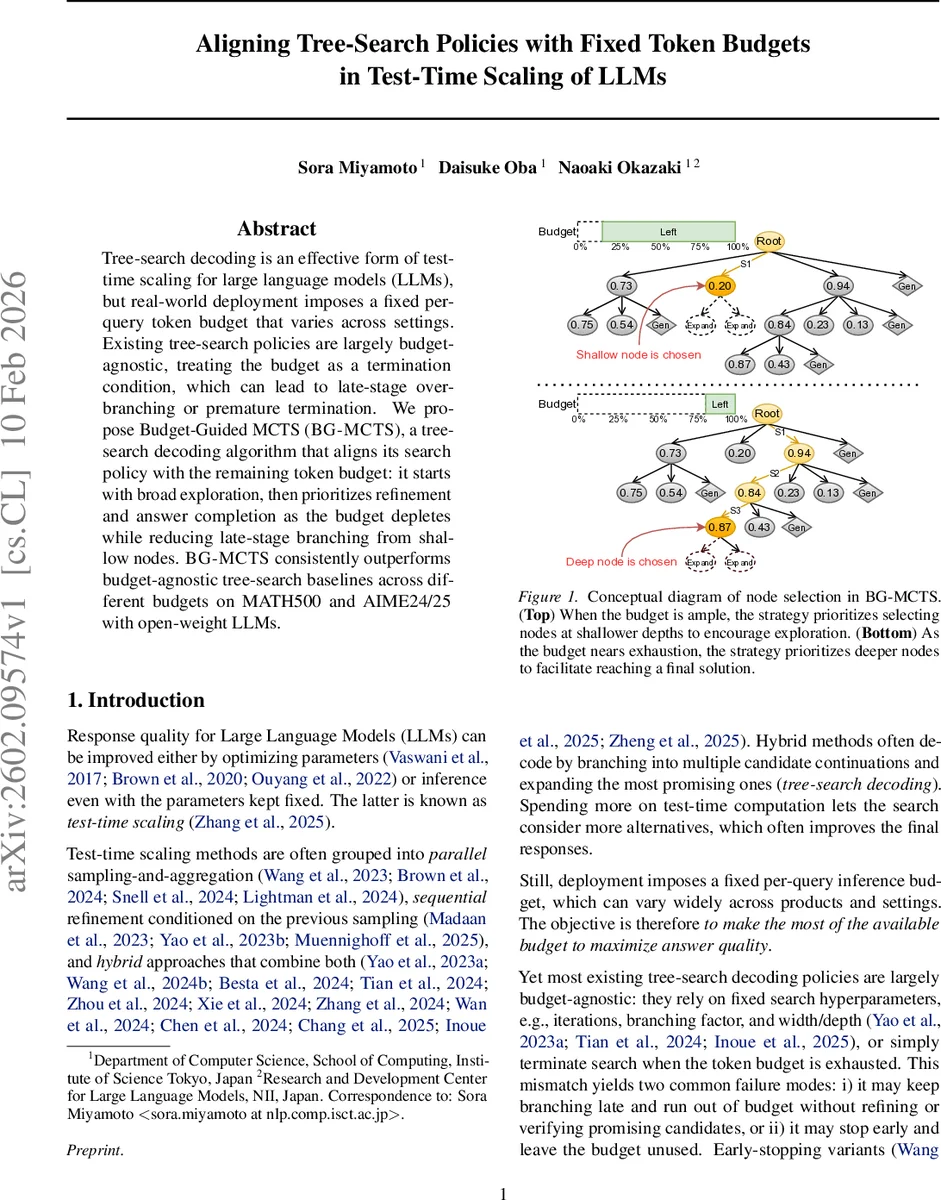

Tree-search decoding is an effective form of test-time scaling for large language models (LLMs), but real-world deployment imposes a fixed per-query token budget that varies across settings. Existing tree-search policies are largely budget-agnostic, treating the budget as a termination condition, which can lead to late-stage over-branching or premature termination. We propose {Budget-Guided MCTS} (BG-MCTS), a tree-search decoding algorithm that aligns its search policy with the remaining token budget: it starts with broad exploration, then prioritizes refinement and answer completion as the budget depletes while reducing late-stage branching from shallow nodes. BG-MCTS consistently outperforms budget-agnostic tree-search baselines across different budgets on MATH500 and AIME24/25 with open-weight LLMs.

💡 Research Summary

The paper addresses a practical limitation of test‑time scaling for large language models (LLMs): real‑world deployments often impose a fixed per‑query token budget that can vary across products and use‑cases. Existing tree‑search decoding methods treat the budget merely as a termination condition, which leads to two common failure modes: late‑stage over‑branching that exhausts the budget before promising candidates can be refined, and premature stopping that leaves the budget under‑utilized. To solve this, the authors propose Budget‑Guided Monte Carlo Tree Search (BG‑MCTS), a decoding algorithm that conditions every decision on the remaining token budget.

The core idea is to track the “budget sufficiency ratio” ρ = 1 − C_used/B, where C_used is the cumulative number of tokens generated across the entire search. When ρ is close to 1 (early stage), the algorithm behaves like standard MCTS, encouraging broad exploration. As ρ approaches 0 (late stage), exploration is gradually annealed and the search shifts toward deepening and refining the most promising branches.

Two budget‑aware mechanisms are introduced:

-

Budget‑Guided Selection (BG‑PUCT). The classic PUCT score is modified to include ρ in the exploration term (ρ · c · P(s|p) · ln(m_p/m_s)) and to replace the accumulated value W(s) with a budget‑conditioned value \tilde W(s,ρ). The latter adds a depth‑biased completion bonus κ·(1 − ρ)·d(x)/\hat d_ans, where d(x) is node depth and \hat d_ans is an estimate of the typical answer‑completion depth. This bias is negligible early on but becomes strong near the end of the budget, steering the search toward nodes that are closer to a final answer.

-

Budget‑Guided Widening. Each internal node p is equipped with a virtual “generate‑new‑child” option s_gen(p). Selecting this option triggers the creation of a new actual child, effectively widening the tree at p. The score for this option is E_gen(p,ρ) = μ(p) + λ·ρ·σ²(p), where μ(p) and σ²(p) are the mean and variance of the Q‑values of existing children. High variance (high uncertainty) encourages widening, but the factor ρ ensures that this encouragement fades as the budget runs out, preventing wasteful late‑stage branching.

The algorithm proceeds iteratively: compute ρ, select a child (standard or virtual) using the unified score, expand the chosen node (either generate k new children for a leaf or a single child for widening), evaluate each new node with a reward model (GenPRM‑7B), back‑propagate the values, and update C_used. The loop stops when C_used ≥ B, and the best completed answer (highest Q) among all leaf nodes is returned.

Experiments are conducted on two mathematical reasoning benchmarks, MATH500 and AIME24/25, using two open‑weight instruction‑tuned LLMs under 8‑10 B parameters: Llama‑3.1‑8B‑Instruct and Qwen‑2.5‑7B‑Instruct. The token budgets tested are 10 k, 20 k, and 30 k tokens per problem. Baselines include Repeated Sampling (parallel scaling), Sequential Refinement (sequential scaling), vanilla MCTS, AB‑MCTS‑M, and LiteSearch (early‑stopping hybrid). BG‑MCTS consistently outperforms all baselines across every budget, achieving 2–5 percentage‑point gains in answer accuracy. The advantage is most pronounced at the smallest budget (10 k), where the ability to suppress late‑stage branching and focus on deep refinement yields the greatest efficiency.

Ablation studies explore variants without the ρ‑dependent annealing, with fixed κ and λ values, and without the virtual child mechanism. All ablations confirm that conditioning both selection and widening on the remaining budget is essential for the observed performance gains. The authors also discuss limitations: reliance on a high‑quality reward model, the current focus on token‑count as the sole cost metric (ignoring GPU time or memory), and the need to extend the framework to multi‑constraint optimization. Future work is suggested on integrating more sophisticated cost models, applying the method to larger LLMs, and exploring adaptive reward functions that better capture reasoning quality.

In summary, BG‑MCTS offers a principled, budget‑aware adaptation of Monte Carlo tree search for LLM decoding, turning a fixed token budget from a hard constraint into a guiding signal that dynamically balances exploration and exploitation. This results in more reliable, higher‑quality answers without increasing inference cost, making test‑time scaling more practical for real‑world deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment