Comprehensive Comparison of RAG Methods Across Multi-Domain Conversational QA

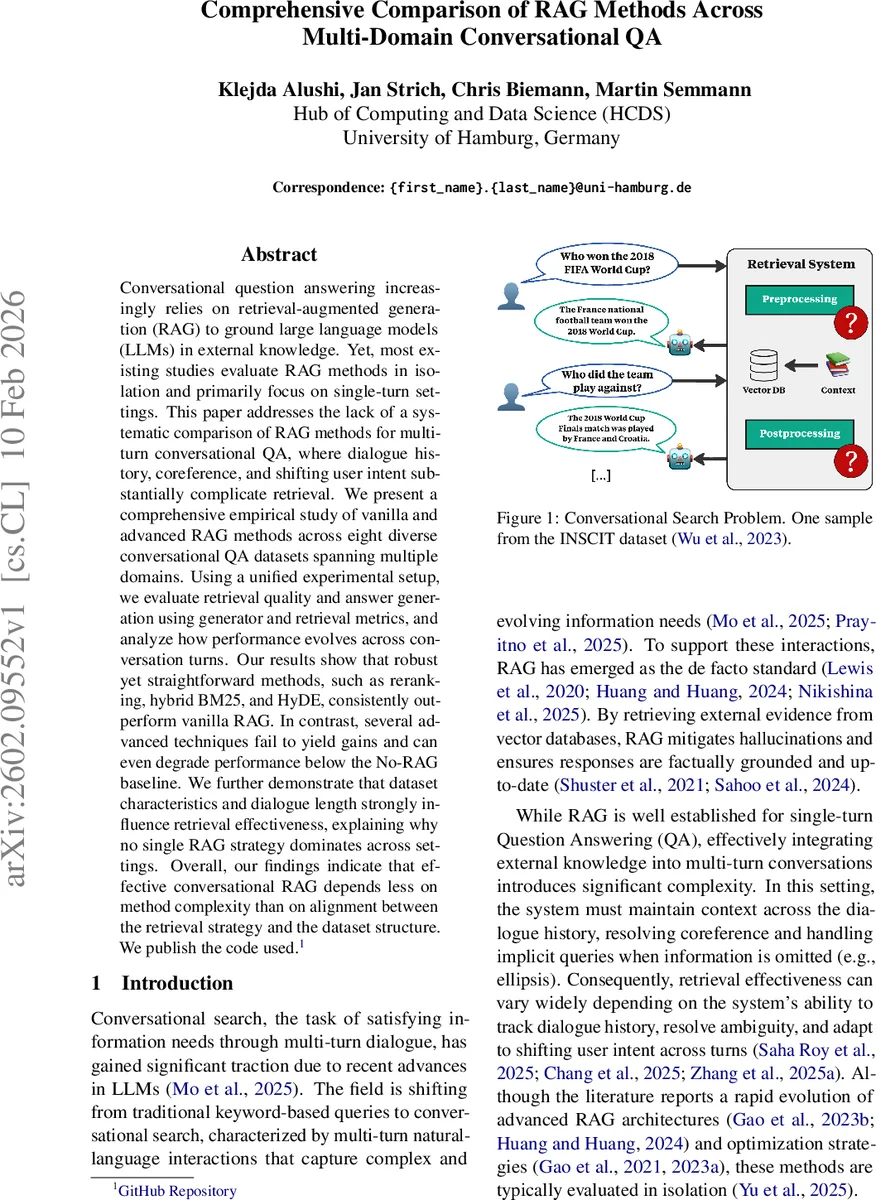

Conversational question answering increasingly relies on retrieval-augmented generation (RAG) to ground large language models (LLMs) in external knowledge. Yet, most existing studies evaluate RAG methods in isolation and primarily focus on single-turn settings. This paper addresses the lack of a systematic comparison of RAG methods for multi-turn conversational QA, where dialogue history, coreference, and shifting user intent substantially complicate retrieval. We present a comprehensive empirical study of vanilla and advanced RAG methods across eight diverse conversational QA datasets spanning multiple domains. Using a unified experimental setup, we evaluate retrieval quality and answer generation using generator and retrieval metrics, and analyze how performance evolves across conversation turns. Our results show that robust yet straightforward methods, such as reranking, hybrid BM25, and HyDE, consistently outperform vanilla RAG. In contrast, several advanced techniques fail to yield gains and can even degrade performance below the No-RAG baseline. We further demonstrate that dataset characteristics and dialogue length strongly influence retrieval effectiveness, explaining why no single RAG strategy dominates across settings. Overall, our findings indicate that effective conversational RAG depends less on method complexity than on alignment between the retrieval strategy and the dataset structure. We publish the code used.\footnote{\href{https://github.com/Klejda-A/exp-rag.git}{GitHub Repository}}

💡 Research Summary

This paper presents the first large‑scale systematic comparison of retrieval‑augmented generation (RAG) techniques for multi‑turn conversational question answering (QA) across diverse domains. While prior work has largely evaluated RAG methods in isolation and focused on single‑turn settings, the authors address the gap by examining how different retrieval strategies perform when dialogue history, coreference, and shifting user intent complicate the retrieval process.

Eight conversational QA datasets are selected from the ChatRAG‑Bench suite: Sequential QA (SQA), QuAC, CoQA, DoQA, Doc2Dial, QReCC, TopicQA, and INSCIT. These datasets span a range of domains (Wikipedia, StackExchange, social‑welfare, etc.) and exhibit varied structural properties, such as context‑to‑question token ratios, average context length (≈100‑500 tokens), and dialogue depth (up to 10 turns). The authors preprocess all datasets into a unified format, linearizing multi‑turn dialogues and normalising tokenisation with the Llama 3.3 tokenizer.

Two baselines are defined: “No‑RAG”, where the large language model (LLM) receives only the dialogue history, and “Oracle‑Context”, where the gold‑standard context is supplied directly to the LLM, establishing lower and upper performance bounds. Seven RAG configurations are evaluated: (1) Basic dense embedding retrieval (Base RAG); (2) Sparse BM25; (3) Hybrid BM25 (sparse + dense); (4) Cross‑encoder reranker; (5) HyDE (hypothetical‑answer‑driven query expansion); (6) Query Rewriting; (7) Summarization, SumContext, and HyDE‑Reranker as post‑processing variants. All experiments use the EncouRAGE library with Llama 3 8B Instruct as the generator, ensuring a consistent generation backbone.

Evaluation metrics cover both retrieval quality (Recall@k, MRR) and generation quality (F1, Exact Match). The authors also analyse performance as a function of conversation turn, revealing how retrieval effectiveness evolves over the dialogue.

Key findings:

- Hybrid BM25 and the cross‑encoder reranker consistently outperform pure dense retrieval across most datasets, confirming the benefit of combining lexical overlap with semantic similarity, especially when coreferential expressions and domain‑specific terminology are present.

- HyDE and the HyDE‑Reranker provide notable gains on datasets where questions are under‑specified or heavily dependent on prior turns (e.g., QReCC, TopicQA). By generating a provisional answer and using it as a refined query, these methods boost recall by up to 12 % absolute. However, on short‑context datasets such as CoQA and SQA, the same mechanisms introduce noise and can underperform the No‑RAG baseline.

- Simple summarisation of retrieved documents often harms performance, particularly for long contexts (Doc2Dial, INSCIT), because essential details are lost during compression. SumContext, which retains both the summary and the full document, mitigates this effect but still lags behind hybrid retrieval.

- Turn‑level analysis shows that early turns (1‑2) exhibit modest differences among methods, but performance divergence widens after the third turn. Reranking‑based approaches maintain relatively stable F1 scores in later turns, whereas methods that rely heavily on query rewriting or summarisation degrade sharply as coreference chains lengthen.

- Dataset characteristics strongly dictate which RAG strategy excels. High context‑to‑question token ratios and domain‑specific vocabularies (DoQA, Doc2Dial) favor hybrid lexical‑semantic retrieval, while datasets with extensive question rewriting (QReCC) benefit from HyDE‑style query expansion.

The authors conclude that “method complexity does not guarantee superior results”; instead, alignment between the retrieval strategy and the structural properties of the dataset is paramount. For current LLMs such as Llama 3 8B Instruct, a pragmatic pipeline that combines hybrid BM25 (or a reranker) with lightweight query expansion (HyDE) yields the most robust performance across domains and dialogue depths.

Beyond empirical results, the paper highlights several research directions: (1) developing multi‑turn aware retrievers that explicitly model dialogue state and coreference; (2) constructing benchmark suites that systematically vary context length, domain specificity, and turn count to stress‑test RAG systems; and (3) exploring joint training of retriever and generator to reduce the gap between retrieval quality and generation fidelity.

All code and experimental scripts are released publicly (GitHub repository), facilitating reproducibility and encouraging further exploration of RAG in conversational AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment