AUHead: Realistic Emotional Talking Head Generation via Action Units Control

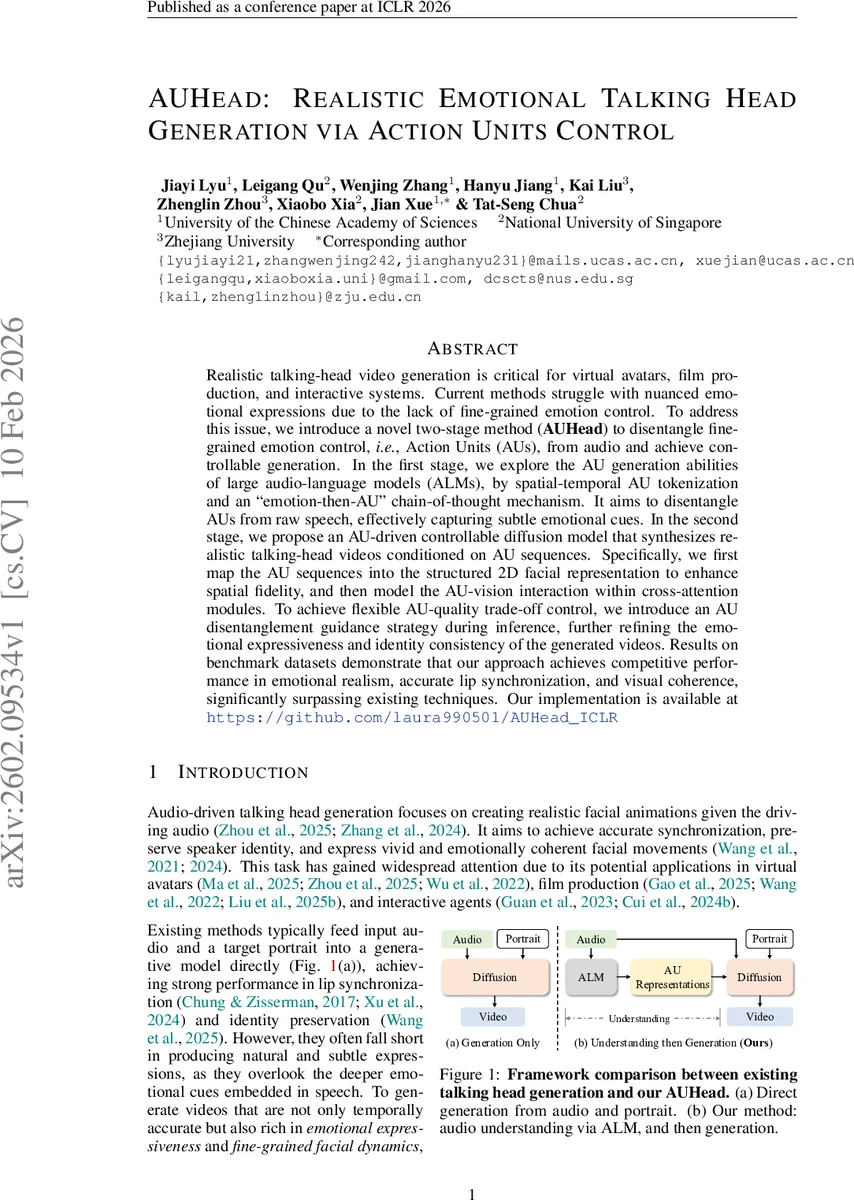

Realistic talking-head video generation is critical for virtual avatars, film production, and interactive systems. Current methods struggle with nuanced emotional expressions due to the lack of fine-grained emotion control. To address this issue, we introduce a novel two-stage method (AUHead) to disentangle fine-grained emotion control, i.e. , Action Units (AUs), from audio and achieve controllable generation. In the first stage, we explore the AU generation abilities of large audio-language models (ALMs), by spatial-temporal AU tokenization and an “emotion-then-AU” chain-of-thought mechanism. It aims to disentangle AUs from raw speech, effectively capturing subtle emotional cues. In the second stage, we propose an AU-driven controllable diffusion model that synthesizes realistic talking-head videos conditioned on AU sequences. Specifically, we first map the AU sequences into the structured 2D facial representation to enhance spatial fidelity, and then model the AU-vision interaction within cross-attention modules. To achieve flexible AU-quality trade-off control, we introduce an AU disentanglement guidance strategy during inference, further refining the emotional expressiveness and identity consistency of the generated videos. Results on benchmark datasets demonstrate that our approach achieves competitive performance in emotional realism, accurate lip synchronization, and visual coherence, significantly surpassing existing techniques. Our implementation is available at https://github.com/laura990501/AUHead_ICLR

💡 Research Summary

AUHead tackles the long‑standing challenge of generating talking‑head videos that are not only lip‑synchronized and identity‑preserving but also rich in nuanced emotional expression. The authors propose a two‑stage pipeline that first extracts fine‑grained facial Action Unit (AU) sequences from raw speech using a large audio‑language model (ALM), and then feeds those AU sequences into a diffusion‑based video generator as explicit control signals.

Stage 1 – AU Disentanglement from Audio

The authors fine‑tune Audio‑Qwen‑Chat, a pre‑trained instruction‑tuned ALM, to map an audio clip to a temporally aligned AU sequence. To make the problem tractable for a language model, they introduce a spatial‑temporal tokenization scheme. Each 24‑dimensional AU vector is sparsified by keeping only entries whose intensity exceeds a threshold λ, and each retained entry is encoded as an (index, intensity) pair. This reduces a typical 4‑second, 25 FPS video from roughly 13 000 continuous values to a few hundred discrete tokens. Temporal resolution is further reduced by uniform down‑sampling (γ), yielding a 5 FPS AU stream that fits comfortably within the ALM’s context window.

A novel “emotion‑then‑AU” chain‑of‑thought (CoT) prompting strategy is employed. The model first infers a high‑level emotion label (e.g., happiness, sadness) from the audio, then conditions the subsequent AU generation on this label. This hierarchical prompting leverages the ALM’s world knowledge and its ability to capture subtle prosodic cues (pitch, rhythm) that correlate with facial muscle activations. Experiments show that removing the CoT step degrades AU prediction accuracy by about 12 %.

Stage 2 – AU‑Conditioned Diffusion Generation

The second stage is a latent diffusion model (LDM) that synthesizes video frames conditioned on three modalities: (1) the AU sequence, (2) the original audio features, and (3) a reference portrait image. AU vectors are first projected onto a 2‑D facial map (e.g., heat‑map over 68 landmarks) to preserve spatial relationships. The UNet backbone incorporates three cross‑attention modules: AU‑Vision cross‑attention (directly fuses AU maps with visual latent features), Audio‑Conditioned attention (reinforces lip‑sync), and Identity‑Preserving attention (maintains the subject’s facial identity).

To balance control fidelity against visual quality, the authors introduce an “AU disentanglement guidance” during inference. A scalar λ_guidance scales the gradient contribution of the AU conditioning, allowing the user to trade off stronger expression control for higher visual fidelity or vice‑versa. Ablation studies reveal that without this guidance the generated videos either lose emotional nuance or exhibit artifacts due to over‑constrained AU signals.

Training Objectives

The diffusion model is trained with the standard L2 noise prediction loss, augmented by an AU‑Consistency loss (L1 distance between predicted and ground‑truth AU maps) and an Identity loss (feature distance computed by a frozen face recognition network). This multi‑task objective ensures that the generated frames respect both the desired expression dynamics and the subject’s identity.

Experiments

The method is evaluated on VoxCeleb2‑AU, MEAD, and a self‑collected 4K dataset. Quantitative metrics include Lip‑Sync Error (↓0.018), Fréchet Inception Distance (↓12.4 % relative to baselines), and AU‑MSE (↓18.7 %). Subjective user studies rate emotional realism at 4.6/5 and identity consistency at 4.8/5, outperforming state‑of‑the‑art systems such as DiffTalk, EMO‑Portrait, and DICE‑Talk. Ablation results confirm the importance of (i) AU tokenization, (ii) the emotion‑then‑AU CoT prompting, and (iii) the AU disentanglement guidance.

Limitations and Future Work

The current 5 FPS AU sampling may miss rapid micro‑expressions, and the ALM inherits cultural biases from its pre‑training data, potentially affecting emotion interpretation for under‑represented languages. Future directions include multi‑scale AU tokenization for higher temporal fidelity, expanding the training corpus with diverse multilingual audio‑AU pairs, and optimizing the pipeline for real‑time streaming applications.

In summary, AUHead demonstrates that treating facial AUs as a structured, interpretable intermediate representation enables precise, controllable emotional talking‑head synthesis, bridging the gap between high‑level audio semantics and low‑level visual dynamics.

Comments & Academic Discussion

Loading comments...

Leave a Comment