SchröMind: Mitigating Hallucinations in Multimodal Large Language Models via Solving the Schrödinger Bridge Problem

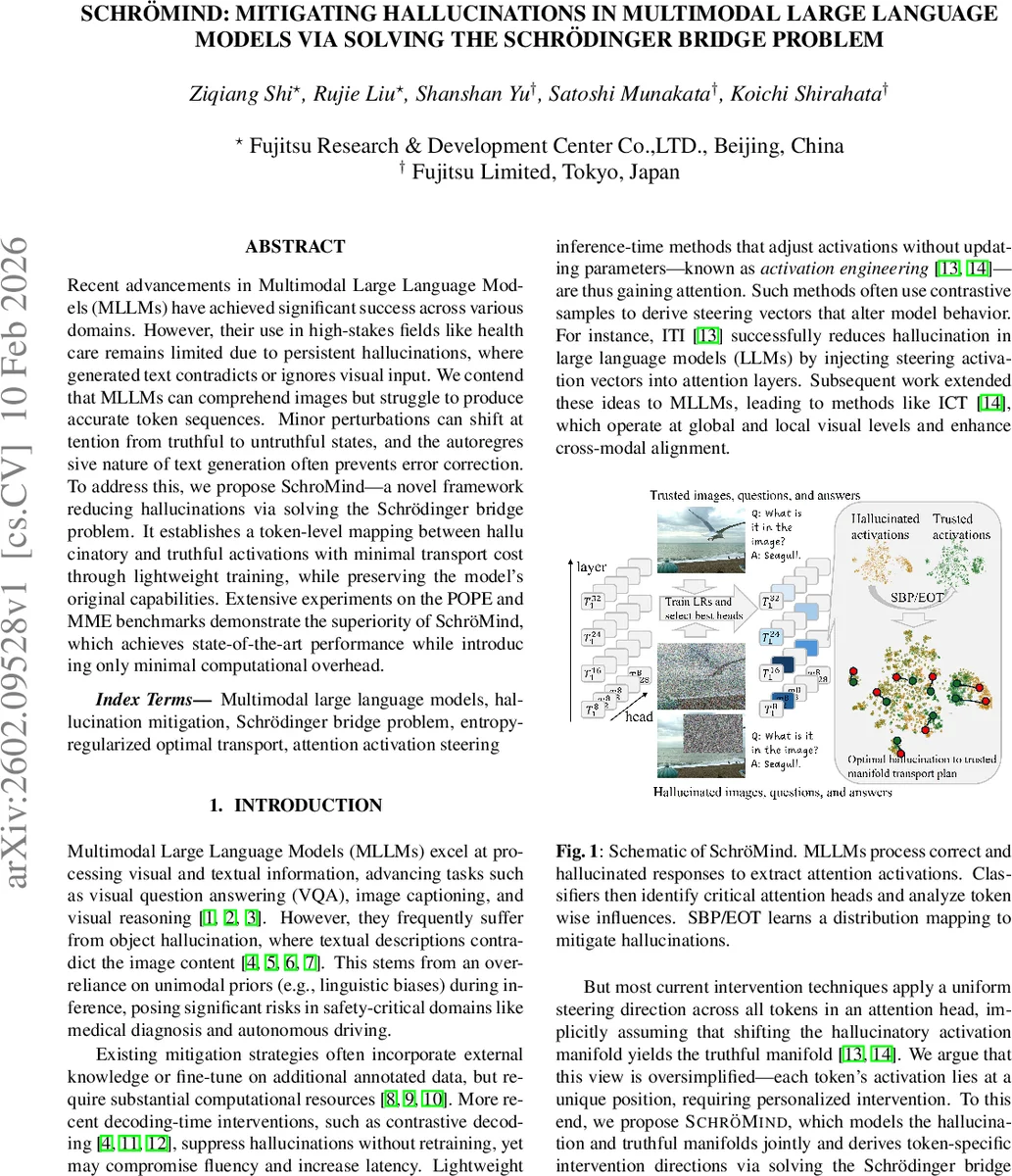

Recent advancements in Multimodal Large Language Models (MLLMs) have achieved significant success across various domains. However, their use in high-stakes fields like healthcare remains limited due to persistent hallucinations, where generated text contradicts or ignores visual input. We contend that MLLMs can comprehend images but struggle to produce accurate token sequences. Minor perturbations can shift attention from truthful to untruthful states, and the autoregressive nature of text generation often prevents error correction. To address this, we propose SchröMind, a novel framework that reduces hallucinations by solving the Schrödinger bridge problem. It establishes a token-level mapping between hallucinatory and truthful activations with minimal transport cost through lightweight training, while preserving the model's original capabilities. Extensive experiments on the POPE and MME benchmarks demonstrate the superiority of SchröMind, which achieves state-of-the-art performance while introducing only minimal computational overhead.

💡 Research Summary

SchröMind tackles the persistent hallucination problem in multimodal large language models (MLLMs), where the generated text contradicts or ignores visual inputs. Prior work has focused on fine‑tuning, external knowledge injection, or decoding‑time contrastive methods, but these approaches either demand heavy computation or degrade fluency and increase latency. Recent activation‑engineering techniques inject steering vectors into attention heads without changing model parameters, but they typically apply a single uniform direction to all tokens, ignoring token‑specific activation differences.

The authors propose a principled solution: model hallucinated and factual attention activations as two high‑dimensional probability distributions, \(P_{\text{hallu}}\) and \(P_{\text{fact}}\). They formulate the correction as an optimal transport (OT) problem with an entropy regularizer, yielding the Schrödinger Bridge Problem (SBP).
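To make the entropy-regularized OT idea concrete, here is a minimal sketch, not the authors' implementation. It uses the standard Sinkhorn iteration, which solves the static entropic OT problem that is commonly identified with the static Schrödinger bridge. Everything specific here is an assumption for illustration: the synthetic Gaussian "activations", the squared Euclidean cost, the regularization strength `eps`, and the function names `sinkhorn_plan` and `barycentric_map`. The text above does not specify the paper's cost function, solver, or training procedure.

```python
import numpy as np


def sinkhorn_plan(x, y, eps=0.5, n_iters=200):
    """Entropy-regularized OT plan between two point clouds with uniform weights.

    x: (n, d) source samples (stand-in for hallucinated activations)
    y: (m, d) target samples (stand-in for factual activations)
    eps: entropy regularization strength (assumed value)
    """
    n, m = x.shape[0], y.shape[0]
    a = np.full(n, 1.0 / n)  # uniform source marginal
    b = np.full(m, 1.0 / m)  # uniform target marginal

    # Pairwise squared Euclidean cost, scaled to [0, 1] for numerical stability.
    cost = ((x[:, None, :] - y[None, :, :]) ** 2).sum(axis=-1)
    cost = cost / cost.max()

    kernel = np.exp(-cost / eps)  # Gibbs kernel
    u = np.ones(n)
    v = np.ones(m)
    for _ in range(n_iters):
        u = a / (kernel @ v)    # rescale rows to match the source marginal
        v = b / (kernel.T @ u)  # rescale columns to match the target marginal
    return u[:, None] * kernel * v[None, :]


def barycentric_map(plan, y):
    """Map each source point to the plan-weighted average of target points."""
    return (plan @ y) / plan.sum(axis=1, keepdims=True)


# Synthetic stand-ins for per-token activations (hypothetical data).
rng = np.random.default_rng(0)
hallu = rng.normal(loc=0.0, scale=1.0, size=(64, 8))
fact = rng.normal(loc=2.0, scale=1.0, size=(64, 8))

plan = sinkhorn_plan(hallu, fact)
steered = barycentric_map(plan, fact)  # one corrected vector per source token
```

Unlike a single uniform steering vector, the barycentric projection gives each source sample its own displacement `steered[i] - hallu[i]`, which is the sense in which a transport-based correction can be token-specific.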
