Robust Depth Super-Resolution via Adaptive Diffusion Sampling

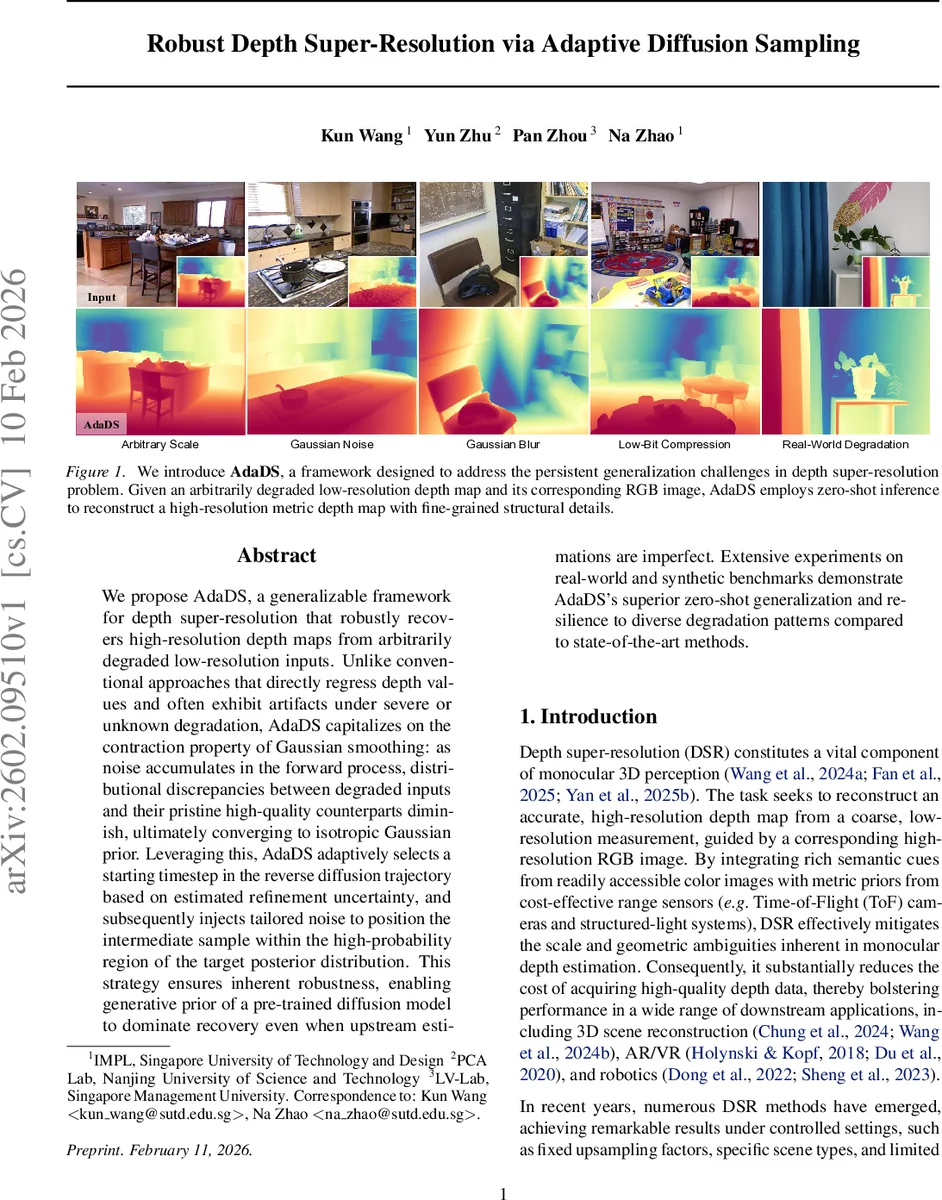

We propose AdaDS, a generalizable framework for depth super-resolution that robustly recovers high-resolution depth maps from arbitrarily degraded low-resolution inputs. Unlike conventional approaches that directly regress depth values and often exhibit artifacts under severe or unknown degradation, AdaDS capitalizes on the contraction property of Gaussian smoothing: as noise accumulates in the forward process, distributional discrepancies between degraded inputs and their pristine high-quality counterparts diminish, ultimately converging to isotropic Gaussian prior. Leveraging this, AdaDS adaptively selects a starting timestep in the reverse diffusion trajectory based on estimated refinement uncertainty, and subsequently injects tailored noise to position the intermediate sample within the high-probability region of the target posterior distribution. This strategy ensures inherent robustness, enabling generative prior of a pre-trained diffusion model to dominate recovery even when upstream estimations are imperfect. Extensive experiments on real-world and synthetic benchmarks demonstrate AdaDS’s superior zero-shot generalization and resilience to diverse degradation patterns compared to state-of-the-art methods.

💡 Research Summary

**

AdaDS (Adaptive Diffusion Sampling) tackles the long‑standing robustness problem in depth super‑resolution (DSR) by leveraging the intrinsic contraction property of Gaussian smoothing in diffusion models. Conventional DSR methods directly regress high‑resolution depth from low‑resolution inputs and typically fail when faced with severe or unknown degradations such as sensor noise, compression artifacts, or irregular down‑sampling. AdaDS instead treats a pre‑trained depth diffusion model (Marigold‑LCM) as a powerful prior over high‑quality depth distributions and carefully positions the low‑resolution input within this prior’s high‑probability region before denoising.

The framework consists of two stages.

-

Calibration Stage – A dual‑branch network processes the low‑resolution depth map together with its aligned RGB image. The image branch (a Vision‑Transformer with a DPT head) extracts global semantic cues, while the depth branch (a UNet‑like encoder) extracts multi‑scale depth features. Their fusion yields a refined latent depth representation (\hat{z}_0) and a per‑pixel uncertainty map (\hat{\sigma}_0). Training uses a Negative Log‑Likelihood loss to enforce calibrated uncertainty and an (\ell_1) loss on the decoded depth to compensate for VAE reconstruction errors. The uncertainty map is later used to decide how much noise to add in the diffusion process.

-

Sampling Stage – Starting from (\hat{z}_0) and (\hat{\sigma}_0), an approximate forward diffusion distribution (\hat{p}_t = \mathcal{N}(\sqrt{\bar\alpha_t}\hat{z}_0, \bar\alpha_t\hat{\sigma}_0^2 + (1-\bar\alpha_t)I)) is defined. The authors prove (Proposition 4.1) that the function

\

Comments & Academic Discussion

Loading comments...

Leave a Comment