Where-to-Unmask: Ground-Truth-Guided Unmasking Order Learning for Masked Diffusion Language Models

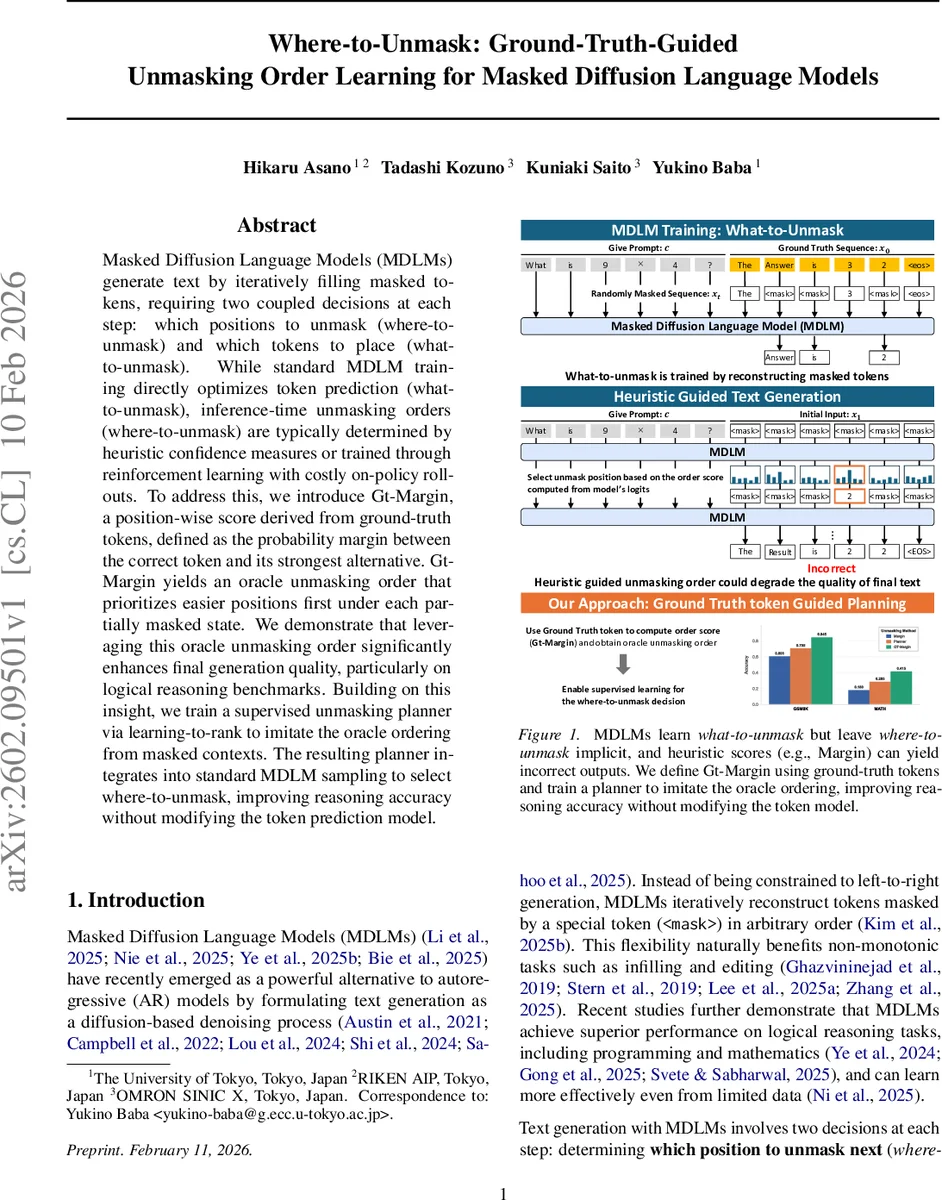

Masked Diffusion Language Models (MDLMs) generate text by iteratively filling masked tokens, requiring two coupled decisions at each step: which positions to unmask (where-to-unmask) and which tokens to place (what-to-unmask). While standard MDLM training directly optimizes token prediction (what-to-unmask), inference-time unmasking orders (where-to-unmask) are typically determined by heuristic confidence measures or trained through reinforcement learning with costly on-policy rollouts. To address this, we introduce Gt-Margin, a position-wise score derived from ground-truth tokens, defined as the probability margin between the correct token and its strongest alternative. Gt-Margin yields an oracle unmasking order that prioritizes easier positions first under each partially masked state. We demonstrate that leveraging this oracle unmasking order significantly enhances final generation quality, particularly on logical reasoning benchmarks. Building on this insight, we train a supervised unmasking planner via learning-to-rank to imitate the oracle ordering from masked contexts. The resulting planner integrates into standard MDLM sampling to select where-to-unmask, improving reasoning accuracy without modifying the token prediction model.

💡 Research Summary

This paper tackles a largely overlooked component of Masked Diffusion Language Models (MDLMs): the decision of which masked position to unmask at each diffusion step, commonly referred to as the “where‑to‑unmask” problem. While MDLMs already excel at the complementary “what‑to‑unmask” task—predicting the token to fill a chosen mask—most existing work treats the unmasking order as an implicit by‑product of training, relying on heuristic confidence measures (e.g., top‑probability or margin) or on costly reinforcement‑learning (RL) procedures that require on‑policy rollouts. The authors argue that the unmasking order can dramatically affect final generation quality, especially on tasks that demand long‑range reasoning such as mathematics, Sudoku solving, and strategic question answering.

To expose the potential of an optimal unmasking schedule, the authors introduce Gt‑Margin, a ground‑truth‑guided metric that quantifies, for each currently masked position, how decisively the model prefers the true token over its strongest competitor. Formally, for a masked state (x_t) and ground‑truth token (x_i^0) at position (i),

\

Comments & Academic Discussion

Loading comments...

Leave a Comment