LLM-Grounded Dynamic Task Planning with Hierarchical Temporal Logic for Human-Aware Multi-Robot Collaboration

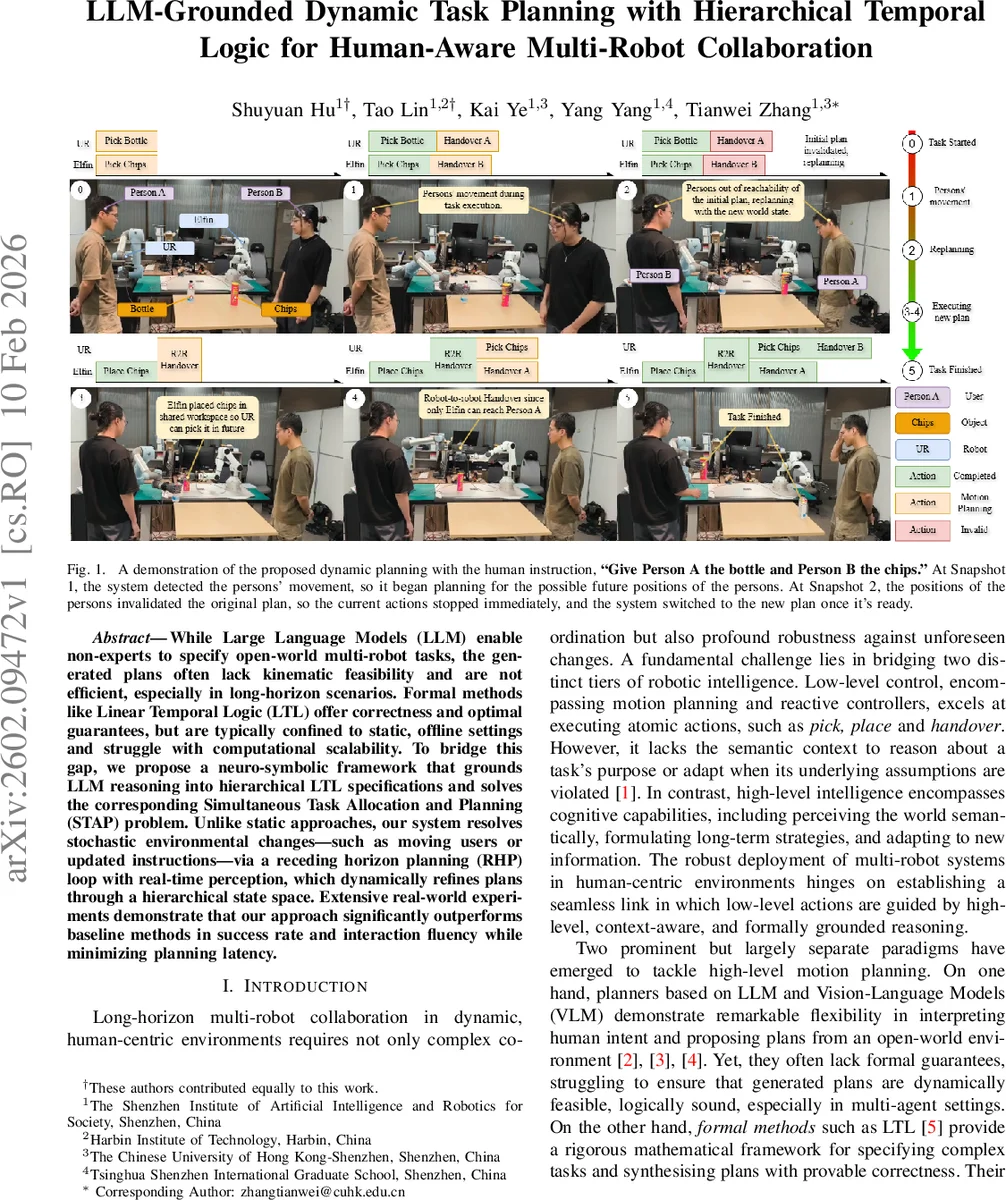

While Large Language Models (LLMs) enable non-experts to specify open-world multi-robot tasks, the plans they generate are often kinematically infeasible and inefficient, especially in long-horizon scenarios. Formal methods such as Linear Temporal Logic (LTL) offer correctness and optimality guarantees, but they are typically confined to static, offline settings and struggle with computational scalability. To bridge this gap, we propose a neuro-symbolic framework that grounds LLM reasoning in hierarchical LTL specifications and solves the corresponding Simultaneous Task Allocation and Planning (STAP) problem. Unlike static approaches, our system handles stochastic environmental changes, such as moving users or updated instructions, through a receding horizon planning (RHP) loop with real-time perception that dynamically refines plans over a hierarchical state space. Extensive real-world experiments demonstrate that our approach significantly outperforms baseline methods in success rate and interaction fluency while minimizing planning latency.
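The receding-horizon idea can be illustrated with a small toy. In the Python sketch below, the planner computes a fresh plan from the current state at every cycle but commits only to that plan's first step, so a moving target, standing in for a moving user, is tracked without relying on a fixed precomputed route. This is a minimal single-robot sketch under assumptions that do not come from the paper: a 4-connected grid, breadth-first search in place of the paper's A* over the hierarchical team model, and the hypothetical names `bfs_path`, `receding_horizon`, and `observe_goal`.

```python
from collections import deque

# Hypothetical single-robot toy: a 4-connected grid and a goal that may move
# between planning cycles. BFS stands in for the paper's A* over the
# hierarchical team model.


def bfs_path(start, goal, width, height, blocked):
    """Return a shortest grid path from start to goal (both inclusive), or None."""
    frontier = deque([start])
    parent = {start: None}
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if inside and nxt not in blocked and nxt not in parent:
                parent[nxt] = cell
                frontier.append(nxt)
    return None


def receding_horizon(start, observe_goal, width, height, blocked, max_steps=50):
    """Replan from the current state every cycle, executing only the first step."""
    pos = start
    trace = [pos]
    for t in range(max_steps):
        goal = observe_goal(t)  # "perception": the goal (e.g. a user) may have moved
        if pos == goal:
            return trace
        path = bfs_path(pos, goal, width, height, blocked)
        if path is None:
            raise RuntimeError(f"no feasible plan from {pos} to {goal}")
        pos = path[1]  # commit to the first action only, then replan next cycle
        trace.append(pos)
    return trace


if __name__ == "__main__":
    def walking_user(t):
        # Hypothetical user walking from (4, 0) to (4, 4), one cell per cycle.
        return (4, min(t, 4))

    print(receding_horizon((0, 0), walking_user, 5, 5, blocked={(2, 1)}))
```

Committing to a single step before replanning is the simplest horizon choice. The summary below instead describes replanning that is triggered when a sub‑task finishes or when the safety shield fires, which generally means fewer planner calls between events.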

💡 Research Summary

The paper introduces a neuro‑symbolic framework that integrates large language models (LLMs) with hierarchical co‑safe linear temporal logic (H‑LTLᶠ) to enable real‑time, long‑horizon multi‑robot collaboration in dynamic, human‑centric environments. The system consists of three tightly coupled modules. First, an open‑vocabulary 3‑D perception pipeline builds a semantic scene representation from RGB‑D data using Recognize Anything, Grounding DINO, SAM 2, and CLIP, while a lightweight recurrent network predicts short‑term human trajectories.

Second, the LLM parses natural‑language instructions into a hierarchy of LTL specifications. Each leaf specification is compiled into a nondeterministic finite automaton (NFA) and combined with each robot’s transition system to form product team models. Two kinds of intra‑spec transitions (sequential ζ₁ᵢₙ and simultaneous ζ₂ᵢₙ) and two kinds of inter‑spec transitions (task‑switching ζ₁ᵢₙₜₑᵣ and progression ζ₂ᵢₙₜₑᵣ) capture the full range of coordination patterns, from independent subtasks to synchronized handovers.

Third, a receding‑horizon planning (RHP) loop continuously re‑optimizes the joint plan using A* search over the hierarchical team model. Whenever a sub‑task finishes, the current world state (including updated human trajectory predictions) triggers a replanning step, so that robot allocations adapt to actual execution times. A safety shield monitors kinematic feasibility and intrusion risk. When a violation is detected, the robots halt immediately, a new specification is generated, and the plan is rapidly recomputed. The framework also maintains parallel “predictive” plans for anticipated human departures and switches to the pre‑computed strategy as soon as the prediction materializes, which removes replanning latency from the critical path.
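The product-model step can be illustrated with a minimal single-robot sketch. In it, a weighted transition system is composed on the fly with an NFA for a co-safe task ("eventually reach the shelf, then eventually the user, never entering the hazard zone"), and A* searches the product for a cheapest accepted run. The sketch deliberately omits the hierarchy, the multi-robot team model, and the ζ transitions described above. The guard encoding, the heuristic (remaining NFA transitions × cheapest edge cost), the map, and all function names are illustrative assumptions, not details taken from the paper.

```python
import heapq
import itertools
from collections import deque

# Hypothetical single-robot sketch (no hierarchy, no team model).
# An NFA edge is (q, (need, forbid), q_next): it fires on a label set L
# when need <= L and forbid & L is empty.


def automaton_distance(nfa_edges, accepting):
    """Fewest NFA transitions from each state to an accepting one (guards ignored)."""
    preds = {}
    for q, _guard, q_next in nfa_edges:
        preds.setdefault(q_next, set()).add(q)
    dist = {q: 0 for q in accepting}
    queue = deque(accepting)
    while queue:
        q_next = queue.popleft()
        for q in preds.get(q_next, ()):
            if q not in dist:
                dist[q] = dist[q_next] + 1
                queue.append(q)
    return dist


def nfa_step(nfa_edges, q, label):
    """All NFA successors of state q after reading the proposition set `label`."""
    return [q_next for q_from, (need, forbid), q_next in nfa_edges
            if q_from == q and need <= label and not (forbid & label)]


def plan_product(ts_edges, labels, start, nfa_edges, q0, accepting):
    """A* over the product of a weighted transition system and an NFA.

    Returns (cost, locations) for a cheapest run whose label sequence the NFA
    accepts, or None. Assumes strictly positive edge costs.
    """
    min_cost = min(c for succ in ts_edges.values() for _, c in succ)
    dist = automaton_distance(nfa_edges, accepting)

    def h(q):
        # Each product move takes one NFA edge and costs >= min_cost, so this
        # lower bound on the remaining cost is admissible and consistent.
        return dist[q] * min_cost

    tie = itertools.count()
    frontier = []
    for q in nfa_step(nfa_edges, q0, labels.get(start, frozenset())):
        if q in dist:  # prune NFA states that can never reach acceptance
            heapq.heappush(frontier, (h(q), 0, next(tie), (start, q), [start]))
    closed = set()
    while frontier:
        _f, g, _tie, (s, q), path = heapq.heappop(frontier)
        if q in accepting:
            return g, path
        if (s, q) in closed:
            continue
        closed.add((s, q))
        for s_next, cost in ts_edges.get(s, ()):
            for q_next in nfa_step(nfa_edges, q, labels.get(s_next, frozenset())):
                if q_next in dist and (s_next, q_next) not in closed:
                    g_next = g + cost
                    heapq.heappush(frontier, (g_next + h(q_next), g_next, next(tie),
                                              (s_next, q_next), path + [s_next]))
    return None


def undirected(*edges):
    """Build an adjacency dict {location: [(neighbor, cost), ...]}."""
    graph = {}
    for a, b, cost in edges:
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))
    return graph


# Hypothetical map: a shortcut to the user passes through a hazard zone.
TS_EDGES = undirected(("dock", "hall", 1), ("hall", "shelf", 2), ("hall", "hazard", 1),
                      ("hazard", "user", 1), ("shelf", "user", 5))
LABELS = {loc: frozenset({loc}) for loc in ("shelf", "user", "hazard")}


def fetch_and_deliver(avoid):
    """NFA for 'eventually shelf, then eventually user', never visiting `avoid`."""
    none, bad = frozenset(), frozenset(avoid)
    return [("q0", (none, bad), "q0"),
            ("q0", (frozenset({"shelf"}), bad), "q1"),
            ("q1", (none, bad), "q1"),
            ("q1", (frozenset({"user"}), bad), "q2")]


if __name__ == "__main__":
    for avoid in ({"hazard"}, set()):
        print(avoid, plan_product(TS_EDGES, LABELS, "dock",
                                  fetch_and_deliver(avoid), "q0", {"q2"}))
```

With `"hazard"` in the avoided set, the cheapest accepted run is dock → hall → shelf → user at cost 8. Dropping the constraint lets the search route back through the hazard zone at cost 7. This shows how a specification guard, rather than the cost map, rules out the unsafe plan.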
Real‑world experiments with three robots (two manipulators and a mobile base) and two humans performing a combined delivery and hand‑over task demonstrate a 92% success rate, an average planning latency of 0.35 s, and a 30% reduction in robot idle time, outperforming both pure LLM planners and static LTL planners. The results indicate that grounding LLM reasoning in hierarchical temporal logic, combined with receding‑horizon replanning, provides both the semantic flexibility of foundation models and the formal correctness, scalability, and robustness required for dynamic human‑aware multi‑robot collaboration.
