Fine-T2I: An Open, Large-Scale, and Diverse Dataset for High-Quality T2I Fine-Tuning

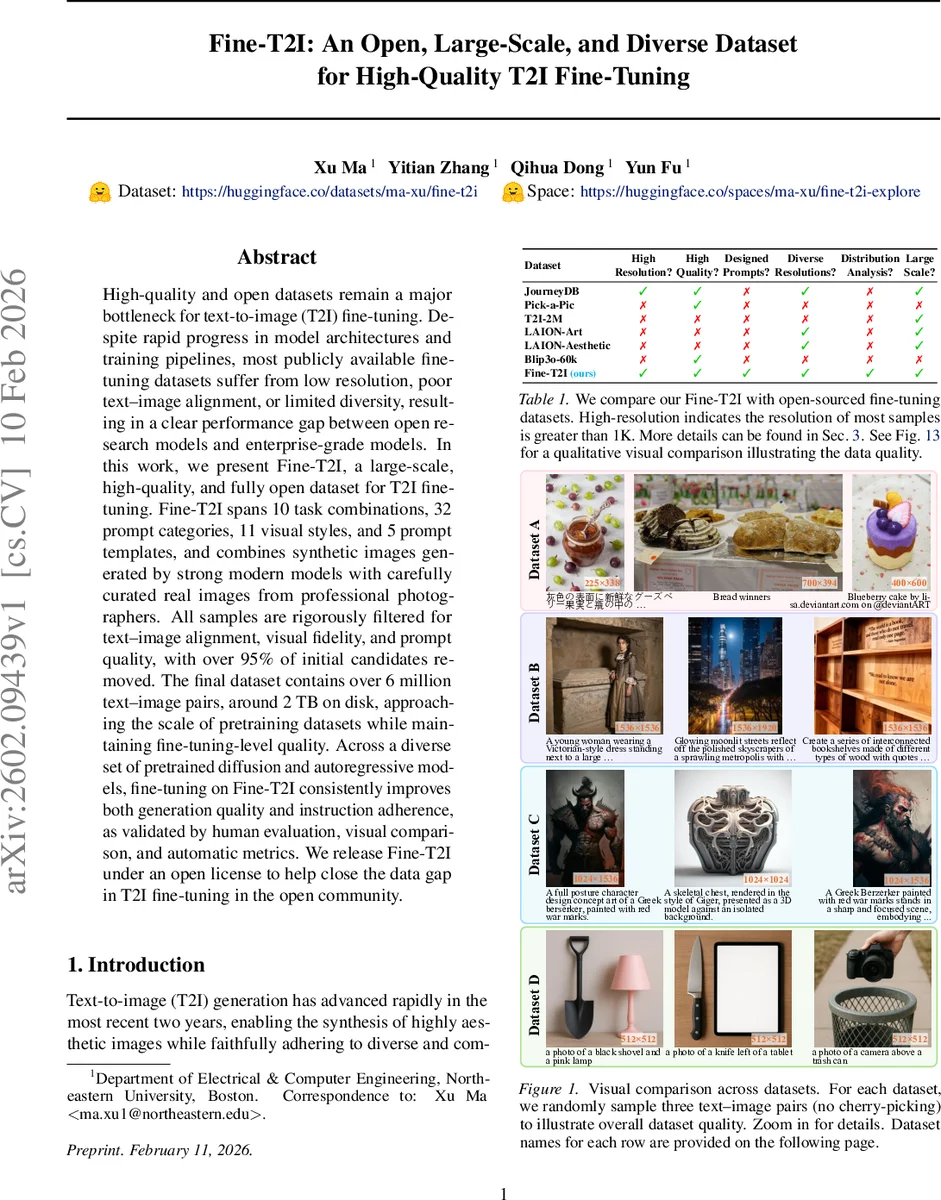

High-quality and open datasets remain a major bottleneck for text-to-image (T2I) fine-tuning. Despite rapid progress in model architectures and training pipelines, most publicly available fine-tuning datasets suffer from low resolution, poor text-image alignment, or limited diversity, resulting in a clear performance gap between open research models and enterprise-grade models. In this work, we present Fine-T2I, a large-scale, high-quality, and fully open dataset for T2I fine-tuning. Fine-T2I spans 10 task combinations, 32 prompt categories, 11 visual styles, and 5 prompt templates, and combines synthetic images generated by strong modern models with carefully curated real images from professional photographers. All samples are rigorously filtered for text-image alignment, visual fidelity, and prompt quality, with over 95% of initial candidates removed. The final dataset contains over 6 million text-image pairs, around 2 TB on disk, approaching the scale of pretraining datasets while maintaining fine-tuning-level quality. Across a diverse set of pretrained diffusion and autoregressive models, fine-tuning on Fine-T2I consistently improves both generation quality and instruction adherence, as validated by human evaluation, visual comparison, and automatic metrics. We release Fine-T2I under an open license to help close the data gap in T2I fine-tuning in the open community.

💡 Research Summary

The paper “Fine-T2I: An Open, Large-Scale, and Diverse Dataset for High-Quality T2I Fine-Tuning” addresses a critical bottleneck in open-source text-to-image (T2I) research: the lack of large-scale, high-quality, and legally accessible datasets for fine-tuning generative models. While model architectures and training techniques are widely shared, proprietary, high-quality fine-tuning data held by large companies creates a significant performance gap between open research models and state-of-the-art enterprise models.

To bridge this gap, the authors introduce Fine-T2I, a massive open dataset designed specifically for high-quality T2I fine-tuning. The dataset is characterized by its exceptional scale and curated quality. It contains over 6 million text-image pairs, occupying approximately 2 terabytes of storage, which rivals the scale of pre-training datasets while maintaining the stringent quality standards required for effective fine-tuning.

The construction of Fine-T2I is a hybrid and meticulous process. It combines two primary sources: 1) Synthetic Images: Generated from scratch using powerful modern T2I models (like SD3 and Flux). The prompts for these images are systematically engineered using LLMs to cover a wide spectrum of 10 task combinations, 32 prompt categories, 11 visual styles, and 5 template formats, ensuring broad diversity and controllability. These base prompts are further refined by a dedicated prompt enhancement model. 2) Curated Real Images: Sourced from professional photographers under appropriate licenses, paired with high-quality descriptive captions generated by a strong Vision-Language Model (VLM).

A key differentiator of Fine-T2I is its rigorous multi-stage filtering pipeline, which removes over 95% of the initially collected candidates. This pipeline includes: semantic de-duplication (using embedding cosine similarity to remove near-identical prompts beyond lexical overlap), content safety filtering, checks for text-image alignment (e.g., using CLIP scores), and assessments of visual fidelity and aesthetic quality. The dataset also supports diverse resolutions and aspect ratios, moving beyond the common limitation of fixed 512x512 images, to better reflect practical generation scenarios.

The authors comprehensively validate the effectiveness of Fine-T2I by fine-tuning a diverse set of pre-trained models, including both diffusion models (Flux, SD3) and autoregressive models. The evaluation employs human preference studies, qualitative visual comparisons, and quantitative automatic metrics (CLIP Score and Aesthetic Score). The results consistently show that models fine-tuned on Fine-T2I outperform those fine-tuned on other publicly available datasets. The improvements are evident in both the overall visual quality of the generated images and their adherence to the input text instructions.

In summary, Fine-T2I provides a much-needed resource for the open-source community. By releasing this large-scale, high-quality dataset along with detailed documentation of its construction pipeline, the work aims to democratize access to production-grade fine-tuning data, thereby accelerating progress in open T2I research and helping to close the performance gap with proprietary models.

Comments & Academic Discussion

Loading comments...

Leave a Comment