Breaking the Pre-Sampling Barrier: Activation-Informed Difficulty-Aware Self-Consistency

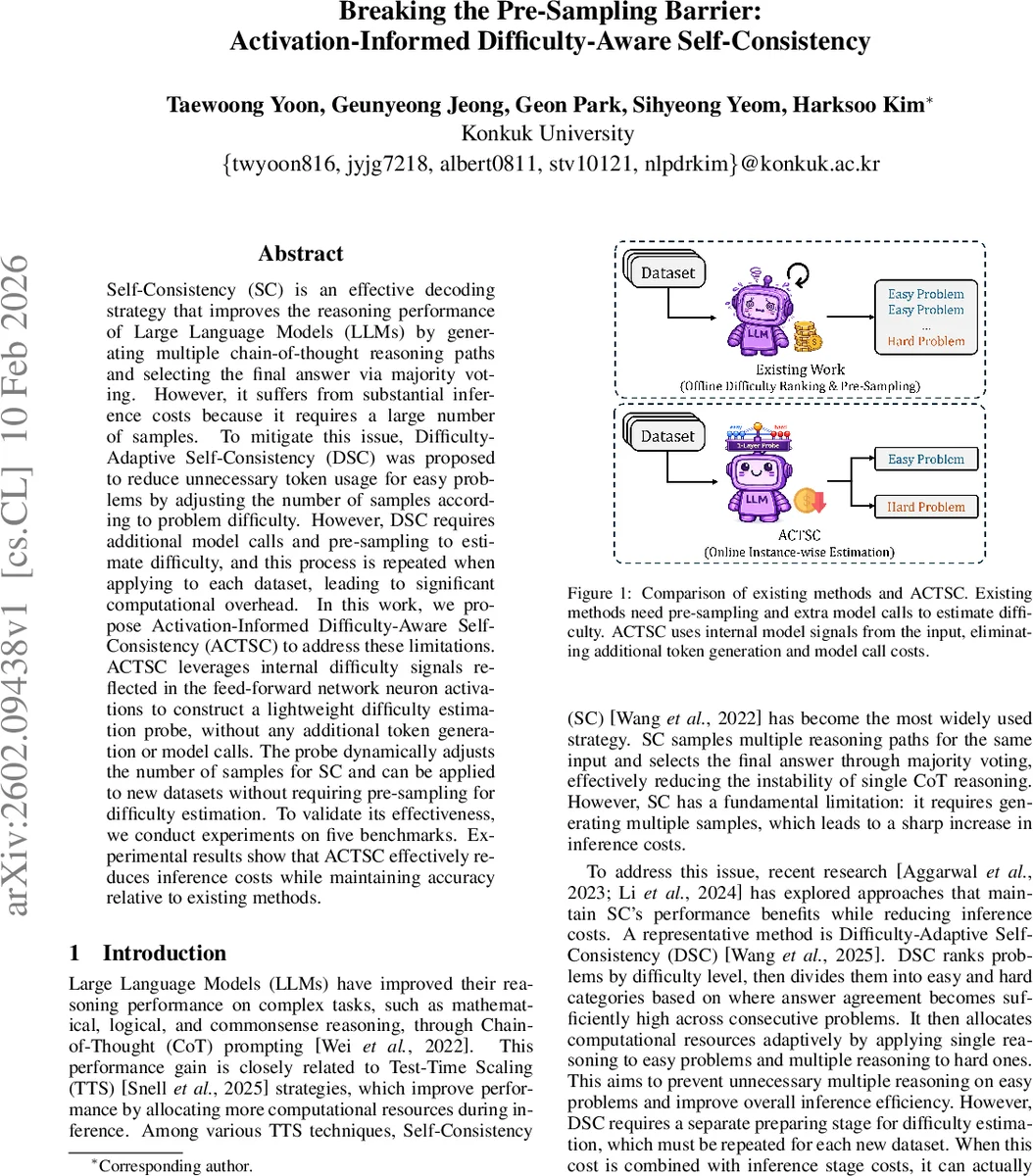

Self-Consistency (SC) is an effective decoding strategy that improves the reasoning performance of Large Language Models (LLMs) by generating multiple chain-of-thought reasoning paths and selecting the final answer via majority voting. However, it suffers from substantial inference costs because it requires a large number of samples. To mitigate this issue, Difficulty-Adaptive Self-Consistency (DSC) was proposed to reduce unnecessary token usage for easy problems by adjusting the number of samples according to problem difficulty. However, DSC requires additional model calls and pre-sampling to estimate difficulty, and this process is repeated when applying to each dataset, leading to significant computational overhead. In this work, we propose Activation-Informed Difficulty-Aware Self-Consistency (ACTSC) to address these limitations. ACTSC leverages internal difficulty signals reflected in the feed-forward network neuron activations to construct a lightweight difficulty estimation probe, without any additional token generation or model calls. The probe dynamically adjusts the number of samples for SC and can be applied to new datasets without requiring pre-sampling for difficulty estimation. To validate its effectiveness, we conduct experiments on five benchmarks. Experimental results show that ACTSC effectively reduces inference costs while maintaining accuracy relative to existing methods.

💡 Research Summary

Large language models (LLMs) achieve impressive reasoning abilities when prompted with Chain‑of‑Thought (CoT), but the performance gain is fragile: a single CoT answer can be wrong even if the model is otherwise competent. Self‑Consistency (SC) mitigates this fragility by generating multiple CoT paths for the same question and selecting the answer that appears most frequently. The downside of SC is the high inference cost: each additional sample requires a full forward‑and‑backward pass and token generation, which quickly becomes prohibitive for real‑world deployment.

Difficulty‑Adaptive Self‑Consistency (DSC) was introduced to reduce this cost by first estimating the difficulty of each problem, then allocating a small number of samples to easy questions and many samples to hard ones. DSC, however, needs a separate pre‑sampling stage for every new dataset: it repeatedly calls the LLM to rank problems, which adds a non‑trivial overhead and often erases the savings achieved during inference.

The paper “Activation‑Informed Difficulty‑Aware Self‑Consistency (ACTSC)” proposes a fundamentally different approach that eliminates the pre‑sampling step altogether. The authors observe that the internal feed‑forward network (FFN) activations of a frozen LLM already encode signals correlated with problem difficulty. By extracting a small subset of “difficulty‑sensitive neurons” (DSNs) and training a lightweight linear probe on them, the model can predict the probability that a given input belongs to the hard difficulty class using only a single forward pass—no extra tokens, no extra model calls.

Probe Construction

- A labeled difficulty dataset (e.g., MA‑TH) is used only once.

- For each neuron n in the FFN, the authors compute a “gap” function:

gap(n, θ) = E

Comments & Academic Discussion

Loading comments...

Leave a Comment