Sci-VLA: Agentic VLA Inference Plugin for Long-Horizon Tasks in Scientific Experiments

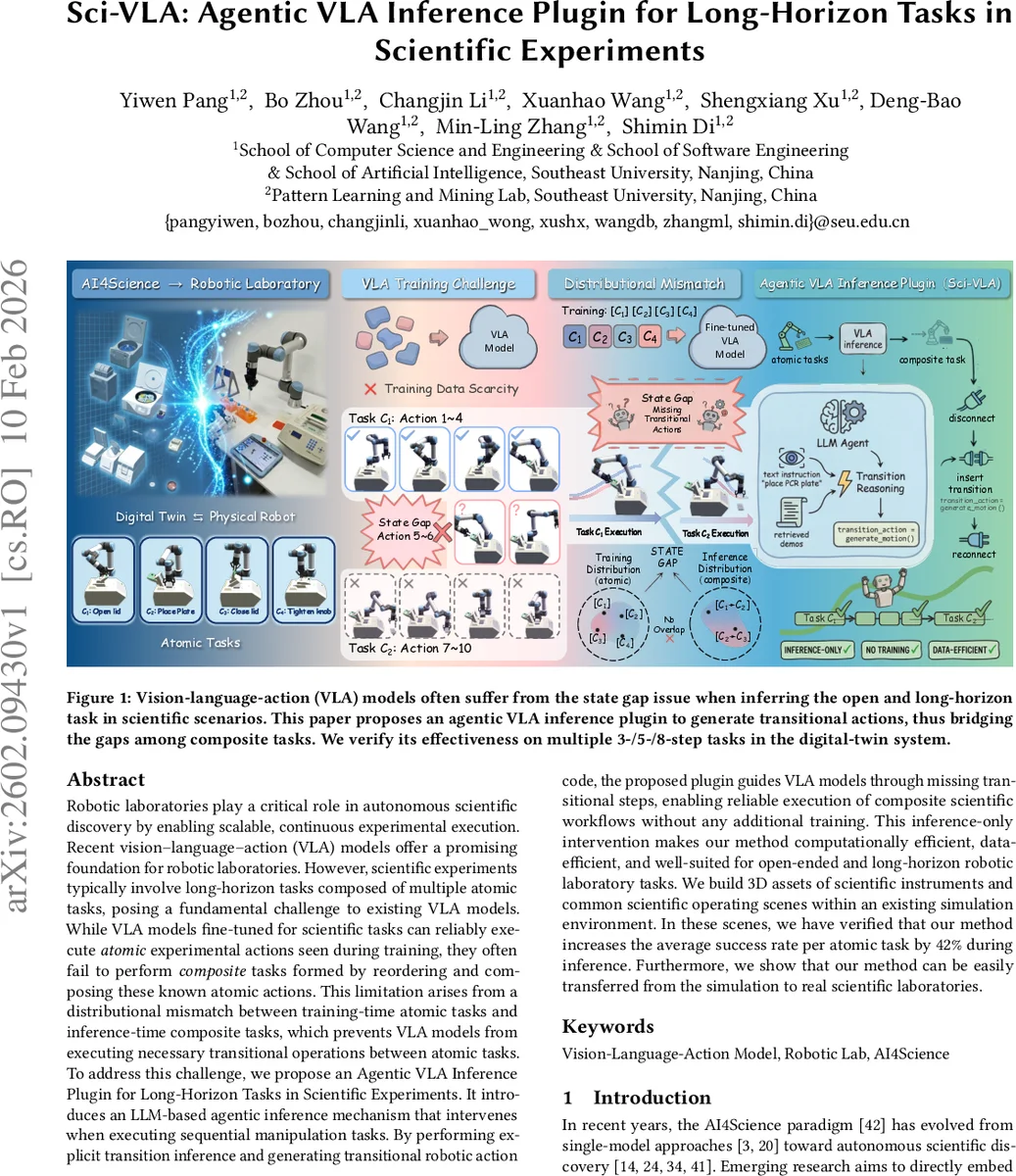

Robotic laboratories play a critical role in autonomous scientific discovery by enabling scalable, continuous experimental execution. Recent vision-language-action (VLA) models offer a promising foundation for robotic laboratories. However, scientific experiments typically involve long-horizon tasks composed of multiple atomic tasks, posing a fundamental challenge to existing VLA models. While VLA models fine-tuned for scientific tasks can reliably execute atomic experimental actions seen during training, they often fail to perform composite tasks formed by reordering and composing these known atomic actions. This limitation arises from a distributional mismatch between training-time atomic tasks and inference-time composite tasks, which prevents VLA models from executing necessary transitional operations between atomic tasks. To address this challenge, we propose an Agentic VLA Inference Plugin for Long-Horizon Tasks in Scientific Experiments. It introduces an LLM-based agentic inference mechanism that intervenes when executing sequential manipulation tasks. By performing explicit transition inference and generating transitional robotic action code, the proposed plugin guides VLA models through missing transitional steps, enabling reliable execution of composite scientific workflows without any additional training. This inference-only intervention makes our method computationally efficient, data-efficient, and well-suited for open-ended and long-horizon robotic laboratory tasks. We build 3D assets of scientific instruments and common scientific operating scenes within an existing simulation environment. In these scenes, we have verified that our method increases the average success rate per atomic task by 42% during inference. Furthermore, we show that our method can be easily transferred from the simulation to real scientific laboratories.

💡 Research Summary

The paper “Sci-VLA: Agentic VLA Inference Plugin for Long-Horizon Tasks in Scientific Experiments” addresses a critical challenge in deploying Vision-Language-Action (VLA) models within robotic laboratories for autonomous scientific discovery. While robotic labs promise scalable and continuous experimentation, and VLA models offer a flexible foundation by understanding natural language instructions and visual scenes, a fundamental mismatch arises when executing long-horizon scientific workflows. These workflows are composed of multiple atomic tasks (e.g., opening a lid, placing a plate, tightening a knob).

The core problem identified is a distributional mismatch between training and inference. VLA models are typically fine-tuned on data where each atomic task starts from an independent, randomized initial state. During inference, however, when these atomic tasks are chained into a novel composite sequence, the end state of one task often does not match the expected start state of the next task seen during training. This “state gap” leaves the robot stuck, unable to initiate the subsequent task because the necessary transitional actions (e.g., repositioning the gripper, moving to a new approach pose) were never present in the training data for that specific transition.

To solve this without costly additional data collection or model retraining, the authors propose Sci-VLA, an agentic inference plugin. Sci-VLA operates purely at inference time, requiring no modifications to the pre-trained/fine-tuned VLA model itself. The system works in an iterative cycle: 1) A fine-tuned VLA model executes an atomic task from a sequence. 2) Upon completion, the plugin interrupts the VLA’s inference. 3) The Transitional Action Generation Module takes the current state (robot joint positions, camera view) and the language instruction for the next atomic task. It uses an LLM agent (GPT-5.2) to generate Python code for transitional actions. To guide this, the module retrieves the target start state for the next task from the original training dataset via semantic search. The LLM is prompted with a safety-conscious code template to produce executable movement commands that bridge the current state to the target state. 4) The Transition Action Insertion Module then executes this generated code. 5) The VLA model resumes inference to execute the next atomic task from its correct starting point. This process repeats until the entire task sequence is complete.

The methodology is evaluated in a simulated environment where 3D assets of common scientific instruments (e.g., thermal cyclers, ozone cleaners) are created. Experiments involve multi-step composite tasks (3, 5, and 8 steps). The plugin is tested on top of several state-of-the-art VLA model backbones, including π0, π0.5, and π0_fast. The results demonstrate a significant performance boost. Sci-VLA increases the average success rate per atomic task by approximately 42% across different models compared to using the VLA models alone. More importantly, it drastically improves the coherence of execution between consecutive tasks, enabling the successful completion of long-horizon workflows that previously failed. The paper also notes the ease of transferring this inference-time plugin from simulation to real-world laboratory settings.

In summary, Sci-VLA presents a novel, efficient, and training-free solution to the long-horizon challenge in scientific robotics. By leveraging an LLM agent as a “plug-in” mediator during inference to dynamically generate and insert missing transitional actions, it effectively bridges the state gap between atomic tasks. This work enhances the practicality of VLA models for open-ended, complex scientific experimentation where task sequences are fluid and predefined programming is inflexible.

Comments & Academic Discussion

Loading comments...

Leave a Comment