PRISM: A Principled Framework for Multi-Agent Reasoning via Gain Decomposition

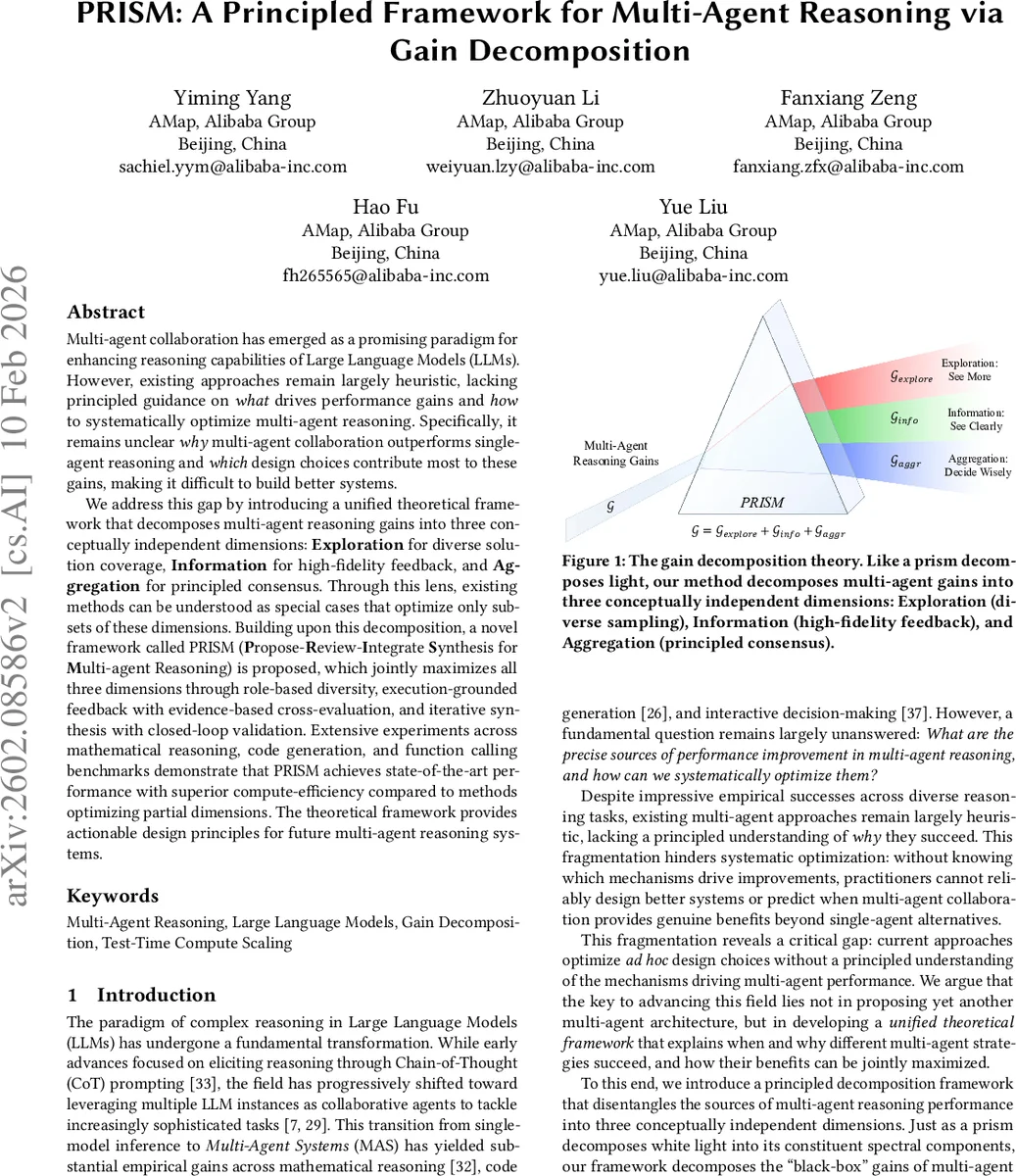

Multi-agent collaboration has emerged as a promising paradigm for enhancing reasoning capabilities of Large Language Models (LLMs). However, existing approaches remain largely heuristic, lacking principled guidance on what drives performance gains and how to systematically optimize multi-agent reasoning. Specifically, it remains unclear why multi-agent collaboration outperforms single-agent reasoning and which design choices contribute most to these gains, making it difficult to build better systems. We address this gap by introducing a unified theoretical framework that decomposes multi-agent reasoning gains into three conceptually independent dimensions: Exploration for diverse solution coverage, Information for high-fidelity feedback, and Aggregation for principled consensus. Through this lens, existing methods can be understood as special cases that optimize only subsets of these dimensions. Building upon this decomposition, a novel framework called PRISM (Propose-Review-Integrate Synthesis for Multi-agent Reasoning) is proposed, which jointly maximizes all three dimensions through role-based diversity, execution-grounded feedback with evidence-based cross-evaluation, and iterative synthesis with closed-loop validation. Extensive experiments across mathematical reasoning, code generation, and function calling benchmarks demonstrate that PRISM achieves state-of-the-art performance with superior compute-efficiency compared to methods optimizing partial dimensions. The theoretical framework provides actionable design principles for future multi-agent reasoning systems.

💡 Research Summary

The paper tackles the problem of understanding why multi‑agent collaboration improves the reasoning abilities of large language models (LLMs) and how to systematically design systems that fully exploit this advantage. The authors introduce a formal “gain decomposition” framework that splits the expected performance gain of a multi‑agent system into three orthogonal components: Exploration, Information, and Aggregation.

In the formalism, a multi‑agent reasoning task is defined as a tuple (X, T, Q, K, E, f) where X is the input space, T the solution space, Q a binary quality indicator, K the number of proposing agents, E an executor that provides feedback, and f an aggregation function that produces the final answer. Under mild assumptions (conditional independence, finite strategy space, deterministic execution, etc.), Theorem 3.1 shows that the expected quality of the multi‑agent output can be expressed as the product of a coverage term C_K (the probability that at least one agent proposes a correct solution) and a selection accuracy term η(f, s) (the probability that the aggregation function selects a correct answer given a feedback signal s). This multiplicative structure yields three additive gains:

- Exploration Gain (G_explore = C_K − p) – the improvement in coverage obtained by having multiple, diverse agents. It grows with the number of agents and the heterogeneity of their reasoning strategies.

- **Information Gain (G_info = C_K·

Comments & Academic Discussion

Loading comments...

Leave a Comment