Free(): Learning to Forget in Malloc-Only Reasoning Models

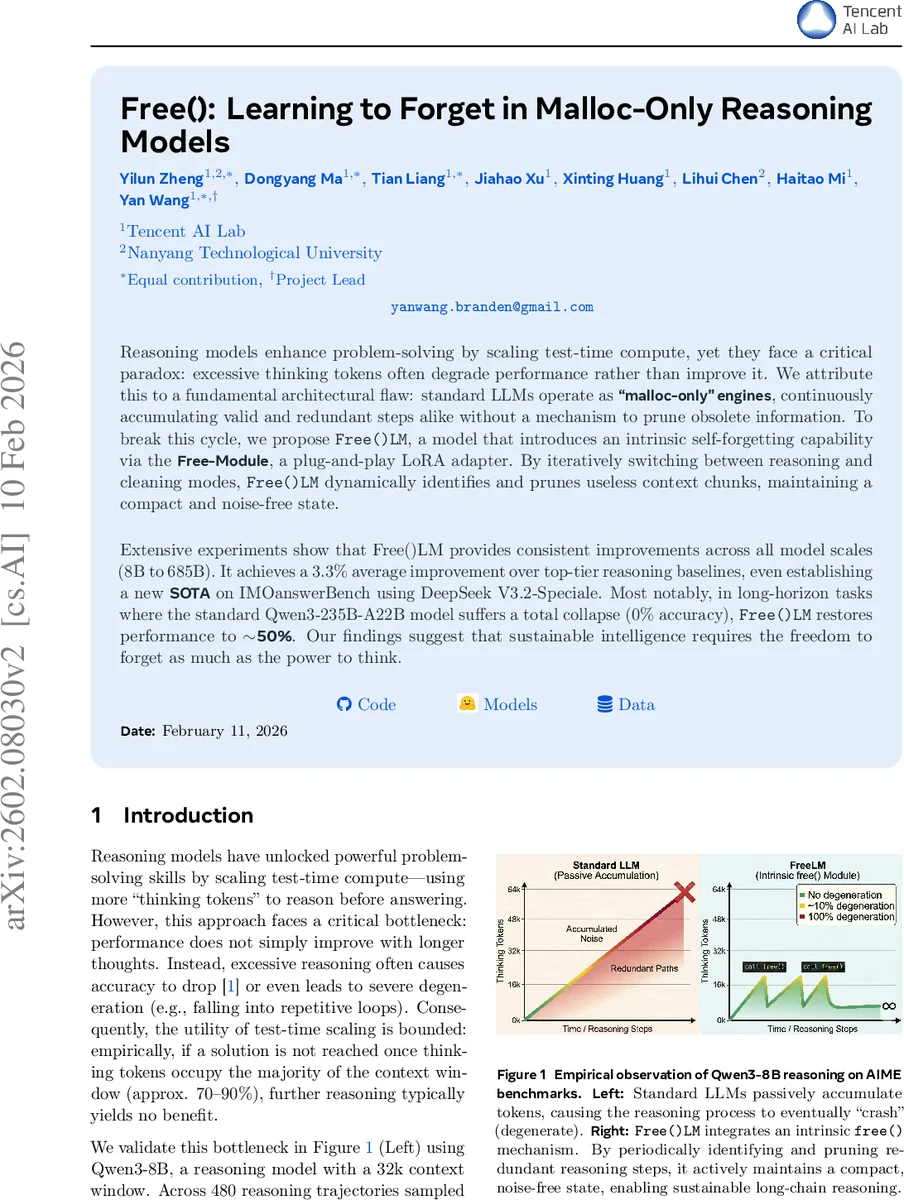

Reasoning models enhance problem-solving by scaling test-time compute, yet they face a critical paradox: excessive thinking tokens often degrade performance rather than improve it. We attribute this to a fundamental architectural flaw: standard LLMs operate as “malloc-only” engines, continuously accumulating valid and redundant steps alike without a mechanism to prune obsolete information. To break this cycle, we propose Free()LM, a model that introduces an intrinsic self-forgetting capability via the Free-Module, a plug-and-play LoRA adapter. By iteratively switching between reasoning and cleaning modes, Free()LM dynamically identifies and prunes useless context chunks, maintaining a compact and noise-free state. Extensive experiments show that Free()LM provides consistent improvements across all model scales (8B to 685B). It achieves a 3.3% average improvement over top-tier reasoning baselines, even establishing a new SOTA on IMOanswerBench using DeepSeek V3.2-Speciale. Most notably, in long-horizon tasks where the standard Qwen3-235B-A22B model suffers a total collapse (0% accuracy), Free()LM restores performance to 50%. Our findings suggest that sustainable intelligence requires the freedom to forget as much as the power to think.

💡 Research Summary

The paper identifies a fundamental architectural flaw in current large language models (LLMs) used for reasoning: they act as “malloc‑only” engines that continuously accumulate every generated token in the KV cache without any mechanism to discard obsolete or redundant information. As a result, when the number of thinking tokens grows, the context window becomes saturated, leading to repetitive loops, noise accumulation, and ultimately catastrophic collapse of performance on long‑horizon tasks.

To address this, the authors introduce Free()LM, a plug‑and‑play LoRA adapter called the Free‑Module that endows an LLM with an intrinsic self‑forgetting capability. The system operates in two alternating modes:

- Reasoning Mode (Unmerged) – the adapter is detached, and the backbone model generates reasoning tokens exactly as a vanilla LLM would.

- Cleaning Mode (Merged) – the adapter is merged into the backbone, prompting the model to scan the current context, identify useless spans, and emit structured pruning commands in JSON format. Each command consists of a “prefix” and a “suffix” string that uniquely anchor the start and end of a span to be removed.

An external executor parses these commands, deletes the identified substrings from the text, and updates the KV cache accordingly (either by re‑prefilling unchanged prefixes or by directly pruning cache entries and rotating positions). After cleaning, the adapter is unmerged and reasoning resumes on the now‑compact context.

A hyper‑parameter L_clean controls how often cleaning is triggered (e.g., every 5 k reasoning tokens). Two cache‑management strategies were explored: re‑prefilling unchanged prefixes and direct KV‑cache pruning. Because most serving stacks (e.g., vLLM) do not support native cache pruning, the authors adopt re‑prefilling for the main experiments.

Training such a self‑forgetting module is non‑trivial. Simple In‑Context Learning (ICL) approaches—asking the model itself or a strong external LLM to suggest deletions—yielded only marginal gains (~1%). To obtain high‑quality supervision, the authors built a data synthesis pipeline: they sampled 1 000 trajectories from DeepMath‑103k, segmented each into 1 k‑token chunks, and used Gemini‑2.5‑Pro to generate candidate pruning operations for each chunk, conditioning on the already‑cleaned prefix to mimic the incremental nature of inference. This produced ~8 000 candidates.

A strict reward filter was then applied: for each candidate, eight independent reasoning rollouts were executed on both the raw and the pruned context. The candidate was kept only if the pruned context’s accuracy was at least as high as the original. This rejection‑sampling step distilled the dataset to 6 648 high‑quality training instances, ensuring the model learns to prune only true noise without harming solution quality.

Experiments span three Qwen3 model sizes (8 B, 30 B, 235 B) and six long‑horizon reasoning benchmarks (AIME 24&25, BrUMO25, HMMT, BeyondAIME, Human’s Last Exam, IMOAnswerBench) plus four standard benchmarks (BBH, MMLU‑Pro, MMLU‑STEM, GPQA‑Diamond) to test safety on short‑horizon tasks. Baselines include vanilla inference, heuristic compression methods (H2O, ThinkCleary), and ICL‑based cleaning (both “No‑Train” and a fine‑tuned Gemini‑2.5‑Pro).

Key results:

- Across all model scales, Free()LM yields an average +3.3 % accuracy improvement while reducing token usage by 12–26 %.

- On the 8 B model, pass@1 rises from 44.24 % (vanilla) to 48.14 % with a token reduction from 17.5 k to 13.8 k.

- On the 30 B model, accuracy improves from 57.47 % to 62.30 % and tokens drop from 18.1 k to 15.9 k.

- The most dramatic effect appears on the 235 B model for long‑horizon tasks: vanilla performance collapses to 0 % when > 80 k thinking tokens are needed, whereas Free()LM restores ≈ 50 % accuracy and cuts token count from 21.7 k to 19.3 k.

- Heuristic compression baselines either degrade accuracy or fail to integrate with serving frameworks, highlighting the advantage of a learned, precise pruning policy.

A qualitative case study shows that Free()LM can excise large redundant blocks without requiring the backbone to regenerate missing information, unlike the Gemini‑based ICL approach which sometimes deletes useful clues and forces costly regeneration.

The authors conclude that active forgetting is essential for sustainable, long‑horizon reasoning. By providing a lightweight, modular LoRA‑based solution, Free()LM demonstrates that LLMs can be equipped with a self‑maintenance loop that keeps the reasoning state compact, reduces computational waste, and ultimately improves problem‑solving performance. This work opens a new direction for integrating memory‑management primitives directly into generative models, suggesting that future advances in AI may rely as much on “forgetting” mechanisms as on raw compute power.

Comments & Academic Discussion

Loading comments...

Leave a Comment