Rethinking Memory Mechanisms of Foundation Agents in the Second Half: A Survey

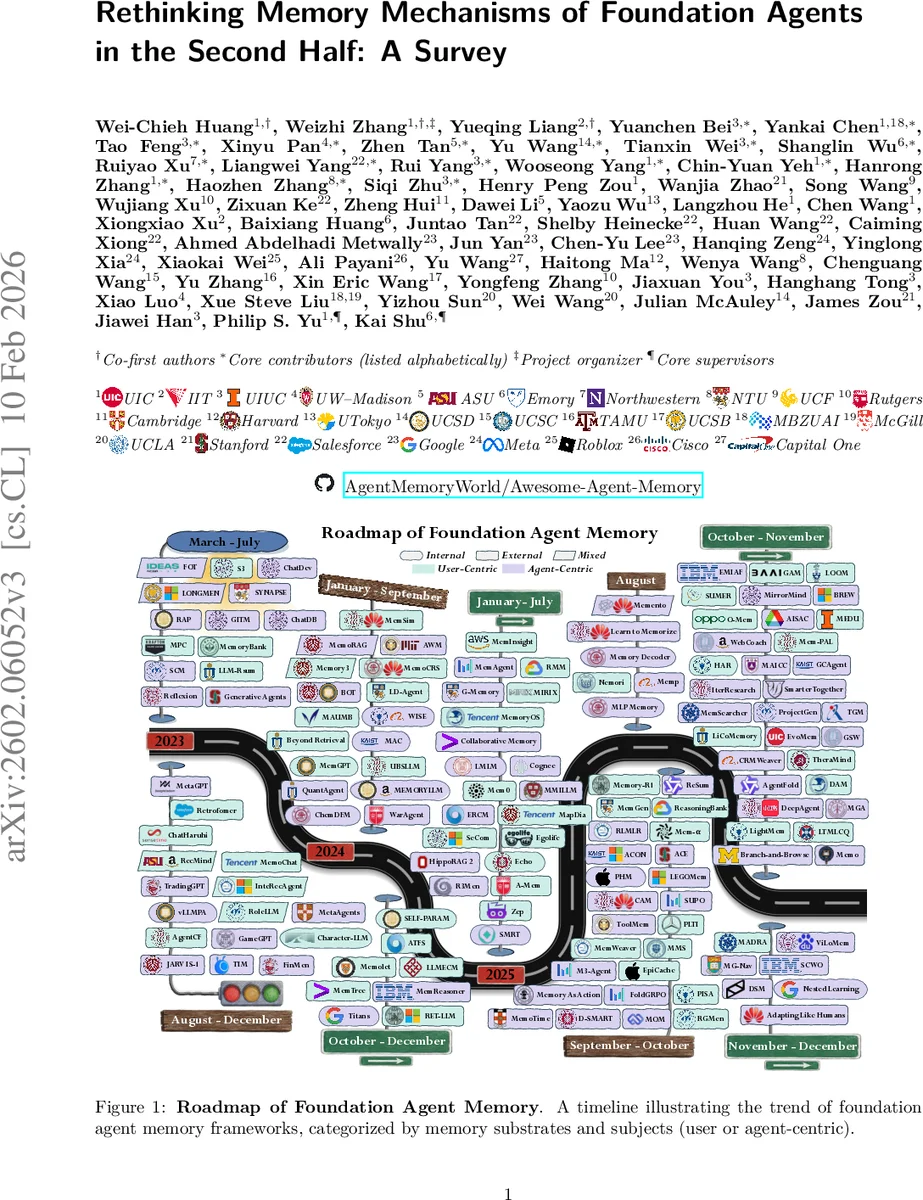

Artificial intelligence research is undergoing a paradigm shift: away from prioritizing model innovation measured by benchmark scores and toward emphasizing problem definition and rigorous real-world evaluation. As the field enters the “second half,” the central challenge becomes delivering real utility in long-horizon, dynamic, and user-dependent environments, where agents face context explosion and must continuously accumulate, manage, and selectively reuse large volumes of information across extended interactions. Memory, the subject of hundreds of papers released this year, therefore emerges as the critical solution for closing this utility gap. In this survey, we provide a unified view of foundation agent memory along three dimensions: memory substrate (internal and external), cognitive mechanism (episodic, semantic, sensory, working, and procedural), and memory subject (agent- and user-centric). We then analyze how memory is instantiated and operated under different agent topologies and highlight learning policies over memory operations. Finally, we review evaluation benchmarks and metrics for assessing memory utility, and outline open challenges and future directions.

💡 Research Summary

The paper positions itself at the transition point in AI research often referred to as the “second half,” where the focus shifts from scaling models and achieving high benchmark scores to delivering real‑world utility in long‑horizon, dynamic, and user‑centric environments. In such settings agents encounter “context explosion” and must continuously acquire, store, manage, and selectively retrieve large amounts of information across extended interactions. The authors argue that memory is the key technology to bridge the gap between idealized benchmark performance and practical deployment.

To bring order to the rapidly expanding literature on foundation‑agent memory, the survey introduces a unified taxonomy based on three orthogonal dimensions:

- Memory Substrate – distinguishes internal memory held inside the model (parametric weights, along with latent states and the KV‑cache) from external (non‑parametric) memory, including vector stores, databases, and hierarchical text logs. Internal memory offers fast, low‑latency access for short‑term reasoning, while external memory provides large‑scale, persistent storage for long‑term personalization.

- Cognitive Mechanism – draws inspiration from human memory systems and categorizes memory functions into episodic (who/what/when records), semantic (facts and concepts), sensory (raw multimodal embeddings), working (current task‑relevant context and intermediate states), and procedural (skills and tool‑use routines). The survey explains how these mechanisms complement each other: sensory buffers filter raw inputs, working memory holds the current plan, episodic memory supplies past experiences, semantic memory offers abstract knowledge, and procedural memory enables reusable action sequences.

- Memory Subject – separates agent‑centric memory (the agent’s own trajectories, learned heuristics, and self‑evolved policies) from user‑centric memory (personal preferences, constraints, and interaction histories). The authors discuss the need to keep the two synchronized, as well as privacy‑preserving techniques such as access control, retention policies, and encryption.
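One way to make the three dimensions concrete is to tag every stored record with a substrate, a mechanism, and a subject, and to gate user‑centric records behind access control and a retention window. The sketch below is illustrative only: the class names, the `owner` and `created_at` fields, and the `can_read` check are hypothetical and are not taken from the survey.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Substrate(Enum):
    INTERNAL = "internal"  # weights, latent states, KV cache
    EXTERNAL = "external"  # vector stores, databases, text logs


class Mechanism(Enum):
    EPISODIC = "episodic"
    SEMANTIC = "semantic"
    SENSORY = "sensory"
    WORKING = "working"
    PROCEDURAL = "procedural"


class Subject(Enum):
    AGENT = "agent"
    USER = "user"


@dataclass
class MemoryRecord:
    content: str
    substrate: Substrate
    mechanism: Mechanism
    subject: Subject
    owner: Optional[str] = None  # hypothetical: owning user id for user-centric records
    created_at: float = 0.0      # hypothetical: creation time in seconds


def can_read(record: MemoryRecord, requester: str, now: float, retention_s: float) -> bool:
    """Hypothetical policy: user-centric records are readable only by their owner
    and only while they are younger than the retention window."""
    if record.subject is Subject.USER:
        if record.owner != requester:
            return False  # access control: not the owning user
        if now - record.created_at > retention_s:
            return False  # retention policy: record has expired
    return True  # agent-centric records are unrestricted in this sketch
```

For example, a record such as `MemoryRecord("prefers vegetarian meals", Substrate.EXTERNAL, Mechanism.SEMANTIC, Subject.USER, owner="alice")` would be visible to `"alice"` until the retention window lapses and never to any other requester.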

The paper then examines how memory is instantiated in different agent topologies. In single‑agent systems, memory operations (read, write, delete) form a closed loop, with policies for recency‑based eviction, importance‑based compression, and summarization. In multi‑agent systems, additional challenges arise: routing memory requests across agents, maintaining consistency of shared stores, handling versioning, and resolving conflicts when multiple agents update the same entry. The authors highlight emerging architectures that combine internal KV‑caches with external vector databases, and discuss mechanisms for distributed consensus.
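The read/write/delete loop with recency‑based eviction, together with versioning for concurrent updates, can be sketched as a small key‑value store. This is a minimal sketch under stated assumptions: eviction is plain least‑recently‑used, and conflicts between agents are detected with an optimistic compare‑and‑set on a per‑entry version number. The `MemoryStore` class and its `expected_version` parameter are hypothetical, not an architecture described in the survey.

```python
from collections import OrderedDict
from typing import Optional, Tuple


class MemoryStore:
    """Hypothetical bounded memory: LRU eviction plus per-entry versions so that
    concurrent writers (e.g. several agents) can detect conflicting updates."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        # key -> (value, version); insertion order tracks recency of use
        self._data: "OrderedDict[str, Tuple[str, int]]" = OrderedDict()

    def read(self, key: str) -> Optional[Tuple[str, int]]:
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def write(self, key: str, value: str, expected_version: Optional[int] = None) -> bool:
        """Store `value`. If `expected_version` is given and another writer has
        bumped the version since the caller read it, reject the write."""
        current = self._data.get(key)
        current_version = current[1] if current else 0
        if expected_version is not None and expected_version != current_version:
            return False  # conflict: caller should re-read and merge
        self._data[key] = (value, current_version + 1)
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used entry
        return True

    def delete(self, key: str) -> bool:
        return self._data.pop(key, None) is not None
```

Optimistic versioning keeps the common, non‑conflicting path cheap; a production multi‑agent system would more likely rely on the store's own transactions or a consensus protocol, which this sketch does not attempt.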

A substantial portion of the survey is devoted to learning policies for memory management. Three major approaches are identified:

- Prompt‑based memory augmentation, where retrieved chunks are directly injected into the LLM prompt. This yields quick gains but scales poorly with context length.

- Parameterized memory learning, where memory representations are treated as trainable parameters, allowing the model to internalize frequently accessed facts and procedural patterns through fine‑tuning.

- Reinforcement learning for memory operations, which formulates read/write/delete decisions as actions in an MDP and optimizes them using reward signals such as task success, user satisfaction, or computational cost.
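The first approach, prompt‑based augmentation, reduces to ranking stored chunks against the query and pasting the best ones into the prompt until a budget runs out, which is also why it scales poorly: every injected chunk consumes context. A minimal sketch, assuming precomputed embedding vectors, cosine similarity for ranking, and a whitespace word count as a stand‑in for real tokenization; `build_prompt` and its parameters are hypothetical.

```python
import math
from typing import List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def build_prompt(query: str, query_vec: List[float],
                 memories: List[Tuple[str, List[float]]],
                 k: int, budget_words: int) -> str:
    """Hypothetical retrieve-then-inject step: rank chunks by cosine similarity,
    then add the top-k to the prompt until the word budget is exhausted."""
    ranked = sorted(memories, key=lambda m: cosine(query_vec, m[1]), reverse=True)
    selected, used = [], 0
    for text, _ in ranked[:k]:
        cost = len(text.split())  # crude length estimate; real systems count model tokens
        if used + cost > budget_words:
            break  # budget exhausted: remaining relevant chunks are simply dropped
        selected.append(text)
        used += cost
    context = "\n".join(f"- {t}" for t in selected)
    return f"Relevant memory:\n{context}\n\nUser: {query}"
```

Shrinking `budget_words` shows the scaling limit directly: relevant chunks that do not fit are discarded rather than compressed, which is what motivates the parameterized and RL‑based alternatives.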

The authors also discuss scalability across two axes: context length and environmental complexity. For limited‑context settings, techniques like KV‑cache reuse and on‑the‑fly summarization are emphasized. For real‑world, multimodal, and open‑world environments, the survey advocates external, sharded, and multimodal‑indexed stores, as well as hierarchical caching to keep latency low while preserving large knowledge bases.
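Hierarchical caching keeps a small, fast tier in front of a large, slow store and promotes entries on access, so frequently used memories stay cheap to read while the full knowledge base remains available. The sketch below assumes a two‑tier layout with LRU demotion and uses a plain dictionary as a stand‑in for a sharded external database; `TwoTierMemory` and its counters are hypothetical.

```python
from collections import OrderedDict
from typing import Dict, Optional


class TwoTierMemory:
    """Hypothetical hierarchical cache: a small LRU hot tier in front of a large
    backing store (a dict stands in for a sharded external database)."""

    def __init__(self, hot_capacity: int, backing: Dict[str, str]) -> None:
        self.hot_capacity = hot_capacity
        self.hot: "OrderedDict[str, str]" = OrderedDict()
        self.backing = backing
        self.hot_hits = 0       # cheap reads served by the fast tier
        self.backing_reads = 0  # expensive reads that reached the slow tier

    def get(self, key: str) -> Optional[str]:
        if key in self.hot:
            self.hot.move_to_end(key)  # refresh recency
            self.hot_hits += 1
            return self.hot[key]
        self.backing_reads += 1
        value = self.backing.get(key)
        if value is not None:
            self.hot[key] = value  # promote into the fast tier
            if len(self.hot) > self.hot_capacity:
                self.hot.popitem(last=False)  # demote the least recently used entry
        return value
```

The ratio `hot_hits / (hot_hits + backing_reads)` is the quantity such a design tries to maximize, since it determines how much latency the slow tier adds on average.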

In the evaluation section, the paper catalogs metrics that capture both functional performance (accuracy, recall, retrieval latency) and utility aspects (memory retention span, user satisfaction, cost efficiency). It surveys existing benchmarks such as LongBench, MemBench, and Multi‑Session Dialog, noting that most current suites still focus on short‑term tasks. The authors call for new benchmarks that jointly assess long‑term reasoning, personalization, and robustness to distribution shift.
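Of the functional metrics listed, retrieval recall is the simplest to compute. The sketch below uses one common definition, recall@k (the fraction of gold memory items that appear among the top‑k retrieved items, averaged over queries); the survey may define recall differently, and returning 0.0 for an empty gold set is an arbitrary convention chosen here.

```python
from typing import List, Sequence, Set


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of gold memory items found in the top-k retrieved items."""
    if not relevant:
        return 0.0  # convention chosen for this sketch
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def mean_recall_at_k(runs: List[Sequence[str]], golds: List[Set[str]], k: int) -> float:
    """Average recall@k over a set of queries (one retrieval run per query)."""
    scores = [recall_at_k(r, g, k) for r, g in zip(runs, golds)]
    return sum(scores) / len(scores) if scores else 0.0
```

For instance, if a query's gold memories are `{"m1", "m2"}` and the retriever returns `["m3", "m1", "m7"]`, recall@2 is 0.5 because only `m1` is found.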

Finally, the survey outlines six future research directions:

- Continual learning and self‑evolving memory – mechanisms for automatic consolidation, forgetting, and restructuring of stored knowledge over time.

- Multi‑human‑agent memory organization – shared memory schemas that support collaboration among many users and agents while handling privacy and conflict resolution.

- Memory infrastructure and efficiency – low‑cost storage, compression algorithms, and hardware‑aware designs for large‑scale memory.

- Life‑long personalization and trustworthy memory – balancing deep personalization with strict privacy guarantees.

- Memory for multimodal, embodied, and world‑model agents – integrating vision, audio, and proprioceptive streams into a unified memory substrate.

- Real‑world benchmarking and evaluation – building domain‑specific, long‑horizon testbeds in healthcare, education, and industry.
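To make the first direction more concrete, automatic consolidation and forgetting can be modeled with a decaying strength per memory that is reinforced on access and pruned below a threshold. This is a hypothetical sketch inspired by exponential forgetting curves, not a mechanism proposed in the survey; `Trace`, `half_life`, `boost`, and `threshold` are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Trace:
    strength: float     # hypothetical memory strength, grows with reinforcement
    last_access: float  # time of most recent access (arbitrary units)


def retention(trace: Trace, now: float, half_life: float) -> float:
    """Exponential forgetting: strength halves every `half_life` time units."""
    return trace.strength * 0.5 ** ((now - trace.last_access) / half_life)


def access(store: Dict[str, Trace], key: str, now: float,
           half_life: float, boost: float = 1.0) -> None:
    """Consolidate on access: keep the decayed strength and add a fixed boost."""
    t = store[key]
    t.strength = retention(t, now, half_life) + boost
    t.last_access = now


def forget(store: Dict[str, Trace], now: float, half_life: float, threshold: float) -> None:
    """Prune memories whose decayed strength has fallen below `threshold`."""
    for key in [k for k, t in store.items() if retention(t, now, half_life) < threshold]:
        del store[key]
```

Memories that keep being used accumulate strength and survive pruning, while rarely used ones fade; a real self‑evolving system would also need restructuring (merging, abstracting) that this sketch leaves out.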

Overall, the survey provides a comprehensive, system‑level framework that links memory substrates, cognitive functions, and subject focus to concrete architectural choices, learning strategies, and evaluation practices. It serves as a roadmap for researchers aiming to build foundation agents that can remember, adapt, and remain useful throughout prolonged, real‑world deployments.
