Implicit State Estimation via Video Replanning

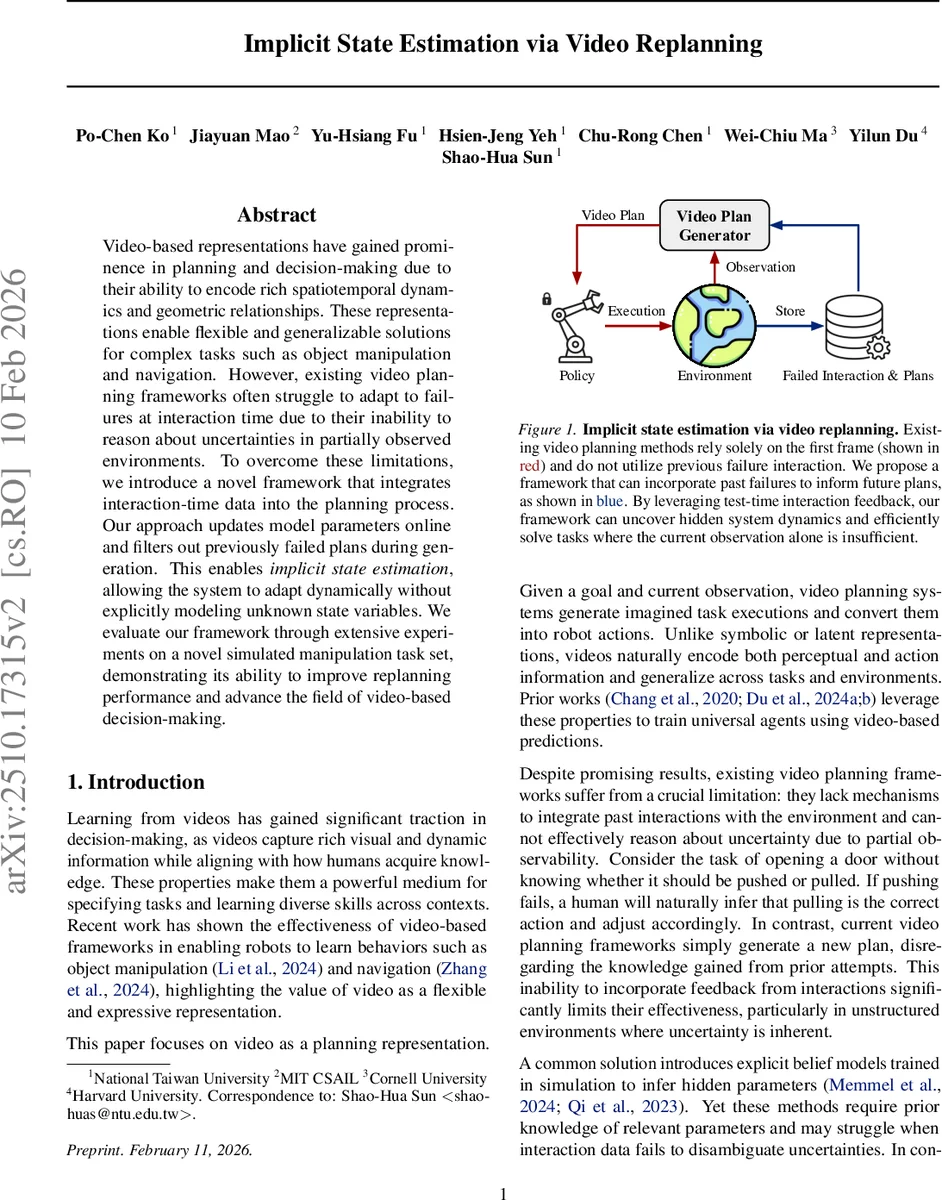

Video-based representations have gained prominence in planning and decision-making due to their ability to encode rich spatiotemporal dynamics and geometric relationships. These representations enable flexible and generalizable solutions for complex tasks such as object manipulation and navigation. However, existing video planning frameworks often struggle to adapt to failures at interaction time due to their inability to reason about uncertainties in partially observed environments. To overcome these limitations, we introduce a novel framework that integrates interaction-time data into the planning process. Our approach updates model parameters online and filters out previously failed plans during generation. This enables implicit state estimation, allowing the system to adapt dynamically without explicitly modeling unknown state variables. We evaluate our framework through extensive experiments on a new simulated manipulation benchmark, demonstrating its ability to improve replanning performance and advance the field of video-based decision-making.

💡 Research Summary

The paper tackles a fundamental limitation of current video‑based planning systems: they rely solely on an initial observation and ignore the rich information contained in failed execution attempts. As a result, when crucial physical parameters of the environment (mass, friction, hinge direction, etc.) are unknown, the planner cannot adapt its predictions and repeatedly generates infeasible plans. To address this, the authors introduce Implicit State Estimation (ISE), a framework that continuously incorporates interaction‑time video data into the planning loop without ever explicitly modeling hidden state variables.

ISE maintains two test‑time buffers: a Failed Plan Buffer (Bₚ) that stores video plans that led to unsuccessful executions, and a Failed Interaction Buffer (Bᵢ) that stores the actual interaction videos together with a binary success flag. At each replanning round the system proceeds through three stages. First, a Video Plan Generator produces a set of candidate future videos conditioned on the current first‑frame f₀ and a latent “state embedding” e. This embedding is meant to capture the hidden physical properties of the objects involved. Second, a Rejection Module selects the candidate whose video embedding is least similar to any plan stored in Bₚ, thereby actively avoiding repeats of previously failed hypotheses. Third, an Action Module translates the selected video into executable robot actions (e.g., via an inverse dynamics model, a goal‑conditioned policy, or a point‑tracking controller). If execution succeeds, the buffers remain unchanged; if it fails, the selected plan is added to Bₚ and the resulting interaction video is added to Bᵢ.

The state embedding e is updated online using a Retrieval Module. Offline, the authors collect an experience dataset D = {(vᵢ, oᵢ, sᵢ)} containing both successful and failed interaction videos, each tagged with an object identifier oᵢ. A pretrained vision encoder (e.g., CLIP or DINOv2) encodes each video into a feature vector eᵢ; for each object ID a canonical embedding eₒ is chosen from a successful trial. At test time, the current interaction video is encoded, distances to all stored embeddings are computed, and a softmax over the negative distances yields a probability distribution. A stochastic sample from this distribution provides a new candidate embedding, which is then refined by a “generative identification module” that shares parameters with the Video Plan Generator. This refinement step performs a few gradient steps (with frozen generator weights) to better align the embedding with the observed dynamics, effectively performing a lightweight, online system identification without explicit parameter models.

Experiments are conducted on a novel manipulation benchmark built on the Meta‑World suite, called the Meta‑World System Identification Suite. The benchmark randomizes hidden parameters such as object mass, friction coefficients, and hinge directions across episodes, forcing the robot to infer these properties from interaction. The authors compare ISE against (i) vanilla video‑based planners that do not use failure feedback, (ii) meta‑reinforcement‑learning baselines that learn latent context vectors, and (iii) explicit system‑identification approaches that maintain a belief over θ. Evaluation metrics include replanning failure rate, video‑to‑goal similarity (L2 distance in embedding space), and overall task success rate.

Results show that ISE reduces replanning failures by more than 30 % relative to the best baseline, achieves higher similarity between generated plans and the true goal videos, and attains higher overall success rates, especially in settings where the hidden parameters are completely unknown and no prior simulation data is available. The ablation study confirms that both components—the plan‑rejection buffer and the stochastic retrieval with refinement—contribute significantly to performance.

Key contributions of the work are:

- Introducing a principled way to fuse real‑time interaction feedback into video‑based planning, enabling implicit inference of hidden physical states.

- Designing a dual‑buffer architecture that explicitly discards previously failed hypotheses while preserving useful failure information for future updates.

- Leveraging pretrained vision encoders and a shared generative identification module to perform online, gradient‑based refinement of latent embeddings without hand‑crafted belief models.

- Providing a new Meta‑World‑based benchmark for evaluating online adaptation in manipulation tasks with unknown dynamics.

The paper opens several avenues for future research: extending ISE to multi‑object scenes with complex contact networks, integrating language‑based goal specifications for multimodal planning, and deploying the system on real‑world robots where sensor noise and latency further challenge online adaptation. Overall, Implicit State Estimation via Video Replanning represents a significant step toward robust, uncertainty‑aware robot planning that can learn from its own mistakes without requiring exhaustive prior modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment