Adapting Noise to Data: Generative Flows from 1D Processes

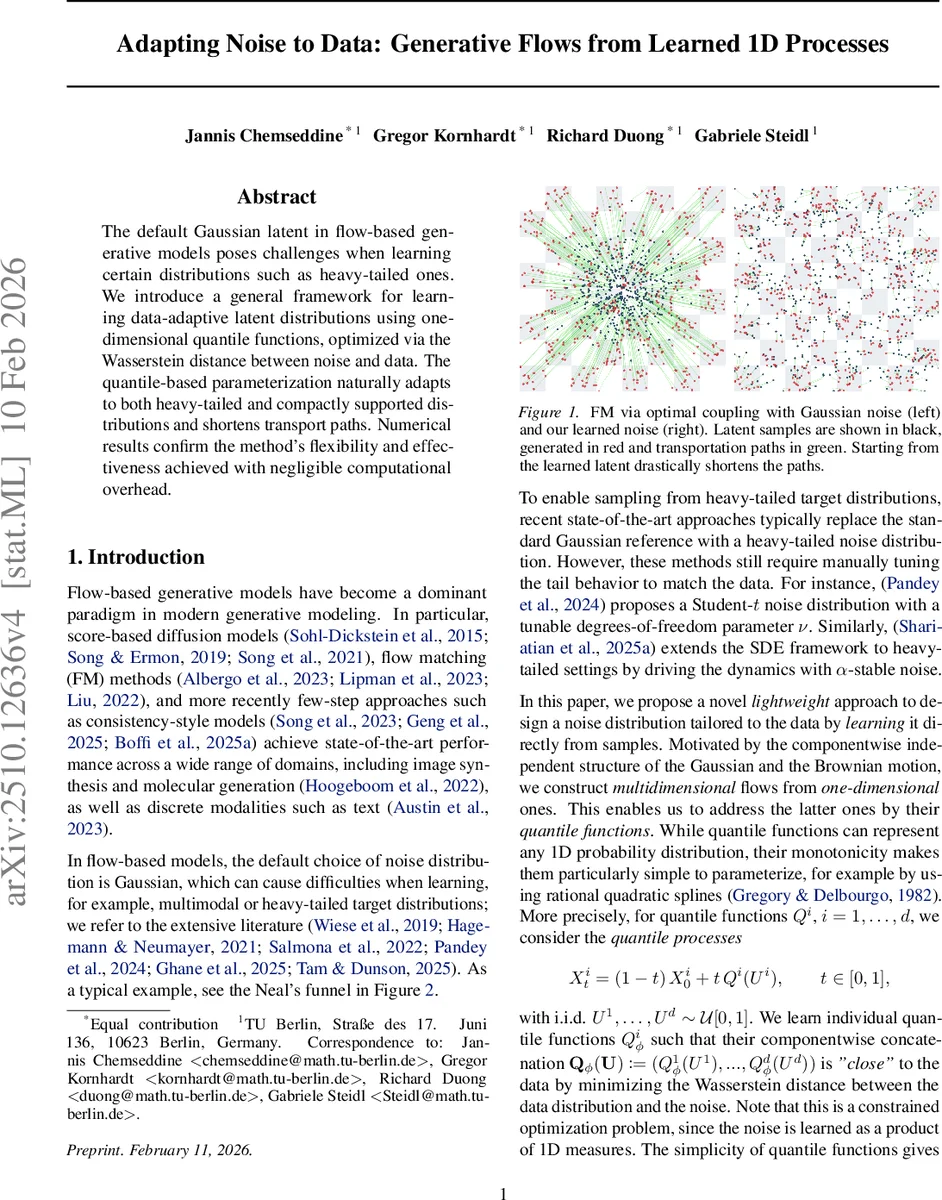

The default Gaussian latent in flow-based generative models poses challenges when learning certain distributions such as heavy-tailed ones. We introduce a general framework for learning data-adaptive latent distributions using one-dimensional quantile functions, optimized via the Wasserstein distance between noise and data. The quantile-based parameterization naturally adapts to both heavy-tailed and compactly supported distributions and shortens transport paths. Numerical results confirm the method’s flexibility and effectiveness achieved with negligible computational overhead.

💡 Research Summary

This paper addresses a fundamental limitation of flow‑based generative models, especially those that rely on Flow Matching (FM), namely the use of a fixed isotropic Gaussian latent distribution. While Gaussian noise works well for many tasks, it struggles with target distributions that exhibit heavy tails, multiple modes, or compact support. Existing remedies replace the Gaussian with heavy‑tailed families such as Student‑t or α‑stable laws, but these require manual tuning of tail parameters and still do not guarantee an optimal match to the data distribution.

The authors propose a completely data‑adaptive approach: instead of prescribing a parametric latent, they learn a one‑dimensional quantile function for each coordinate of the latent space. A quantile function Q(u) is the inverse cumulative distribution function (CDF) and uniquely characterises any one‑dimensional probability law. Crucially, the mapping μ ↦ Q_μ is an isometric embedding of the 2‑Wasserstein space (P₂(ℝ), W₂) into the Hilbert space L²(0,1). Consequently, the squared Wasserstein distance between two distributions equals the L² distance between their quantile functions. By parameterising each Q_i with a monotone rational‑quadratic spline (or any other monotone neural architecture), the authors can directly minimise the Wasserstein distance between the empirical data distribution and the learned latent distribution using stochastic gradient descent.

To integrate these learned one‑dimensional noises into a full‑dimensional flow, the paper adopts a mean‑reverting stochastic process of the form

X_t = f(t) X₀ + N_{g(t)} ,

where f and g are smooth scheduling functions (f(0)=1, f(1)=0, g(0)=0, g(1)=1) and N_t is a vector of independent one‑dimensional processes. By replacing each N_i,t with the quantile process Q_{g(t)}(U_i) where U_i∼Uniform(0,1), the authors obtain a “quantile process”

Z_t = f(t) X₀ + Q_{g(t)}(U) .

At t=0 the process starts at the data point X₀, and at t=1 it ends at a latent distribution defined by the learned quantile functions Q_φ. This construction yields an explicit optimal coupling between data and latent, shortening the transport paths compared with the standard independent Gaussian coupling.

The paper explores three concrete one‑dimensional noise families: (1) the Wiener process (standard Gaussian diffusion), (2) the Kac process, whose associated PDE is a damped wave equation and can generate heavy‑tailed or compactly supported marginals, and (3) a uniform‑based process driven by the gradient flow of the Maximum Mean Discrepancy (MMD). For each, the authors derive the conditional velocity field required by Flow Matching and show how the quantile representation makes them usable in arbitrary dimensions despite known analytical difficulties (e.g., mass non‑conservation for the Kac process in d≥3).

A further contribution is the definition of “quantile interpolants”

I_{s,t}(x,y) = f(s) x + Q_{g(s)}( R_{g(t)}( y − f(t) x ) ) ,

which generalise the linear interpolants used in DDIM and the consistency models of recent diffusion work. The interpolants satisfy the semigroup property and guarantee that applying the learned quantile process at intermediate times reproduces the correct intermediate states, enabling few‑step sampling schemes such as Inductive Moment Matching (IMM).

Experimental evaluation covers challenging synthetic benchmarks, including Neal’s funnel, heavy‑tailed Gaussian mixtures, and compactly supported multimodal distributions. Results demonstrate that the learned quantile latent dramatically reduces the average path length of the ODE solver, improves sample quality metrics (FID, IS, log‑likelihood), and does so with negligible extra computational cost because spline evaluation is cheap and the number of parameters per dimension remains modest. Importantly, no manual hyper‑parameter tuning of tail behavior is required; the model discovers the appropriate shape directly from data.

In summary, the paper introduces a principled, theoretically grounded framework for learning data‑adaptive latent distributions via one‑dimensional quantile functions. By embedding these quantiles into a mean‑reverting flow, the method preserves the elegance of Flow Matching while overcoming the rigidity of Gaussian latents. It opens the door to efficient generation of heavy‑tailed, multimodal, and compactly supported data, and suggests future extensions such as incorporating cross‑dimensional dependencies directly into the quantile parametrisation or exploiting radially symmetric processes for richer high‑dimensional structures.

Comments & Academic Discussion

Loading comments...

Leave a Comment