MILR: Improving Multimodal Image Generation via Test-Time Latent Reasoning

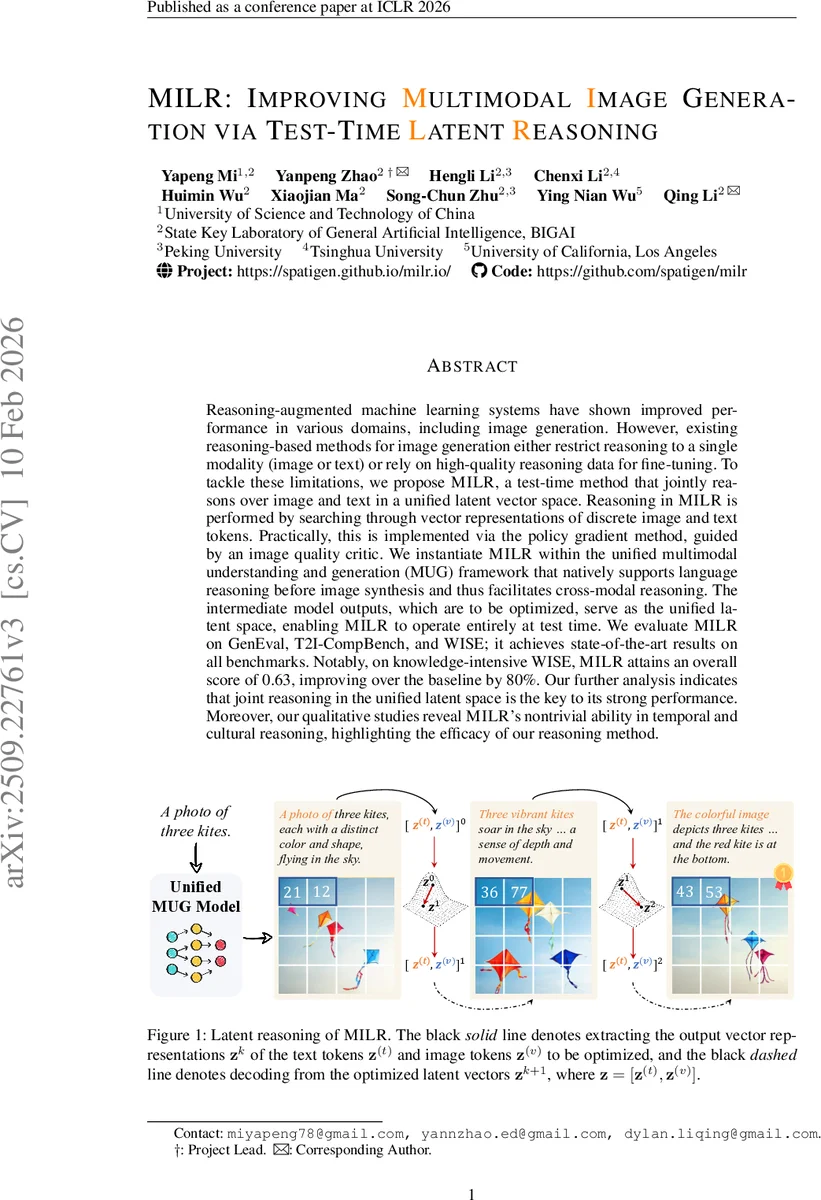

Reasoning-augmented machine learning systems have shown improved performance in various domains, including image generation. However, existing reasoning-based methods for image generation either restrict reasoning to a single modality (image or text) or rely on high-quality reasoning data for fine-tuning. To tackle these limitations, we propose MILR, a test-time method that jointly reasons over image and text in a unified latent vector space. Reasoning in MILR is performed by searching through vector representations of discrete image and text tokens. Practically, this is implemented via the policy gradient method, guided by an image quality critic. We instantiate MILR within the unified multimodal understanding and generation (MUG) framework that natively supports language reasoning before image synthesis and thus facilitates cross-modal reasoning. The intermediate model outputs, which are to be optimized, serve as the unified latent space, enabling MILR to operate entirely at test time. We evaluate MILR on GenEval, T2I-CompBench, and WISE, achieving state-of-the-art results on all benchmarks. Notably, on knowledge-intensive WISE, MILR attains an overall score of 0.63, improving over the baseline by 80%. Our further analysis indicates that joint reasoning in the unified latent space is the key to its strong performance. Moreover, our qualitative studies reveal MILR’s non-trivial ability in temporal and cultural reasoning, highlighting the efficacy of our reasoning method.

💡 Research Summary

The paper introduces MILR (Multimodal Image generation via test‑time Latent Reasoning), a novel test‑time reasoning framework that improves text‑to‑image generation by jointly optimizing latent representations of both text and image tokens. Unlike prior approaches that either reason in a single modality or require extensive fine‑tuning on curated reasoning data, MILR operates entirely at inference time, leaving the underlying model parameters untouched. The method builds on a unified multimodal understanding and generation (MUG) model, which produces intermediate token embeddings (the “latent space”) that are shared across modalities. MILR treats these embeddings as continuous vectors z and applies REINFORCE policy‑gradient updates to maximize a reward R(V_f, c) that measures the compatibility between the generated image V_f and the textual instruction c.
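To make the update concrete, here is a minimal sketch of a REINFORCE-style search over a continuous latent vector. It is a toy under stated assumptions, not the authors' implementation: the Gaussian sampling around z, the batch-mean baseline, the hyperparameters, and the names `reinforce_latent_search`, `toy_reward`, and `TARGET` are all illustrative. The quadratic toy reward stands in for the critic R(V_f, c), which in MILR scores a decoded image against the instruction.

```python
import numpy as np

# Hypothetical optimum of the toy reward; MILR has no such known target.
TARGET = np.ones(4)


def toy_reward(z):
    """Stand-in for R(V_f, c): higher when z is closer to TARGET."""
    return -float(np.sum((z - TARGET) ** 2))


def reinforce_latent_search(z0, reward_fn, steps=200, n_samples=16,
                            sigma=0.1, lr=0.05, seed=0):
    """REINFORCE over a latent vector with a Gaussian policy N(z, sigma^2 I).

    The policy form, baseline, and hyperparameters are assumptions made
    for this sketch only.
    """
    rng = np.random.default_rng(seed)
    z = np.asarray(z0, dtype=float).copy()
    for _ in range(steps):
        # Sample perturbed latents around the current mean z.
        eps = rng.standard_normal((n_samples, z.shape[0]))
        rewards = np.array([reward_fn(z + sigma * e) for e in eps])
        # Subtract the batch-mean reward as a variance-reducing baseline.
        advantages = rewards - rewards.mean()
        # Score function of the Gaussian w.r.t. its mean is eps / sigma.
        grad = (advantages[:, None] * eps).mean(axis=0) / sigma
        # Gradient ascent on expected reward; model weights are never touched.
        z = z + lr * grad
    return z


z_init = np.zeros(4)
z_final = reinforce_latent_search(z_init, toy_reward)
print("reward before:", toy_reward(z_init))
print("reward after: ", toy_reward(z_final))
```

Only the latent `z` changes in this loop, which mirrors the summary's point that the model parameters stay fixed. In the real setting each reward evaluation would involve decoding an image and querying the critic, so a step is far more expensive than in this toy.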

To keep computation tractable, MILR does not optimize all token embeddings; instead it refines only the first λ_t fraction of text token latents and the first λ_v fraction of image token latents (empirically set to 0.2 and 0.02 respectively). After the latent update, the model’s standard autoregressive decoder generates the remaining text tokens, and the image decoder produces the final image. This prefix‑only strategy leverages the observation that early tokens govern global structure while later tokens affect fine details.
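The prefix selection can be sketched as a simple split of a latent sequence. This is an illustration under assumptions: the helper name `split_prefix`, the array shapes, the sequence lengths, and the rounding rule (nearest integer, at least one token) are hypothetical, since the summary states only the fractions 0.2 and 0.02.

```python
import numpy as np


def split_prefix(latents, fraction):
    """Split a (num_tokens, dim) latent array into an optimized prefix
    and an untouched remainder.

    Rounding to the nearest integer with a floor of one token is an
    assumption of this sketch, not a detail given in the source.
    """
    n = latents.shape[0]
    k = min(n, max(1, round(n * fraction)))
    return latents[:k], latents[k:]


# Hypothetical sequence lengths and embedding size, for illustration only.
text_latents = np.zeros((50, 8))
image_latents = np.zeros((1000, 8))

text_prefix, text_rest = split_prefix(text_latents, 0.2)      # lambda_t
image_prefix, image_rest = split_prefix(image_latents, 0.02)  # lambda_v
print(text_prefix.shape[0], image_prefix.shape[0])
```

Only `text_prefix` and `image_prefix` would be passed to the latent search; the remaining tokens are regenerated by the model's own decoding after the update, as described above.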

Experiments on three widely used benchmarks (GenEval, T2I‑CompBench, and WISE) show that MILR achieves state‑of‑the‑art results on all three. On GenEval it reaches 0.95, matching the best training‑based models and surpassing the strongest test‑time scaling method by 4.4%. On the knowledge‑intensive WISE benchmark it attains an overall score of 0.63, an 80% improvement over the baseline and a 16.7% gain over the strongest prior model. Ablation studies confirm that joint cross‑modal reasoning in the unified latent space is the primary driver of performance: optimizing text and image latents together yields higher scores than optimizing either modality alone. Qualitative analyses demonstrate MILR’s ability to handle culturally and temporally nuanced prompts, a capability that prior methods struggle with.
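As a back-of-the-envelope check, the reference scores implied by these percentages can be recovered with one line of arithmetic. This assumes the reported gains are relative improvements (new = old × (1 + gain)), which the summary does not state explicitly; the helper name `implied_reference` is hypothetical, and the derived values are approximations inferred from the rounded figures above, not numbers quoted from the paper.

```python
def implied_reference(score, relative_gain_pct):
    """Reference score implied if `score` is `relative_gain_pct` percent
    higher than it (assumes a relative, not absolute, improvement)."""
    return score / (1.0 + relative_gain_pct / 100.0)


# Figures taken from the summary; outputs are derived estimates only.
wise_baseline = implied_reference(0.63, 80.0)
wise_prior_best = implied_reference(0.63, 16.7)
geneval_prior_test_time = implied_reference(0.95, 4.4)

print(f"WISE baseline (implied):         {wise_baseline:.2f}")
print(f"WISE strongest prior (implied):  {wise_prior_best:.2f}")
print(f"GenEval test-time prior (implied): {geneval_prior_test_time:.2f}")
```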

The authors discuss limitations: test‑time latent search adds computational overhead, and the quality of the reward model directly influences optimization direction. Future work may explore more efficient latent sampling, multi‑reward ensembles, or pre‑training of the latent space itself to provide better initialization. Overall, MILR presents a compelling test‑time reasoning paradigm that bridges the modality gap without extra training, delivering superior image generation quality on diverse tasks.
