Analyzing the Effects of Supervised Fine-Tuning on Model Knowledge from Token and Parameter Levels

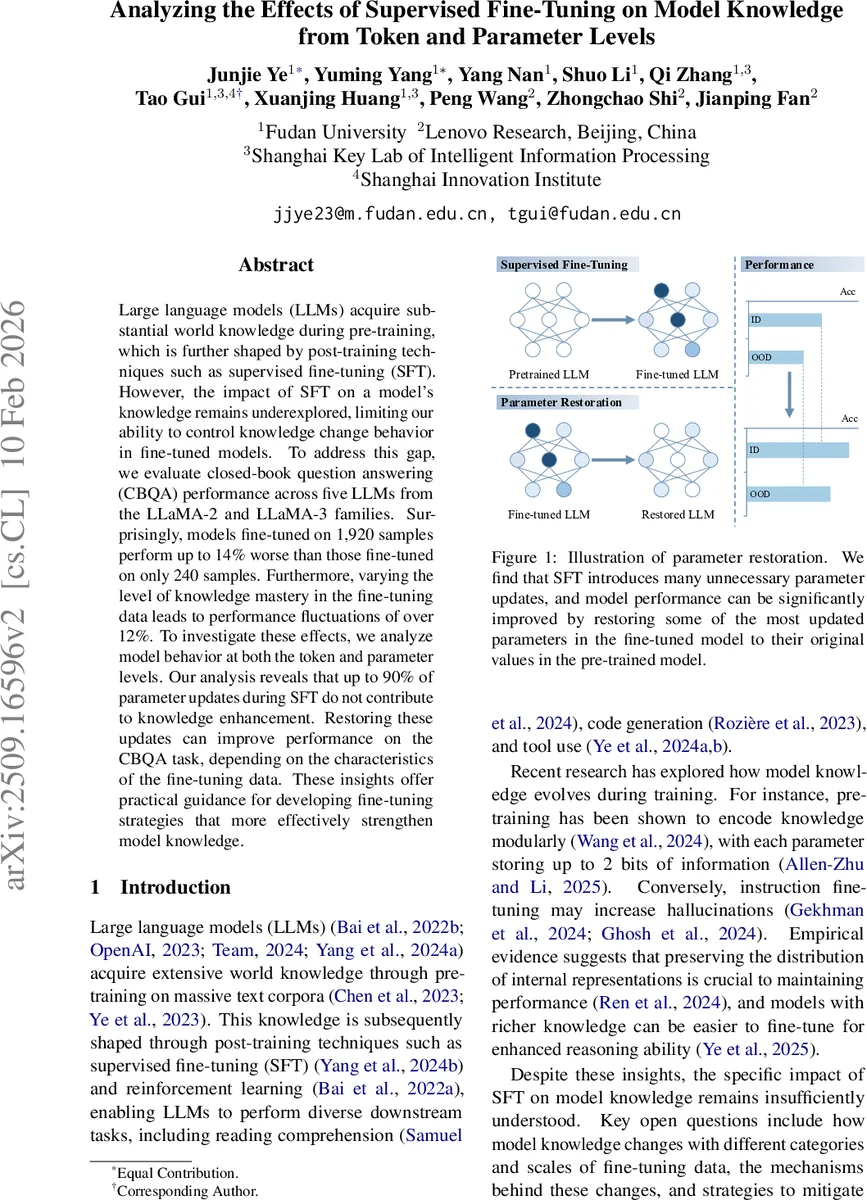

Large language models (LLMs) acquire substantial world knowledge during pre-training, which is further shaped by post-training techniques such as supervised fine-tuning (SFT). However, the impact of SFT on a model’s knowledge remains underexplored, limiting our ability to control knowledge change behavior in fine-tuned models. To address this gap, we evaluate closed-book question answering (CBQA) performance across five LLMs from the LLaMA-2 and LLaMA-3 families. Surprisingly, models fine-tuned on 1,920 samples perform up to 14% worse than those fine-tuned on only 240 samples. Furthermore, varying the level of knowledge mastery in the fine-tuning data leads to performance fluctuations of over 12%. To investigate these effects, we analyze model behavior at both the token and parameter levels. Our analysis reveals that up to 90% of parameter updates during SFT do not contribute to knowledge enhancement. Restoring these updates can improve performance on the CBQA task, depending on the characteristics of the fine-tuning data. These insights offer practical guidance for developing fine-tuning strategies that more effectively strengthen model knowledge.

💡 Research Summary

The paper investigates how supervised fine‑tuning (SFT) influences the factual knowledge stored in large language models (LLMs), a topic that has received relatively little quantitative attention despite the widespread use of SFT to adapt LLMs to downstream tasks. The authors focus on closed‑book question answering (CBQA) as a direct probe of internal knowledge, and they conduct a systematic series of experiments across five models from the LLaMA‑2 and LLaMA‑3 families (LLaMA‑2‑7B, ‑13B, ‑70B, LLaMA‑3‑8B, and LLaMA‑3‑70B).

Data Construction and Mastery Levels

The study builds on the ENTITYQUESTIONS dataset, which contains factual questions spanning 24 Wikipedia topics. For each question, the pre‑trained model’s ability to answer correctly is measured using a multi‑template completion approach. Based on the proportion of correctly completed knowledge points (R_Mk), the training data are divided into five “mastery” categories (D_M^train‑0 … D_M^train‑4). Category 0 contains questions the model cannot answer at all, while category 4 contains questions it almost always answers correctly. This categorization allows the authors to examine how the degree of prior knowledge in the fine‑tuning data affects knowledge retention and acquisition.

Experimental Setup

Three data scales are examined: 60, 240, and 1 920 samples per mastery category. For each combination of model, mastery level, and data size, the authors fine‑tune the model for one epoch using a batch size of 8, AdamW optimizer (learning rate 1e‑5), and cosine learning‑rate scheduling. Prompt templates are kept uniform across training and testing, and each experiment is repeated five times with different random samplings to report mean and variance. Evaluation is performed on both in‑domain (same topics as training) and out‑of‑domain (different topics) test sets, using accuracy as the primary metric.

Key Empirical Findings – Two Phenomena

-

Optimal Data Size is Small – Across all models, performance improves when the fine‑tuning set grows from 60 to 240 examples, but adding more data beyond 240 leads to a sharp decline. The best CBQA accuracy is consistently achieved with 240 samples; with 1 920 samples the accuracy can drop up to 14 % relative to the 240‑sample baseline. This effect is observed regardless of the mastery level, though the magnitude varies.

-

Mastery Level Drives Performance Variability – When the fine‑tuning set reaches the larger size (1 920), the choice of mastery level becomes critical. Models fine‑tuned on D_M^train‑0 (questions they previously did not know) suffer the steepest degradation, while those fine‑tuned on higher‑mastery data (e.g., D_M^train‑2 or D_M^train‑4) retain more of their original knowledge. The gap between the worst and best mastery categories can exceed 12 % in accuracy, which is 1.5× larger than the gap observed at the 240‑sample scale.

Token‑Level Analysis

To understand why larger data harms performance, the authors compute the Kullback‑Leibler (KL) divergence between token‑level logits of the fine‑tuned model and the original pre‑trained model. For small data (≤240), KL divergence decreases, indicating that fine‑tuning does not drastically alter the probability distribution and may even bring it closer to the pre‑trained state. However, at 1 920 samples KL divergence spikes, especially for mastery‑0 data, suggesting that the model’s internal representations are being overwritten in a way that harms factual recall.

Parameter‑Level Analysis and Restoration

The most striking contribution is the parameter‑level investigation. The authors rank all model parameters by the magnitude of their update during SFT. They then progressively restore the top‑k% of changed parameters back to their pre‑trained values, measuring CBQA accuracy after each restoration step. Surprisingly, restoring up to 90 % of the most changed parameters does not degrade performance; in many cases it improves accuracy by more than 10 %. This demonstrates that the majority of SFT‑induced updates are “unnecessary” for knowledge enhancement and may even be detrimental. The effect is strongest when fine‑tuning on low‑mastery data, where unnecessary updates are most abundant.

Implications and Recommendations

The findings overturn the common assumption that more fine‑tuning data always yields better knowledge retention. Instead, the authors recommend:

- Use modest amounts of high‑quality, high‑mastery data (a few hundred examples) for knowledge‑centric fine‑tuning.

- Monitor KL divergence and parameter‑update magnitudes during training to detect when the model is beginning to over‑fit or overwrite useful knowledge.

- Consider “parameter restoration” or selective update strategies that keep the bulk of pre‑trained weights intact while only allowing a small, carefully chosen subset to adapt.

These insights provide a practical roadmap for developers who wish to adapt LLMs to specific domains without sacrificing the rich factual knowledge acquired during pre‑training. The paper also opens avenues for future research on efficient fine‑tuning algorithms that explicitly separate knowledge‑preserving updates from task‑specific adaptations.

Comments & Academic Discussion

Loading comments...

Leave a Comment