Visibility-Aware Language Aggregation for Open-Vocabulary Segmentation in 3D Gaussian Splatting

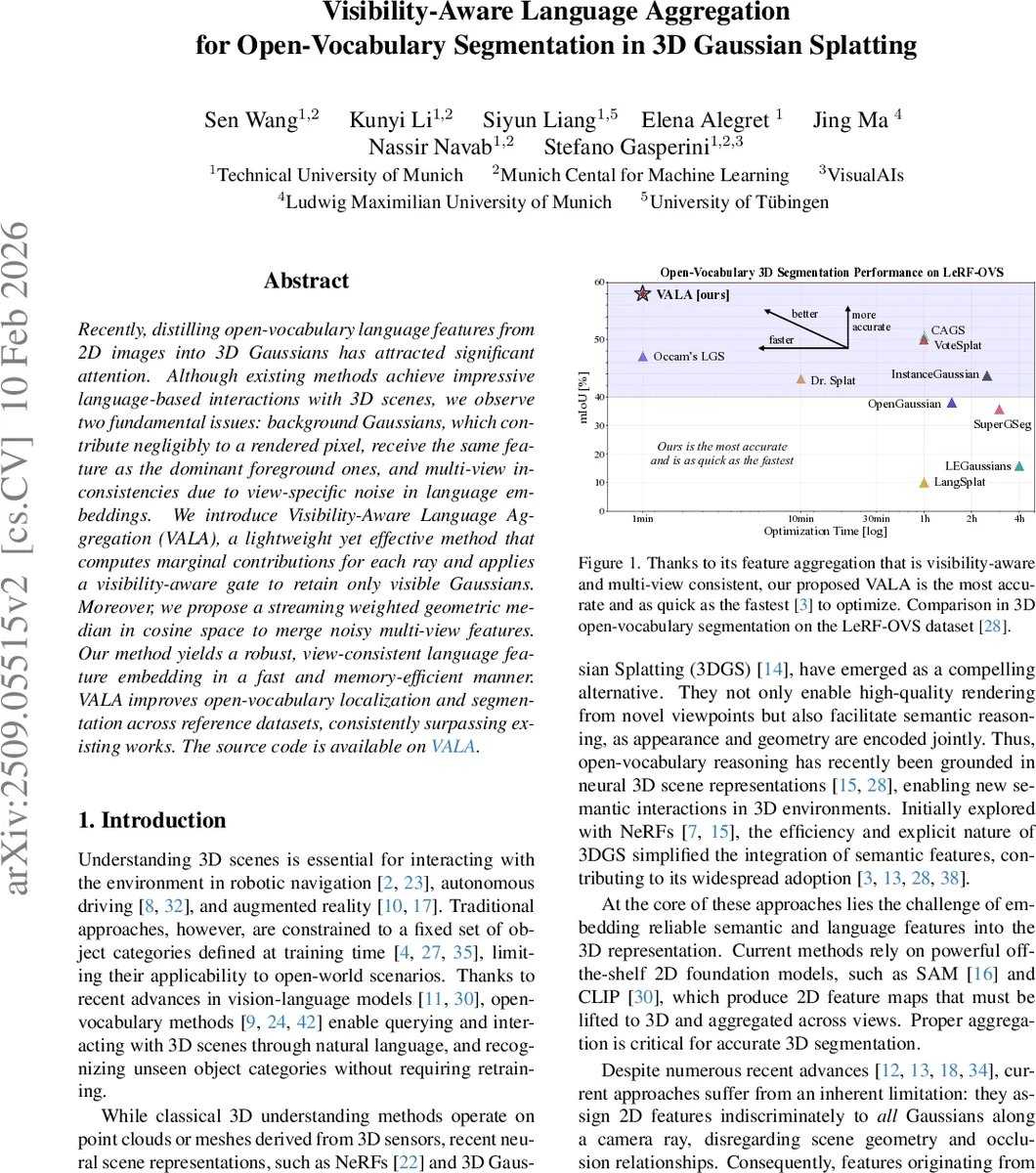

Recently, distilling open-vocabulary language features from 2D images into 3D Gaussians has attracted significant attention. Although existing methods achieve impressive language-based interactions of 3D scenes, we observe two fundamental issues: background Gaussians contributing negligibly to a rendered pixel get the same feature as the dominant foreground ones, and multi-view inconsistencies due to view-specific noise in language embeddings. We introduce Visibility-Aware Language Aggregation (VALA), a lightweight yet effective method that computes marginal contributions for each ray and applies a visibility-aware gate to retain only visible Gaussians. Moreover, we propose a streaming weighted geometric median in cosine space to merge noisy multi-view features. Our method yields a robust, view-consistent language feature embedding in a fast and memory-efficient manner. VALA improves open-vocabulary localization and segmentation across reference datasets, consistently surpassing existing works. More results are available at https://vala3d.github.io

💡 Research Summary

The paper tackles a pressing problem in open‑vocabulary 3D scene understanding: how to transfer rich language‑grounded features from 2‑D foundation models (e.g., CLIP, SAM) into a 3‑D Gaussian Splatting (3DGS) representation without incurring semantic leakage and multi‑view inconsistency. Existing pipelines either assign the same 2‑D embedding to every Gaussian intersected by a camera ray or rely on clustering and contrastive objectives that are computationally heavy and still vulnerable to noisy 2‑D cues. Consequently, background Gaussians that barely contribute to a rendered pixel receive the same semantic supervision as foreground Gaussians, and the same object can be represented by divergent embeddings across different viewpoints (semantic drift).

VALA (Visibility‑Aware Language Aggregation) introduces two complementary mechanisms to resolve these issues while preserving the training‑free, fast, and memory‑efficient nature of 3DGS.

-

Visibility‑Aware Gating (VAG).

- For each camera ray r passing through pixel u, the standard 3DGS rendering equations provide per‑Gaussian opacity αᵢ(r) and front‑to‑back transmittance Tᵢ(r). Their product wᵢ(r)=αᵢ(r)·Tᵢ(r) quantifies the marginal contribution of Gaussian i to the final pixel color.

- VALA aggregates these contributions into a total visibility mass S_tot = Σᵢ wᵢ(r). It then selects a minimal prefix of Gaussians (sorted by descending wᵢ) that accounts for a target fraction τ_view (empirically set between 0.5 and 0.75) of S_tot. This “mass‑coverage” step ensures that only Gaussians that meaningfully affect the pixel are considered.

- To guard against numerical noise, an absolute floor τ_abs is applied, and a quantile‑based cap τ_sq (the (1‑q)‑quantile of the wᵢ distribution) further trims the set. The resulting gated set G_vis contains only visible, high‑impact Gaussians. Language embeddings are then propagated only to Gaussians in G_vis, preventing background leakage.

-

Streaming Weighted Cosine Median for Multi‑View Aggregation.

- Features extracted from multiple views are first L2‑normalized to lie on the unit hypersphere. Direct averaging in Euclidean space can blur outliers but also amplifies view‑specific noise.

- VALA formulates a robust aggregation as the weighted geometric median under cosine distance: minimize Σ_s w_s·(1‑cos(f, f_s)). This objective is non‑smooth, but the authors derive a streaming gradient‑based update that approximates the median without storing all view features simultaneously. At each step, the current estimate c_t is nudged toward the new view feature f_{t+1} with a step size proportional to the weighted cosine similarity, yielding an online, O(1) memory algorithm.

- The resulting embedding is resilient to outliers and yields a view‑consistent representation for each Gaussian.

Experimental Validation.

VALA is evaluated on two open‑vocabulary 3D segmentation benchmarks: LeRF‑OVS (a synthetic dataset with dense language annotations) and ScanNet‑v2 (real indoor scans). The authors compare against state‑of‑the‑art methods such as Occam’s LGS, Dr.Splat, LangSplat, and various clustering‑based pipelines. Metrics include mean Intersection‑over‑Union (mIoU), mean Average Precision (mAP), and language‑to‑3D query accuracy. VALA consistently outperforms baselines, achieving 3–5 percentage‑point gains in mIoU while keeping optimization time under one minute and memory usage comparable to the fastest methods. Qualitative results demonstrate that fine‑grained objects (e.g., “cup on a table”) are correctly localized, whereas prior methods often smear foreground semantics onto supporting surfaces.

Strengths and Contributions.

- Principled use of rendering physics: By leveraging the exact per‑ray contribution wᵢ, VALA aligns semantic supervision with true visual influence, a step that prior works ignored.

- Robust multi‑view fusion: The streaming cosine median provides a lightweight yet effective way to suppress view‑specific noise without costly clustering or contrastive training.

- Training‑free pipeline: VALA does not require per‑scene optimization or additional neural modules, preserving the speed and simplicity of 3DGS.

- Broad applicability: The method can be plugged into any 3DGS pipeline that already computes per‑ray contributions, making it a versatile enhancement for robotics, AR/VR, and autonomous navigation.

Limitations and Future Directions.

- Hyper‑parameters (τ_view, τ_abs, q) are set empirically per dataset; an adaptive scheme would improve usability.

- The streaming median is an approximation; in scenes with extreme view noise, residual semantic drift may persist. More sophisticated non‑convex solvers or hierarchical median schemes could further tighten consistency.

- Current experiments focus on static scenes; extending VALA to dynamic environments (moving objects, changing lighting) would require incremental updates to visibility scores.

Impact.

VALA bridges a critical gap between high‑fidelity neural rendering and open‑vocabulary semantic understanding. By ensuring that only geometrically visible Gaussians receive language supervision and by consolidating multi‑view cues into a robust embedding, it delivers a practical, scalable solution for real‑time 3D perception tasks that demand both visual fidelity and semantic flexibility. The approach is poised to become a standard component in next‑generation 3D perception stacks for embodied AI.

Comments & Academic Discussion

Loading comments...

Leave a Comment