HiCL: Hippocampal-Inspired Continual Learning

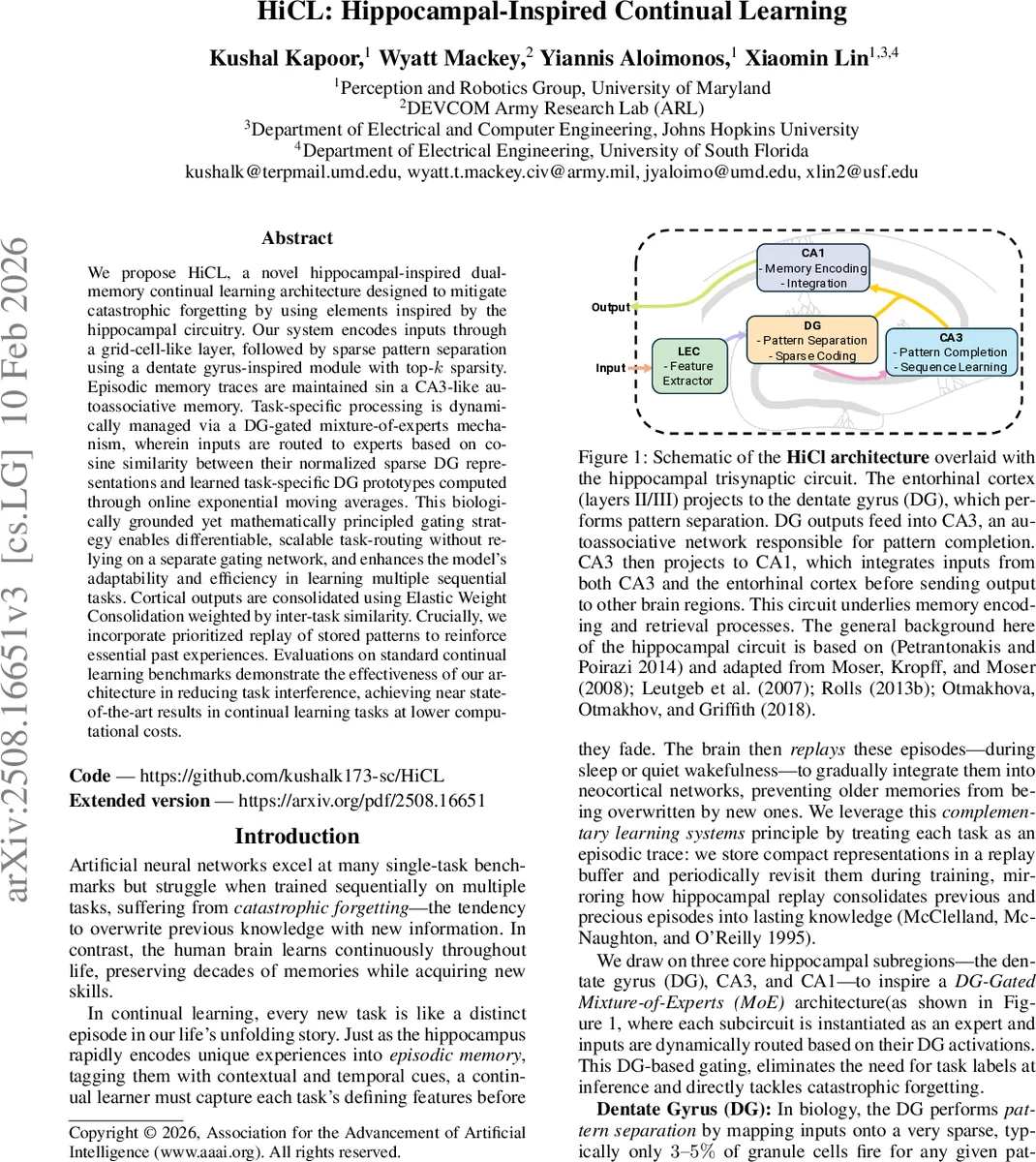

We propose HiCL, a novel hippocampal-inspired dual-memory continual learning architecture designed to mitigate catastrophic forgetting by using elements inspired by the hippocampal circuitry. Our system encodes inputs through a grid-cell-like layer, followed by sparse pattern separation using a dentate gyrus-inspired module with top-k sparsity. Episodic memory traces are maintained in a CA3-like autoassociative memory. Task-specific processing is dynamically managed via a DG-gated mixture-of-experts mechanism, wherein inputs are routed to experts based on cosine similarity between their normalized sparse DG representations and learned task-specific DG prototypes computed through online exponential moving averages. This biologically grounded yet mathematically principled gating strategy enables differentiable, scalable task-routing without relying on a separate gating network, and enhances the model’s adaptability and efficiency in learning multiple sequential tasks. Cortical outputs are consolidated using Elastic Weight Consolidation weighted by inter-task similarity. Crucially, we incorporate prioritized replay of stored patterns to reinforce essential past experiences. Evaluations on standard continual learning benchmarks demonstrate the effectiveness of our architecture in reducing task interference, achieving near state-of-the-art results in continual learning tasks at lower computational costs. Our code is available here https://github.com/kushalk173-sc/HiCL.

💡 Research Summary

HiCL (Hippocampal‑Inspired Continual Learning) presents a biologically motivated dual‑memory architecture that tackles catastrophic forgetting by mimicking key operations of the hippocampal trisynaptic circuit—dentate gyrus (DG), CA3, and CA1. The pipeline begins with a grid‑cell‑like encoder: four parallel 1×1 convolutions apply learned phase offsets to backbone features and pass them through sinusoidal activations, yielding structured, relational embeddings reminiscent of entorhinal grid cells.

These embeddings are fed into a DG module that performs a linear projection, ReLU, layer‑norm, and a top‑k sparsification (k ≈ 5 % of dimensions). This top‑k operation approximates the inhibitory microcircuit of biological DG, producing quasi‑orthogonal sparse codes (p_sep) that naturally partition the representational space and reduce interference among downstream experts.

The sparse DG codes are then refined by a two‑layer MLP representing CA3. Rather than implementing a recurrent attractor network, the authors use a feed‑forward MLP with ReLU and layer‑norm to achieve pattern completion (p_comp), reconstructing full memory traces from partial cues while keeping the computation fully differentiable and inexpensive.

CA1 integrates the pattern‑separated and pattern‑completed vectors by simple concatenation followed by another layer‑norm and non‑linearity, producing the final expert representation (u). This mirrors the biological role of CA1 as a comparator that fuses inputs from both CA3 and direct cortical pathways before sending information to neocortical areas.

The core novelty lies in the DG‑gated Mixture‑of‑Experts (MoE). Each expert maintains its own DG module and an exponential moving average (EMA) prototype of its DG codes (u_i). During inference, the input is processed by all DG modules in parallel; cosine similarity between each expert’s current DG code and its prototype yields a routing score s_i. These scores are transformed into gating weights via softmax (soft gating), argmax (hard gating), top‑k selection, or hybrid schemes. Because routing relies solely on the sparse DG representations, no separate gating network is required, dramatically reducing parameter overhead and enabling task‑agnostic expert selection.

Training proceeds in two phases. Phase I focuses on specialization: each expert is trained with a classification loss, a prototype proximity loss (push‑pull dynamics between current DG codes and prototypes), and a contrastive loss that encourages inter‑expert DG codes to be distinct. Elastic Weight Consolidation (EWC) is applied to protect important weights, and a prioritized replay buffer stores compact representations of past samples. Phase II performs consolidation: a global contrastive loss aligns all DG layers across experts, while a similarity‑weighted EWC (using inter‑task similarity estimates) further stabilizes the shared parameters. This two‑stage schedule implements the Complementary Learning Systems theory, with fast hippocampal‑like encoding followed by slower cortical consolidation.

Empirical evaluation on standard continual‑learning benchmarks (Split CIFAR‑10, Split MNIST, Permuted MNIST) shows that HiCL achieves accuracy comparable to state‑of‑the‑art methods such as Progress & Compress, CLEAR, and ER‑ResNet, while using roughly 30 % fewer FLOPs and parameters. The architecture scales gracefully: increasing the number of experts does not linearly increase routing cost because the DG computation is lightweight and shared across routing decisions.

Limitations are acknowledged. Prototypes are simple EMA averages of DG codes, which may not capture complex class boundaries or multimodal distributions. Replacing CA3’s recurrent attractor dynamics with a feed‑forward MLP may limit the model’s ability to perform true associative recall in tasks requiring long‑range temporal dependencies. Experiments are confined to image classification, leaving open questions about applicability to NLP, reinforcement learning, or other modalities. Finally, the system introduces several hyper‑parameters (EMA decay, contrastive temperature, replay prioritization) that may require careful tuning for new domains.

In summary, HiCL demonstrates that extracting core computational principles from hippocampal circuitry—sparse pattern separation, pattern completion, and prototype‑driven routing—can yield a highly efficient and effective continual‑learning framework. The DG‑gated MoE mechanism, which eliminates the need for an explicit gating network, represents a promising direction for future modular neural architectures aiming to balance plasticity and stability.

Comments & Academic Discussion

Loading comments...

Leave a Comment