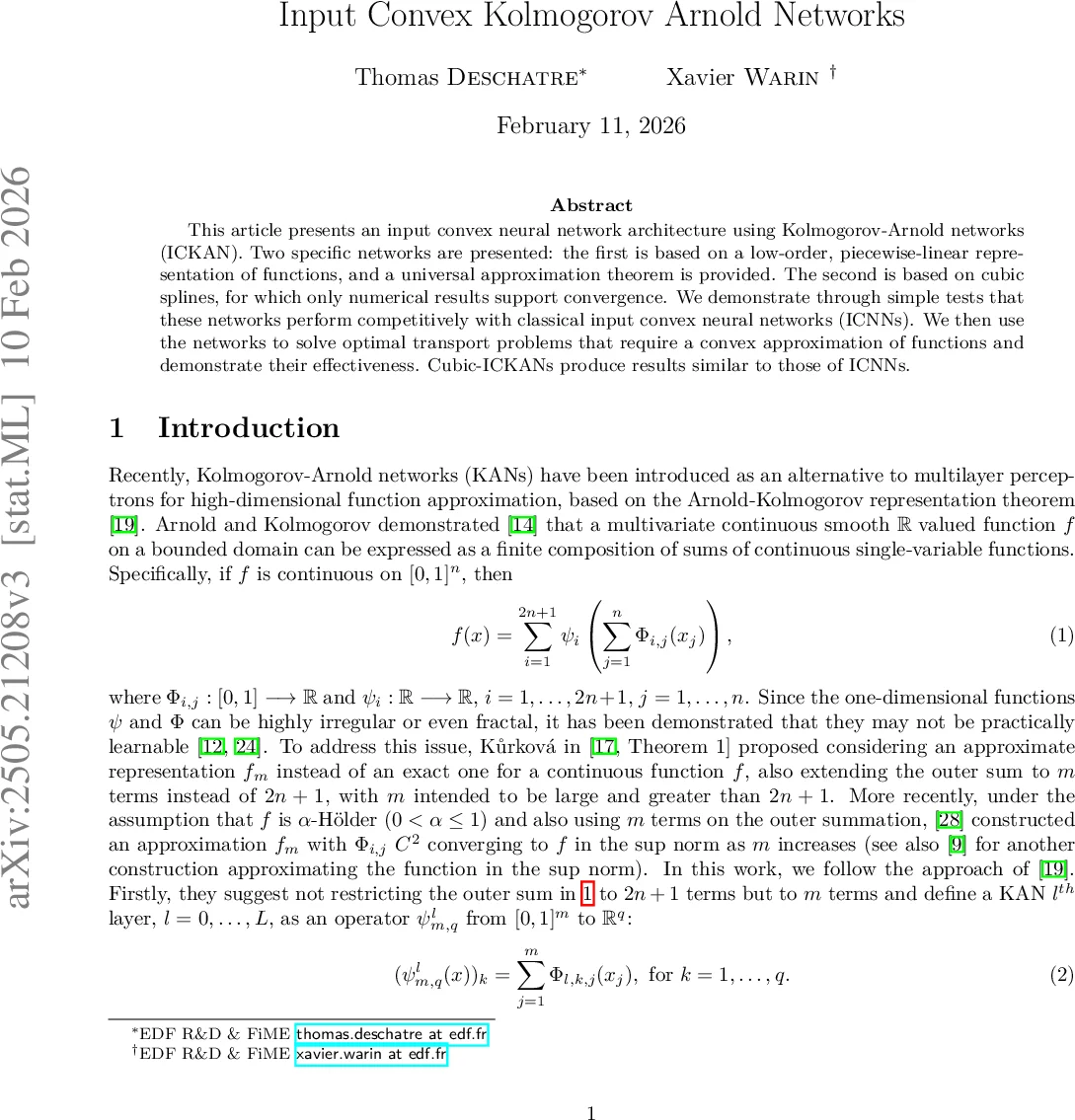

Input Convex Kolmogorov Arnold Networks

This article presents an input convex neural network architecture based on Kolmogorov-Arnold networks (ICKAN). Two specific networks are presented: the first is based on a low-order, piecewise-linear representation of functions, for which a universal approximation theorem is provided. The second is based on cubic splines, for which convergence is supported only by numerical results. We demonstrate on simple tests that these networks perform competitively with classical input convex neural networks (ICNNs). In a second part, we use the networks to solve optimal transport problems that require a convex approximation of functions and demonstrate their effectiveness. Comparisons with ICNNs show that cubic ICKANs produce results similar to those of classical ICNNs.

💡 Research Summary

This paper introduces Input Convex Kolmogorov‑Arnold Networks (ICKAN), a novel family of neural architectures that combine the universal representation power of Kolmogorov‑Arnold Networks (KAN) with the convexity guarantees of Input‑Convex Neural Networks (ICNN). The authors propose two concrete instantiations. The first, P1‑ICKAN, uses piecewise‑linear (P1) approximations for the one‑dimensional inner functions of the KAN representation. Convexity is enforced by constraining the differences of successive slopes to be non‑negative via a max operator, and by requiring all bias‑like parameters to be non‑negative. The second, Cubic‑ICKAN, replaces the linear basis with Hermite cubic splines, allowing a smoother representation of both the function and its gradient. Convexity of the spline is ensured by imposing monotonicity on the spline’s first derivative through a combination of max and sigmoid functions. Both architectures can operate with a fixed uniform grid or with an adaptive grid whose interior knot positions are learned jointly with the other parameters.
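To make the P1 construction concrete, here is a minimal NumPy sketch of a two-layer P1 network on a fixed uniform grid. It uses a hinge-basis parameterization, g(t) = b + s₀t + Σ_k δ_k max(0, t − t_k) with δ_k = max(0, θ_k), which is one standard way to obtain non-decreasing slopes through a max operator. Whether this matches the authors' exact parameterization is an assumption, as are all names (`convex_p1`, `p1_layer`, `make_net`), the random initialization, and the choice to clip the first slope at zero in the second layer so that its univariate functions are non-decreasing. Rescaling hidden values onto the grid is omitted, and there is no training loop.

```python
import numpy as np

def convex_p1(x, knots, b, s0_raw, theta, nondecreasing=False):
    """Convex piecewise-linear function evaluated on a 1-D array x.

    g(t) = b + s0 * t + sum_k delta_k * max(0, t - t_k), delta_k = max(0, theta_k).
    Slopes s0, s0 + delta_1, s0 + delta_1 + delta_2, ... never decrease, so g is convex.
    With nondecreasing=True, s0 is clipped at 0, so every slope is >= 0.
    """
    delta = np.maximum(theta, 0.0)                           # non-negative slope increments
    s0 = max(s0_raw, 0.0) if nondecreasing else s0_raw
    hinges = np.maximum(x[:, None] - knots[None, :], 0.0)    # shape (n, K)
    return b + s0 * x + hinges @ delta

def p1_layer(X, knots, B, S0, Theta, nondecreasing):
    """KAN-style layer: output j is sum_i g_ij(X[:, i]) with convex univariate g_ij."""
    n, d_in = X.shape
    d_out = B.shape[1]
    out = np.zeros((n, d_out))
    for i in range(d_in):
        for j in range(d_out):
            out[:, j] += convex_p1(X[:, i], knots, B[i, j], S0[i, j],
                                   Theta[i, j], nondecreasing)
    return out

def make_net(d_in, width, n_knots, rng):
    """Two-layer P1 network with random parameters (hypothetical setup, untrained)."""
    knots = np.linspace(0.0, 1.0, n_knots + 2)[1:-1]         # fixed uniform interior grid
    def params(a, c):
        return (rng.normal(size=(a, c)), rng.normal(size=(a, c)),
                rng.normal(size=(a, c, n_knots)))
    p1, p2 = params(d_in, width), params(width, 1)
    def net(X):
        H = p1_layer(X, knots, *p1, nondecreasing=False)      # each H[:, j] is convex in X
        return p1_layer(H, knots, *p2, nondecreasing=True)[:, 0]  # convex, non-decreasing outer maps
    return net

rng = np.random.default_rng(0)
net = make_net(d_in=3, width=4, n_knots=8, rng=rng)
Xa = rng.uniform(size=(500, 3))
Xb = rng.uniform(size=(500, 3))
# Midpoint convexity: f((a + b) / 2) <= (f(a) + f(b)) / 2 on every sampled pair.
gap = 0.5 * (net(Xa) + net(Xb)) - net(0.5 * (Xa + Xb))
print(gap.min() >= -1e-9)
```

The midpoint check is only an empirical sanity test on random pairs; convexity itself follows from the construction and holds for any parameter values, which is what allows the constraints to be imposed through unconstrained parameters and a max operator during training.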

The theoretical contribution centers on the piecewise‑linear variant. Two universal approximation theorems are proved: one for the adaptive‑grid case (Theorem 2.1) and one for the fixed‑grid case (Theorem 2.2). Both state that, for any Lipschitz convex function on the unit hypercube, there exists a sequence of P1‑ICKANs (with suitably chosen depth, width, and number of grid points) whose sup‑norm error can be made arbitrarily small. The proofs rely on two facts: each layer computes sums of convex one‑dimensional functions, and the max‑based slope constraints keep the network convex under composition (composing convex functions preserves convexity when the outer functions are also non‑decreasing). No convergence guarantees are provided for the cubic spline version; its validity is demonstrated only through numerical experiments.
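The composition argument can be written out in a few lines. The derivation below is the standard one, given as a sketch rather than as the paper's exact statement: it assumes that every univariate function in layers after the first is non-decreasing as well as convex (for a P1 function, a non-negative first slope). The symbols φ_ij, g_j, h_j, F, δ_k and λ are introduced here for illustration.

```latex
\begin{align*}
g(t) &= b + s_0 t + \sum_{k} \delta_k\,(t - t_k)_+, \quad \delta_k = \max(0,\theta_k) \ge 0
  && \text{(convex; non-decreasing if } s_0 \ge 0)\\
h_j(x) &= \sum_{i=1}^{d} \phi_{ij}(x_i), \quad \phi_{ij}\ \text{convex}
  && \Rightarrow\ h_j\ \text{convex}\\
g_j\big(h_j(\lambda x + (1-\lambda) y)\big)
  &\le g_j\big(\lambda h_j(x) + (1-\lambda) h_j(y)\big)
  && (h_j\ \text{convex},\ g_j\ \text{non-decreasing})\\
  &\le \lambda\, g_j\big(h_j(x)\big) + (1-\lambda)\, g_j\big(h_j(y)\big)
  && (g_j\ \text{convex})
\end{align*}
```

Summing over j shows that F(x) = Σ_j g_j(h_j(x)) is convex for every λ ∈ [0, 1]; without the monotonicity of g_j, the first inequality can fail.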

Empirically, the authors evaluate three families of tasks. First, they approximate the synthetic convex function f(x)=∑_{i=1}^d (|x_i|+|1−x_i|)+xᵀAx, with A positive definite, in dimensions d=3 and d=7. Training uses Adam (learning rate 10⁻³), batch size 1,000 and 200,000 iterations. The baseline is the best ICNN found over a hyper‑parameter grid (ReLU activations, 2 to 5 layers, up to 320 neurons per layer). P1‑ICKAN with an adaptive grid matches the best ICNN in d=3 and outperforms it in d=7, while the non‑adaptive version is slightly worse. Second, a two‑dimensional control toy problem shows that both ICKAN variants converge reliably, with the cubic version providing smoother gradient estimates that can be useful for policy‑gradient methods. Third, the networks are applied to optimal transport: the Brenier potential φ*, a convex function whose gradient gives the optimal transport map, is learned from samples of two distributions. Cubic‑ICKAN achieves an error comparable to that of the ICNN while requiring roughly 15% less computation time, illustrating the benefit of a higher‑order representation when gradients are needed.
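The synthetic benchmark target is easy to reproduce for experimentation. The NumPy sketch below builds f and draws one batch of 1,000 training points for d = 3 and d = 7. The summary does not say how A is generated or where inputs are sampled, so both are assumptions here: A = MMᵀ + 0.1 I (positive definite by construction) and uniform sampling on the hypothetical box [−1, 2]^d. The box matters: for x in [0, 1]^d each term |x_i| + |1 − x_i| equals 1, so the absolute-value part reduces to the constant d and its kinks only appear outside the unit cube. The helper names `make_target` and `sample_batch` are hypothetical.

```python
import numpy as np

def make_target(d, rng):
    """Synthetic convex target f(x) = sum_i (|x_i| + |1 - x_i|) + x^T A x.

    A = M M^T + 0.1 I is positive definite by construction (assumed recipe).
    """
    M = rng.normal(size=(d, d))
    A = M @ M.T + 0.1 * np.eye(d)
    def f(X):  # X has shape (n, d); returns shape (n,)
        kinks = np.sum(np.abs(X) + np.abs(1.0 - X), axis=1)
        quad = np.einsum("ni,ij,nj->n", X, A, X)   # row-wise x^T A x
        return kinks + quad
    return f, A

def sample_batch(f, d, batch_size, rng, low=-1.0, high=2.0):
    """Uniform samples on the box [low, high]^d (the box is a hypothetical choice)."""
    X = rng.uniform(low, high, size=(batch_size, d))
    return X, f(X)

rng = np.random.default_rng(0)
for d in (3, 7):
    f, A = make_target(d, rng)
    X, y = sample_batch(f, d, batch_size=1000, rng=rng)
    print(d, X.shape, y.shape, np.linalg.eigvalsh(A).min() > 0)
```

Sampling a fresh batch at every iteration, as in this sketch, gives an effectively unlimited training set, which is a common setup for this kind of function-approximation benchmark.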

The paper also extends the framework to partial convexity, introducing Partial ICKAN (PICKAN) and comparing it with Partial ICNN (PICNN). Experiments confirm that PICKAN enforces convexity only with respect to the designated input coordinates and performs on par with PICNN.

Strengths of the work include a clear theoretical foundation for the linear variant, the innovative use of adaptive grids to improve approximation efficiency, and a thorough experimental comparison with state‑of‑the‑art ICNNs across several domains. Limitations are the lack of a convergence theorem for the cubic spline variant, the relatively high computational overhead inherent to KAN‑style architectures, and the focus on low‑dimensional synthetic benchmarks, leaving open questions about scalability to high‑dimensional real‑world problems.

In conclusion, ICKAN offers a promising new direction for convex function approximation, bridging the gap between the expressive KAN framework and the convexity‑preserving design of ICNNs. Future work should aim at establishing rigorous convergence results for the spline version, optimizing the implementation for large‑scale settings (e.g., via low‑rank approximations or efficient kernel tricks), and testing the approach on demanding applications such as high‑dimensional optimal transport, reinforcement learning, and convex‑constrained optimization.
