ReaMOT: A Benchmark and Framework for Reasoning-based Multi-Object Tracking

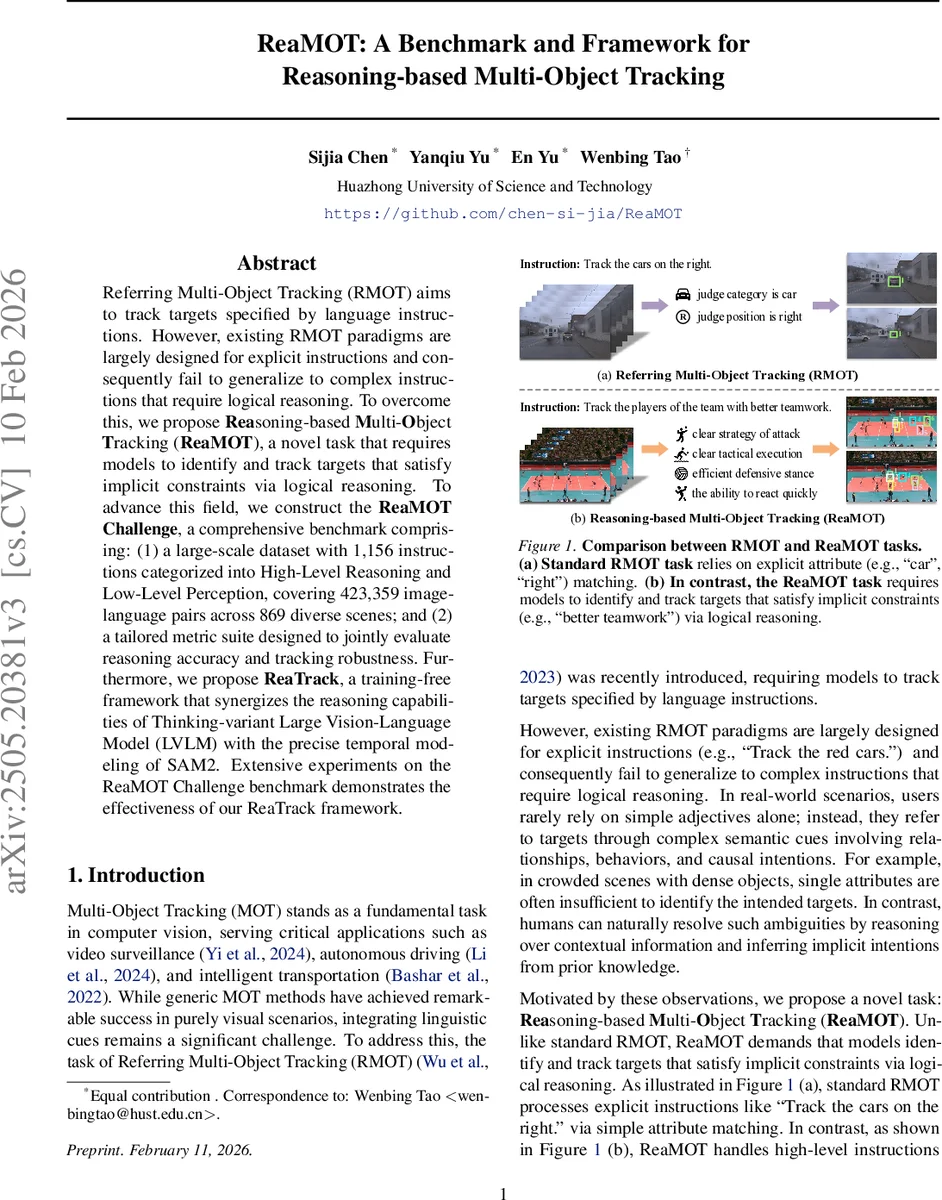

Referring Multi-Object Tracking (RMOT) aims to track targets specified by language instructions. However, existing RMOT paradigms are largely designed for explicit instructions and consequently fail to generalize to complex instructions that require logical reasoning. To overcome this, we propose Reasoning-based Multi-Object Tracking (ReaMOT), a novel task that requires models to identify and track targets that satisfy implicit constraints via logical reasoning. To advance this field, we construct the ReaMOT Challenge, a comprehensive benchmark comprising: (1) a large-scale dataset with 1,156 instructions categorized into High-Level Reasoning and Low-Level Perception, covering 423,359 image-language pairs across 869 diverse scenes; and (2) a tailored metric suite designed to jointly evaluate reasoning accuracy and tracking robustness. Furthermore, we propose ReaTrack, a training-free framework that synergizes the reasoning capabilities of Thinking-variant Large Vision-Language Model (LVLM) with the precise temporal modeling of SAM2. Extensive experiments on the ReaMOT Challenge benchmark demonstrates the effectiveness of our ReaTrack framework.

💡 Research Summary

The paper introduces ReaMOT, a new benchmark and task that extends Referring Multi‑Object Tracking (RMOT) from handling explicit, attribute‑based instructions to processing complex, implicit language commands that require logical reasoning. Existing RMOT methods excel at matching simple descriptors (e.g., “red car”) but fail when the instruction encodes higher‑level concepts such as “the players of the team with better teamwork.” To fill this gap, the authors define the ReaMOT task, which demands that a model infer hidden constraints, reason over contextual cues, and then consistently track the identified objects across video frames.

To support this task, the ReaMOT Challenge dataset is constructed from 12 publicly available MOT and video datasets (Argoverse‑HD, DanceTrack, GMOT‑40, KITTI, etc.), yielding 869 diverse scenes, 423 359 image‑language pairs, and 1 156 language instructions. Instructions are split into High‑Level Reasoning (868) and Low‑Level Perception (288) categories. A carefully designed attribute taxonomy—covering spatial position, motion, costume, human attributes, object attributes, specific nouns, auxiliary modifiers, and others—guides the annotation process. The pipeline consists of three stages: (1) manual pre‑selection of key frames containing multiple objects with both shared and distinguishing features; (2) GPT‑4o‑assisted feature analysis where bounding‑box annotated frames are fed to the language model with prompts derived from the attribute taxonomy, producing detailed descriptions of appearance, motion, relationships, and discriminative traits; (3) human verification and synthesis of these descriptions into final language instructions. This hybrid human‑AI workflow ensures high‑quality, reasoning‑intensive instructions.

A novel metric suite is proposed to evaluate both reasoning correctness and tracking robustness. In addition to traditional MOT metrics (MOTA, IDF1), the authors introduce Reasoning‑HO Accuracy (RHO_TA), Reasoning‑IDF1 (RIDF1), Recall (RRcll), Precision (RPrcn), and a combined ReaMOT‑A (RMO_TA) that jointly reflect semantic alignment and temporal consistency.

The core technical contribution is ReaTrack, a training‑free framework that leverages the reasoning power of a “Thinking‑variant” Large Vision‑Language Model (LVLM) together with the temporal propagation capabilities of SAM2. ReaTrack comprises three synergistic modules:

- Reasoning‑Aware Detection – The LVLM receives the current frame and the natural‑language instruction, performs multi‑step logical inference, and outputs a set of candidate masks that semantically satisfy the instruction.

- Mask‑Based Temporal Propagation – SAM2 takes the LVLM‑generated masks from previous frames and propagates them forward, producing motion priors and robust visual cues for each candidate across time.

- Reasoning‑Motion Association – A matching algorithm fuses the semantic scores from the LVLM with the motion predictions from SAM2, handling track lifecycle events (initiation, continuation, extrapolation, termination) while correcting mismatches via reasoning feedback.

Because each component relies on state‑of‑the‑art pretrained models, ReaTrack operates in a zero‑shot setting without any task‑specific fine‑tuning. Extensive experiments on the ReaMOT Challenge demonstrate that ReaTrack achieves a RHO_TA of 42.28 % on the High‑Level Reasoning subset—approximately six times higher than the best prior method—and outperforms baselines across all proposed metrics. Ablation studies confirm that removing the LVLM reasoning module drastically reduces performance, and that SAM2’s mask propagation is essential for maintaining track continuity.

The paper’s strengths lie in (i) defining a realistic, reasoning‑centric tracking problem; (ii) constructing a large, well‑annotated dataset through a rigorous human‑AI pipeline; (iii) proposing a comprehensive evaluation protocol that separates semantic reasoning from temporal tracking; and (iv) delivering a practical, training‑free system that sets new performance baselines. Limitations include heavy reliance on the LVLM’s inference quality (errors in reasoning propagate downstream), computational overhead of SAM2 for high‑resolution video, and the absence of end‑to‑end fine‑tuning experiments that could further boost performance.

Future directions suggested by the authors involve integrating LVLM and tracking modules into a joint learning framework, developing lightweight alternatives to SAM2 for real‑time deployment, and exploring graph‑based or cross‑modal attention mechanisms to capture more intricate social and tactical relationships. Overall, ReaMOT and ReaTrack open a promising research avenue at the intersection of language reasoning, vision, and temporal dynamics, pushing multi‑object tracking toward more human‑like understanding of complex instructions.

Comments & Academic Discussion

Loading comments...

Leave a Comment