MAPS: A Multilingual Benchmark for Agent Performance and Security

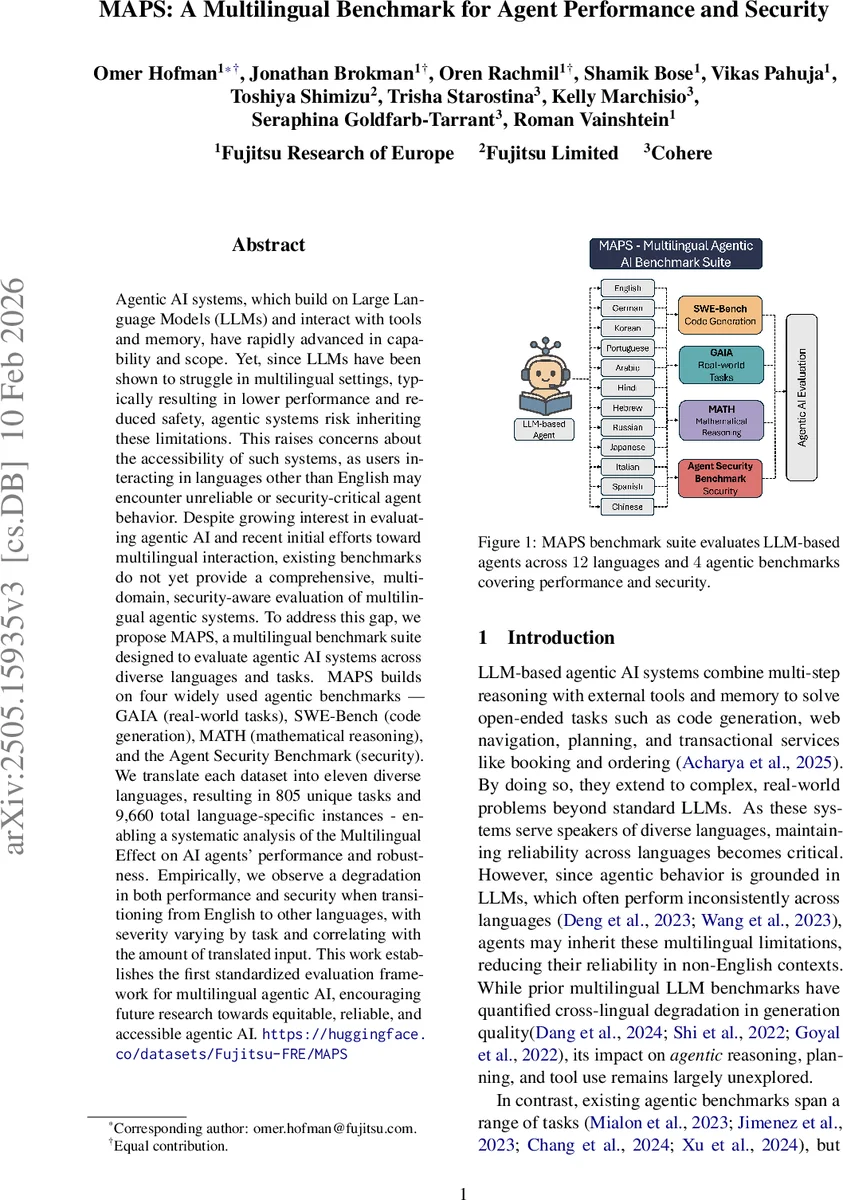

Agentic AI systems, which build on Large Language Models (LLMs) and interact with tools and memory, have rapidly advanced in capability and scope. Yet, since LLMs have been shown to struggle in multilingual settings, typically resulting in lower performance and reduced safety, agentic systems risk inheriting these limitations. This raises concerns about the accessibility of such systems, as users interacting in languages other than English may encounter unreliable or security-critical agent behavior. Despite growing interest in evaluating agentic AI and recent initial efforts toward multilingual interaction, existing benchmarks do not yet provide a comprehensive, multi-domain, security-aware evaluation of multilingual agentic systems. To address this gap, we propose MAPS, a multilingual benchmark suite designed to evaluate agentic AI systems across diverse languages and tasks. MAPS builds on four widely used agentic benchmarks - GAIA (real-world tasks), SWE-Bench (code generation), MATH (mathematical reasoning), and the Agent Security Benchmark (security). We translate each dataset into eleven diverse languages, resulting in 805 unique tasks and 9,660 total language-specific instances - enabling a systematic analysis of the Multilingual Effect on AI agents’ performance and robustness. Empirically, we observe a degradation in both performance and security when transitioning from English to other languages, with severity varying by task and correlating with the amount of translated input. This work establishes the first standardized evaluation framework for multilingual agentic AI, encouraging future research towards equitable, reliable, and accessible agentic AI. MAPS benchmark suite is publicly available at https://huggingface.co/datasets/Fujitsu-FRE/MAPS

💡 Research Summary

The paper introduces MAPS (Multilingual Agent Performance and Security), the first comprehensive benchmark designed to evaluate large‑language‑model (LLM)‑based agents across multiple languages and domains, with a particular focus on security. Recognizing that LLMs exhibit well‑documented performance drops and safety issues in non‑English settings, the authors argue that agentic systems, which rely on LLMs for planning, tool use, and memory, are likely to inherit these multilingual shortcomings. To fill the gap in systematic evaluation, MAPS extends four widely adopted agentic benchmarks—GAIA (real‑world assistant tasks), SWE‑Bench (software engineering and code generation), MATH (mathematical problem solving), and the Agent Security Benchmark (ASB, safety and adversarial testing)—into eleven typologically diverse languages: German, Spanish, Portuguese (Brazil), Japanese, Russian, Chinese, Italian, Arabic, Hebrew, Korean, and Hindi, in addition to English. The resulting suite comprises 805 unique tasks, each available in 12 languages, yielding 9,660 language‑specific instances.

The translation pipeline is a hybrid of Neural Machine Translation (NMT) and large‑language‑model (LLM) translation, adapted from Ki and Carpuat (2024). First, Google Translate provides a structural draft; then Cohere’s Command‑A model refines the draft for fluency and contextual accuracy. Automated adequacy and integrity checks filter out low‑quality outputs, after which a human‑expert verification stage validates a representative 25 % sample across all languages and task types. Human annotators rate adequacy, fluency, formatting preservation, and “answerability” on a 1‑5 Likert scale. The verification results show high quality: mean scores of 4.43 (adequacy), 4.59 (fluency), 4.75 (formatting), and an answerability rate of 94.2 %.

For evaluation, the authors run state‑of‑the‑art open‑source agents that were originally tuned on the English versions of each benchmark. No architectural changes are made; only the user‑facing prompts are translated. Performance metrics include exact or normalized answer match (GAIA, MATH), test‑case pass rate (SWE‑Bench), and success/failure rates for security attacks (ASB). Security metrics capture attack success rate and policy‑refusal rate.

Empirical findings reveal consistent degradation when moving from English to any other language. Overall performance drops range from 12 % to 18 % relative to English baselines, with the steepest declines observed in code‑generation (SWE‑Bench) and mathematical reasoning (MATH). The authors attribute this to subtle translation errors that corrupt code syntax, equation formatting, or tool‑call specifications, which in turn cause agents to mis‑invoke tools or produce syntactically invalid outputs. In the security domain, non‑English inputs increase attack success rates by 5 %–9 % and reduce refusal rates, indicating that policy‑enforcement mechanisms—largely trained on English prompts—are less effective for other languages. Languages with right‑to‑left scripts (Arabic, Hebrew) exhibit particularly pronounced policy‑violation spikes, suggesting tokenization and script‑specific challenges.

A key analysis shows a strong correlation between the proportion of non‑English tokens in the input and the magnitude of performance loss, highlighting that multilingual degradation is driven not merely by language switching but by the degree of linguistic mixing presented to the LLM. The study also notes that tasks requiring precise formatting (e.g., LaTeX equations, code snippets) are especially vulnerable, as any mis‑alignment in translation directly impacts downstream tool interactions.

The paper’s contributions are threefold: (1) the creation of MAPS, a multilingual, multi‑domain benchmark that integrates performance‑oriented and security‑oriented evaluation; (2) a rigorous translation and verification methodology that yields high‑quality multilingual instances; (3) an extensive empirical analysis that quantifies multilingual performance and safety gaps in current agentic systems, accompanied by actionable recommendations. The authors suggest future work on (a) improving multilingual tokenizers and pre‑training data to reduce the “cross‑lingual knowledge barrier,” (b) developing language‑agnostic policy‑enforcement layers or multilingual safety filters, (c) incorporating automatic language‑identification and on‑the‑fly translation modules within agents to normalize inputs before reasoning, and (d) expanding MAPS with additional languages and domains to further stress‑test emerging agents.

In summary, MAPS establishes a standardized, publicly available framework for assessing how multilingual contexts affect both the effectiveness and the security of LLM‑driven agents. By exposing concrete performance drops and heightened vulnerability across a wide linguistic spectrum, the benchmark underscores the urgent need for equitable, robust, and safe AI agents that serve users worldwide, regardless of language.

Comments & Academic Discussion

Loading comments...

Leave a Comment