MPA: Multimodal Prototype Augmentation for Few-Shot Learning

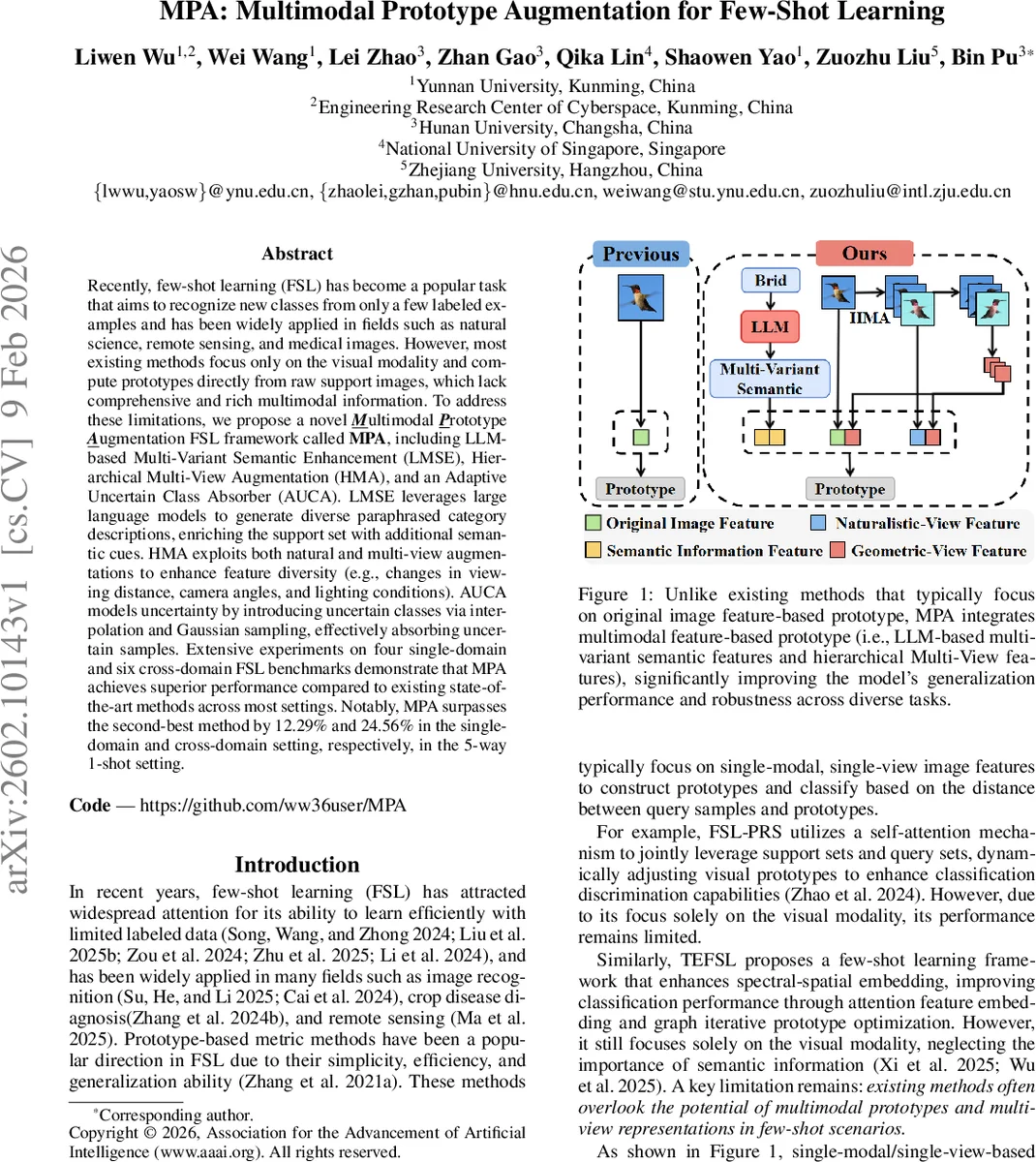

Recently, few-shot learning (FSL) has become a popular task that aims to recognize new classes from only a few labeled examples and has been widely applied in fields such as natural science, remote sensing, and medical images. However, most existing methods focus only on the visual modality and compute prototypes directly from raw support images, which lack comprehensive and rich multimodal information. To address these limitations, we propose a novel Multimodal Prototype Augmentation FSL framework called MPA, including LLM-based Multi-Variant Semantic Enhancement (LMSE), Hierarchical Multi-View Augmentation (HMA), and an Adaptive Uncertain Class Absorber (AUCA). LMSE leverages large language models to generate diverse paraphrased category descriptions, enriching the support set with additional semantic cues. HMA exploits both natural and multi-view augmentations to enhance feature diversity (e.g., changes in viewing distance, camera angles, and lighting conditions). AUCA models uncertainty by introducing uncertain classes via interpolation and Gaussian sampling, effectively absorbing uncertain samples. Extensive experiments on four single-domain and six cross-domain FSL benchmarks demonstrate that MPA achieves superior performance compared to existing state-of-the-art methods across most settings. Notably, MPA surpasses the second-best method by 12.29% and 24.56% in the single-domain and cross-domain setting, respectively, in the 5-way 1-shot setting.

💡 Research Summary

The paper addresses a fundamental limitation of most few‑shot learning (FSL) approaches: their exclusive reliance on visual information when constructing class prototypes. In many realistic scenarios—especially fine‑grained classification or cross‑domain transfer—visual cues alone are insufficient, leading to poor generalization. To overcome this, the authors propose a novel framework called Multimodal Prototype Augmentation (MPA) that enriches prototypes with three complementary sources of information: (1) semantic text generated by large language models (LLMs), (2) diverse visual perspectives obtained through hierarchical multi‑view augmentations, and (3) synthetic “uncertain” classes created by interpolating between existing class prototypes and sampling from a normal distribution.

LLM‑Based Multi‑Variant Semantic Enhancement (LMSE)

For each class name, an LLM (e.g., GPT‑4) is prompted to produce an original description plus four paraphrased variants. These textual variants are encoded with the CLIP text encoder, yielding a set of high‑dimensional semantic embeddings. By concatenating or averaging these embeddings with the visual embeddings of support images, the prototype gains rich linguistic context (attributes, functional cues, etc.) that is especially valuable when visual differences are subtle (e.g., bird species).

Hierarchical Multi‑View Augmentation (HMA)

The visual side is expanded by applying a hierarchy of augmentations. First, naturalistic transformations such as central cropping at multiple scales (120, 170, 200 px), rotations (45°, 90°, 180°, 270°, 315°), and color jitter (brightness, contrast, saturation, hue) simulate variations in viewpoint, illumination, and sensor noise. Second, geometric augmentations like horizontal flipping generate complementary views. Each augmented image is passed through the CLIP image encoder, producing a set of view‑specific feature vectors that are later aggregated with the original image features. This multi‑view pool dramatically increases intra‑class diversity while preserving label consistency, mitigating the over‑fitting risk inherent in few‑shot settings.

Adaptive Uncertain Class Absorber (AUCA)

To further improve robustness, AUCA constructs a synthetic “uncertain” class for each training episode. The method first computes cosine similarities between all class prototypes, normalizes them, and derives an adaptive interpolation weight λ based on the average inter‑class distance. Two sources of synthetic data are then mixed: (i) linear interpolation between two randomly selected class prototypes (with interpolation coefficient α ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment