Generalizing GNNs with Tokenized Mixture of Experts

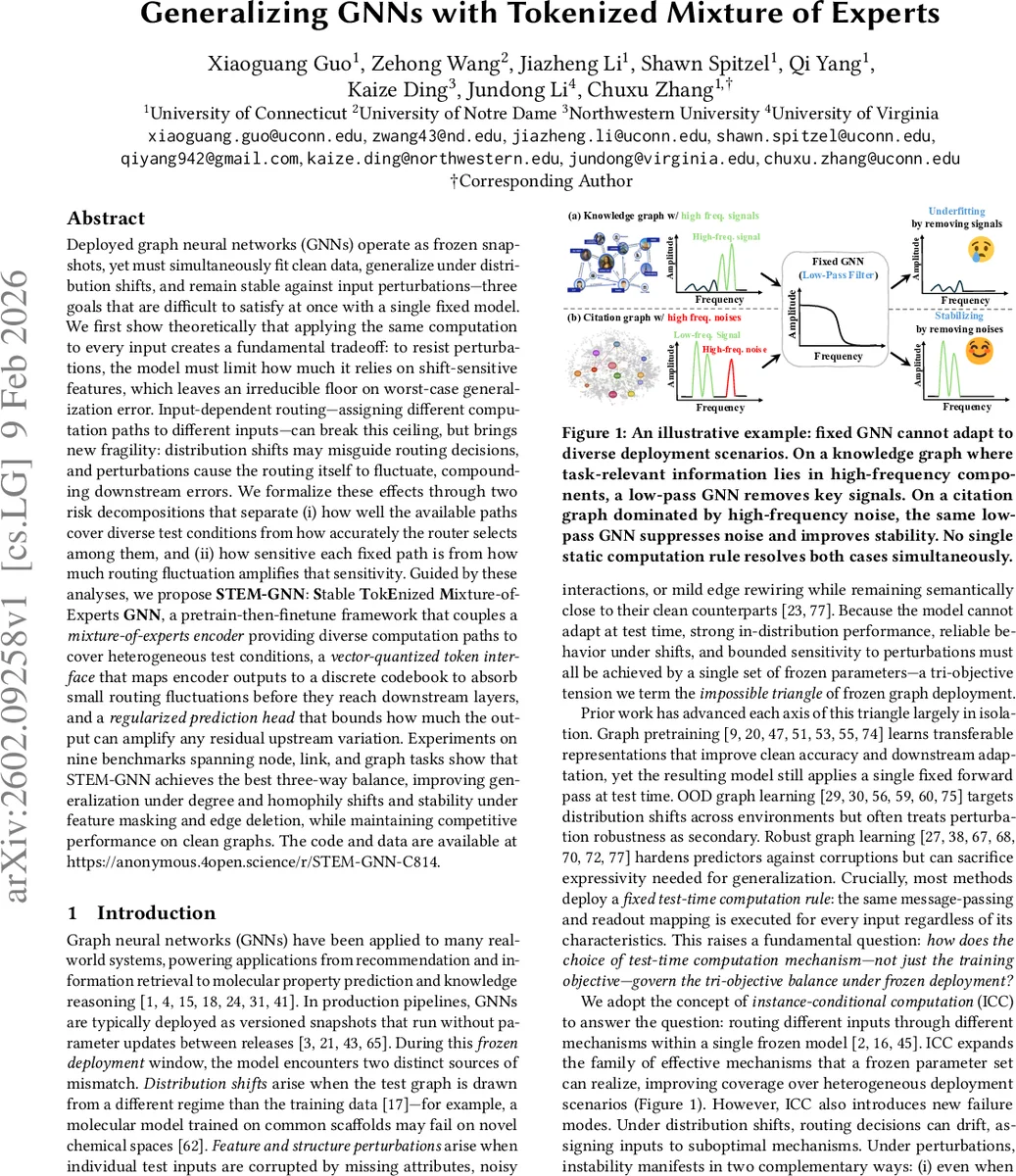

Deployed graph neural networks (GNNs) are frozen at deployment yet must fit clean data, generalize under distribution shifts, and remain stable to perturbations. We show that static inference induces a fundamental tradeoff: improving stability requires reducing reliance on shift-sensitive features, leaving an irreducible worst-case generalization floor. Instance-conditional routing can break this ceiling, but is fragile because shifts can mislead routing and perturbations can make routing fluctuate. We capture these effects via two decompositions separating coverage vs selection, and base sensitivity vs fluctuation amplification. Based on these insights, we propose STEM-GNN, a pretrain-then-finetune framework with a mixture-of-experts encoder for diverse computation paths, a vector-quantized token interface to stabilize encoder-to-head signals, and a Lipschitz-regularized head to bound output amplification. Across nine node, link, and graph benchmarks, STEM-GNN achieves a stronger three-way balance, improving robustness to degree/homophily shifts and to feature/edge corruptions while remaining competitive on clean graphs.

💡 Research Summary

The paper tackles a fundamental challenge in deploying graph neural networks (GNNs) as frozen models: they must simultaneously achieve high clean‑data accuracy, robust generalization across diverse test‑time distributions, and stability against input perturbations such as feature masking or edge rewiring. The authors first formalize this “impossible triangle” by defining three risks—clean fitting (Rₜ), worst‑environment out‑of‑distribution risk (Rₒₒd), and stability risk (Rₛₜₐb). They prove that under static inference, where a single message‑passing rule is applied to every input, a uniform stability budget forces the model to limit its reliance on high‑frequency (perturbation‑sensitive) components. This creates an irreducible lower bound β₁(α, ε) on Rₒₒd, showing that static GNNs cannot simultaneously minimize all three risks.

To break this barrier, the authors introduce instance‑conditional computation (ICC). ICC equips a frozen GNN with a routing function rθ(z) that selects, per‑instance, a computation path from a set of possible mechanisms, and an execution map F that runs the selected path. This expands the family of effective models available at deployment, allowing different inputs to rely on different high‑frequency components and thus reducing the worst‑case risk. However, ICC introduces a new fragility: routing decisions may drift under distribution shift or small perturbations, leading to (i) execution of a path that is itself sensitive to noise, or (ii) a change of path that amplifies downstream errors. The authors decompose ICC risk into (a) coverage vs. selection quality for OOD performance, and (b) base sensitivity vs. drift amplification for stability, providing a clear analytical framework.

Guided by this theory, they propose STEM‑GNN (Stable Tokenized Mixture‑of‑Experts GNN), a pre‑train‑then‑fine‑tune pipeline comprising three tightly coupled components:

-

MoE Encoder – a mixture‑of‑experts message‑passing backbone that learns a diverse set of expert modules under shared parameters. At inference, each node’s representation is a weighted combination of experts determined by the routing network, thereby expanding mechanism coverage without increasing the parameter count.

-

Vector‑Quantized (VQ) Token Interface – the continuous encoder outputs are discretized into a fixed codebook of tokens. Small perturbations that do not cross quantization boundaries produce zero change in the token, effectively absorbing low‑magnitude drift before it reaches the prediction head.

-

Lipschitz‑Regularized Head – a Frobenius‑norm penalty is applied to the final linear head to bound its Lipschitz constant. This limits how much any residual variation—whether from a token switch or from the encoder—can be amplified in the final output.

The combination yields a “coverage‑expansion + routing‑stabilization + sensitivity‑control” triad that directly addresses the three risks identified earlier.

Empirical evaluation spans nine benchmarks covering node classification, link prediction, and graph‑level classification. Compared with state‑of‑the‑art baselines (including static GNNs, pre‑training only methods, OOD‑focused adapters, and robust GNNs), STEM‑GNN consistently achieves:

- Improved OOD Generalization – notably under degree distribution shifts and varying homophily levels, where the MoE encoder selects paths better suited to the new structural regime.

- Higher Perturbation Robustness – feature masking, edge deletion, and random noise cause significantly smaller degradation in accuracy, thanks to the VQ token’s buffering effect and the Lipschitz‑controlled head.

- Competitive Clean Accuracy – on the original test graphs, STEM‑GNN matches or slightly exceeds the best baseline, demonstrating that robustness does not come at the expense of standard performance.

Ablation studies confirm that each component contributes uniquely: removing the MoE reduces OOD gains, dropping the VQ interface raises stability loss, and omitting Lipschitz regularization leads to amplified output variance under token switches. The paper also provides visualizations of routing distributions and token transition frequencies, illustrating that routing remains stable for most inputs while still adapting when necessary.

In summary, the work offers a rigorous theoretical justification for why static GNN inference cannot meet all deployment requirements, introduces a principled ICC framework, and delivers a practical system—STEM‑GNN—that simultaneously improves clean accuracy, out‑of‑distribution generalization, and perturbation robustness in frozen‑deployment scenarios. This advances the state of the art in robust graph learning and provides a blueprint for future models that must operate reliably under real‑world distribution shifts.

Comments & Academic Discussion

Loading comments...

Leave a Comment