RAPID: Risk of Attribute Prediction-Induced Disclosure in Synthetic Microdata

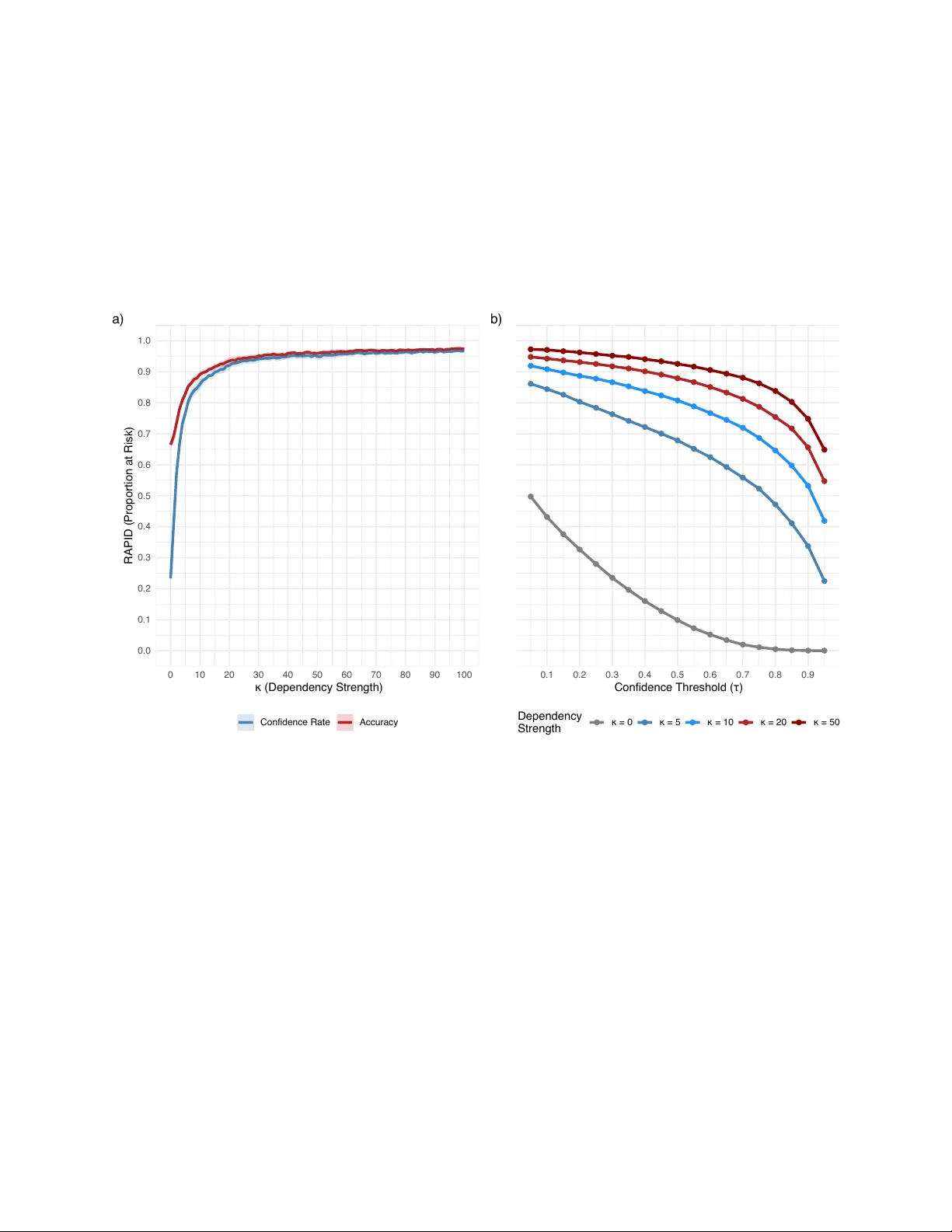

Statistical data anonymization increasingly relies on fully synthetic microdata, for which classical identity disclosure measures are less informative than an adversary's ability to infer sensitive attributes from released data. We introduce RAPID (R…

Authors: Matthias Templ, Oscar Thees, Roman Müller