SceneSmith: Agentic Generation of Simulation-Ready Indoor Scenes

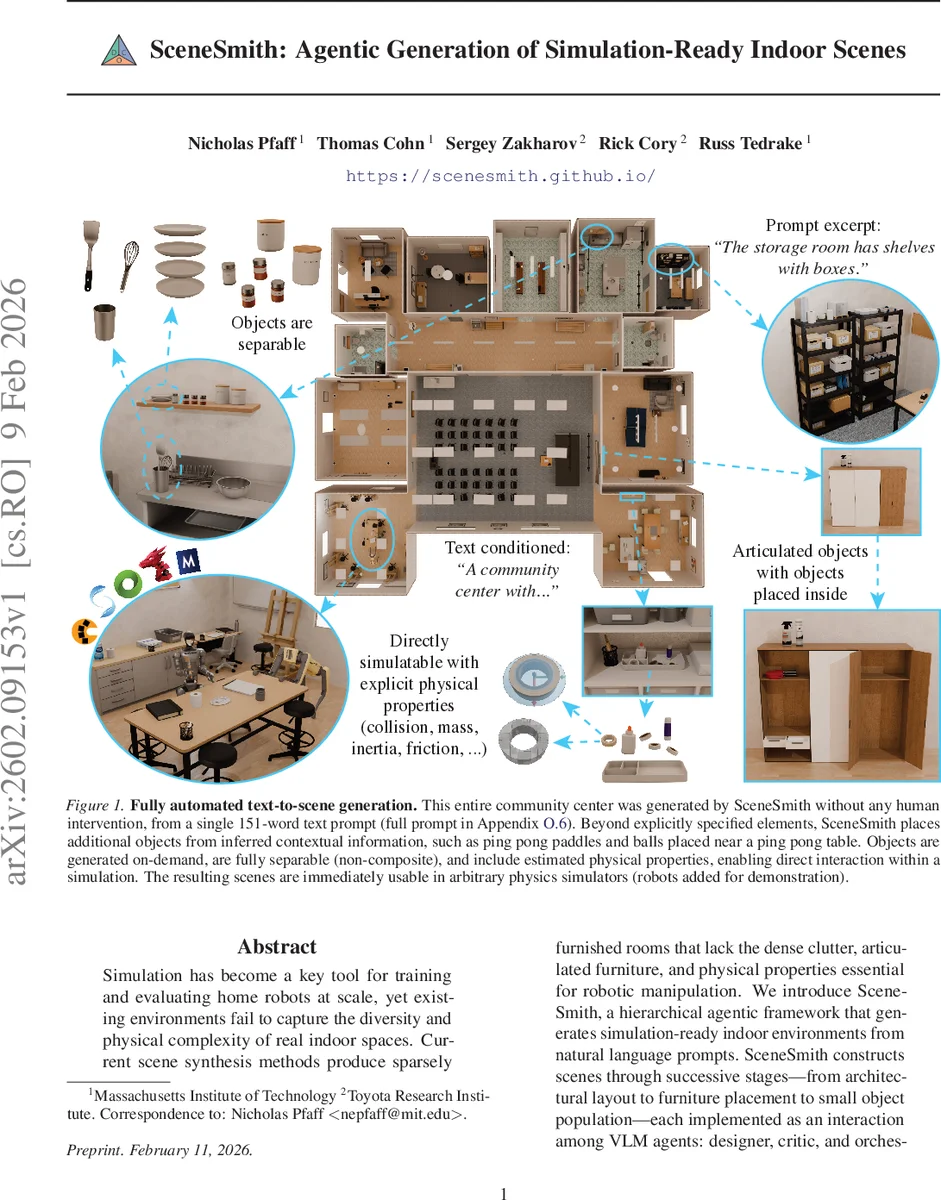

Simulation has become a key tool for training and evaluating home robots at scale, yet existing environments fail to capture the diversity and physical complexity of real indoor spaces. Current scene synthesis methods produce sparsely furnished rooms that lack the dense clutter, articulated furniture, and physical properties essential for robotic manipulation. We introduce SceneSmith, a hierarchical agentic framework that generates simulation-ready indoor environments from natural language prompts. SceneSmith constructs scenes through successive stages$\unicode{x2013}$from architectural layout to furniture placement to small object population$\unicode{x2013}$each implemented as an interaction among VLM agents: designer, critic, and orchestrator. The framework tightly integrates asset generation through text-to-3D synthesis for static objects, dataset retrieval for articulated objects, and physical property estimation. SceneSmith generates 3-6x more objects than prior methods, with <2% inter-object collisions and 96% of objects remaining stable under physics simulation. In a user study with 205 participants, it achieves 92% average realism and 91% average prompt faithfulness win rates against baselines. We further demonstrate that these environments can be used in an end-to-end pipeline for automatic robot policy evaluation.

💡 Research Summary

SceneSmith introduces a hierarchical, agentic framework that automatically generates simulation‑ready indoor environments from natural‑language prompts. The system proceeds through four major stages: (1) architectural layout creation, (2) furniture placement, (3) wall‑ and ceiling‑mounted object insertion, and (4) small manipulable object population on identified support surfaces. Each stage is orchestrated by three cooperating vision‑language model (VLM) agents—a Designer that proposes modifications, a Critic that evaluates proposals for semantic plausibility, physical feasibility, and prompt alignment, and an Orchestrator that manages the iterative loop, accepts or rejects proposals, and maintains checkpoints for rollback.

A rich toolbox underpins the agents. Observation tools provide object metadata, poses, and rendered views; modification tools enable placement, snapping, and composite assembly; asset acquisition tools route requests either to a text‑to‑3D generator for static items or to a curated 3D database for articulated furniture; feasibility tools perform collision, reachability, and stability checks. Role‑based access ensures the Designer only manipulates the scene, while the Critic is limited to evaluation tools, reducing bias. Memory is managed with a turn‑based summarization scheme: the most recent two turns are stored in full, earlier turns are compressed into LLM‑generated summaries, keeping context while limiting growth.

Asset generation is tightly integrated. When the Designer requests an object, a routing module decides whether to synthesize it via a modern text‑to‑3D model (e.g., diffusion‑based) or retrieve a pre‑existing articulated model from datasets such as ShapeNet or PartNet. The router also attaches physical attributes—collision meshes, mass, inertia, friction—so that the resulting asset can be directly imported into physics engines like PyBullet, Isaac Gym, or Unity without further processing.

The authors evaluate SceneSmith on 210 diverse prompts covering residential, office, and community‑center scenarios. On average, each room contains 71 objects (3–6× more than baselines that generate 11–23 objects). Inter‑object collisions are kept below 2 % and 95.6 % of objects remain stable after a short physics simulation, compared to 3–29 % collisions and 8–61 % stability for prior methods.

A user study with 205 participants compared scenes generated by SceneSmith against several baselines. Participants rated realism and prompt faithfulness on a pairwise basis; SceneSmith achieved 92.2 % realism win rate and 91.5 % prompt‑faithfulness win rate, both statistically significant improvements.

Beyond perception, the paper demonstrates an end‑to‑end robot policy evaluation pipeline. A natural‑language task description (e.g., “pick up the cup from the kitchen counter and place it on the table”) is fed to SceneSmith, which creates a matching cluttered kitchen scene. A robot policy is then executed in simulation, and a separate evaluator agent reasons over symbolic scene state and visual observations to verify task completion. This fully automated loop showcases how SceneSmith can supply diverse, physically plausible training and testing environments at scale, reducing the cost of data collection for general‑purpose home robots.

In summary, SceneSmith advances indoor scene synthesis by (1) unifying asset generation and scene assembly under a single agentic workflow, (2) guaranteeing simulation‑ready physical properties, (3) dramatically increasing object density while maintaining low collision rates and high stability, and (4) proving utility in downstream robotic evaluation. Future work may explore higher‑fidelity text‑to‑3D models, more sophisticated physical property estimation, and scaling the multi‑agent system to collaborative multi‑robot scenario generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment