SciDataCopilot: An Agentic Data Preparation Framework for AGI-driven Scientific Discovery

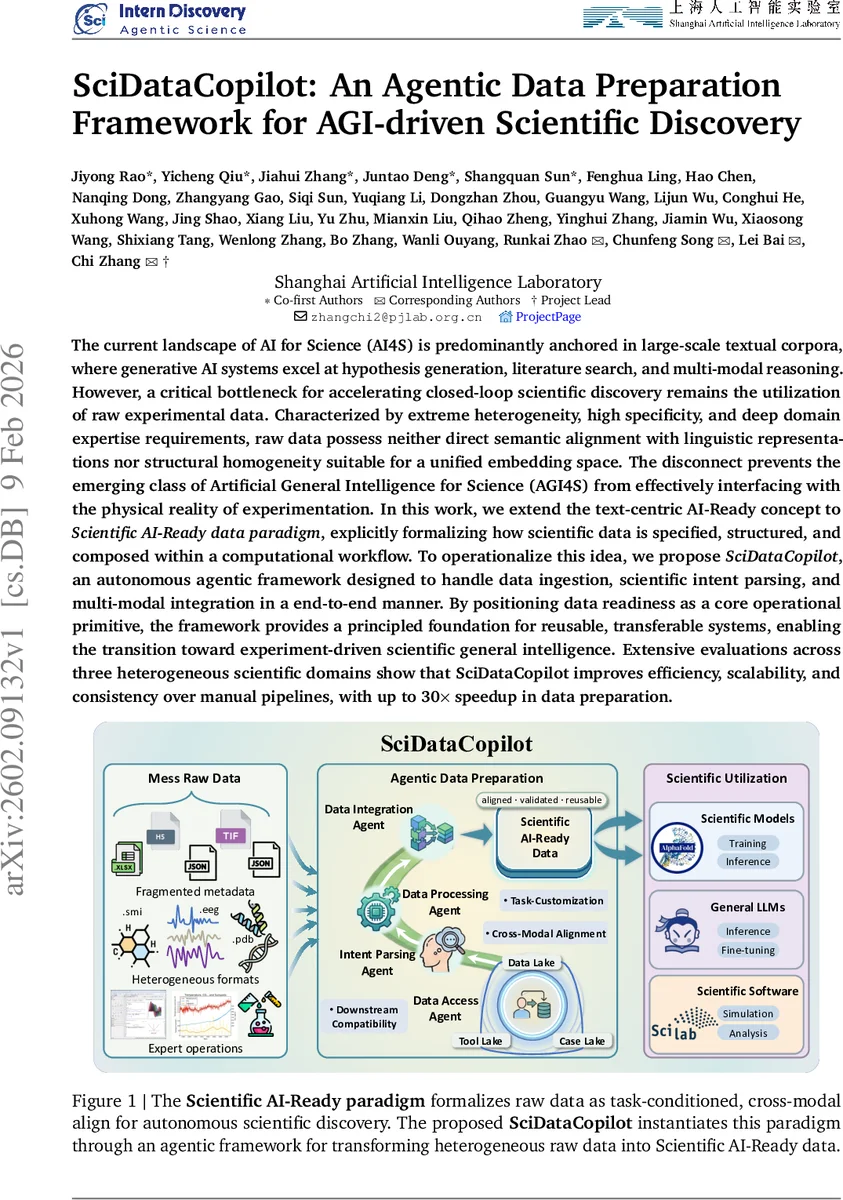

The current landscape of AI for Science (AI4S) is predominantly anchored in large-scale textual corpora, where generative AI systems excel at hypothesis generation, literature search, and multi-modal reasoning. However, a critical bottleneck for accelerating closed-loop scientific discovery remains the utilization of raw experimental data. Characterized by extreme heterogeneity, high specificity, and deep domain expertise requirements, raw data possess neither direct semantic alignment with linguistic representations nor structural homogeneity suitable for a unified embedding space. The disconnect prevents the emerging class of Artificial General Intelligence for Science (AGI4S) from effectively interfacing with the physical reality of experimentation. In this work, we extend the text-centric AI-Ready concept to Scientific AI-Ready data paradigm, explicitly formalizing how scientific data is specified, structured, and composed within a computational workflow. To operationalize this idea, we propose SciDataCopilot, an autonomous agentic framework designed to handle data ingestion, scientific intent parsing, and multi-modal integration in a end-to-end manner. By positioning data readiness as a core operational primitive, the framework provides a principled foundation for reusable, transferable systems, enabling the transition toward experiment-driven scientific general intelligence. Extensive evaluations across three heterogeneous scientific domains show that SciDataCopilot improves efficiency, scalability, and consistency over manual pipelines, with up to 30$\times$ speedup in data preparation.

💡 Research Summary

The paper addresses a critical gap in AI‑for‑Science (AI4S): while large‑scale text corpora have enabled impressive advances in hypothesis generation, literature search, and multimodal reasoning, the utilization of raw experimental data remains a bottleneck. Raw scientific data are highly heterogeneous, domain‑specific, and lack the semantic alignment required for current large language model (LLM) pipelines. To bridge this gap, the authors introduce the “Scientific AI‑Ready” data paradigm, which shifts the focus from generic data cleanliness to a task‑conditioned, downstream‑compatible, and cross‑modal data specification.

Building on this paradigm, the authors propose SciDataCopilot, an autonomous, agentic framework that decomposes scientific data preparation into four coordinated agents:

- Data Access Agent – discovers, retrieves, and structures metadata from diverse repositories (e.g., PDB, GEO, climate archives) and maps them onto a common schema.

- Intent Parsing Agent – uses LLM‑based natural‑language understanding to translate user scientific intents into explicit data‑requirement specifications, including variables, experimental constraints, and quality criteria.

- Data Processing Agent – orchestrates domain‑specific toolkits (RDKit for chemistry, MNE‑Python for neurophysiology, xarray for geoscience) to clean, transform, and validate data. It features a self‑repair loop that automatically retries or substitutes processing steps when errors occur, and logs all parameters for reproducibility.

- Data Integration Agent – aligns and composes processed data across modalities (molecular structures, time‑series signals, raster images) respecting temporal, spatial, and semantic relationships, producing a unified “Scientific AI‑Ready” dataset ready for downstream models such as LLMs, graph neural networks, or physics‑based simulators.

The framework is evaluated on three heterogeneous domains:

- Enzyme catalysis – a single natural‑language instruction generated 214 K enzyme‑reaction records, expanding existing databases by ~20× and achieving a 15‑fold speedup over manual collection.

- Neuroscience (EEG/MEG) – four sub‑tasks (alpha extraction, EOG regression, ICA decomposition, large‑scale preprocessing) were standardized, matching expert quantitative performance while delivering 3–5× faster end‑to‑end execution and full execution traces.

- Earth science (meteorological data) – cross‑disciplinary data preparation under strict temporal constraints yielded >30× efficiency gains compared with spreadsheet‑based workflows.

Quantitative metrics (throughput, reproducibility, error‑recovery success, downstream model performance) consistently show 10–30× speed improvements and >95 % reproducibility relative to baseline pipelines.

The authors acknowledge limitations: the need for domain‑specific schema definition incurs upfront effort; adding new experimental protocols requires schema updates; and LLM‑driven intent parsing can be sensitive to prompt design and model size. Future work is outlined to include meta‑learning for automatic schema expansion, reinforcement‑learning‑based agent coordination, closed‑loop experimentation with real‑time feedback, and enhanced security/privacy controls for data access.

In summary, SciDataCopilot offers a principled, scalable, and domain‑agnostic solution for transforming raw experimental data into AI‑ready assets, thereby removing a major obstacle to AGI‑driven scientific discovery and paving the way for truly closed‑loop, experiment‑centric AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment