Benchmarking the Energy Savings with Speculative Decoding Strategies

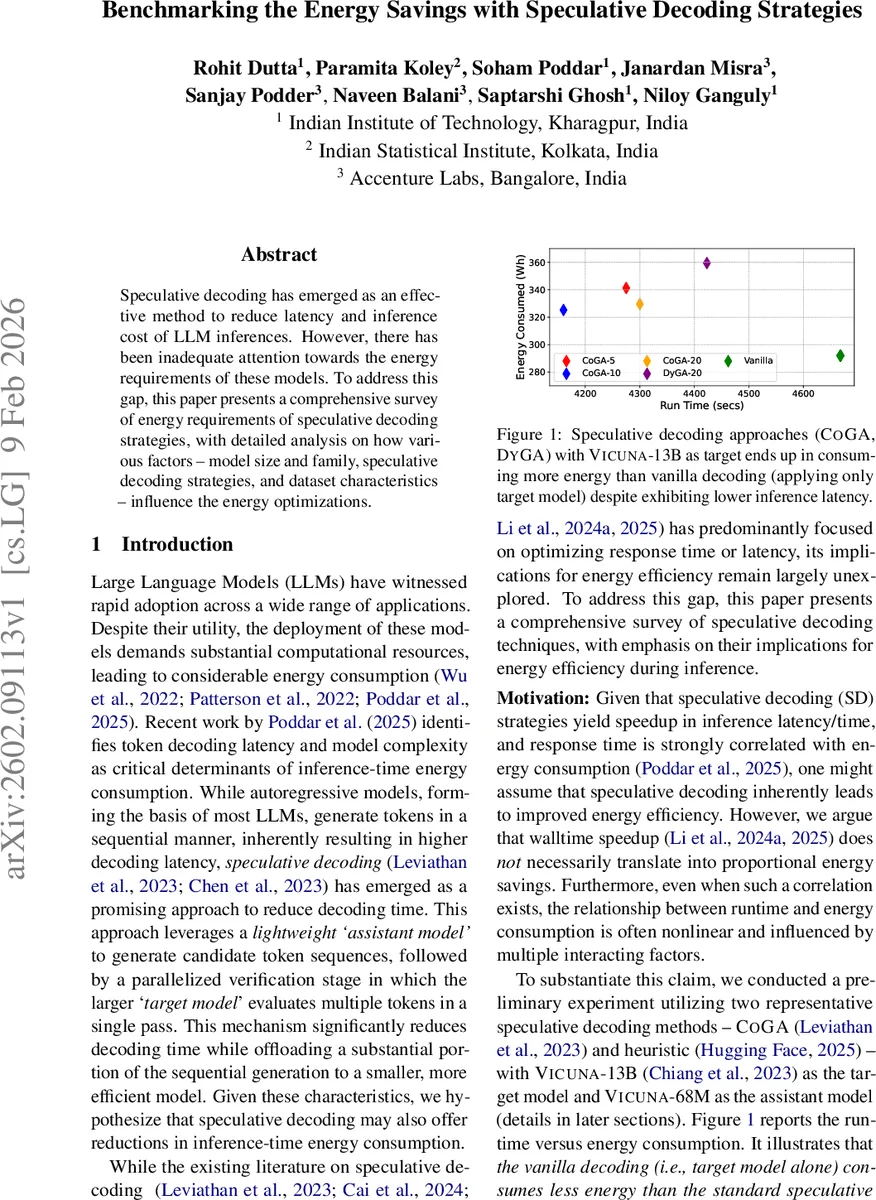

Speculative decoding has emerged as an effective method to reduce latency and inference cost of LLM inferences. However, there has been inadequate attention towards the energy requirements of these models. To address this gap, this paper presents a comprehensive survey of energy requirements of speculative decoding strategies, with detailed analysis on how various factors – model size and family, speculative decoding strategies, and dataset characteristics – influence the energy optimizations.

💡 Research Summary

The paper “Benchmarking the Energy Savings with Speculative Decoding Strategies” investigates the often‑overlooked energy dimension of speculative decoding (SD) for large language models (LLMs). While prior work has shown that SD can dramatically cut inference latency by using a lightweight assistant model to draft multiple tokens and a larger target model to verify them in parallel, the authors ask whether these speed gains automatically translate into lower energy consumption. To answer this, they conduct a systematic, multi‑factor benchmark covering four model families (Vicuna, LLaMA, FLAN‑T5, Qwen), eight target‑assistant pairings, four SD strategies (CoGA‑x, DyGA‑x, EAGLE‑2, EAGLE‑3, and Medusa), and three standard inference tasks (code generation on HumanEval, mathematical reasoning on GSM‑8K, and summarization on CNN‑DM).

Experimental Setup

All experiments run on a single NVIDIA A5000 GPU (24 GB) with an Intel Xeon Silver 4210R CPU, except the 70 B models which use an A6000 (48 GB). Target models are loaded with NF4 4‑bit quantization; assistant models are considerably smaller (e.g., Vicuna‑68M vs Vicuna‑13B). The authors feed prompts one‑by‑one (batch size = 1) and measure energy using the CodeCarbon library, which records GPU, CPU, and RAM power draw in watt‑hours. They define three key metrics: GPU energy‑saving factor (γ_GPU), total system energy‑saving factor (γ_Total), and speed‑up factor (γ_t), all normalized to the energy or time required to generate 1 K tokens.

Key Findings

-

Speed does not guarantee energy savings. Basic SD methods (CoGA‑20, DyGA‑20) achieve 1.3–1.5× latency reduction but only modestly improve γ_GPU (≈0.8–0.9) and often leave γ_Total around 1.0, meaning total energy consumption is unchanged or even higher than vanilla autoregressive decoding. In contrast, the more sophisticated EAGLE‑3 yields 2.5–2.9× speed‑up and γ_Total of 1.8–2.5×, demonstrating that algorithmic sophistication matters.

-

Model family matters. LLaMA‑8B and LLaMA‑70B show modest energy gains even with simple SD, while Vicuna models rarely benefit from CoGA/DyGA and sometimes consume more energy. FLAN‑T5 (encoder‑decoder) consistently achieves up to 2.0× total energy reduction with basic SD, thanks to its architecture’s parallelism. Qwen models exhibit the weakest energy savings (≈1.0× or below), suggesting that very large, newer models may be less amenable to current SD techniques.

-

Task dependence is strong. The code‑generation benchmark (HumanEval) produces the highest γ_Total (up to 2.5×) and γ_t (up to 2.9×) because the assistant’s drafts are highly accurate, reducing verification passes. Summarization (CNN‑DM) often yields γ_Total ≤ 1.0, and for LLaMA‑70B the SD approaches even increase energy use, highlighting that tasks with longer, more ambiguous outputs diminish the benefit of speculative drafts. GSM‑8K shows intermediate results, with EAGLE methods still outperforming basic SD.

-

Assistant‑target size ratio is critical. Larger gaps (e.g., a 13B target with a 68M assistant) produce steeper “energy‑vs‑speed” slopes, confirming that a small assistant off‑loads most compute. However, when the assistant is too weak to generate reliable drafts (as observed with some LLaMA‑1B assistants), the expected gains disappear.

-

Implementation platform influences results. Comparing HuggingFace and vLLM on identical configurations shows HuggingFace consistently achieving 5–10 % higher γ_t and γ_Total, likely due to more efficient GPU kernel usage in the former.

Analysis and Recommendations

- Non‑linear relationship: Energy savings scale non‑linearly with latency reduction; the interaction of model architecture, draft quality, and task complexity dictates the outcome.

- Strategy selection: For energy‑constrained deployments, prioritize EAGLE‑2/3 or other draft‑tree methods, especially on tasks where the assistant can produce high‑quality drafts (code, short summaries).

- Model pairing: Choose assistant models at least an order of magnitude smaller than the target, but verify that draft accuracy remains acceptable; otherwise, the verification overhead nullifies gains.

- Hardware considerations: Results are based on single‑GPU, batch‑size‑1 settings; multi‑GPU or larger batch deployments may shift the balance, and further work is needed to generalize findings.

Conclusion and Future Work

The study provides the first comprehensive energy‑focused benchmark of speculative decoding, revealing that speed improvements alone are insufficient to guarantee greener inference. Energy efficiency depends on a confluence of factors: model family, size disparity, decoding strategy sophistication, and task characteristics. The authors propose practical guidelines for practitioners and outline future directions, including multi‑GPU scaling, integration with quantization/pruning pipelines, automated strategy selection via meta‑learning, and real‑world carbon‑footprint accounting in cloud and edge environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment