Autoregressive Image Generation with Masked Bit Modeling

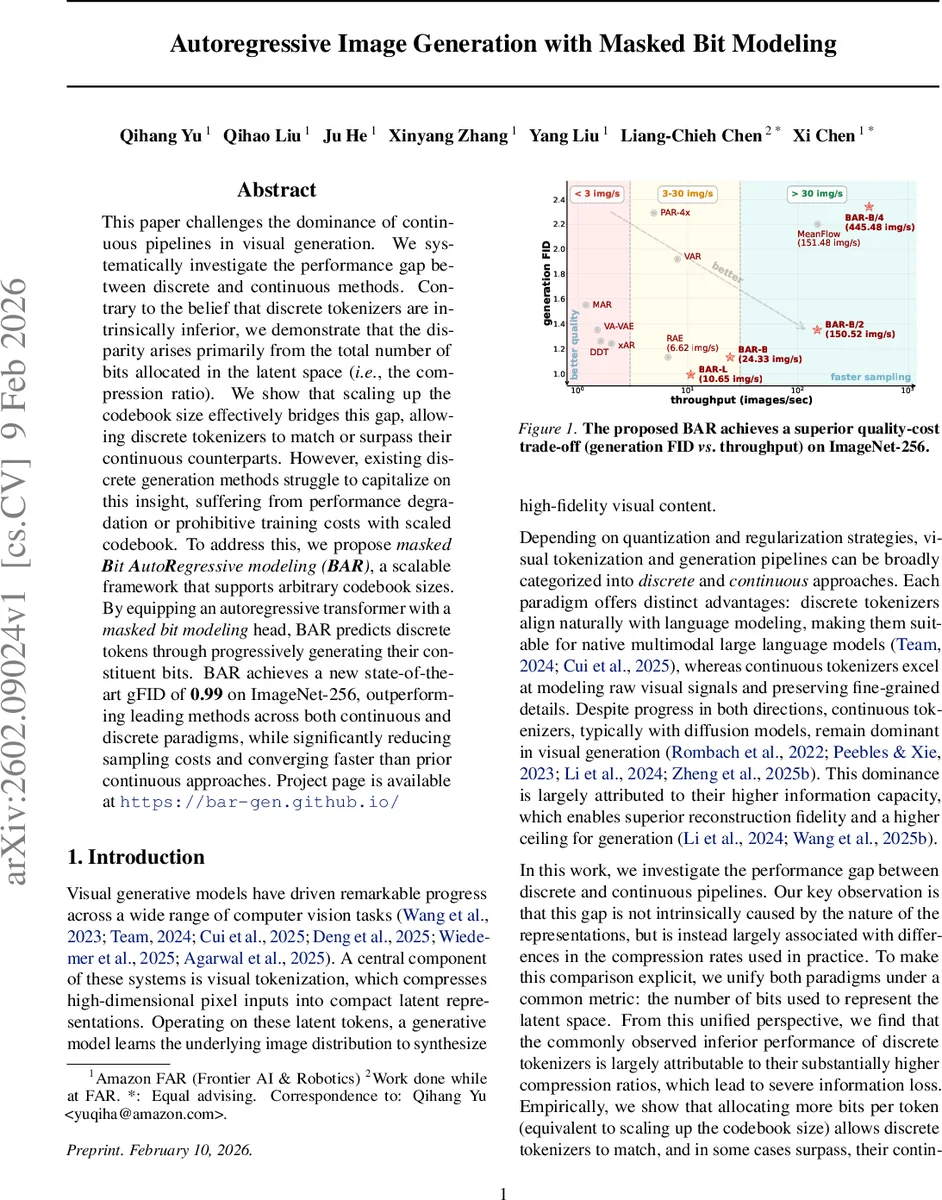

This paper challenges the dominance of continuous pipelines in visual generation. We systematically investigate the performance gap between discrete and continuous methods. Contrary to the belief that discrete tokenizers are intrinsically inferior, we demonstrate that the disparity arises primarily from the total number of bits allocated in the latent space (i.e., the compression ratio). We show that scaling up the codebook size effectively bridges this gap, allowing discrete tokenizers to match or surpass their continuous counterparts. However, existing discrete generation methods struggle to capitalize on this insight, suffering from performance degradation or prohibitive training costs with scaled codebook. To address this, we propose masked Bit AutoRegressive modeling (BAR), a scalable framework that supports arbitrary codebook sizes. By equipping an autoregressive transformer with a masked bit modeling head, BAR predicts discrete tokens through progressively generating their constituent bits. BAR achieves a new state-of-the-art gFID of 0.99 on ImageNet-256, outperforming leading methods across both continuous and discrete paradigms, while significantly reducing sampling costs and converging faster than prior continuous approaches. Project page is available at https://bar-gen.github.io/

💡 Research Summary

The paper “Masked Bit Modeling for Autoregressive Image Generation” investigates why discrete visual tokenizers have historically lagged behind continuous pipelines (primarily VAE‑based diffusion models) in image generation. The authors argue that the performance gap is not intrinsic to the discrete representation itself but is largely due to the amount of information allocated to the latent space, measured as a “bit budget.” By unifying both paradigms under this metric—bits per image = spatial tokens × log₂(codebook size) for discrete models, and bits per image = spatial tokens × 16 × channel dimension for continuous models—they demonstrate that discrete tokenizers with a low bit budget suffer from higher compression and thus poorer reconstruction quality.

Through systematic experiments, they show that increasing the codebook size (thereby raising the bit budget) narrows and eventually eliminates the reconstruction gap. Using a Fixed‑Size Quantizer (FSQ) that allows codebooks up to 2²⁵⁶ entries, they scale the latent channel dimension from 10 to 256 bits, achieving reconstruction rFID 0.33 at a 65 536‑bit budget—outperforming a strong SD‑VAE baseline (rFID 0.62). This confirms that discrete tokenizers can match or surpass continuous ones when given sufficient bits.

However, scaling the codebook creates a new bottleneck: standard autoregressive transformers predict a categorical index over a vocabulary that can reach millions or billions of tokens, making the final linear head memory‑intensive and statistically hard to train. Prior works either cap codebook size (≈2¹⁸) or resort to a bits‑based head that predicts the entire bit string at once, both of which degrade generation quality.

To overcome this, the authors propose Masked Bit Autoregressive modeling (BAR). Instead of predicting a token index, BAR predicts the constituent bits of each token sequentially, using a masked language‑model style head attached to the transformer. This design decouples the prediction complexity from the vocabulary size: the model only needs to output a binary decision per bit, regardless of how many bits constitute the codebook entry. Consequently, BAR can handle arbitrarily large codebooks without exploding memory or computation.

BAR is built on top of FSQ tokenizers, enabling seamless scaling. Multiple BAR variants (BAR‑B/4, BAR‑B/2, BAR‑B, BAR‑L) are trained on ImageNet‑256. The best model (BAR‑L, 415 M parameters) achieves a generative FID (gFID) of 0.99, setting a new state‑of‑the‑art across both discrete and continuous methods. In terms of throughput, BAR‑B/4 reaches 445 images per second, while BAR‑B runs at 24.3 img/s, delivering 3–4× faster sampling than leading diffusion models such as MeanFlow or recent continuous pipelines, while maintaining comparable or superior fidelity.

The paper situates BAR relative to prior work: MaskBit feeds bit tokens into a transformer but still predicts codebook indices, limiting scalability; Infinity uses an external bit‑corrector and a V‑AR generator, adding complexity. BAR’s fully self‑contained masked‑bit head offers a cleaner, more scalable solution.

In conclusion, the study provides three key contributions: (1) a unified bit‑budget analysis that reveals the true source of the discrete‑continuous performance gap; (2) empirical evidence that scaling discrete codebooks closes this gap in reconstruction; (3) the BAR framework, which enables efficient autoregressive generation with arbitrarily large vocabularies, delivering state‑of‑the‑art image quality and sampling speed. The work opens avenues for integrating discrete tokenizers with large multimodal language models and for exploring bit‑level compression in other generative domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment