ShapeCond: Fast Shapelet-Guided Dataset Condensation for Time Series Classification

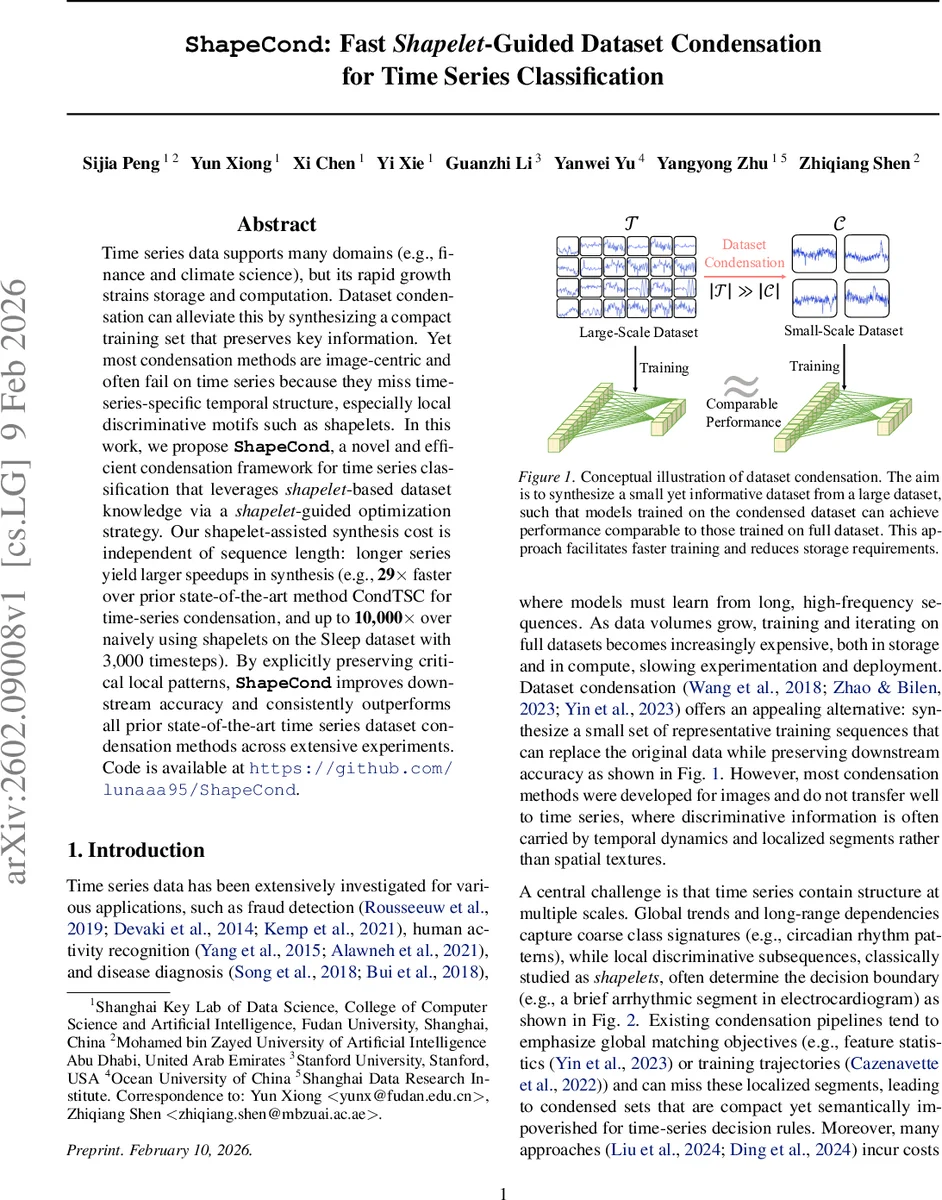

Time series data supports many domains (e.g., finance and climate science), but its rapid growth strains storage and computation. Dataset condensation can alleviate this by synthesizing a compact training set that preserves key information. Yet most condensation methods are image-centric and often fail on time series because they miss time-series-specific temporal structure, especially local discriminative motifs such as shapelets. In this work, we propose ShapeCond, a novel and efficient condensation framework for time series classification that leverages shapelet-based dataset knowledge via a shapelet-guided optimization strategy. Our shapelet-assisted synthesis cost is independent of sequence length: longer series yield larger speedups in synthesis (e.g., 29$\times$ faster over prior state-of-the-art method CondTSC for time-series condensation, and up to 10,000$\times$ over naively using shapelets on the Sleep dataset with 3,000 timesteps). By explicitly preserving critical local patterns, ShapeCond improves downstream accuracy and consistently outperforms all prior state-of-the-art time series dataset condensation methods across extensive experiments. Code is available at https://github.com/lunaaa95/ShapeCond.

💡 Research Summary

The paper introduces ShapeCond, a novel dataset condensation framework specifically designed for time‑series classification. Traditional condensation methods, largely developed for images, ignore the temporal dynamics and local discriminative subsequences—known as shapelets—that often drive classification decisions in time‑series data. ShapeCond addresses this gap by integrating shapelet knowledge directly into the synthesis process, thereby preserving both global temporal structure and critical local motifs while achieving substantial computational savings.

Key Contributions

-

Fast Shapelet Discovery – The authors redesign classic shapelet mining to be scalable. They (a) randomly prune a fraction p of the training samples, reducing the number of candidate shapelets from M to (1‑p)·M, and (b) restrict distance calculations to a fixed‑size temporal neighborhood around each candidate’s original position. This “position‑constrained” distance evaluation removes dependence on the series length L, yielding constant‑time (O(1)) distance computation. Candidates are scored by information gain, and the top‑k shapelets form a dataset‑level shapelet pool S*.

-

Knowledge Fetching – Each time series is transformed into a k-dimensional vector of distances to the selected shapelets, providing an explicit representation of local discriminative patterns that will guide synthesis.

-

Dual‑View Data Synthesis (Global–Local Temporal Structure Optimization) – The condensation objective combines two complementary losses:

- Global loss (L_task): driven by gradients of a neural encoder (e.g., 1‑D CNN or Transformer) to ensure the synthetic series retain overall trends, long‑range dependencies, and morphology of the original data.

- Local loss (L_shapelet): a shapelet‑matching term that forces the synthetic series to stay close to the selected shapelets, preserving the short, decisive motifs.

The total loss is L = λ₁·L_task + λ₂·L_shapelet, with λ₁, λ₂ balancing the two aspects. Optimization alternates between updating the synthetic dataset C (treated as differentiable tensors) and updating the encoder parameters θ. Because L_shapelet is independent of L, the overall synthesis cost remains essentially independent of sequence length.

Complexity Analysis – Standard shapelet discovery incurs O(N·L·M) distance calculations (N = number of series, L = length, M = candidates). ShapeCond reduces this to O((1‑p)²·N·M) with constant‑time distance evaluation, eliminating the L factor. The synthesis stage’s cost is dominated by the encoder’s forward/backward passes, which scale modestly with L and are far cheaper than the exhaustive distance searches of prior methods.

Experimental Evaluation – The authors benchmark ShapeCond on more than 30 public time‑series classification datasets (UCR/UEA archives) and a high‑resolution physiological Sleep dataset (3,000 timesteps). Compression ratios range from 1 % to 5 % of the original training set. Results show:

- Accuracy – ShapeCond outperforms the previous state‑of‑the‑art CondTSC by an average of 17.56 % absolute accuracy, with especially large gains (≥10 %) on domains where local motifs dominate (e.g., ECG, human activity).

- Speed – The fast shapelet discovery yields up to 29× speedup over CondTSC for typical benchmarks, and up to 10,000× speedup on the Sleep dataset compared with naïve shapelet‑based condensation. The synthesis cost remains nearly constant as L grows, making the method practical for long‑horizon recordings.

- Ablation – Removing either the global or local loss degrades performance, confirming the complementary role of both objectives. Varying the pruning ratio p up to 0.7 incurs negligible accuracy loss while halving computational effort.

Limitations and Future Work – ShapeCond requires selecting the number of shapelets k and the pruning ratio p, which may need dataset‑specific tuning. Very short series with limited local structure may see reduced benefits. The current implementation is single‑GPU; extending to multi‑GPU or distributed settings, exploring alternative encoders, and adapting the framework to unsupervised or semi‑supervised scenarios are identified as promising directions.

Conclusion – ShapeCond delivers a fast, shapelet‑guided condensation pipeline that simultaneously preserves global temporal dynamics and essential local discriminative motifs. By achieving high compression ratios without sacrificing classification performance and by decoupling synthesis cost from sequence length, it offers a practical solution for storage‑constrained and compute‑limited environments such as edge devices, real‑time monitoring systems, and large‑scale time‑series analytics.

Comments & Academic Discussion

Loading comments...

Leave a Comment