Paradox of De-identification: A Critique of HIPAA Safe Harbour in the Age of LLMs

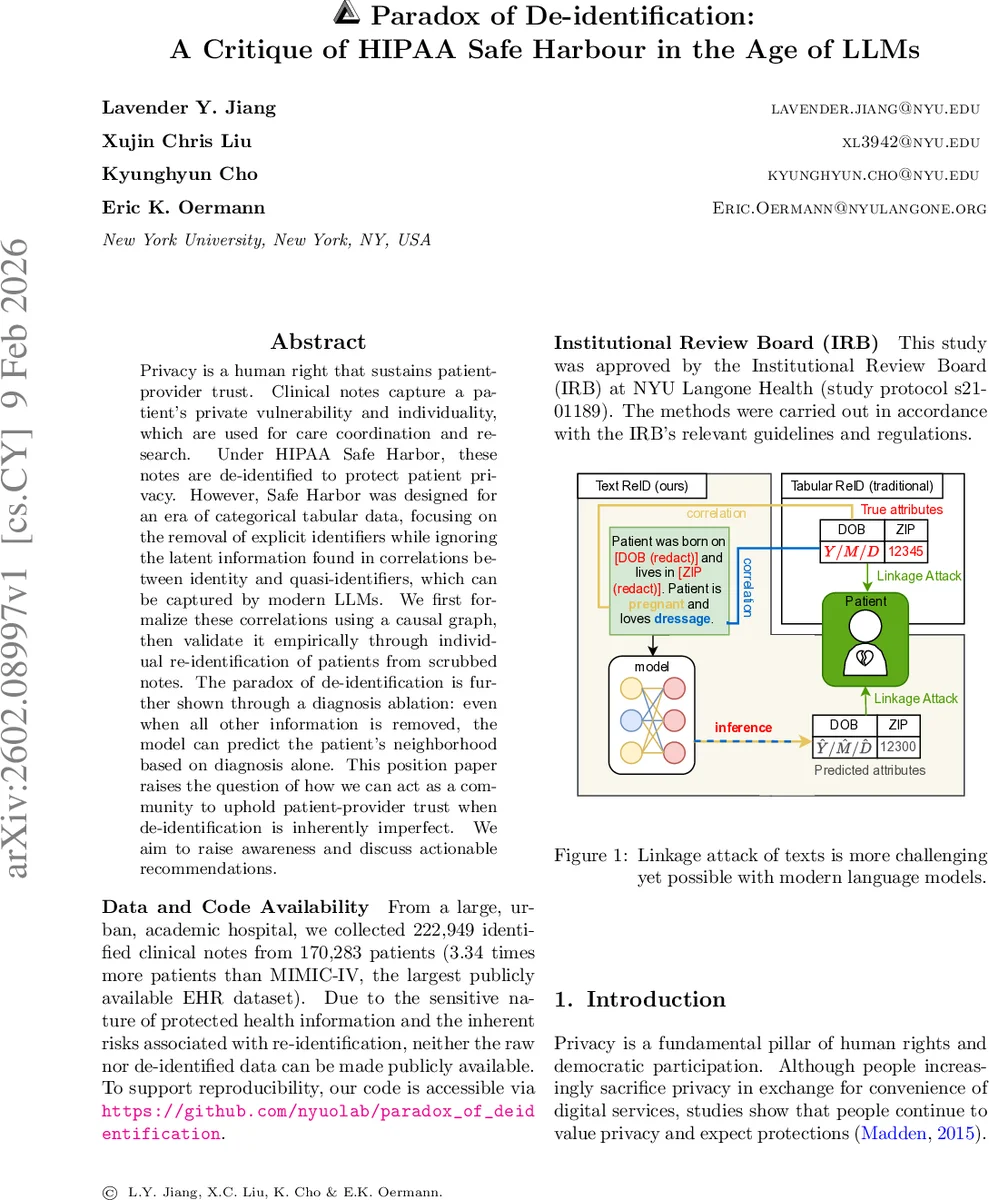

Privacy is a human right that sustains patient-provider trust. Clinical notes capture a patient’s private vulnerability and individuality, which are used for care coordination and research. Under HIPAA Safe Harbor, these notes are de-identified to protect patient privacy. However, Safe Harbor was designed for an era of categorical tabular data, focusing on the removal of explicit identifiers while ignoring the latent information found in correlations between identity and quasi-identifiers, which can be captured by modern LLMs. We first formalize these correlations using a causal graph, then validate it empirically through individual re-identification of patients from scrubbed notes. The paradox of de-identification is further shown through a diagnosis ablation: even when all other information is removed, the model can predict the patient’s neighborhood based on diagnosis alone. This position paper raises the question of how we can act as a community to uphold patient-provider trust when de-identification is inherently imperfect. We aim to raise awareness and discuss actionable recommendations.

💡 Research Summary

The paper “Paradox of De-identification: A Critique of HIPAA Safe Harbour in the Age of LLMs” argues that the current HIPAA Safe Harbour approach to de‑identifying clinical notes is fundamentally inadequate in the era of large language models (LLMs). The authors begin by framing privacy as a human right essential to the patient‑provider relationship and noting that HIPAA’s Safe Harbour was designed for categorical tabular data, focusing on the removal of 18 explicit identifiers. They contend that this approach ignores latent correlations between quasi‑identifiers and patient identity that modern LLMs can exploit.

To formalize the problem, the authors construct a causal graph with four latent variables: patient identity (I), sensitive attributes (S) such as date of birth and ZIP code, medical information (M) such as diagnoses, and non‑sensitive information (N) such as hobbies. The clinical note (X) is generated from S, M, and N. De‑identification removes the direct S→X edge but leaves two back‑door paths— I→N→X and I→M→X—through which identity can still be inferred. This graph illustrates that merely redacting explicit identifiers does not sever all statistical ties to the patient.

Empirically, the authors collected 222,949 identified clinical notes from 170,283 patients at NYU Langone, a dataset more than three times larger than MIMIC‑IV. They applied the UCSF Philter tool to produce Safe‑Harbour‑compliant de‑identified notes. Six demographic attributes (sex, note year, month, borough, ZIP‑code‑derived median income, insurance type) were selected to approximate the classic “triad” (sex, birth date, ZIP) that can uniquely identify most U.S. residents. Using a fine‑tuned BERT‑base model, they trained separate classifiers for each attribute. Even with as few as 1,000 training examples, the models significantly outperformed random baselines across all attributes; sex was predicted with >99.7 % accuracy.

For the re‑identification attack, the predicted attributes were used to query an external population database. A top‑k matching strategy was employed: for each attribute the top k predicted classes were selected, and the intersection of all attribute matches defined a candidate pool Ω. The authors measured three probabilities: group re‑identification success (P_G), individual identification given a correct group (P_I|G), and overall unique re‑identification (P_reid = P_G × P_I|G). Results showed that de‑identified notes yielded P_reid far above the random baseline, demonstrating that the remaining back‑door paths are exploitable.

A particularly striking finding is the “diagnosis‑only” ablation. Using only diagnosis information, a model could predict a patient’s borough with an AUC of 58.57 %; when combined with the full de‑identified note, AUC rose to 78.35 %, approaching the 82.78 % achieved with fully identified notes. This narrow gap indicates that the majority of re‑identification risk stems not from the protected identifiers but from the medical and non‑sensitive content that is retained for clinical utility.

The discussion situates these results within prior literature on linkage attacks (Sweeney 2000), the “broken promises of privacy” (Ohm 2009), and the limitations of differential privacy in health data (Dwork & Roth 2014). The authors argue that the current “scrub‑and‑share” paradigm is structurally flawed: it attempts to balance utility and privacy by severing only the explicit identifier path while ignoring the inherent correlation between clinical content and identity. They call for a shift beyond minimal legal compliance toward mathematically grounded privacy guarantees (e.g., differential privacy), synthetic data generation, or controlled access frameworks.

In conclusion, the paper labels de‑identification under Safe Harbour as a paradox: the very information that makes clinical notes valuable for research and care (diagnoses, treatment details) also provides the strongest signals for re‑identification. As LLMs become more capable, the gap between privacy protection and data utility widens, demanding coordinated action from regulators, researchers, and industry to redesign privacy safeguards for the modern AI era.

Comments & Academic Discussion

Loading comments...

Leave a Comment