Generalizing Sports Feedback Generation by Watching Competitions and Reading Books: A Rock Climbing Case Study

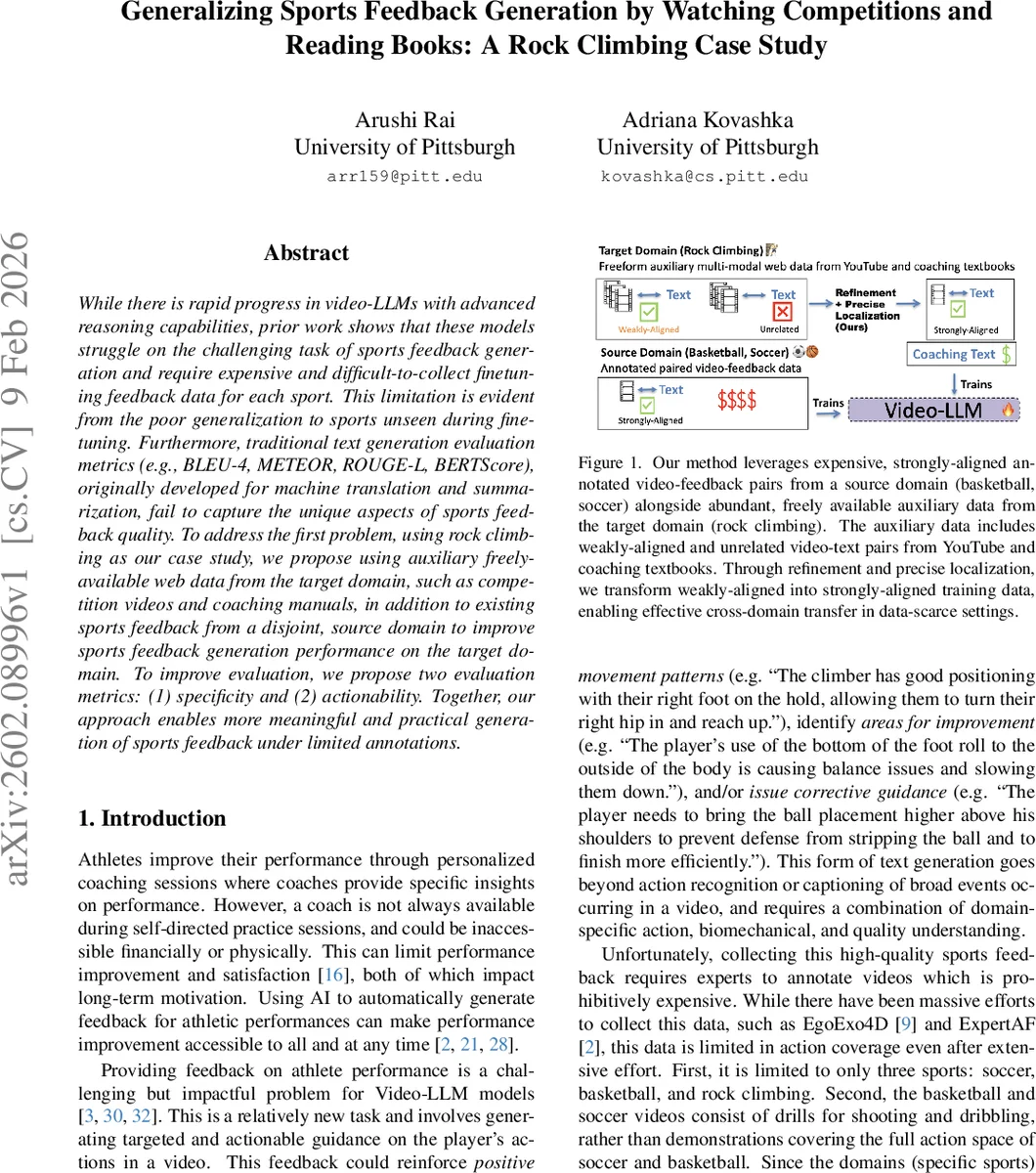

While there is rapid progress in video-LLMs with advanced reasoning capabilities, prior work shows that these models struggle on the challenging task of sports feedback generation and require expensive and difficult-to-collect finetuning feedback data for each sport. This limitation is evident from the poor generalization to sports unseen during finetuning. Furthermore, traditional text generation evaluation metrics (e.g., BLEU-4, METEOR, ROUGE-L, BERTScore), originally developed for machine translation and summarization, fail to capture the unique aspects of sports feedback quality. To address the first problem, using rock climbing as our case study, we propose using auxiliary freely-available web data from the target domain, such as competition videos and coaching manuals, in addition to existing sports feedback from a disjoint, source domain to improve sports feedback generation performance on the target domain. To improve evaluation, we propose two evaluation metrics: (1) specificity and (2) actionability. Together, our approach enables more meaningful and practical generation of sports feedback under limited annotations.

💡 Research Summary

The paper tackles two fundamental challenges in automatic sports feedback generation with video‑large language models (video‑LLMs): (1) the scarcity of annotated feedback data for each sport, which hampers generalization to unseen domains, and (2) the inadequacy of conventional text‑generation metrics (BLEU, ROUGE, BERTScore) to capture the nuanced qualities of feedback such as specificity and actionability. Using rock climbing as a case study, the authors propose a data‑centric approach that leverages freely available auxiliary multimodal resources from the target domain—namely competition videos with expert commentary and a coaching manual—alongside existing high‑quality feedback from a disjoint source domain (basketball and soccer).

Data collection and refinement: The authors scrape 2,300 competition videos from YouTube, filter out short clips, and retain 1,440 videos longer than 20 minutes, yielding 18,615 video‑clip/commentary pairs. The commentary is obtained via automatic speech recognition (ASR) transcripts, which are noisy and only weakly aligned with the visual content. To transform this weak data into a strong training signal, they introduce a two‑stage refinement pipeline. First, a 14‑billion‑parameter LLM (Phi‑4) classifies each ASR segment for relevance and, if relevant, summarizes it to retain only action‑quality information, discarding about 80 % of the raw narration. Second, Whisper provides word‑level timestamps for the refined text, and a second LLM prompt aligns these timestamps precisely with the video frames. This yields strongly aligned multimodal pairs that capture detailed biomechanical cues (e.g., “right foot on the sloper”) and timing information.

Model training: The refined rock‑climbing data (both multimodal and text‑only from the manual) are combined with the source‑domain feedback dataset (ExpertAF) to fine‑tune a video‑LLM. The intuition is that fundamental motor skills—timing, coordination, power, balance—transfer across sports, while the auxiliary data supplies domain‑specific terminology and biomechanical insight. Experiments show that this cross‑domain fine‑tuning dramatically improves out‑of‑distribution feedback generation on rock climbing: BLEU‑4 improves by 106 %, METEOR by 36 %, ROUGE‑L by 39 %, and BERTScore by 25 % compared to a baseline trained only on source‑domain feedback.

Evaluation metrics: Recognizing that reference‑based scores do not reflect feedback usefulness, the authors propose two LLM‑based, reference‑free metrics grounded in motor‑learning theory’s Knowledge of Performance (KP) framework: (1) Specificity, measuring how descriptively the feedback pinpoints body parts, movement patterns, and causal links; (2) Actionability, assessing whether the feedback offers concrete, implementable corrective instructions. They prompt an LLM to score each generated feedback on these dimensions and validate the scores against human annotations, achieving high Pearson correlations (≈0.78–0.81), thereby confirming that the automatic metrics reliably reflect human judgments.

Contributions and implications: The study demonstrates that (i) inexpensive, publicly available video‑commentary and textual resources can be systematically refined to serve as high‑quality training data for sports feedback, reducing reliance on costly expert annotations; (ii) domain‑specific, aspect‑level evaluation metrics provide more meaningful assessments of feedback quality than traditional n‑gram or semantic similarity scores; (iii) the methodology is generalizable to other sports where annotated feedback is scarce. The authors suggest future work on scaling the pipeline to additional disciplines, integrating real‑time feedback pipelines, and exploring human‑in‑the‑loop refinement to further bridge the gap between AI‑generated advice and professional coaching.

Comments & Academic Discussion

Loading comments...

Leave a Comment