A Behavioural and Representational Evaluation of Goal-Directedness in Language Model Agents

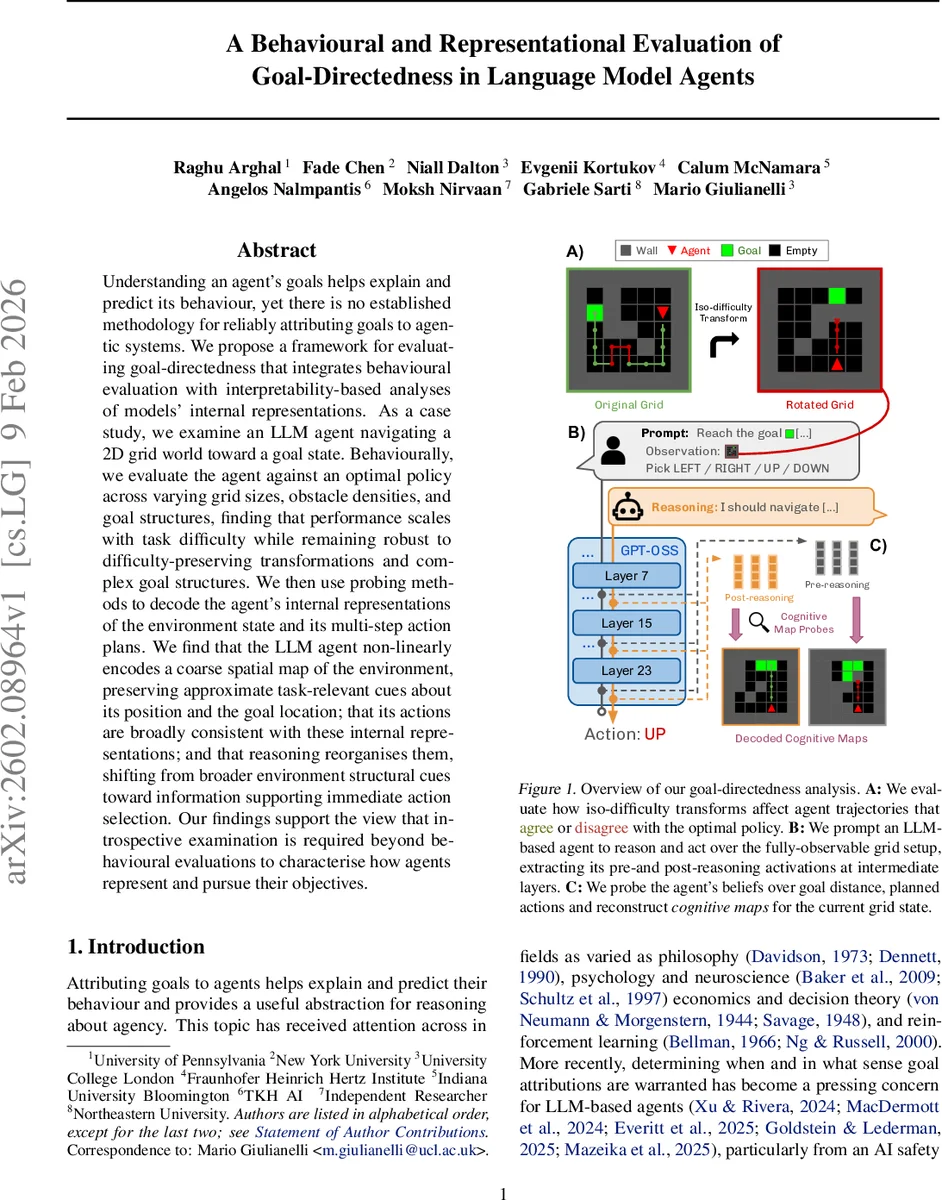

Understanding an agent’s goals helps explain and predict its behaviour, yet there is no established methodology for reliably attributing goals to agentic systems. We propose a framework for evaluating goal-directedness that integrates behavioural evaluation with interpretability-based analyses of models’ internal representations. As a case study, we examine an LLM agent navigating a 2D grid world toward a goal state. Behaviourally, we evaluate the agent against an optimal policy across varying grid sizes, obstacle densities, and goal structures, finding that performance scales with task difficulty while remaining robust to difficulty-preserving transformations and complex goal structures. We then use probing methods to decode the agent’s internal representations of the environment state and its multi-step action plans. We find that the LLM agent non-linearly encodes a coarse spatial map of the environment, preserving approximate task-relevant cues about its position and the goal location; that its actions are broadly consistent with these internal representations; and that reasoning reorganises them, shifting from broader environment structural cues toward information supporting immediate action selection. Our findings support the view that introspective examination is required beyond behavioural evaluations to characterise how agents represent and pursue their objectives.

💡 Research Summary

The paper introduces a comprehensive framework for assessing goal‑directedness in language‑model agents by jointly examining external behavior and internal representations. Recognizing that purely behavioral metrics can conflate capability limitations with genuine lack of goal orientation, the authors argue that introspective analysis of an agent’s latent beliefs is essential for a reliable attribution of goals.

Experimental Platform

The study uses a fully observable 2‑D grid world (MiniGrid) where an LLM‑based agent must navigate from a start cell to a goal cell. The grid is rendered as a text sequence with a one‑to‑one token per cell, eliminating tokenization ambiguities. The agent is instantiated with GPT‑OSS‑20B, chosen for its manageable size and strong reasoning abilities. Grid sizes range from 7×7 to 15×15, and obstacle density d varies from 0.0 (empty) to 1.0 (maze‑like). For each size‑density pair, ten random grids are generated, and the agent is evaluated on ten trajectories per grid using a sampling temperature of 0.7 and a horizon of 1.5 × optimal path length.

Behavioral Evaluation

The authors compute two primary metrics: per‑step action accuracy (fraction of actions that match an optimal A* policy) and Jensen–Shannon divergence (JSD) between the empirical action distribution of the agent and the optimal policy. Additional metrics—goal success rate, policy entropy, and expected calibration error—are reported in the appendix. Results show a monotonic decline in accuracy and a rise in JSD as grid size and obstacle density increase, confirming that task difficulty directly impacts observable performance. Accuracy also drops linearly with distance to the goal for distances under 20 steps, after which estimates become noisier.

To test whether the agent is biased toward particular spatial configurations, the authors define four “iso‑difficulty” transformations that preserve grid size, obstacle density, and optimal path length: reflection, 90° rotation, swapping start and goal positions, and transposition. Paired Wilcoxon tests reveal no statistically significant differences in any behavioral metric across transformed versus original grids, indicating that the agent’s performance is driven by difficulty rather than specific layout features.

Probing Internal Representations

Beyond behavior, the core contribution lies in decoding the agent’s hidden states. Linear and non‑linear probe classifiers are trained on activations from multiple transformer layers to predict (a) the agent’s current coordinates, (b) the goal coordinates, (c) the Manhattan distance to the goal, and (d) a multi‑step action plan. The probes uncover a “cognitive map” that encodes a coarse spatial layout and approximate goal location in a non‑linear fashion. Importantly, the nature of these representations changes across the reasoning process. Pre‑reasoning activations retain broader spatial cues and longer‑horizon plans, whereas post‑reasoning activations sharpen focus on the immediate next action, suggesting a dynamic re‑organisation of goal‑related information during internal deliberation.

The decoded representations correlate strongly with observed actions: when the agent deviates from the optimal policy, its internal map still preserves consistent goal information, implying that mis‑behaviour stems from planning or execution errors rather than a loss of goal intent.

Implications and Future Work

The study demonstrates that (1) behavioral alignment with an optimal policy is necessary but insufficient for confirming goal‑directedness, (2) LLM agents internally maintain compressed, non‑linear encodings of task‑relevant spatial information, and (3) reasoning processes actively reshape these encodings to prioritize immediate decision‑making. These findings have direct relevance to AI safety and alignment, where monitoring internal goal representations could provide early warnings of misalignment even when outward behavior appears benign.

Limitations include the reliance on fully observable environments; extending the framework to partially observable or multi‑agent settings would test the robustness of the probing approach under uncertainty. Moreover, the current probes are classifier‑based; future work could explore causal probing or generative models to capture richer aspects of internal belief dynamics.

In sum, the paper offers a novel, white‑box methodology for evaluating goal‑directedness, validates it on a concrete navigation task, and uncovers how LLM‑based agents internally encode and manipulate goal‑related information. This bridges a critical gap between external performance metrics and the interpretability of latent agency, paving the way for more transparent and controllable AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment