Contrastive Learning for Diversity-Aware Product Recommendations in Retail

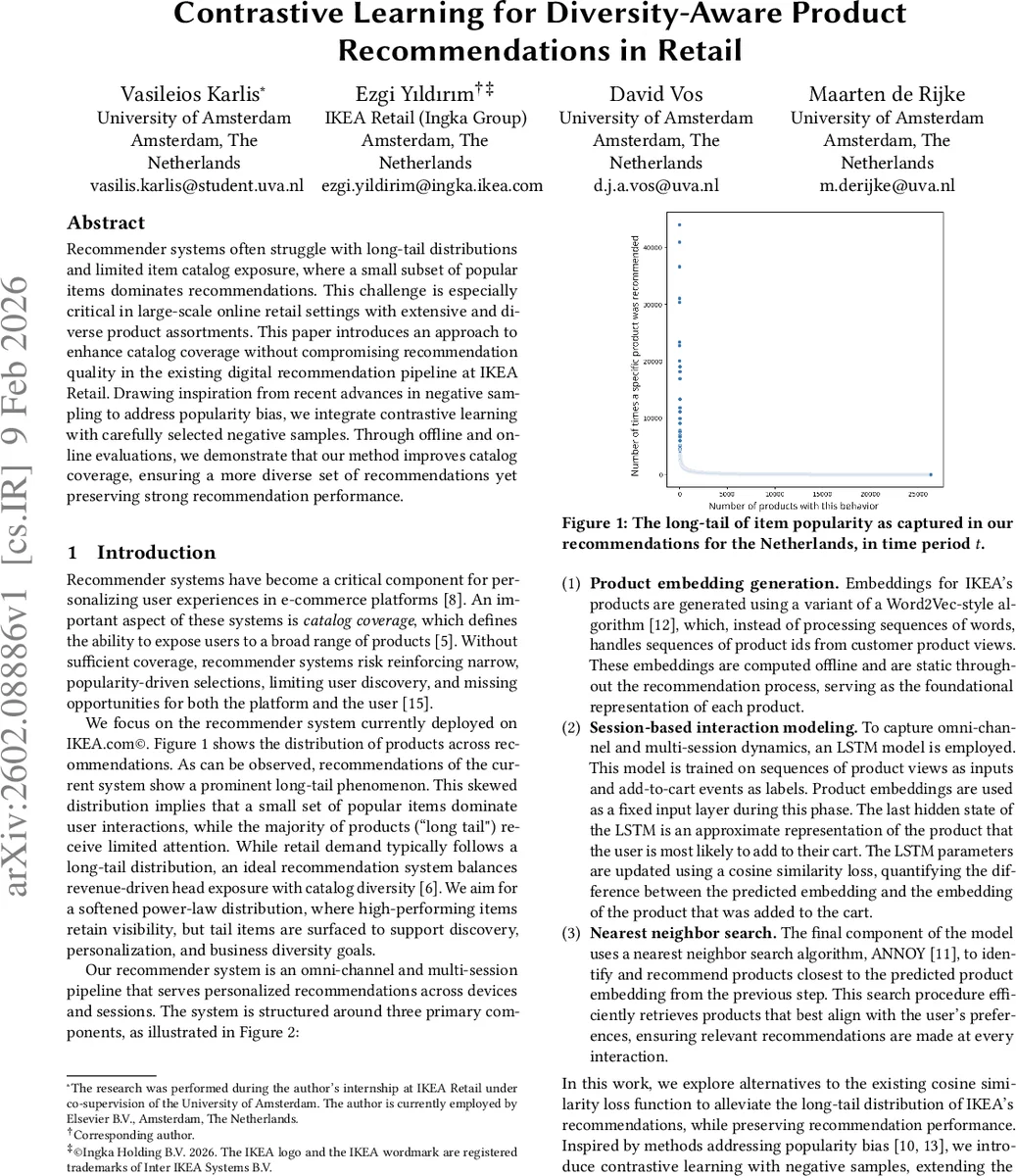

Recommender systems often struggle with long-tail distributions and limited item catalog exposure, where a small subset of popular items dominates recommendations. This challenge is especially critical in large-scale online retail settings with extensive and diverse product assortments. This paper introduces an approach to enhance catalog coverage without compromising recommendation quality in the existing digital recommendation pipeline at IKEA Retail. Drawing inspiration from recent advances in negative sampling to address popularity bias, we integrate contrastive learning with carefully selected negative samples. Through offline and online evaluations, we demonstrate that our method improves catalog coverage, ensuring a more diverse set of recommendations yet preserving strong recommendation performance.

💡 Research Summary

The paper addresses the pervasive long‑tail and popularity‑bias problem in large‑scale e‑commerce recommendation systems, using IKEA’s production‑grade pipeline as a testbed. The existing system consists of three stages: (1) static product embeddings generated by a Word2Vec‑style algorithm that treats sequences of product IDs as “sentences”, (2) a session‑based LSTM that ingests a user’s recent product‑view sequence and is trained with a cosine‑similarity loss to bring the final hidden state close to the embedding of the item actually added to the cart, and (3) a nearest‑neighbor retrieval step (ANNOY) that returns the items whose embeddings are nearest to the predicted vector. While this architecture yields solid ranking performance, its loss function only rewards similarity to the positive item and completely ignores negative information, leading to a recommendation list dominated by a small set of popular products.

To mitigate this, the authors introduce contrastive learning into the pipeline. They keep the cosine‑similarity backbone but augment it with two contrastive loss variants: a weighted loss that adds a penalty term proportional to the average cosine similarity between the prediction and a set of negative samples (controlled by hyper‑parameters α = 2 and β = 1), and a cross‑entropy loss that treats the in‑batch negatives as competing classes and applies a temperature τ = 0.05. Both losses require a set of negative samples, and the paper explores two sampling strategies. The first, “in‑batch negative sampling with a sampling cap”, treats all other positive items in the current mini‑batch as negatives but limits the number used (e.g., 5, 100) to control computational cost. The second, “adaptive top‑k in‑batch negative sampling”, first computes cosine similarities between the prediction and all in‑batch negatives, then selects the k most similar (hard) negatives (k = 5 in experiments) for the contrastive update, thereby focusing learning on the most informative mistakes.

Experiments are conducted on two datasets: a proprietary IKEA Netherlands click‑stream (product‑view sequences with add‑to‑cart labels) and the public RetailRocket dataset, both processed with the same Word2Vec‑style embedding step. Evaluation uses both accuracy‑oriented (NDCG@10) and diversity‑oriented metrics (catalog coverage and Gini coefficient). Results show consistent gains in diversity without sacrificing ranking quality. On the IKEA data, the weighted loss with 100 in‑batch negatives improves catalog coverage by 26.4 % and reduces the Gini coefficient by 6.5 % while also increasing NDCG@10 by 6.4 % compared to the baseline cosine loss. On RetailRocket, the weighted loss combined with top‑5 hard negatives yields the highest coverage (0.0476) and a low Gini (0.9849). Notably, the cross‑entropy loss with 100 in‑batch negatives also performs well, indicating that both contrastive formulations are viable.

A live A/B test on IKEA’s website validates the offline findings: the weighted loss with 100 in‑batch negatives raises catalog coverage by 2.53 % and improves a diversity metric by 9.59 % relative to the production baseline, with no degradation in click‑through or conversion. The authors discuss that the optimal loss‑function and negative‑sampling configuration depends on dataset characteristics (e.g., catalog size, sparsity) and that future work will focus on more efficient negative‑sample selection, possibly leveraging model‑based informativeness scores to further reduce training cost while preserving the diversity boost.

In summary, the paper makes four key contributions: (1) reformulating a cosine‑similarity‑based sequential recommender as a contrastive learning problem, (2) introducing weighted and cross‑entropy contrastive losses compatible with embedding‑output models, (3) adapting two recent negative‑sampling strategies (in‑batch with a cap and adaptive top‑k hard negatives) to the recommendation setting, and (4) demonstrating, both offline and online, that these modifications substantially increase catalog exposure and fairness (lower Gini) while maintaining or improving ranking accuracy. The work provides a practical roadmap for large retailers seeking to balance revenue‑driven head‑item exposure with the business need to surface long‑tail products, thereby enhancing user discovery, personalization, and overall catalog utilization.

Comments & Academic Discussion

Loading comments...

Leave a Comment