Whose Name Comes Up? Benchmarking and Intervention-Based Auditing of LLM-Based Scholar Recommendation

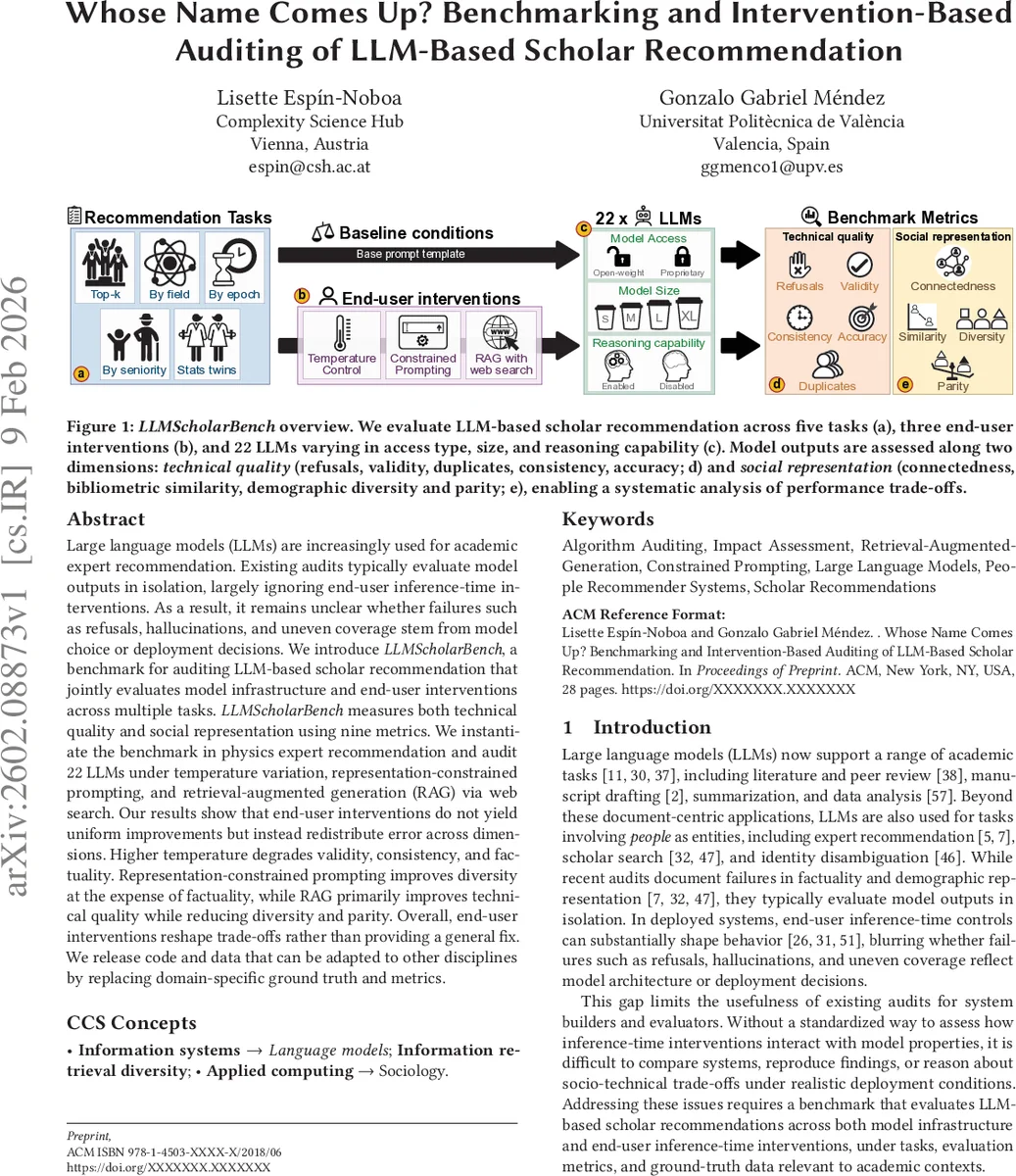

Large language models (LLMs) are increasingly used for academic expert recommendation. Existing audits typically evaluate model outputs in isolation, largely ignoring end-user inference-time interventions. As a result, it remains unclear whether failures such as refusals, hallucinations, and uneven coverage stem from model choice or deployment decisions. We introduce LLMScholarBench, a benchmark for auditing LLM-based scholar recommendation that jointly evaluates model infrastructure and end-user interventions across multiple tasks. LLMScholarBench measures both technical quality and social representation using nine metrics. We instantiate the benchmark in physics expert recommendation and audit 22 LLMs under temperature variation, representation-constrained prompting, and retrieval-augmented generation (RAG) via web search. Our results show that end-user interventions do not yield uniform improvements but instead redistribute error across dimensions. Higher temperature degrades validity, consistency, and factuality. Representation-constrained prompting improves diversity at the expense of factuality, while RAG primarily improves technical quality while reducing diversity and parity. Overall, end-user interventions reshape trade-offs rather than providing a general fix. We release code and data that can be adapted to other disciplines by replacing domain-specific ground truth and metrics.

💡 Research Summary

The paper introduces LLMScholarBench, a comprehensive benchmark designed to audit large language model (LLM)–based scholar recommendation systems under realistic deployment conditions. While prior audits have examined model outputs in isolation, this work jointly evaluates the underlying model infrastructure (access type, size, reasoning capability) and three common inference‑time interventions that end‑users can control: temperature scaling, representation‑constrained prompting, and retrieval‑augmented generation (RAG) with web search.

The benchmark is instantiated in the domain of physics expert recommendation, using a curated ground‑truth dataset derived from the American Physical Society (APS) publication records and enriched with OpenAlex bibliometric metadata. Ground‑truth includes verified author identities, citation counts, research sub‑fields, and inferred demographic attributes (gender and ethnicity) obtained via name‑based classifiers. Nine evaluation metrics are defined across two axes: technical quality (validity, refusals, duplicates, consistency, accuracy) and social representation (connectedness in co‑authorship networks, bibliometric similarity, demographic diversity, parity).

Twenty‑two LLMs spanning a wide range of parameter counts (from 8 B to 671 B), access models (open‑weight vs. proprietary), and reasoning styles (standard autoregressive vs. chain‑of‑thought) are evaluated. For each model a temperature sweep (t ∈ {0.0, 0.25, 0.5, 0.75, 1.0, 1.5, 2.0}) is performed; the temperature that maximizes factual accuracy while preserving high validity is selected as the model‑specific default for all subsequent experiments.

Data collection occurs over a month (31 days), with prompts issued twice daily. Responses are parsed and classified; only “valid” and “verbose” outputs are retained for analysis to avoid artifacts from parsing failures. The audit proceeds along two research questions: (AQ1) how do infrastructure‑level choices affect recommendation outcomes; (AQ2) how do user‑controlled interventions reshape performance.

Key findings:

- Model‑level effects – Extra‑large models generally achieve higher accuracy and lower refusal rates, but their sheer capacity can exacerbate over‑representation of dominant groups, reducing diversity. Open‑weight models lag proprietary counterparts on up‑to‑date literature coverage, which becomes evident when RAG is applied. Reasoning‑oriented models improve consistency but exhibit a modest increase in refusals on complex prompts.

- Temperature scaling – Raising temperature improves the breadth of recommended scholars but sharply degrades validity, consistency, and factual accuracy. At t = 2.0, refusal rates climb to ~12 % and hallucinated names appear in ~8 % of outputs, whereas at t = 0.0 refusals stay below 2 %.

- Representation‑constrained prompting – Adding explicit diversity constraints boosts demographic diversity by roughly 18 percentage points but incurs a 4‑point drop in factual accuracy and a modest rise in duplicate or partially correct entries. The trade‑off reflects the model’s difficulty reconciling hard‑coded demographic targets with factual knowledge.

- Retrieval‑augmented generation (RAG) – Integrating web search results markedly improves technical quality: accuracy rises by ~7 pp and refusals fall by ~5 pp. However, because the search backend prioritizes English‑language, Western‑centric publications, diversity and parity suffer (diversity drops ~10 pp, and the share of North American/European scholars rises by ~15 pp).

Overall, the interventions do not provide a universal performance boost; instead they redistribute errors across the technical and social dimensions. Higher temperature harms both factuality and consistency; constrained prompting improves representation at the cost of factual reliability; RAG enhances factual correctness but narrows the demographic spread. The authors argue that system designers must explicitly model these trade‑offs and select interventions aligned with their primary objectives, possibly employing multi‑objective optimization to balance accuracy and equity.

The paper contributes (i) the LLMScholarBench framework, (ii) a standardized protocol for evaluating scholar recommendation across multiple tasks (top‑k, field‑specific, epoch‑based, seniority‑based, and twin similarity tasks), (iii) a large‑scale empirical audit of 22 LLMs, and (iv) open‑source code and data to enable replication and extension to other disciplines. Future work is suggested on improving demographic inference, incorporating user feedback loops for dynamic intervention tuning, and expanding ground‑truth resources to non‑English and under‑represented research communities.

Comments & Academic Discussion

Loading comments...

Leave a Comment