VideoVeritas: AI-Generated Video Detection via Perception Pretext Reinforcement Learning

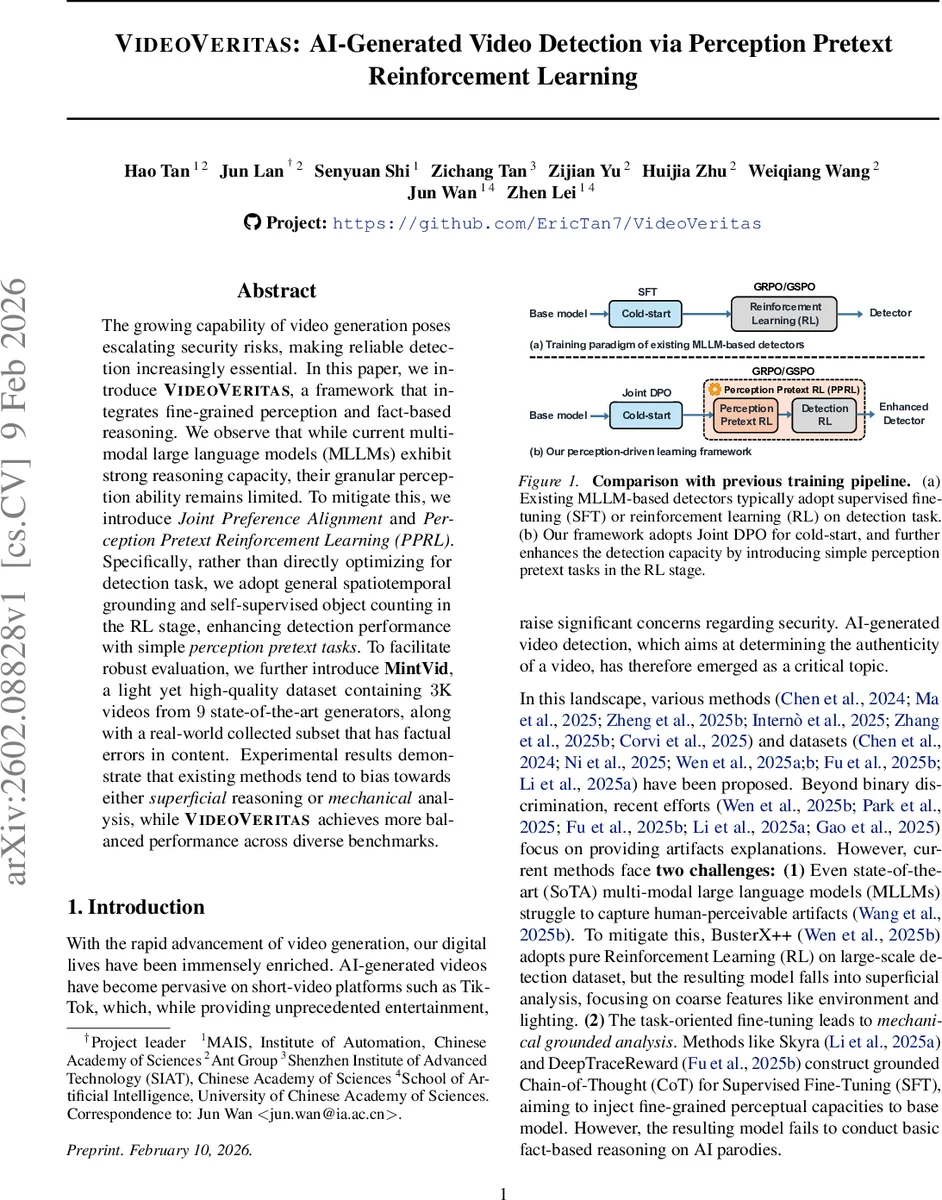

The growing capability of video generation poses escalating security risks, making reliable detection increasingly essential. In this paper, we introduce VideoVeritas, a framework that integrates fine-grained perception and fact-based reasoning. We observe that while current multi-modal large language models (MLLMs) exhibit strong reasoning capacity, their granular perception ability remains limited. To mitigate this, we introduce Joint Preference Alignment and Perception Pretext Reinforcement Learning (PPRL). Specifically, rather than directly optimizing for detection task, we adopt general spatiotemporal grounding and self-supervised object counting in the RL stage, enhancing detection performance with simple perception pretext tasks. To facilitate robust evaluation, we further introduce MintVid, a light yet high-quality dataset containing 3K videos from 9 state-of-the-art generators, along with a real-world collected subset that has factual errors in content. Experimental results demonstrate that existing methods tend to bias towards either superficial reasoning or mechanical analysis, while VideoVeritas achieves more balanced performance across diverse benchmarks.

💡 Research Summary

The paper addresses the growing security concerns posed by increasingly realistic AI‑generated videos. While recent multimodal large language models (MLLMs) such as Gemini‑3.0 and GPT‑5.1 excel at semantic understanding and logical reasoning, they still lack fine‑grained spatiotemporal perception—i.e., the ability to notice subtle artifacts that humans can see, such as object interactions, physics inconsistencies, or brief visual glitches. Moreover, existing detection pipelines either fine‑tune directly on the binary detection label (Supervised Fine‑Tuning, SFT) or apply pure Reinforcement Learning (RL) on the detection task. Both approaches tend to bias the model toward superficial cues (lighting, background) or mechanical, overly‑structured analysis, sacrificing the model’s capacity for fact‑based reasoning.

To overcome these limitations, the authors propose VideoVeritas, a two‑stage training framework that couples Joint Preference Alignment (JPA) with Perception Pretext Reinforcement Learning (PPRL).

Stage 1 – Joint Preference Alignment

For each video, a set of artifact‑oriented questions is automatically generated. Using a strong base model (Qwen‑3‑VL‑32B), the system answers these questions, producing a detailed QA report that includes artifact type, timestamps, and descriptive analysis. This process yields two benefits: (1) it provides label‑agnostic perception of specific artifacts, reducing hallucinations compared with direct prompting; (2) it supplies a reference for data sampling. From the QA reports, the authors curate 4 K high‑quality samples and construct preference pairs at two levels:

- Response‑level – “anti‑label” prompts (e.g., inserting the reversed label) trigger the base model’s intrinsic hallucinations, which are treated as non‑preferred responses. For fact‑based videos, the base model’s own reasoning is taken as the preferred response.

- Video‑level – The model is encouraged to produce fine‑grained perceptual analyses for perception‑heavy videos (vₚ) and factual reasoning for fact‑heavy videos (vᵣ).

These preferences are optimized via Direct Preference Optimization (DPO), with a sigmoid‑based loss that pushes the model toward the designated preferred output while penalizing the non‑preferred one.

Stage 2 – Perception Pretext Reinforcement Learning (PPRL)

Instead of directly rewarding detection accuracy, the RL stage uses two simple, self‑supervised perception tasks as proxies:

- General spatiotemporal grounding – given a target object, the model must output exact start/end times and per‑second bounding boxes.

- Self‑supervised object counting – the model counts shapes (circles, squares, triangles) appearing in the video.

Both tasks require precise spatiotemporal reasoning but do not need expensive human annotations. By mastering these pretext tasks, the model internalizes object‑level segmentation, tracking, and counting capabilities, which later translate into more reliable detection judgments.

MintVid Dataset

To evaluate the approach, the authors release MintVid, a lightweight yet high‑quality benchmark consisting of 3 K videos from nine state‑of‑the‑art generators:

- 1.5 K realistic T2V/TI2V videos from six proprietary models,

- 2 K deep‑fake clips generated by three public models,

- A fact‑based subset collected from short‑video platforms, containing videos that can be falsified only by factual reasoning (e.g., impossible object appearances).

MintVid is deliberately diverse, covering general content, facial manipulation, and fact‑based scenarios, and it complements existing datasets (GenVideo, GenVidBench) that are dominated by older generators with poor temporal consistency.

Experimental Findings

The authors compare VideoVeritas against a range of baselines: binary detectors (DeMamba, RestraV), an MLLM cold‑start model (Skyra), and a pure RL detector (BusterX++). Across multiple benchmarks—including the new MintVid subsets—VideoVeritas consistently achieves higher accuracy, recall, and F1 scores (average gains of 4–7 percentage points). The most striking improvement appears on the fact‑based subset, where recall jumps by over 15 pp, demonstrating the model’s balanced ability to detect both visual artifacts and factual inconsistencies.

Behavioral analysis (Figure 2) shows that after PPRL training the model’s “Component Granularity” (ability to split a scene into distinct objects) wins 76.5 % of the time over the baseline, and “Physics Depth” (recognizing physical continuity violations) improves by 68.3 %. These metrics confirm that the perception pretext tasks reshape the model’s reasoning to resemble human perceptual analysis.

Contributions

- Perception Pretext RL – a task‑agnostic RL scheme that boosts detection without heavy annotation.

- Joint Preference Alignment – a novel combination of response‑level anti‑label guidance and video‑level preference learning that leverages the base MLLM’s own knowledge.

- MintVid – a new, well‑curated dataset covering modern generators and real‑world fact‑based videos, providing a more rigorous testbed for future work.

Implications & Future Work

VideoVeritas demonstrates that separating perception from reasoning—training the model first on generic spatiotemporal grounding and counting, then aligning its outputs with human‑like preferences—effectively bridges the gap between MLLM semantic prowess and fine‑grained visual acuity. The framework is modular and could be extended with more sophisticated pretext tasks (e.g., physics simulation, multi‑agent interaction) or adapted for real‑time streaming detection where computational efficiency is critical. Overall, the paper offers a compelling roadmap for building robust AI‑generated video detectors that are both perceptually sharp and factually aware.

Comments & Academic Discussion

Loading comments...

Leave a Comment