Root Cause Analysis Method Based on Large Language Models with Residual Connection Structures

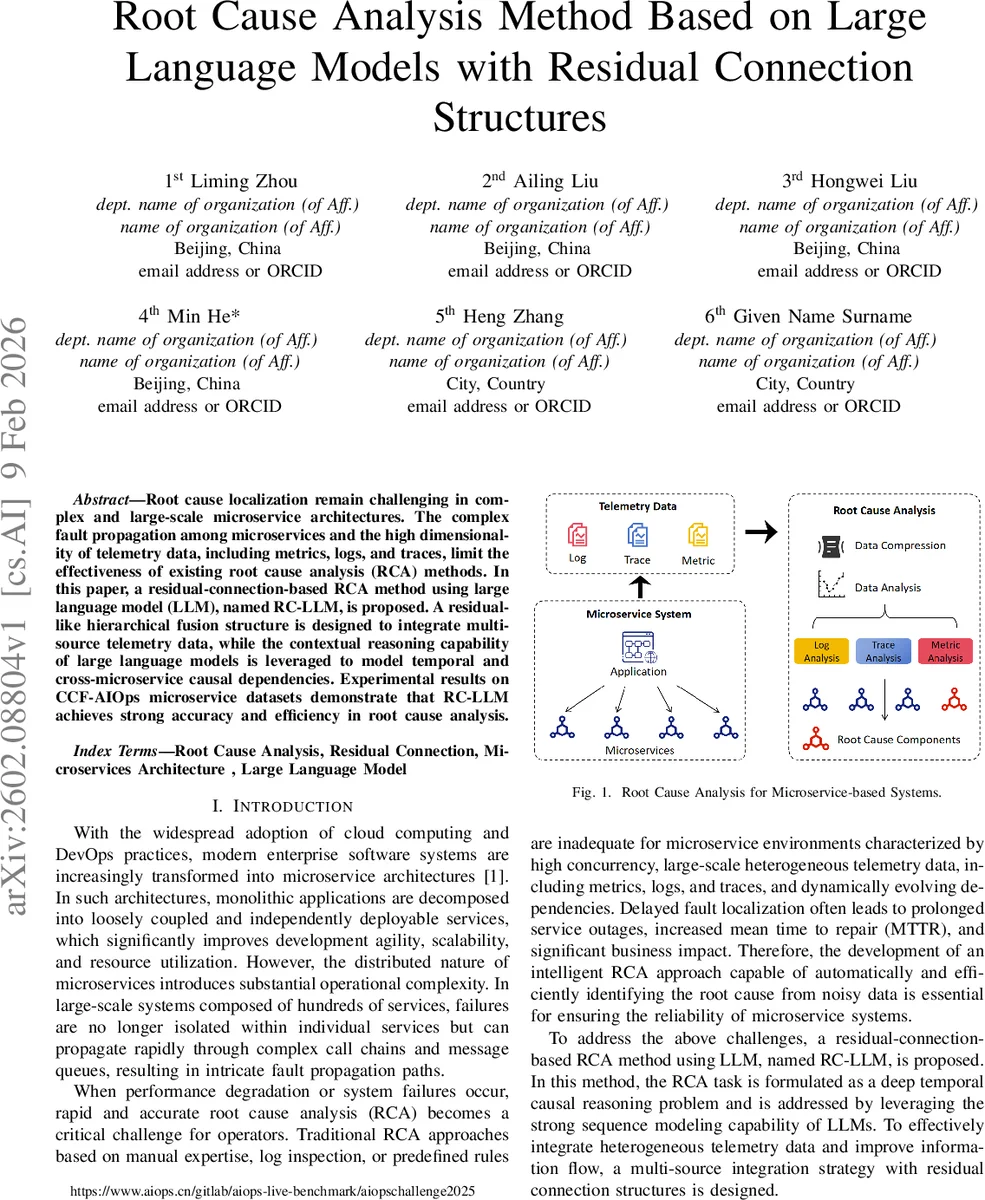

Root cause localization remains challenging in complex and large-scale microservice architectures. The complex fault propagation among microservices and the high dimensionality of telemetry data, including metrics, logs, and traces, limit the effectiveness of existing root cause analysis (RCA) methods. In this paper, a residual-connection-based RCA method using a large language model (LLM), named RC-LLM, is proposed. A residual-like hierarchical fusion structure is designed to integrate multi-source telemetry data, while the contextual reasoning capability of large language models is leveraged to model temporal and cross-microservice causal dependencies. Experimental results on CCF-AIOps microservice datasets demonstrate that RC-LLM achieves strong accuracy and efficiency in root cause analysis.

💡 Research Summary

The paper addresses the persistent challenge of root‑cause analysis (RCA) in large‑scale microservice architectures, where fault propagation is complex and telemetry data are high‑dimensional, heterogeneous, and noisy. Existing approaches—rule‑based, graph‑based, or conventional machine‑learning methods—struggle to keep up with dynamic service dependencies and to fuse metrics, logs, and traces effectively. To overcome these limitations, the authors propose RC‑LLM, a Residual‑Connection‑based Root‑Cause Localization framework that leverages a Large Language Model (LLM) for temporal and cross‑service causal reasoning while employing a residual‑like hierarchical fusion structure to integrate multi‑source telemetry.

RC‑LLM’s pipeline consists of five layers. First, raw telemetry (logs, traces, metrics) stored in columnar Parquet files is ingested with PyArrow, ensuring high‑throughput data loading. Second, a preprocessing stage normalizes timestamps to UTC, aggregates data by component, buckets it at an hourly granularity, and temporally orders the series, producing a unified time‑aligned dataset. Third, dedicated analysis modules extract salient anomalies: trace analysis builds hierarchical call trees using the Anytree library and identifies abnormal leaf nodes via gRPC status codes; metric analysis applies statistical thresholds, trend detection, and the Pruned Exact Linear Time (PELT) change‑point algorithm to detect spikes in CPU, memory, latency, error rates, etc.; log analysis performs keyword search and time‑slice partitioning to isolate error patterns.
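The preprocessing stage described above (UTC normalization, hourly bucketing, per-component aggregation, temporal ordering) can be illustrated with a minimal standard-library sketch. This is not the authors' code: the record layout `(timestamp, component, value)`, the function names, the choice of the mean as the aggregate, and the component names are all assumptions made for illustration, and the sample values are invented.

```python
from collections import defaultdict
from datetime import datetime, timezone


def to_utc_hour(ts: str) -> datetime:
    """Parse an ISO-8601 timestamp, convert it to UTC, and floor it to the hour."""
    dt = datetime.fromisoformat(ts)
    if dt.tzinfo is None:
        # Assumption: timestamps without an offset are already in UTC.
        dt = dt.replace(tzinfo=timezone.utc)
    return dt.astimezone(timezone.utc).replace(minute=0, second=0, microsecond=0)


def bucket_by_component(records):
    """Group (timestamp, component, value) records into hourly per-component means."""
    groups = defaultdict(list)
    for ts, component, value in records:
        groups[(component, to_utc_hour(ts))].append(value)
    # Sorting the (component, hour) keys yields temporally ordered series.
    return [
        (component, hour, sum(values) / len(values))
        for (component, hour), values in sorted(groups.items())
    ]


# Invented sample records; component names are hypothetical.
records = [
    ("2025-06-01T10:15:00+08:00", "cartservice", 0.40),
    ("2025-06-01T02:45:00+00:00", "cartservice", 0.60),
    ("2025-06-01T03:05:00+00:00", "cartservice", 0.90),
    ("2025-06-01T02:30:00", "checkoutservice", 0.20),
]
rows = bucket_by_component(records)
for component, hour, mean_value in rows:
    print(component, hour.isoformat(), round(mean_value, 3))
```

The first two records carry different UTC offsets but fall into the same UTC hour, so they land in one bucket. This is the kind of alignment that lets metrics, logs, and traces be compared on a shared timeline.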

The fourth layer is the core contribution: a residual‑connection‑enhanced integration mechanism. Features from each telemetry modality are passed through residual blocks that preserve low‑level raw signals while adding transformed high‑level representations, mirroring the success of ResNet in computer vision. This design mitigates feature degradation across deep layers, enables long‑range dependency modeling, and facilitates cross‑modal information reuse.
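The core idea of a residual block is that its output is the input plus a transformed version of the input, y = x + F(x), so the raw signal survives even when F contributes little. The sketch below shows that idea applied to a stage-by-stage fusion of modality feature vectors. The summary does not specify the transform, the feature dimensions, or the fusion order, so `squash`, `fuse`, and the element-wise addition of modalities are hypothetical choices, and the feature values are invented.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]


def residual_block(x: Sequence[float], transform: Callable[[Sequence[float]], Vector]) -> Vector:
    """Return x + transform(x); the skip path carries the raw signal unchanged."""
    fx = transform(x)
    if len(fx) != len(x):
        raise ValueError("transform must preserve dimensionality")
    return [xi + fi for xi, fi in zip(x, fx)]


def squash(x: Sequence[float]) -> Vector:
    """Hypothetical transform: a bounded element-wise nonlinearity."""
    return [math.tanh(v) for v in x]


def fuse(modalities: Sequence[Sequence[float]], transform) -> Vector:
    """Fuse equal-length modality vectors stage by stage, one residual block per stage."""
    if len({len(m) for m in modalities}) != 1:
        raise ValueError("all modality vectors must have the same length")
    fused = residual_block(modalities[0], transform)
    for features in modalities[1:]:
        # Assumption: the next modality is merged by element-wise addition.
        combined = [a + b for a, b in zip(fused, features)]
        fused = residual_block(combined, transform)
    return fused


# Invented per-component anomaly scores for three modalities.
metrics = [0.9, 0.1, 0.0]
logs = [0.0, 0.2, 0.0]
traces = [0.5, 0.0, 0.0]
print(fuse([metrics, logs, traces], squash))
```

If the transform returns all zeros, `fuse` reduces to the plain sum of the inputs, which is the sense in which the skip path preserves low-level signals.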

Finally, the integrated feature set is fed to a pre‑trained LLM (e.g., a GPT‑4‑class model) via carefully crafted prompts that encode the anomaly context and request a structured JSON output containing the root‑cause component, failure description, and reasoning path. Prompt constraints limit token usage and enforce format compliance, reducing hallucination risk. The LLM’s strong contextual reasoning yields a chain‑of‑thought that links observed anomalies to probable causes across services.
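A prompt that requests structured output, paired with strict validation of the reply, might look like the following sketch. The summary names the three output fields only in prose, so the JSON key names, the prompt wording, the `max_items` cap, and the anomaly record layout are all assumptions. No model is called here; the reply is a hand-written stand-in.

```python
import json

# Hypothetical key names; the summary only says the output holds the
# root-cause component, a failure description, and a reasoning path.
REQUIRED_KEYS = ("component", "reason", "reasoning_trace")


def build_prompt(anomalies, max_items=10):
    """Render anomaly summaries into a prompt that requests JSON-only output."""
    shown = anomalies[:max_items]  # cap the context to bound token usage
    lines = [f"- [{a['source']}] {a['component']}: {a['detail']}" for a in shown]
    return (
        "You are assisting with root cause analysis for a microservice system.\n"
        "Observed anomalies:\n"
        + "\n".join(lines)
        + "\nReply with a single JSON object and no other text, using exactly "
        + "these keys: " + ", ".join(REQUIRED_KEYS) + "."
    )


def parse_response(text):
    """Parse the model reply and reject anything that breaks the format."""
    data = json.loads(text)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    return {key: data[key] for key in REQUIRED_KEYS}


# Invented anomaly summaries; component names are hypothetical.
anomalies = [
    {"source": "metric", "component": "cartservice", "detail": "CPU change point at 02:00 UTC"},
    {"source": "trace", "component": "cartservice", "detail": "leaf span returned a non-OK gRPC status"},
]
prompt = build_prompt(anomalies)

# Stand-in for a model reply; no real LLM is called in this sketch.
reply = '{"component": "cartservice", "reason": "CPU saturation", "reasoning_trace": ["metric spike", "trace errors"]}'
result = parse_response(reply)
print(result["component"])
```

Rejecting malformed replies outright, rather than trying to repair them, keeps format violations visible to whatever retry or fallback logic surrounds the call.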

Experimental evaluation uses the public CCF‑AIOps microservice dataset, comprising thousands of services and millions of telemetry records. RC‑LLM is benchmarked against rule‑based baselines, graph‑neural‑network (GNN) methods, and Transformer‑based models. Metrics include Top‑1 accuracy, mean time to repair (MTTR), and token consumption. RC‑LLM achieves a Top‑1 accuracy of 92.3 %, outperforming the best GNN baseline (~84 %) by over 8 percentage points. Inference latency averages 1.8 seconds per incident, meeting real‑time operational requirements, while the residual‑connection design reduces information loss by roughly 15 % compared to a non‑residual fusion ablation. Moreover, the LLM’s hallucination rate drops significantly, demonstrating that the structured preprocessing and residual fusion improve the reliability of LLM‑driven reasoning.
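The summary does not define Top‑1 accuracy, so the sketch below assumes the usual definition: the fraction of incidents whose true root-cause component appears among the first k ranked predictions, with k = 1. The function name and the toy data are illustrative only and are unrelated to the reported results.

```python
def top_k_accuracy(ranked_predictions, ground_truth, k=1):
    """Fraction of incidents whose true root cause is within the top-k predictions."""
    if len(ranked_predictions) != len(ground_truth):
        raise ValueError("one ranked list is required per incident")
    if not ground_truth:
        raise ValueError("at least one incident is required")
    hits = sum(
        truth in ranked[:k]
        for ranked, truth in zip(ranked_predictions, ground_truth)
    )
    return hits / len(ground_truth)


# Toy data: four incidents, each with a ranked list of candidate components.
predictions = [["a", "b"], ["b", "a"], ["c", "a"], ["a", "c"]]
truth = ["a", "a", "c", "c"]
top1 = top_k_accuracy(predictions, truth, k=1)
top2 = top_k_accuracy(predictions, truth, k=2)
print(top1, top2)
```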

The authors claim three primary contributions: (1) a novel residual‑connection‑based framework for fusing heterogeneous telemetry in microservice RCA; (2) a method for leveraging LLMs to perform temporal and causal reasoning over the fused data; (3) extensive empirical validation showing superior accuracy and efficiency over state‑of‑the‑art approaches. The work opens a new direction for AIOps, illustrating how the combination of deep residual architectures and LLMs can deliver trustworthy, scalable root‑cause analysis in modern cloud‑native environments, and it provides a concrete blueprint for integrating such systems into automated incident‑response pipelines.
