LakeHopper: Cross Data Lakes Column Type Annotation through Model Adaptation

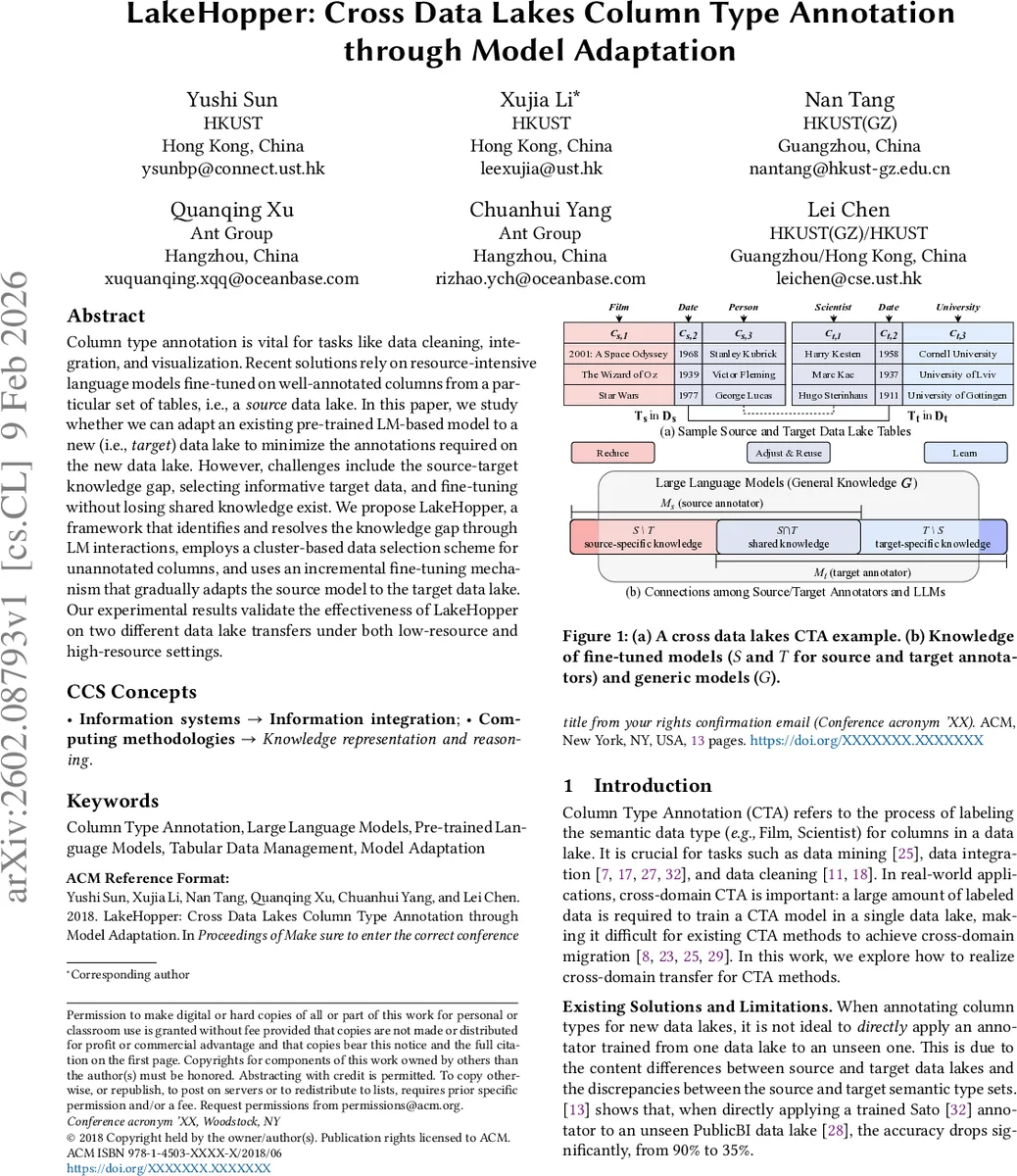

Column type annotation is vital for tasks like data cleaning, integration, and visualization. Recent solutions rely on resource-intensive language models fine-tuned on well-annotated columns from a particular set of tables, i.e., a source data lake. In this paper, we study whether we can adapt an existing pre-trained LM-based model to a new (i.e., target) data lake to minimize the annotations required on the new data lake. However, challenges include the source-target knowledge gap, selecting informative target data, and fine-tuning without losing shared knowledge exist. We propose LakeHopper, a framework that identifies and resolves the knowledge gap through LM interactions, employs a cluster-based data selection scheme for unannotated columns, and uses an incremental fine-tuning mechanism that gradually adapts the source model to the target data lake. Our experimental results validate the effectiveness of LakeHopper on two different data lake transfers under both low-resource and high-resource settings.

💡 Research Summary

**

LakeHopper addresses the practical problem of column‑type annotation across heterogeneous data lakes, a task essential for data cleaning, integration, and visualization. Existing solutions typically fine‑tune large language models (LLMs) on richly annotated columns from a single source lake, incurring high labeling costs and suffering from poor transferability to new domains. LakeHopper proposes a systematic framework that enables a pre‑trained LM‑based annotator to adapt to a target data lake with minimal additional annotations.

The framework tackles three core challenges: (1) the knowledge gap between source and target label sets, (2) selecting informative target columns for annotation, and (3) fine‑tuning without catastrophic forgetting of shared knowledge. To bridge the source‑target gap, LakeHopper first identifies the overlap (S∩T), source‑only (S\T), and target‑only (T\S) label sets. It then performs Label Set Difference Adjustment, re‑configuring the output layer of the source model to learn a mapping between the two label vocabularies, thereby handling fine‑grained differences such as “Scientist” versus the coarser “Person”.

Next, Weak Sample Selection clusters all target columns using K‑means on their embeddings. From each cluster, a small number of representative columns (Nₜ) are chosen based on cluster centroids and entropy‑based uncertainty, ensuring that the selected subset is both diverse and information‑rich. These columns constitute the initial fine‑tuning data for the target annotator.

LakeHopper then employs Incremental Fine‑tuning: the first round trains a target model Mₜ,0 on the selected samples. Subsequent rounds identify columns where the current target model’s predictions diverge significantly from the source model (using a gap‑hopping metric Φ(v)). These high‑gap columns are added to the training set, and only target‑specific parameters are updated while the shared parameters remain frozen, thus preserving the general language knowledge learned from the source lake.

Experiments were conducted on two distinct lake‑transfer scenarios: (1) a movie/person metadata lake to a scientific/university metadata lake, and (2) a generic corporate lake to a financial lake. Both low‑resource (≤1 % labeled columns) and high‑resource (≥10 % labeled columns) regimes were evaluated. Compared with out‑of‑domain PLM‑based, LLM‑based, and LLM‑plus baselines, LakeHopper achieved 8–15 % higher F1 scores across all settings. Notably, in zero‑shot conditions where no target labels were provided, the system still attained over 70 % accuracy on the overlapping label set, demonstrating strong generalization. Moreover, performance on the source lake remained virtually unchanged after adaptation, confirming effective mitigation of catastrophic forgetting.

Key insights include: (i) explicit modeling of label set differences substantially reduces semantic mismatch; (ii) cluster‑based sample selection dramatically cuts annotation cost while preserving type diversity; (iii) incremental fine‑tuning with parameter freezing offers a stable path to acquire target‑specific knowledge without erasing shared representations.

The paper acknowledges limitations such as handling multi‑label or composite types, dependence on the quality of LM‑generated queries, and the need for evaluation on streaming data. Future work will explore richer type hierarchies, more robust prompting strategies, and online adaptation mechanisms. Overall, LakeHopper presents a practical, cost‑effective solution for cross‑lake column type annotation, advancing the applicability of LLMs in large‑scale data management.

Comments & Academic Discussion

Loading comments...

Leave a Comment