GaussianCaR: Gaussian Splatting for Efficient Camera-Radar Fusion

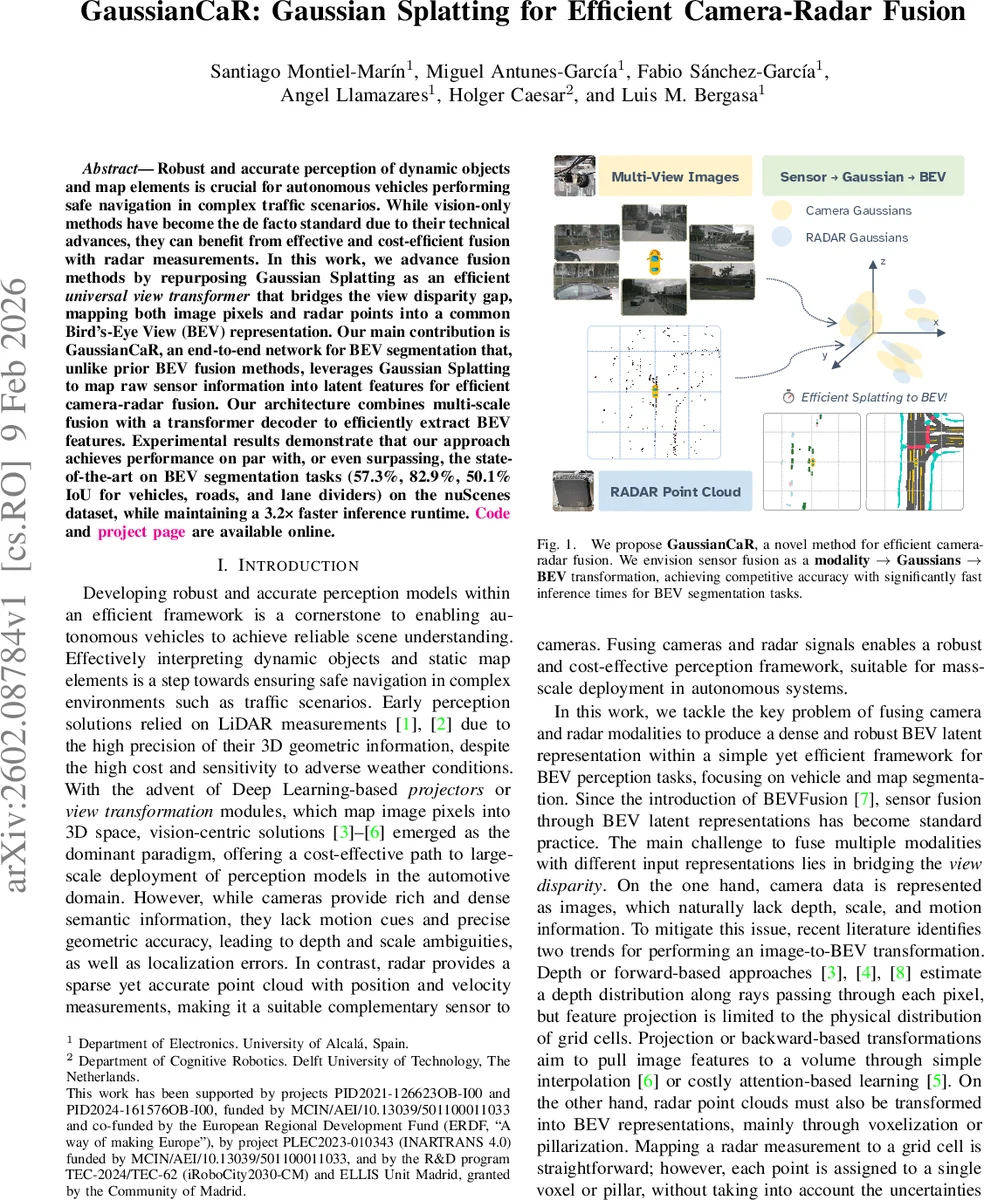

Robust and accurate perception of dynamic objects and map elements is crucial for autonomous vehicles performing safe navigation in complex traffic scenarios. While vision-only methods have become the de facto standard due to their technical advances, they can benefit from effective and cost-efficient fusion with radar measurements. In this work, we advance fusion methods by repurposing Gaussian Splatting as an efficient universal view transformer that bridges the view disparity gap, mapping both image pixels and radar points into a common Bird’s-Eye View (BEV) representation. Our main contribution is GaussianCaR, an end-to-end network for BEV segmentation that, unlike prior BEV fusion methods, leverages Gaussian Splatting to map raw sensor information into latent features for efficient camera-radar fusion. Our architecture combines multi-scale fusion with a transformer decoder to efficiently extract BEV features. Experimental results demonstrate that our approach achieves performance on par with, or even surpassing, the state of the art on BEV segmentation tasks (57.3%, 82.9%, and 50.1% IoU for vehicles, roads, and lane dividers) on the nuScenes dataset, while maintaining a 3.2x faster inference runtime. Code and project page are available online.

💡 Research Summary

The paper introduces GaussianCaR, a novel framework for camera‑radar fusion that repurposes Gaussian Splatting as a universal view‑transformer to bridge the gap between perspective images and sparse radar point clouds. The core idea is to convert each sensor modality into a set of learnable 3‑D Gaussians—Pixels‑to‑Gaussians for multi‑camera images and Points‑to‑Gaussians for radar data—thereby providing a common representation that naturally encodes spatial uncertainty.

For the camera branch, multi‑view images are processed by an EfficientViT‑L2 backbone to obtain low‑resolution feature maps. Convolutional heads predict per‑pixel Gaussian parameters: a coarse depth distribution over discretized bins, refined by an offset head that yields continuous 3‑D positions. The covariance derived from the depth distribution determines each Gaussian’s size and orientation, while an opacity scalar controls its contribution during rendering.
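The depth-to-Gaussian step above can be illustrated with a minimal sketch. It assumes a categorical depth distribution over fixed bin centers, a pinhole camera model, and a variance along the viewing ray taken from the distribution. The function names, intrinsics, and bin values are illustrative assumptions, not the authors' implementation.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def depth_moments(logits, bin_centers):
    """Mean and variance of a categorical depth distribution over discretized bins."""
    probs = softmax(logits)
    mean = sum(p * d for p, d in zip(probs, bin_centers))
    var = sum(p * (d - mean) ** 2 for p, d in zip(probs, bin_centers))
    return mean, var

def unproject(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) at the given depth into the camera frame (pinhole model)."""
    return ((u - cx) / fx * depth, (v - cy) / fy * depth, depth)

def apply_offset(center, offset):
    """Refine the coarse 3-D position with a predicted continuous offset."""
    return tuple(c + o for c, o in zip(center, offset))

# Hypothetical values for one pixel: four depth bins (metres) and their logits.
bin_centers = [5.0, 10.0, 15.0, 20.0]
logits = [0.0, 2.0, 2.0, 0.0]
mean, var = depth_moments(logits, bin_centers)
center = unproject(u=400.0, v=300.0, depth=mean, fx=800.0, fy=800.0, cx=400.0, cy=300.0)
refined = apply_offset(center, (0.1, -0.2, 0.3))
```

A wide depth distribution yields a large `var`, which in this sketch would stretch the Gaussian along the camera ray. A sharply peaked distribution yields a compact one.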

The radar branch employs a lightweight Point Transformer v3 (PTv3) to capture local and global context in the point cloud. After encoding, MLP heads predict Gaussian attributes for each radar point, using the original point coordinates as a base and learning only a metric offset. Covariance is directly regressed as six independent components, with a softplus activation ensuring positive eigenvalues.
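The summary does not spell out how the six regressed numbers become a valid covariance. Applying softplus element-wise to the six entries of a symmetric matrix would not by itself guarantee positive eigenvalues. One common construction, shown here as an assumption rather than the paper's confirmed method, treats the six numbers as a lower-triangular Cholesky factor whose diagonal is passed through softplus.

```python
import math

def softplus(x):
    """Numerically stable softplus: log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def covariance_from_six(raw, eps=1e-6):
    """Build a symmetric positive-definite 3x3 matrix from six unconstrained numbers.

    raw = (d0, d1, d2, l10, l20, l21): three diagonal and three off-diagonal
    entries of a lower-triangular factor L (hypothetical ordering).
    """
    d0, d1, d2, l10, l20, l21 = raw
    L = [
        [softplus(d0) + eps, 0.0, 0.0],
        [l10, softplus(d1) + eps, 0.0],
        [l20, l21, softplus(d2) + eps],
    ]
    # Sigma = L @ L^T is positive definite because L has a strictly positive diagonal.
    return [[sum(L[i][k] * L[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)]

sigma = covariance_from_six((0.0, -1.0, 2.0, 0.5, -0.3, 1.2))
```

The Gaussian mean in this branch would then be the measured radar point plus the learned metric offset, as described above.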

Both sets of Gaussians are rasterized into a Bird’s‑Eye‑View (BEV) plane via differentiable orthographic projection. The rasterization is fully differentiable, allowing gradients to flow back to the original sensor encoders. This step yields dense BEV feature maps that retain the uncertainty information encoded in the Gaussian parameters.
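A toy version of the top-down rasterization is sketched below. It is deliberately simplified: it accumulates contributions additively instead of using the tile-based alpha compositing of real Gaussian Splatting rasterizers, and it uses a scalar feature per Gaussian rather than a feature vector. The dictionary keys and grid parameters are hypothetical.

```python
import math

def splat_to_bev(gaussians, x_range, y_range, resolution):
    """Additively splat Gaussians onto a BEV grid (rows index y, columns index x).

    Each Gaussian is a dict with 'mean' (x, y, z), 'cov_xy' as a 2x2 nested
    tuple, 'opacity', and a scalar 'feature'.
    """
    x_min, x_max = x_range
    y_min, y_max = y_range
    nx = int(round((x_max - x_min) / resolution))
    ny = int(round((y_max - y_min) / resolution))
    grid = [[0.0] * nx for _ in range(ny)]
    for g in gaussians:
        mx, my, _ = g["mean"]  # orthographic top-down view: the height is dropped
        (sxx, sxy), (_, syy) = g["cov_xy"]
        det = sxx * syy - sxy * sxy
        ixx, ixy, iyy = syy / det, -sxy / det, sxx / det  # inverse of the 2x2 covariance
        for row in range(ny):
            cy = y_min + (row + 0.5) * resolution  # cell-center coordinates
            for col in range(nx):
                cx = x_min + (col + 0.5) * resolution
                dx, dy = cx - mx, cy - my
                maha = dx * dx * ixx + 2.0 * dx * dy * ixy + dy * dy * iyy
                grid[row][col] += g["opacity"] * g["feature"] * math.exp(-0.5 * maha)
    return grid

gaussians = [{"mean": (0.5, 0.5, 1.0), "cov_xy": ((1.0, 0.0), (0.0, 1.0)),
              "opacity": 0.8, "feature": 2.0}]
grid = splat_to_bev(gaussians, x_range=(0.0, 4.0), y_range=(0.0, 4.0), resolution=1.0)
```

Every operation here is a smooth function of the mean, covariance, opacity, and feature, which is why gradients can reach the encoders when the same computation is written in an autodiff framework.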

Fusion is performed in BEV space using a CMX‑based multi‑scale cross‑modal attention module, which aligns and merges camera and radar features while preserving their respective scales. The fused BEV representation is then decoded by a Dense Prediction Transformer (DPT) decoder that progressively upsamples and refines the features, producing pixel‑wise segmentation maps for three classes: vehicles, drivable road, and lane dividers.

Training is end‑to‑end with a combination of losses: depth classification, offset regression, opacity and feature supervision, and a standard multi‑class cross‑entropy for the final BEV segmentation. An additional regularizer penalizes overly large covariance values to keep uncertainty estimates realistic.
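How such terms might combine into a single objective is sketched below. The loss weights, the hinge form of the covariance penalty, and the `max_var` threshold are all hypothetical, since the summary gives neither the weights nor the regularizer's exact form.

```python
import math

def cross_entropy(logits, target_index):
    """Softmax cross-entropy for one BEV cell, given class logits and the true class index."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target_index]

def covariance_penalty(variances, max_var=4.0):
    """Hinge penalty on variances above max_var (hypothetical form of the regularizer)."""
    return sum(max(0.0, v - max_var) for v in variances)

def total_loss(terms, weights):
    """Weighted sum of named loss terms."""
    return sum(weights[name] * value for name, value in terms.items())

terms = {
    "segmentation": cross_entropy([0.0, 0.0, 0.0], target_index=1),
    "covariance": covariance_penalty([1.0, 5.0, 6.5]),
}
weights = {"segmentation": 1.0, "covariance": 0.1}  # illustrative weights only
loss = total_loss(terms, weights)
```

The depth, offset, opacity, and feature terms would enter `terms` the same way, each with its own weight.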

Experiments on the nuScenes dataset demonstrate that GaussianCaR achieves IoU scores of 57.3 % (vehicles), 82.9 % (roads), and 50.1 % (lane dividers), matching or surpassing current state‑of‑the‑art BEV fusion methods. Inference is also 3.2× faster than those methods, enabling real‑time operation, and memory consumption is reduced thanks to the compact Gaussian representation (128‑dimensional feature vectors) and efficient rasterization. Ablation studies confirm that both the Gaussian‑based view transformation and the explicit modeling of radar uncertainty contribute significantly to performance, especially under adverse weather or low‑visibility conditions.
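For reference, the intersection-over-union (IoU) metric behind these scores can be computed per class on binary BEV masks. The sketch below uses small made-up masks and is not tied to the nuScenes evaluation code.

```python
def iou(pred, target):
    """IoU between two binary BEV masks given as equally sized nested lists of 0/1."""
    intersection = 0
    union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            intersection += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # IoU is undefined when neither mask contains the class.
    return intersection / union if union else float("nan")

pred = [[1, 1, 0],
        [0, 1, 0]]
target = [[1, 0, 0],
          [0, 1, 1]]
score = iou(pred, target)  # 2 overlapping cells out of 4 occupied cells
```

Here two cells are marked in both masks and four cells are marked in at least one, giving an IoU of 0.5.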

The authors claim three main contributions: (1) redefining view transformation with Gaussian Splatting to create a unified sensor‑agnostic pipeline, (2) introducing modality‑specific encoders that lift raw data into a sparse 3‑D Gaussian space while preserving uncertainty, and (3) delivering a fast, memory‑efficient BEV segmentation system suitable for large‑scale deployment. Limitations include the potential growth of Gaussian count with higher image resolutions and the current focus on static BEV segmentation rather than temporal tracking.

In summary, GaussianCaR showcases how a differentiable, uncertainty‑aware Gaussian representation can serve as a powerful bridge between dense visual data and sparse radar measurements, achieving state‑of‑the‑art accuracy with a substantial speedup. This work opens avenues for extending Gaussian‑based fusion to dynamic object tracking, multi‑modal SLAM, and integration with additional sensors such as LiDAR, promising a versatile foundation for next‑generation autonomous perception systems.
