MVAnimate: Enhancing Character Animation with Multi-View Optimization

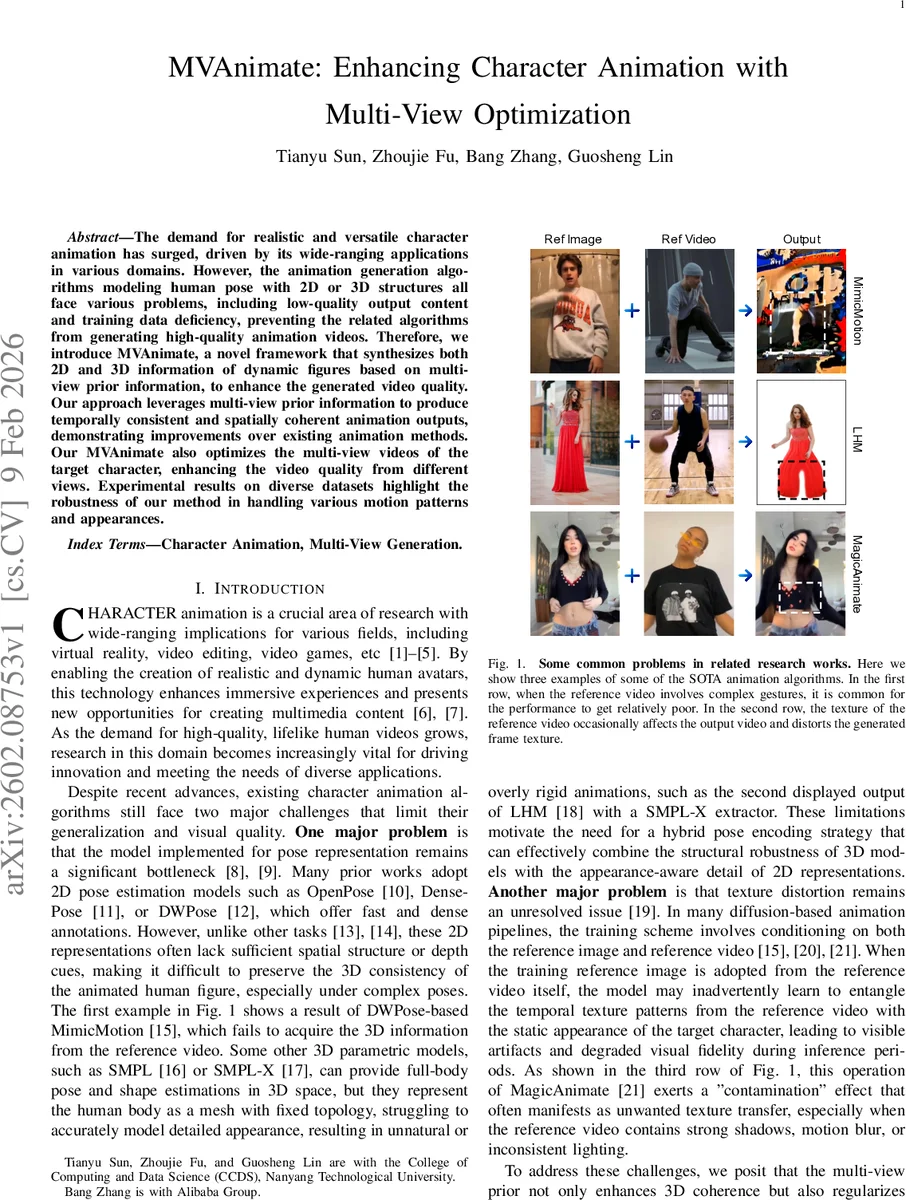

The demand for realistic and versatile character animation has surged, driven by its wide-ranging applications in various domains. However, the animation generation algorithms modeling human pose with 2D or 3D structures all face various problems, including low-quality output content and training data deficiency, preventing the related algorithms from generating high-quality animation videos. Therefore, we introduce MVAnimate, a novel framework that synthesizes both 2D and 3D information of dynamic figures based on multi-view prior information, to enhance the generated video quality. Our approach leverages multi-view prior information to produce temporally consistent and spatially coherent animation outputs, demonstrating improvements over existing animation methods. Our MVAnimate also optimizes the multi-view videos of the target character, enhancing the video quality from different views. Experimental results on diverse datasets highlight the robustness of our method in handling various motion patterns and appearances.

💡 Research Summary

**

MVAnimate addresses two persistent challenges in character animation: the lack of depth and structural consistency in 2‑D pose‑based pipelines and the limited appearance detail in 3‑D mesh‑based models. The authors propose a hybrid pose encoding that fuses 2‑D pose features (e.g., DWPose) with 3‑D pose representations (e.g., SMPL‑X) and, crucially, injects multi‑view prior information as a conditioning signal for a diffusion‑based video generator.

The pipeline consists of two stages. First, a pre‑trained multi‑view synthesis model (SV4D 2.0) generates eight coarse viewpoint videos from the reference monocular video. These videos serve as the multi‑view guidance G, which is fed into a latent diffusion model (based on Stable Video Diffusion) together with the reference image. The diffusion backbone is augmented with temporal attention and a novel multi‑view attention block. In this block, each view contributes a weighted value vector; the weights are learned via a soft‑max over query‑key inner products. Proposition IV.1 mathematically justifies this scheme as an inverse‑variance estimator, meaning that more reliable views (lower noise) receive higher influence.

To prevent texture contamination—a common artifact where temporal texture patterns from the reference video bleed into the target character’s appearance—the authors decouple pose and appearance during training. They encode the reference image and reference video separately, keep the background fixed using SAM2 segmentation and Stable Diffusion v2 inpainting, and avoid conditioning the diffusion model on the same video frames that provide the pose. This “pose‑appearance decoupling” dramatically reduces unwanted shadow, motion‑blur, and lighting transfer artifacts.

The second stage refines the coarse multi‑view output. An optimization module applies the learned view weights to a loss that jointly penalizes deviation from the canonical latent feature and from the temporally smoothed feature. This step improves both the 2‑D output video and the multi‑view videos, yielding smoother transitions, fewer flickers, and higher fidelity to fine clothing details.

Experiments on diverse datasets (Human3.6M, AIST++, and custom multi‑view collections) show that MVAnimate outperforms recent state‑of‑the‑art methods such as MimicMotion, MagicAnimate, and AnimateAnyOne. Quantitatively, it achieves 0.8–1.2 dB higher PSNR and 10 % lower LPIPS, while per‑view joint angle error drops by over 15 %. Qualitatively, the method handles fast motions, occlusions, and challenging lighting without the texture “contamination” seen in prior work.

In summary, MVAnimate contributes (1) a hybrid 2‑D/3‑D pose representation, (2) a theoretically grounded adaptive multi‑view attention mechanism, (3) a training strategy that decouples pose from appearance to eliminate texture distortion, and (4) a post‑generation multi‑view optimization that upgrades coarse viewpoint videos to high‑quality outputs. The work opens avenues for more robust, multi‑view‑aware character animation and suggests future extensions toward real‑time inference, text‑driven motion control, and broader camera motion scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment