PARD: Enhancing Goodput for Inference Pipeline via Proactive Request Dropping

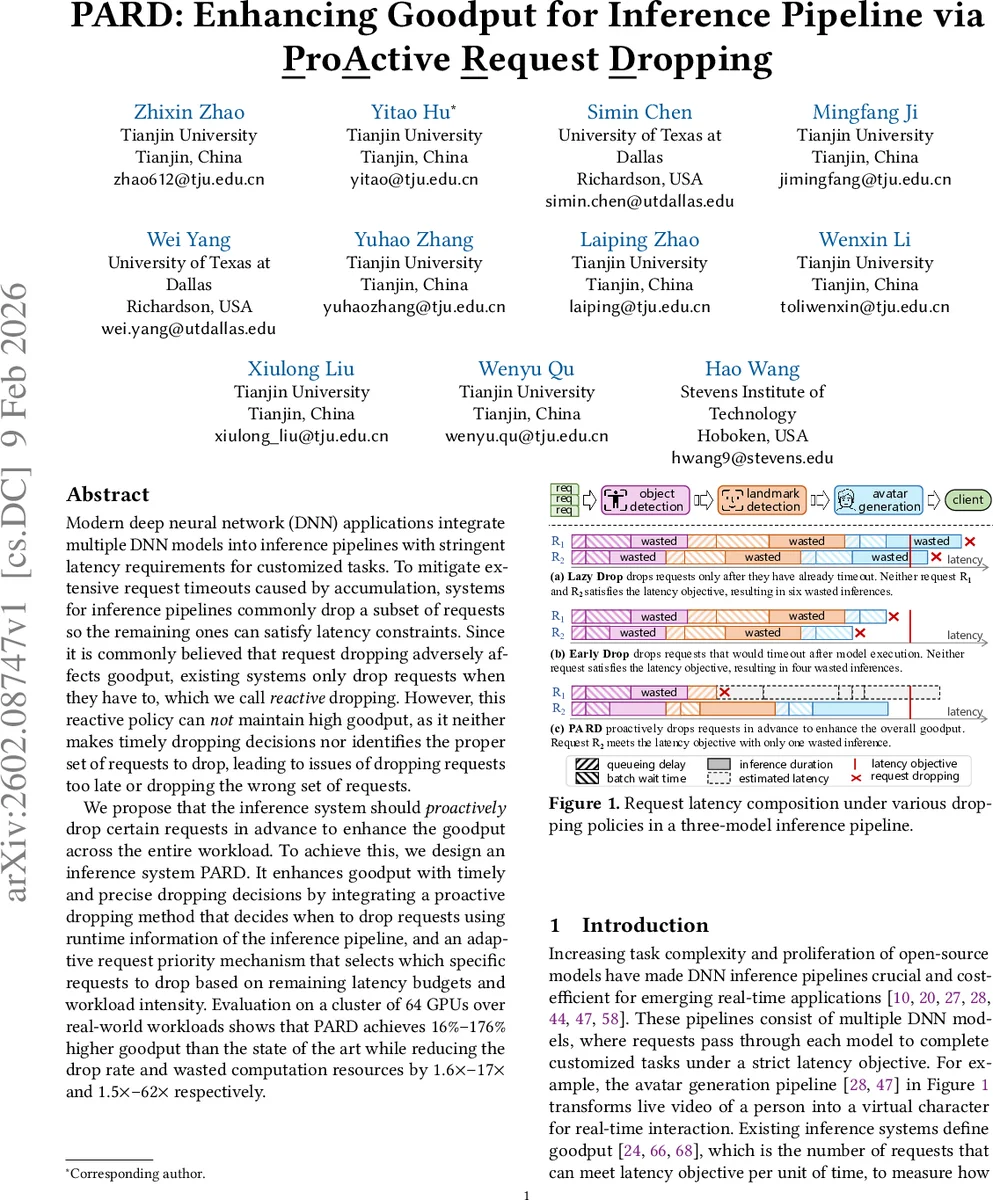

Modern deep neural network (DNN) applications integrate multiple DNN models into inference pipelines with stringent latency requirements for customized tasks. To mitigate extensive request timeouts caused by accumulation, systems for inference pipelines commonly drop a subset of requests so the remaining ones can satisfy latency constraints. Since it is commonly believed that request dropping adversely affects goodput, existing systems only drop requests when they have to, which we call reactive dropping. However, this reactive policy can not maintain high goodput, as it neither makes timely dropping decisions nor identifies the proper set of requests to drop, leading to issues of dropping requests too late or dropping the wrong set of requests. We propose that the inference system should proactively drop certain requests in advance to enhance the goodput across the entire workload. To achieve this, we design an inference system PARD. It enhances goodput with timely and precise dropping decisions by integrating a proactive dropping method that decides when to drop requests using runtime information of the inference pipeline, and an adaptive request priority mechanism that selects which specific requests to drop based on remaining latency budgets and workload intensity. Evaluation on a cluster of 64 GPUs over real-world workloads shows that PARD achieves $16%$-$176%$ higher goodput than the state of the art while reducing the drop rate and wasted computation resources by $1.6\times$-$17\times$ and $1.5\times$-$62\times$ respectively.

💡 Research Summary

The paper addresses the challenge of maintaining high goodput in real‑time deep neural network (DNN) inference pipelines that consist of multiple cascaded models and strict end‑to‑end latency objectives. Existing systems mitigate latency violations by dropping a subset of requests, but they employ reactive dropping policies that only discard a request when it has already exceeded its latency budget or is about to do so. This reactive approach suffers from two fundamental problems: (1) dropping decisions are made too late, often after the request has consumed significant GPU time in early pipeline stages, and (2) the selection of requests to drop follows a simple FIFO order, which frequently discards requests with larger remaining latency budgets while keeping those that are more likely to miss the deadline. Empirical evaluation of state‑of‑the‑art systems such as Nexus and Clipper++ shows that under bursty workloads their goodput can drop to as low as 30 % of the input rate, while the drop rate can exceed 70 %, sometimes performing worse than a naïve system that never drops requests.

To overcome these limitations, the authors propose PARD, a novel inference system that proactively drops requests and adaptively prioritizes which requests to discard. PARD’s design rests on two key mechanisms:

-

Proactive Request Dropping – At each pipeline stage PARD estimates the end‑to‑end latency of every in‑flight request using bidirectional runtime information: the actual execution time of preceding modules and a lower‑bound estimate of the remaining modules’ execution times. By comparing the remaining latency budget of a request with the minimum time required to finish the rest of the pipeline, PARD can identify requests that are impossible to complete on time and drop them immediately, often in the early stages. This prevents wasted computation and reduces queueing delay for the remaining requests.

-

Adaptive Request Priority – Instead of a static FIFO queue, PARD computes a priority score for each request that combines its normalized remaining latency budget with the current workload intensity (GPU utilization, batch queue length, arrival rate). When the system is lightly loaded, it prefers to keep requests with large remaining budgets, maximizing throughput. Under heavy load, it aggressively drops requests with small budgets, protecting the system from overload. This hybrid policy smoothly transitions between “budget‑driven” and “load‑driven” decisions, ensuring that the set of dropped requests is optimal for the current workload dynamics.

The architecture includes a distributed controller, per‑module workers, a request broker, a state planner, and a scaling engine. The request broker implements the proactive dropping logic, while the state planner maintains the bidirectional latency estimates. The scaling engine can still perform conventional resource scaling and dynamic batching; PARD’s dropping mechanisms are orthogonal and can be layered on top of any existing scheduler.

Evaluation is performed on a 64‑GPU cluster using six real‑world workloads (Twitter, Wikipedia, tracking, avatar generation, etc.). Compared with the best prior systems, PARD achieves 16 %–176 % higher goodput, reduces the overall drop rate by 1.6 ×–17 ×, and cuts wasted GPU computation by 1.5 ×–62 ×. Ablation studies demonstrate that removing the bidirectional latency estimator or reverting to FIFO priority dramatically degrades performance, confirming the necessity of both components. Moreover, PARD maintains stable goodput during sudden traffic spikes, where reactive policies experience transient drop rates exceeding 90 %.

In summary, PARD introduces a proactive, latency‑aware dropping strategy and an adaptive priority scheme that together enable inference pipelines to meet strict latency SLOs while maximizing the number of successfully served requests. The approach is compatible with existing scaling and batching techniques, making it a practical enhancement for production‑grade DNN serving platforms. Future work may explore more sophisticated machine‑learning‑based latency predictors and extensions to multi‑tenant environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment