Low-Light Video Enhancement with An Effective Spatial-Temporal Decomposition Paradigm

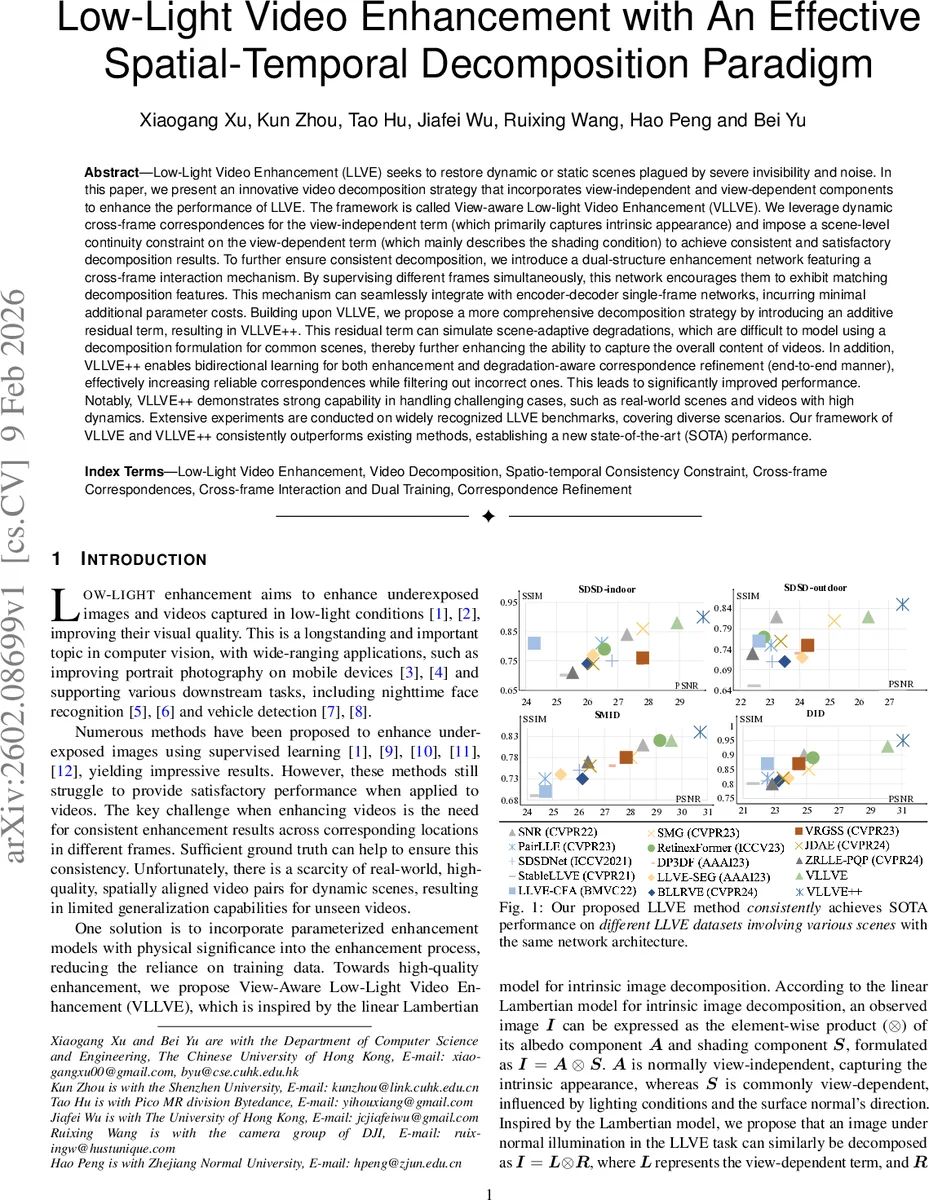

Low-Light Video Enhancement (LLVE) seeks to restore dynamic or static scenes plagued by severe invisibility and noise. In this paper, we present an innovative video decomposition strategy that incorporates view-independent and view-dependent components to enhance the performance of LLVE. The framework is called View-aware Low-light Video Enhancement (VLLVE). We leverage dynamic cross-frame correspondences for the view-independent term (which primarily captures intrinsic appearance) and impose a scene-level continuity constraint on the view-dependent term (which mainly describes the shading condition) to achieve consistent and satisfactory decomposition results. To further ensure consistent decomposition, we introduce a dual-structure enhancement network featuring a cross-frame interaction mechanism. By supervising different frames simultaneously, this network encourages them to exhibit matching decomposition features. This mechanism can seamlessly integrate with encoder-decoder single-frame networks, incurring minimal additional parameter costs. Building upon VLLVE, we propose a more comprehensive decomposition strategy by introducing an additive residual term, resulting in VLLVE++. This residual term can simulate scene-adaptive degradations, which are difficult to model using a decomposition formulation for common scenes, thereby further enhancing the ability to capture the overall content of videos. In addition, VLLVE++ enables bidirectional learning for both enhancement and degradation-aware correspondence refinement (end-to-end manner), effectively increasing reliable correspondences while filtering out incorrect ones. Notably, VLLVE++ demonstrates strong capability in handling challenging cases, such as real-world scenes and videos with high dynamics. Extensive experiments are conducted on widely recognized LLVE benchmarks.

💡 Research Summary

Low‑light video enhancement (LLVE) remains a challenging problem because it must simultaneously brighten severely under‑exposed frames, suppress noise, and preserve temporal consistency across the whole clip. Existing low‑light image enhancement methods, when applied frame‑by‑frame, often produce flickering and inconsistent illumination. This paper introduces a novel decomposition‑driven framework that explicitly separates a video frame into a view‑dependent illumination component (L) and a view‑independent reflectance component (R), inspired by the classic Lambertian model (I = A ⊗ S).

VLLVE (View‑aware Low‑light Video Enhancement)

The core idea is to exploit cross‑frame correspondences to enforce two complementary constraints: (1) the reflectance R should be identical for corresponding pixels across frames, because it encodes intrinsic texture that does not change with viewpoint; (2) the illumination L should vary smoothly over time, reflecting physical continuity of lighting. Correspondences are obtained with a pretrained matching network (e.g., optical flow or feature‑based matcher) on the normal‑light ground‑truth videos, which are pixel‑aligned with the low‑light inputs. Each correspondence carries an uncertainty weight, and the loss for R is a weighted L2 distance between the predicted R values at matched locations. A reconstruction loss (‖I − L ⊗ R‖) and regularizers on L and R complete the objective.

To make the decomposition robust and to propagate information between frames, the authors embed a Cross‑Frame Interaction Module (CFIM) into the deepest layer of any encoder‑decoder backbone (e.g., UNet). CFIM performs a cross‑attention between the feature maps of two frames, allowing the network to share view‑independent cues while still learning separate L and R for each frame. The module adds only a few parameters and can be dropped into existing single‑frame enhancement pipelines without redesign.

VLLVE++: Adding a Residual Term and Bidirectional Learning

The authors observe that a pure multiplicative model (I = L ⊗ R) cannot capture all real‑world degradations, especially complex lighting, sensor noise, and RAW‑domain artifacts. Therefore they extend the formulation to I = L ⊗ R + B, where B is a residual term that absorbs components not explainable by the product. B is initialized to zero and learned jointly with L and R, guided by the same multi‑frame reconstruction loss. Continuity is also imposed on B to keep it physically plausible.

A second major contribution is a bidirectional learning scheme that refines the correspondence maps during training. Instead of treating the pre‑computed correspondences as fixed, a lightweight refinement network predicts a residual correction for each correspondence. The refinement is supervised by the view‑independent features (R) produced by the VLLVE network: (i) matched points should have similar R values, and (ii) the refined correspondences are fed back to improve the decomposition. This creates a positive feedback loop: better correspondences → better decomposition → even better correspondences. The refinement network also learns to down‑weight or discard low‑confidence matches, effectively filtering out noisy or erroneous flows that are common in low‑light conditions.

Experiments

The paper evaluates VLLVE and VLLVE++ on several widely used LLVE benchmarks, including synthetic datasets (e.g., DarkVideo), real‑world captured clips, and RAW‑domain sequences. Metrics cover both spatial quality (PSNR, SSIM) and temporal consistency (t‑LPIPS, warped‑PSNR). VLLVE already outperforms prior state‑of‑the‑art methods such as LLNet, Retinex‑Net, and LAN by 1.2–2.0 dB in PSNR and shows noticeably lower flickering. VLLVE++ further improves results, especially on highly dynamic scenes and RAW data where the residual term B captures complex illumination patterns. A user study confirms that participants prefer the visual quality of VLLVE++ outputs, citing smoother lighting transitions and more natural colors.

Key Insights and Contributions

- Physically‑motivated decomposition for video – By treating each frame as a product of view‑dependent illumination and view‑independent reflectance, the method aligns with the Lambertian model while extending it to sequential data.

- Cross‑frame interaction without heavy overhead – CFIM integrates seamlessly into any encoder‑decoder backbone, enabling feature sharing across time and improving decomposition consistency.

- Residual term for real‑world complexity – The additive B term allows the model to handle lighting conditions that violate the pure multiplicative assumption, such as specular highlights, sensor saturation, and RAW‑specific noise.

- Bidirectional correspondence refinement – Jointly learning enhancement and correspondence correction creates a self‑reinforcing system that mitigates the poor matching quality typical of low‑light videos.

- State‑of‑the‑art performance across diverse datasets – Extensive quantitative and qualitative results demonstrate consistent superiority over existing LLVE approaches, with particular gains in high‑motion and RAW scenarios.

Conclusion

The paper presents a comprehensive, physics‑aware framework for low‑light video enhancement. By decomposing frames into illumination, reflectance, and a residual term, enforcing cross‑frame consistency through correspondences, and introducing a bidirectional learning loop for correspondence refinement, the authors achieve both high visual quality and temporal stability. The approach is modular, lightweight, and extensible, opening avenues for future work on real‑time deployment, broader sensor modalities, and integration with downstream vision tasks such as low‑light detection or tracking.

Comments & Academic Discussion

Loading comments...

Leave a Comment