Input-Adaptive Spectral Feature Compression by Sequence Modeling for Source Separation

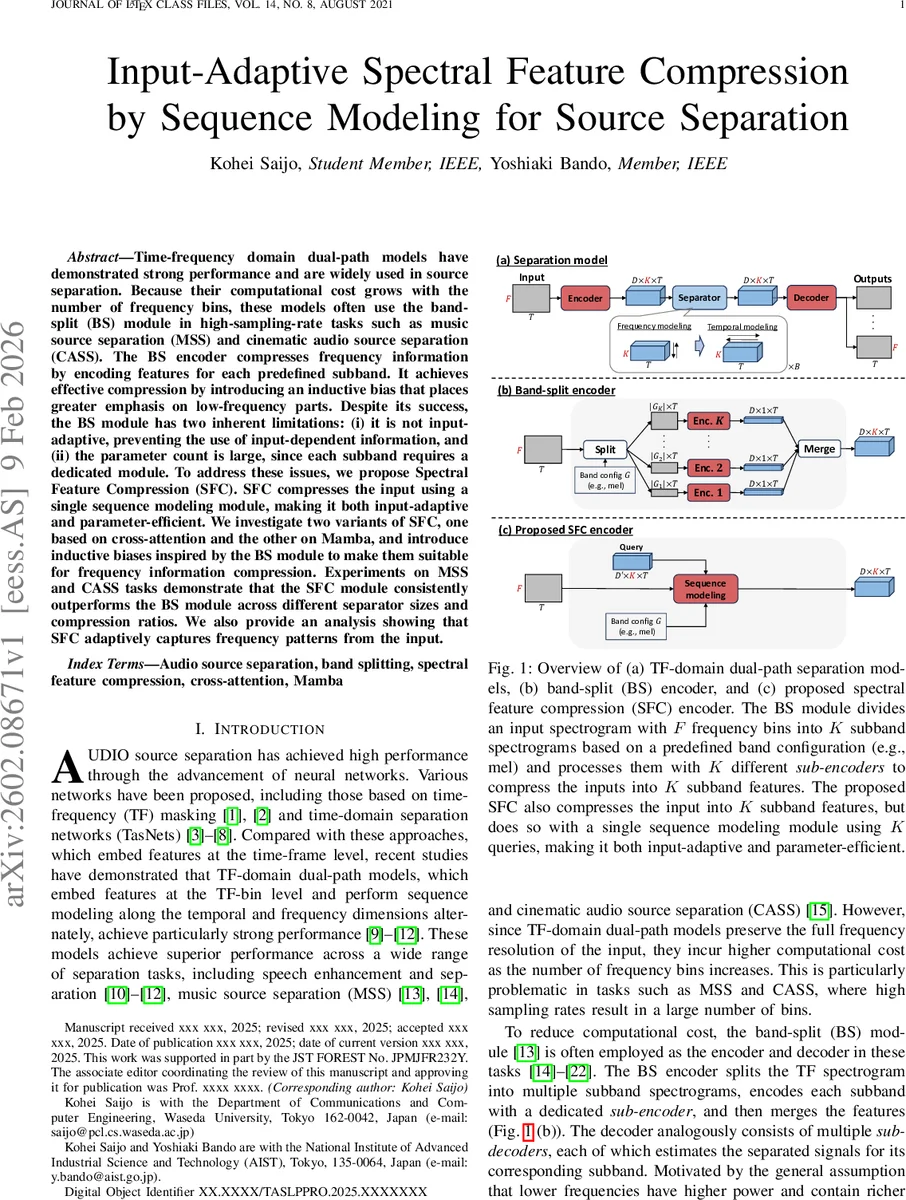

Time-frequency domain dual-path models have demonstrated strong performance and are widely used in source separation. Because their computational cost grows with the number of frequency bins, these models often use the band-split (BS) module in high-sampling-rate tasks such as music source separation (MSS) and cinematic audio source separation (CASS). The BS encoder compresses frequency information by encoding features for each predefined subband. It achieves effective compression by introducing an inductive bias that places greater emphasis on low-frequency parts. Despite its success, the BS module has two inherent limitations: (i) it is not input-adaptive, preventing the use of input-dependent information, and (ii) the parameter count is large, since each subband requires a dedicated module. To address these issues, we propose Spectral Feature Compression (SFC). SFC compresses the input using a single sequence modeling module, making it both input-adaptive and parameter-efficient. We investigate two variants of SFC, one based on cross-attention and the other on Mamba, and introduce inductive biases inspired by the BS module to make them suitable for frequency information compression. Experiments on MSS and CASS tasks demonstrate that the SFC module consistently outperforms the BS module across different separator sizes and compression ratios. We also provide an analysis showing that SFC adaptively captures frequency patterns from the input.

💡 Research Summary

The paper addresses the computational bottleneck of time‑frequency (TF) domain dual‑path source separation models when processing high‑resolution audio. Existing solutions often rely on a band‑split (BS) encoder that partitions the spectrogram into K predefined sub‑bands (e.g., using a mel or musical scale) and processes each sub‑band with its own encoder‑decoder pair. While BS efficiently compresses frequency information by allocating narrower bandwidths to low frequencies—a psychoacoustically motivated inductive bias—it suffers from two major drawbacks: (i) it is not input‑adaptive, thus cannot exploit the specific spectral patterns of each mixture, and (ii) it requires K separate encoder/decoder modules, inflating the total parameter count.

To overcome these limitations, the authors propose Spectral Feature Compression (SFC), a unified, input‑adaptive compression framework that replaces the multiple sub‑encoders with a single sequence‑modeling module equipped with K learnable queries. Two variants are explored:

-

SFC‑CA (Cross‑Attention) – The input feature map (after a 2‑D convolution and RMS normalization) of shape D′ × F × T is fed into a cross‑attention block. K queries Q_E (size D′ × K) attend to the full frequency axis. A carefully designed positional bias matrix P_E (K × F) aligns each query with its target sub‑band G_k, reproducing the BS inductive bias (greater emphasis on low frequencies). The attention output yields a compressed representation of shape D′ × K × T, which is further processed by a second 2‑D convolution to obtain the final compressed feature Z (D × K × T).

-

SFC‑Mamba (State‑Space Recurrent) – This variant uses the Mamba block, a state‑space model that efficiently captures long‑range dependencies. Queries are inserted at fixed intervals along the frequency dimension according to the band definition G_k. The Mamba processes the sequence and returns compressed embeddings at the query positions, again producing a D′ × K × T tensor. Positional bias is implicitly realized by the placement of queries.

Both encoders are paired with a symmetric decoder that expands the compressed representation back to the original frequency resolution using the same mechanism in reverse (queries of length F). After expansion, a 2‑D transposed convolution predicts complex masks, which are applied to the mixture STFT to obtain separated sources.

Experimental Evaluation

The authors evaluate SFC on two challenging tasks: music source separation (MUSDB18) and cinematic audio source separation (CASS). They test three model sizes (small, medium, large) and three compression ratios (K/F = 1/16, 1/8, 1/4). Baselines use the identical dual‑path separator but with the traditional BS encoder/decoder. Metrics include SI‑SDR, SDR, and PESQ.

Results show that across all configurations SFC consistently outperforms BS, achieving gains of 0.3–0.8 dB in SI‑SDR while reducing total parameters by 30 %–50 %. SFC‑CA generally yields the highest scores, but SFC‑Mamba offers comparable performance with lower computational overhead, making it attractive for resource‑constrained scenarios.

Ablation Studies

- Positional Bias: Removing the bias matrix degrades performance dramatically, confirming that the bias is essential for guiding queries to appropriate frequency regions.

- Number of Queries: Varying K demonstrates the trade‑off between compression ratio and separation quality; moderate K (≈64 for F = 1025) balances efficiency and accuracy.

- Receptive Field: Experiments with deeper attention/recurrent stacks indicate diminishing returns beyond a certain depth, suggesting that the primary benefit stems from adaptive compression rather than sheer model capacity.

Interpretability

Attention weight visualizations reveal that queries focus on spectrally salient regions (e.g., bass lines, vocal formants) specific to each mixture, evidencing true input‑adaptivity. This dynamic allocation contrasts with the static band partitions of BS.

Conclusions and Future Work

SFC introduces a novel paradigm: compressing the frequency dimension with a single, query‑driven sequence model that retains the psychoacoustic bias of BS while adding input‑dependent flexibility and parameter efficiency. The approach enables more scalable dual‑path separation, especially for high‑sampling‑rate audio where F can exceed 2000. Future directions include extending SFC to alternative efficient attention mechanisms (e.g., Performer, linear‑attention), exploring multi‑channel and multi‑source extensions, and integrating SFC into end‑to‑end time‑domain separation pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment