High-Speed Vision-Based Flight in Clutter with Safety-Shielded Reinforcement Learning

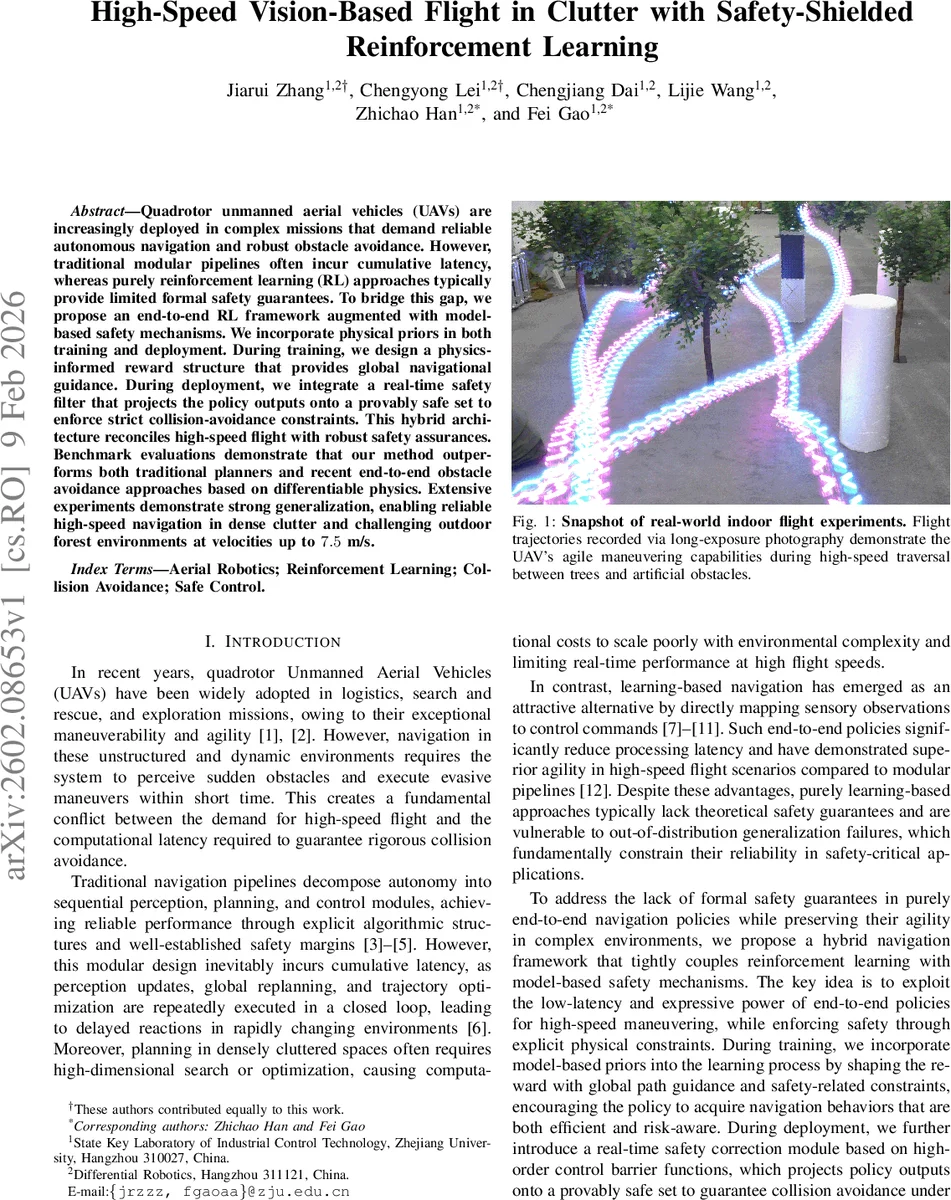

Quadrotor unmanned aerial vehicles (UAVs) are increasingly deployed in complex missions that demand reliable autonomous navigation and robust obstacle avoidance. However, traditional modular pipelines often incur cumulative latency, whereas purely reinforcement learning (RL) approaches typically provide limited formal safety guarantees. To bridge this gap, we propose an end-to-end RL framework augmented with model-based safety mechanisms. We incorporate physical priors in both training and deployment. During training, we design a physics-informed reward structure that provides global navigational guidance. During deployment, we integrate a real-time safety filter that projects the policy outputs onto a provably safe set to enforce strict collision-avoidance constraints. This hybrid architecture reconciles high-speed flight with robust safety assurances. Benchmark evaluations demonstrate that our method outperforms both traditional planners and recent end-to-end obstacle avoidance approaches based on differentiable physics. Extensive experiments demonstrate strong generalization, enabling reliable high-speed navigation in dense clutter and challenging outdoor forest environments at velocities up to 7.5m/s.

💡 Research Summary

The paper tackles the challenging problem of enabling quadrotor UAVs to fly at high speed through densely cluttered environments while guaranteeing collision‑free operation. Traditional modular pipelines (perception → planning → control) suffer from cumulative latency and heavy computational loads, especially when high‑frequency replanning is required. Pure end‑to‑end reinforcement‑learning (RL) policies eliminate most of this latency but lack formal safety guarantees, making them unsuitable for safety‑critical deployments.

To bridge this gap, the authors propose a hybrid framework that combines the agility of model‑free RL with the rigor of model‑based safety mechanisms. The key contributions are:

-

Physics‑informed reward shaping – During training the reward is a weighted sum of several terms. Two novel components are introduced:

- Geodesic navigation reward: A Dijkstra‑computed cost map (Φ) representing the true shortest‑path distance around obstacles is pre‑computed for each training scene. At each timestep the agent interpolates the cost at its current and previous positions, computes the progress δ = Φ(p_{t‑1}) – Φ(p_t), clamps it, and scales it to form r_navigation. This dense, global potential field steers the policy away from local minima that plague Euclidean‑distance rewards.

- Control‑Barrier‑Function (CBF) safety reward: An ESDF provides the distance d(x) to the nearest obstacle. The barrier h(x)=d(x)−d_safe and its time derivative ˙h = ∇d·v are used to compute a safety term r_safety = clip(˙h + γh, δ_min, 0). By penalising violations of the CBF condition, the policy learns to align its velocity with the ESDF gradient, effectively anticipating collisions.

-

Asymmetric actor‑critic architecture – The observation consists of a 100×60 depth image, linear velocity, attitude matrix, previous action, and goal‑relative displacement. The depth image is processed by a CNN, concatenated with proprioceptive data, and fed into a GRU that captures temporal context. The actor outputs a four‑dimensional continuous action (normalized thrust and desired roll, pitch, yaw angles) at 100 Hz. Crucially, the critic receives noise‑free ground‑truth states, while the actor is trained on noisy, delayed observations (dropout, sensor noise, communication lag). This privileged‑learning setup improves robustness to real‑world uncertainties.

-

Real‑time safety filter based on high‑order CBF (HOCBF) – At deployment, the raw action from the policy is passed through a HOCBF filter that projects it onto the set of control inputs satisfying ˙h + γh ≥ 0. The filter runs at the same high frequency as the controller, guaranteeing that even under disturbances or distribution shifts the UAV never violates the safety margin d_safe.

-

Sim‑to‑real transfer via domain randomization – During training, the authors randomize image dropout, additive noise, and actuation delay to mimic real sensor and communication imperfections. This dramatically reduces the sim‑to‑real gap, allowing the same network to be deployed on a PX4‑based quadrotor without additional fine‑tuning.

-

Extensive evaluation – In simulation, the method is benchmarked against state‑of‑the‑art modular planners (e.g., Ego‑Planner), RL baselines (NavRL, optimization‑embedded networks), and pure vision‑based approaches. The proposed system consistently achieves higher success rates, lower collision counts, and smoother trajectories, especially at speeds above 6 m/s where other methods fail. Real‑world experiments include indoor flights through artificial trees and outdoor flights in a dense forest, reaching speeds up to 7.5 m/s while maintaining zero collisions. Logs confirm that the HOCBF filter actively corrects unsafe commands, providing empirical evidence of the theoretical safety guarantee.

Limitations and future work – The current safety filter assumes a static ESDF; extending it to dynamic obstacles would require predictive models or adaptive CBFs. The geodesic reward relies on pre‑computed cost maps, which may not be available in completely unknown environments; online map updates or learned cost fields are potential extensions. Finally, the HOCBF parameters (γ, d_safe) are manually tuned; automated or learning‑based parameter adaptation could further improve performance.

Overall, the paper presents a compelling solution that reconciles high‑speed vision‑based navigation with provable safety, demonstrating both strong simulation results and successful real‑world deployment. This hybrid RL‑plus‑model‑based approach sets a new benchmark for agile, reliable UAV flight in complex, cluttered settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment