Supporting Effective Goal Setting with LLM-Based Chatbots

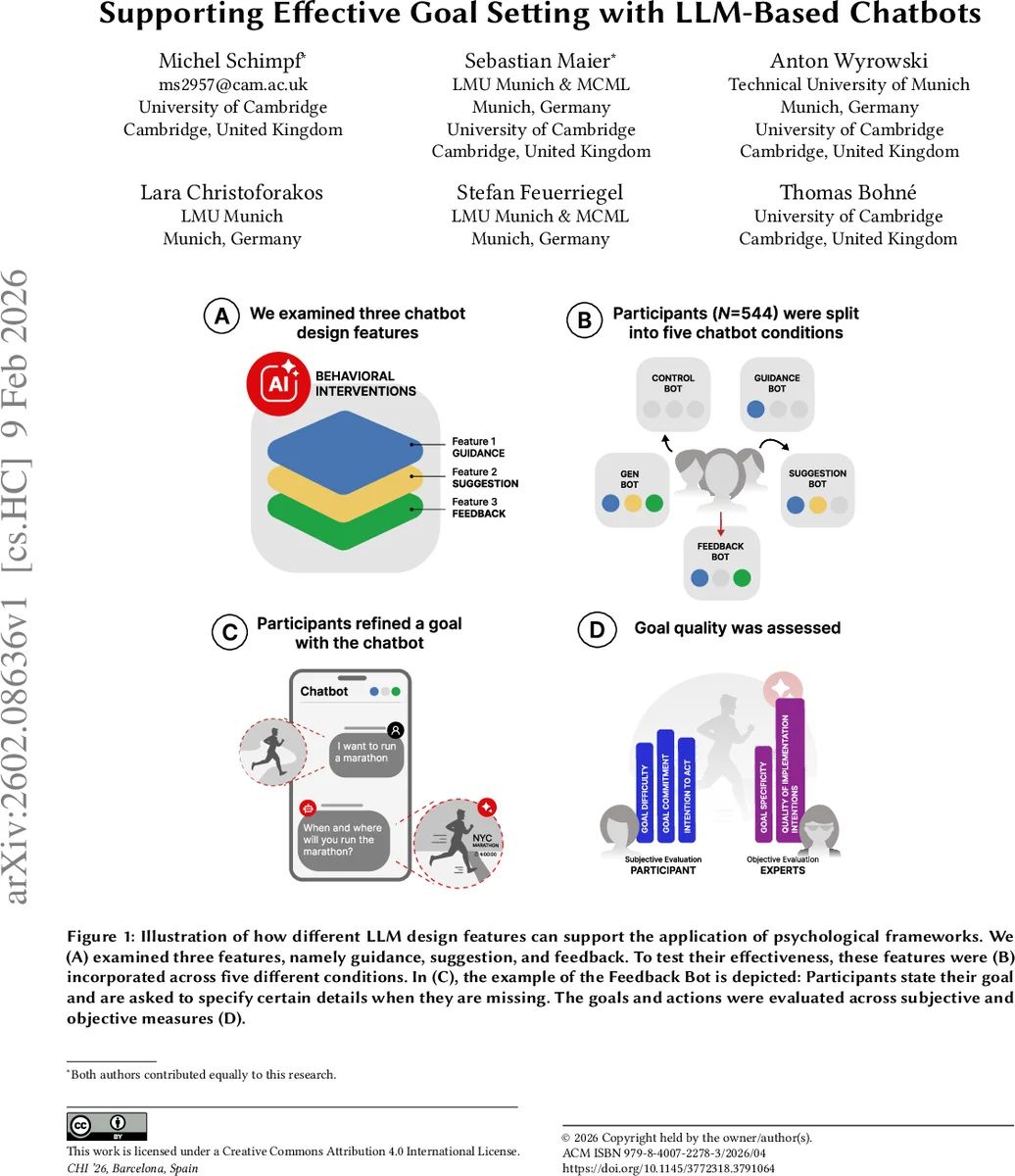

Each day, individuals set behavioral goals such as eating healthier, exercising regularly, or increasing productivity. While psychological frameworks (i.e., goal setting and implementation intentions) can be helpful, they often need structured external support, which interactive technologies can provide. We thus explored how large language model (LLM)-based chatbots can apply these frameworks to guide users in setting more effective goals. We conducted a preregistered randomized controlled experiment ($N = 543$) comparing chatbots with different combinations of three design features: guidance, suggestions, and feedback. We evaluated goal quality using subjective and objective measures. We found that, while guidance is already helpful, it is the addition of feedback that makes LLM-based chatbots effective in supporting participants’ goal setting. In contrast, adaptive suggestions were less effective. Altogether, our study shows how to design chatbots by operationalizing psychological frameworks to provide effective support for reaching behavioral goals.

💡 Research Summary

This paper investigates how large language model (LLM)‑based chatbots can operationalize two well‑established psychological frameworks, goal‑setting theory and implementation‑intention theory, to improve the quality of personal goals and action plans. The authors identify three design features that might influence effectiveness: (1) guidance, which provides step‑by‑step structure; (2) suggestions, which generate adaptive examples or ideas; and (3) feedback, which detects missing elements in a user’s goal description and offers personalized corrective input. To test these features, they ran a preregistered randomized controlled experiment with 543 participants across five chatbot conditions: a basic control chatbot that asks two fixed questions, a rule‑based chatbot delivering only guidance, a guidance‑plus‑suggestions chatbot, a guidance‑plus‑feedback chatbot, and a fully interactive chatbot combining all three features. Participants set personally meaningful goals (e.g., running a marathon, learning Python), and outcomes were assessed with both subjective and objective measures: goal specificity, perceived difficulty, commitment, quality of implementation intentions (if‑then plans), and intention to act.
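The five conditions can be read as combinations of three on/off features. The sketch below is only an illustration of that design as the summary describes it; the class, field, and condition names are hypothetical and do not come from the paper or its materials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    """One experimental arm; all names here are illustrative assumptions."""
    name: str
    guidance: bool = False
    suggestions: bool = False
    feedback: bool = False

    def features(self) -> list[str]:
        """Return the names of the design features enabled in this arm."""
        flags = {
            "guidance": self.guidance,
            "suggestions": self.suggestions,
            "feedback": self.feedback,
        }
        return [feature for feature, enabled in flags.items() if enabled]


# The five arms described in the summary (labels are hypothetical).
CONDITIONS = [
    Condition("control"),  # basic chatbot that asks two fixed questions
    Condition("guidance", guidance=True),
    Condition("guidance_suggestions", guidance=True, suggestions=True),
    Condition("guidance_feedback", guidance=True, feedback=True),
    Condition("full", guidance=True, suggestions=True, feedback=True),
]

for condition in CONDITIONS:
    enabled = ", ".join(condition.features()) or "none"
    print(f"{condition.name}: {enabled}")
```

Note that the design is not a full 2×2×2 factorial: suggestions and feedback only appear on top of guidance, so only five of the eight possible combinations are represented.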

Results show that guidance alone already raises goal specificity and perceived difficulty, confirming its value. Adding personalized feedback produces the strongest improvements in the quality of both goals and implementation intentions. In contrast, the adaptive suggestion feature does not yield measurable benefits and may even dilute users’ sense of ownership over their goals. Notably, although feedback enhances the cognitive quality of goals, it does not increase participants’ commitment or intention to act, suggesting that cognitive support does not automatically translate into motivational change.

The authors conclude that effective LLM‑based goal‑setting chatbots should prioritize structured guidance and real‑time, personalized feedback that fills informational gaps, while using suggestions sparingly or only in specific contexts. The study contributes theoretically, by embedding psychological theory into human‑AI interaction, and practically, by offering concrete design guidelines for scalable, domain‑agnostic behavioral interventions. Limitations include the single‑session design, reliance on self‑report measures, and lack of long‑term outcome data. The authors recommend that future work explore different feedback types (e.g., positive reinforcement vs. corrective feedback), optimal feedback frequency, and longitudinal effects on goal attainment and behavior change.
