OSCAR: Optimization-Steered Agentic Planning for Composed Image Retrieval

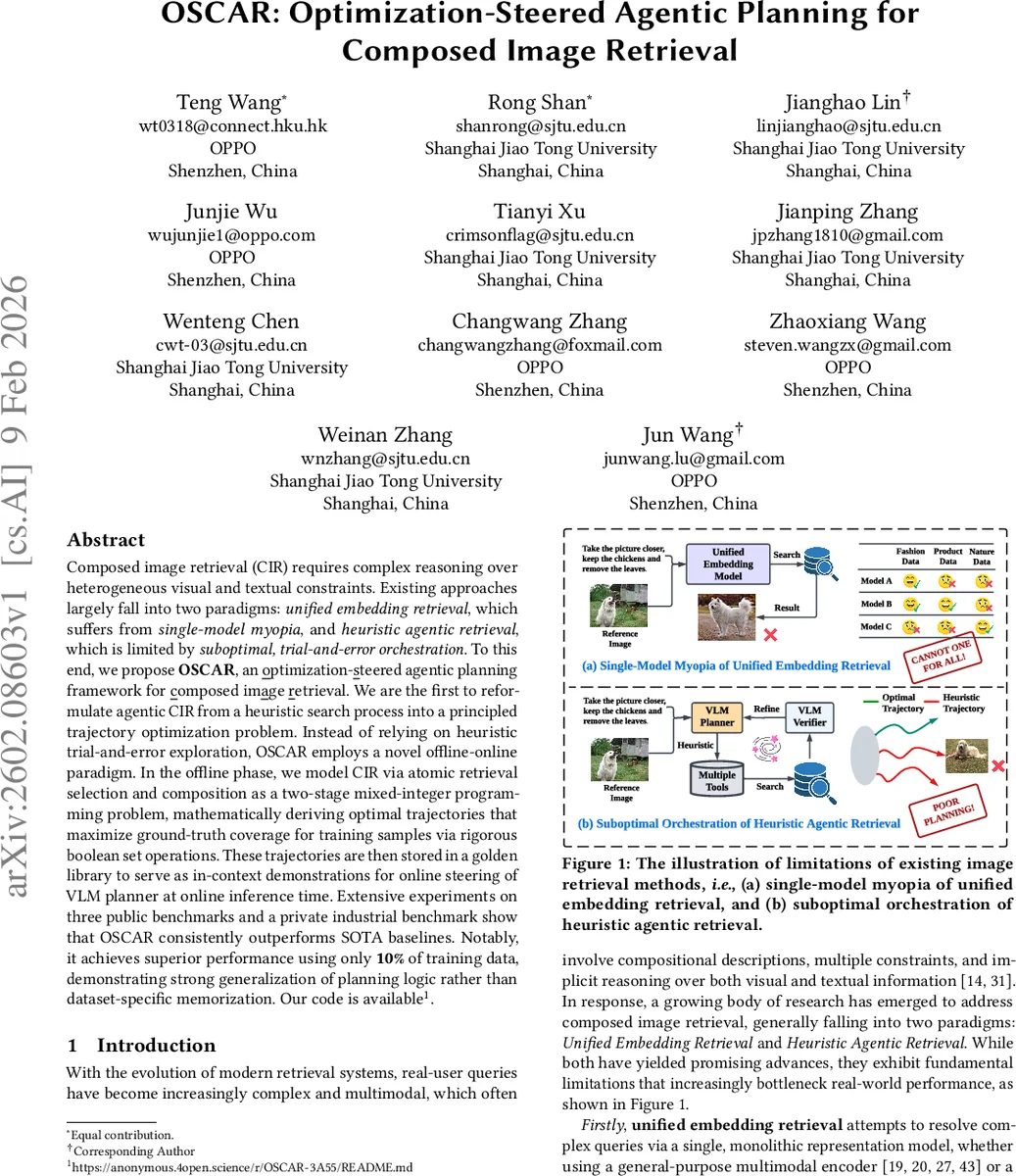

Composed image retrieval (CIR) requires complex reasoning over heterogeneous visual and textual constraints. Existing approaches largely fall into two paradigms: unified embedding retrieval, which suffers from single-model myopia, and heuristic agentic retrieval, which is limited by suboptimal, trial-and-error orchestration. To this end, we propose OSCAR, an optimization-steered agentic planning framework for composed image retrieval. We are the first to reformulate agentic CIR from a heuristic search process into a principled trajectory optimization problem. Instead of relying on heuristic trial-and-error exploration, OSCAR employs a novel offline-online paradigm. In the offline phase, we model CIR via atomic retrieval selection and composition as a two-stage mixed-integer programming problem, mathematically deriving optimal trajectories that maximize ground-truth coverage for training samples via rigorous boolean set operations. These trajectories are then stored in a golden library to serve as in-context demonstrations for online steering of VLM planner at online inference time. Extensive experiments on three public benchmarks and a private industrial benchmark show that OSCAR consistently outperforms SOTA baselines. Notably, it achieves superior performance using only 10% of training data, demonstrating strong generalization of planning logic rather than dataset-specific memorization.

💡 Research Summary

The paper introduces OSCAR, a novel framework for composed image retrieval (CIR) that replaces heuristic, trial‑and‑error agentic pipelines with a principled, optimization‑driven planning approach. Existing CIR methods fall into two categories. Unified embedding models collapse the reference image and modification text into a single latent vector and retrieve images with nearest‑neighbor search. While simple, this “single‑model myopia” fails to capture the heterogeneous visual and textual cues that real‑world queries demand, especially across diverse domains such as fashion, natural scenes, or fine‑grained attributes. Heuristic agentic methods, on the other hand, decompose the task into multiple steps (e.g., query rewriting, captioning, retrieval) and let a large language model (LLM) or vision‑language model (VLM) iteratively decide which tool to call next. Although flexible, these approaches rely on local, greedy decisions, leading to redundant tool calls, poor ordering, and an inability to enforce strict inclusion/exclusion constraints in a global manner.

OSCAR reframes CIR as a global trajectory optimization problem. The key insight is that, given a query with known ground‑truth images, the ideal set of tool invocations can be expressed as a variant of the set‑cover problem: select a minimal subset of atomic retrievals whose union covers the ground‑truth set while minimizing overlap with non‑target images. To operationalize this, the authors propose an offline‑online paradigm.

Offline phase (optimization):

-

Atomic retrieval construction: Each possible tool call is treated as an atomic action defined by a four‑tuple (tool f, rewritten query \hat{q}, polarity p∈{+,−}, top‑k k). Polarity indicates whether the retrieved set should be included (+) or excluded (−) from the final result. The VLM rewrites the original modification text into multiple attribute‑level queries, each assigned a polarity. The top‑k parameter controls the trade‑off between recall and precision; the authors discretize k into a finite set and compute the maximal‑k retrieval once, then slice to obtain smaller k values, ensuring computational efficiency. All combinations of tools, rewritten queries, polarities, and k values generate a large pool of atomic retrievals (≈1,200 per training sample).

-

Recall‑oriented MIP: Binary variables x_r indicate whether a positive atomic retrieval r is selected. Auxiliary variables c_i track whether each image i is covered, and t_f indicate whether a tool f is used. To avoid redundant calls, atomic retrievals sharing the same tool, query, and polarity (differing only in k) are grouped into families; selecting multiple members of a family yields diminishing returns due to monotonicity of top‑k. The MIP maximizes the number of covered ground‑truth images while penalizing inclusion of non‑target images and excessive tool usage, yielding a compact set of positive retrievals U.

-

Precision‑oriented MIP: Using the candidate set U, a second MIP incorporates negative atomic retrievals and explicit Boolean set operations (union, intersection, difference). This stage refines the result by enforcing exclusion constraints and improving precision, something heuristic agents cannot guarantee.

The optimal trajectories (ordered selections of atomic retrievals together with the required set operations) are stored in a “golden library” as high‑quality demonstrations.

Online phase (steering):

When a test query arrives, its ground‑truth is unknown. OSCAR retrieves the most similar trajectories from the golden library (based on query embedding similarity) and feeds them as in‑context examples to the VLM planner. The VLM, now guided by these demonstrations, reproduces a near‑optimal planning sequence in a single inference pass, eliminating the need for iterative tool‑calling loops. This dramatically reduces computational overhead while preserving the logical rigor of the offline‑derived plan.

Experimental results:

The authors evaluate OSCAR on three public CIR benchmarks (including FashionIQ, CIRR, and Shoes) and a proprietary industrial dataset. Across all metrics (Recall@K, mAP), OSCAR consistently outperforms both state‑of‑the‑art unified embedding models and recent heuristic agentic baselines. Notably, using only 10 % of the available training data to build the golden library yields performance comparable to using the full dataset, demonstrating that the learned planning logic generalizes beyond memorization of specific examples. Inference speed improves by 30‑50 % and the number of tool calls drops significantly, confirming the efficiency gains of the optimization‑steered approach.

Contributions and significance:

- Optimization perspective: First formulation of agentic CIR as a mixed‑integer program, providing provable optimality for training samples.

- Set‑theoretic composition: Explicit Boolean logic for inclusion and exclusion, enabling precise handling of complex constraints.

- Offline‑online framework: Seamless integration of MIP‑derived optimal trajectories with VLM planning via a golden library, achieving single‑pass inference.

- Empirical superiority: State‑of‑the‑art results with minimal training data and reduced computational cost.

Overall, OSCAR showcases how global optimization can replace heuristic exploration in tool‑driven multimodal agents, offering a blueprint for future systems that require both reasoning depth and operational efficiency.

Comments & Academic Discussion

Loading comments...

Leave a Comment