SemiNFT: Learning to Transfer Presets from Imitation to Appreciation via Hybrid-Sample Reinforcement Learning

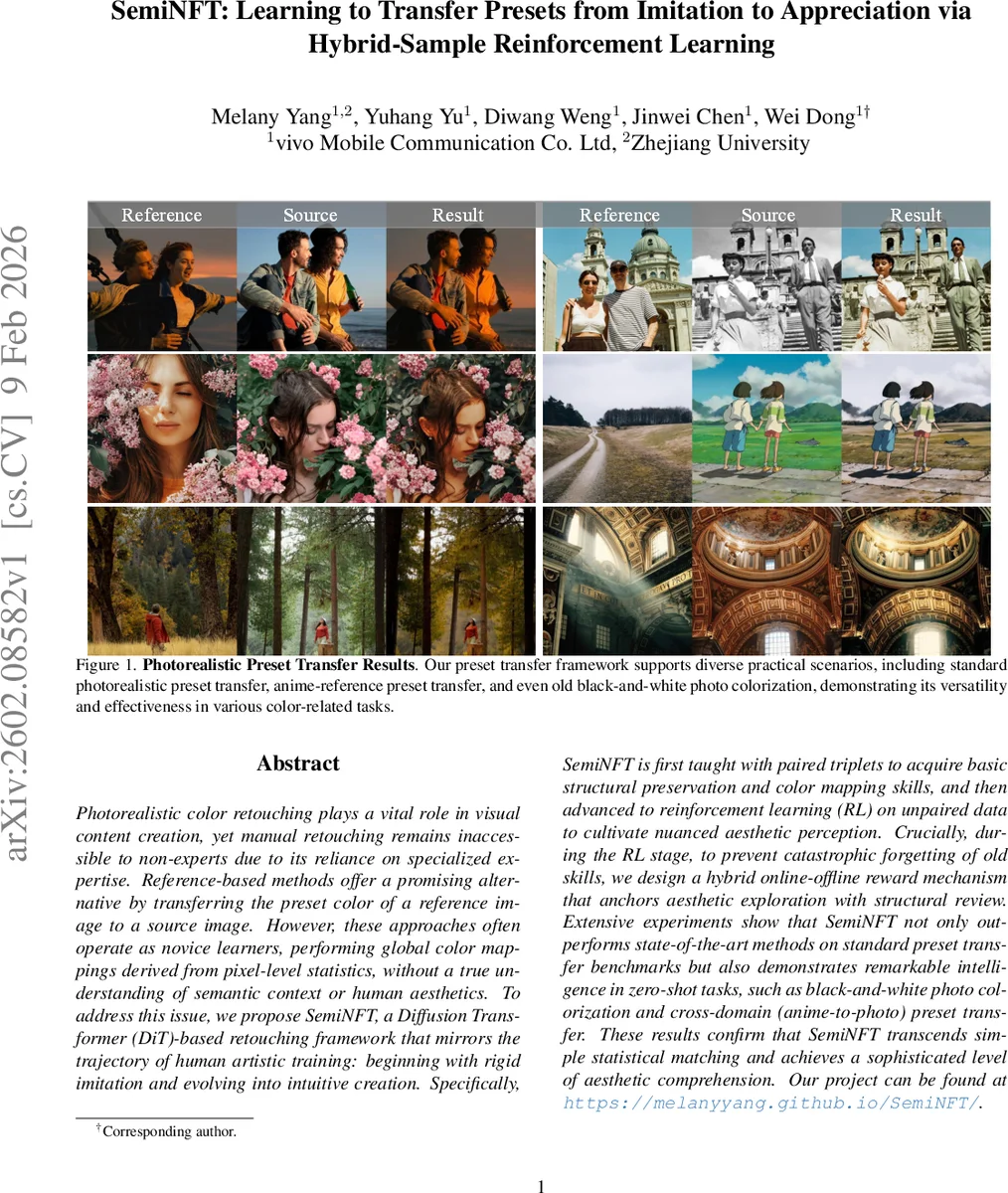

Photorealistic color retouching plays a vital role in visual content creation, yet manual retouching remains inaccessible to non-experts due to its reliance on specialized expertise. Reference-based methods offer a promising alternative by transferring the preset color of a reference image to a source image. However, these approaches often operate as novice learners, performing global color mappings derived from pixel-level statistics, without a true understanding of semantic context or human aesthetics. To address this issue, we propose SemiNFT, a Diffusion Transformer (DiT)-based retouching framework that mirrors the trajectory of human artistic training: beginning with rigid imitation and evolving into intuitive creation. Specifically, SemiNFT is first taught with paired triplets to acquire basic structural preservation and color mapping skills, and then advanced to reinforcement learning (RL) on unpaired data to cultivate nuanced aesthetic perception. Crucially, during the RL stage, to prevent catastrophic forgetting of old skills, we design a hybrid online-offline reward mechanism that anchors aesthetic exploration with structural review. % experiments Extensive experiments show that SemiNFT not only outperforms state-of-the-art methods on standard preset transfer benchmarks but also demonstrates remarkable intelligence in zero-shot tasks, such as black-and-white photo colorization and cross-domain (anime-to-photo) preset transfer. These results confirm that SemiNFT transcends simple statistical matching and achieves a sophisticated level of aesthetic comprehension. Our project can be found at https://melanyyang.github.io/SemiNFT/.

💡 Research Summary

The paper introduces SemiNFT, a novel framework for photorealistic preset transfer that mimics the learning trajectory of human retouching experts. Built on a Diffusion Transformer (DiT) backbone, SemiNFT adopts a curriculum‑style two‑stage training pipeline. In the first “cold‑start” stage, a dedicated LoRA module is fine‑tuned on 3,200 paired image triplets (source, reference, target) together with textual descriptions. This supervised phase teaches the model to preserve structural relationships and perform basic color mapping while preventing cross‑branch information leakage through causal attention masks.

The second stage transitions to reinforcement learning (RL) on 1,500 unpaired source‑reference pairs. Leveraging the DiffusionNFT flow‑matching paradigm, the authors integrate a VLM‑based reward model (Qwen3‑VL‑8B‑Instruct) that evaluates both global and local similarity between the generated image and the reference preset. The VLM is prompted to output a single categorical token (1‑5), and the expected score is computed as a probability‑weighted average, providing a smooth, interpretable reward signal.

To avoid reward hacking and catastrophic forgetting of structural knowledge, SemiNFT employs a hybrid online‑offline reward mechanism. Each training batch mixes (1) online samples generated by the current policy (DiT with frozen cold‑start LoRA and trainable RL LoRA) scored by the VLM, and (2) a small curated set of offline samples with human‑assigned quality scores. The offline anchors regularize the reward landscape, ensuring the policy does not drift toward unrealistic structures or over‑aggressive preset application.

Architecturally, the model consists of the base DiT, a frozen cold‑start LoRA, and a trainable RL LoRA. The cold‑start LoRA is trained for 10 k steps and then frozen, allowing the RL LoRA to adapt without interfering with previously learned structural priors. This separation reduces parameter conflict and clarifies knowledge transfer between stages.

Evaluation goes beyond traditional PSNR/SSIM metrics. The authors construct a standardized VLM‑based assessment protocol using Gemini‑2.5‑flash, GPT‑4o, and Qwen3‑VL‑32B to approximate human judgments across multiple dimensions (color fidelity, structural preservation, aesthetic appeal). SemiNFT outperforms state‑of‑the‑art methods on standard preset transfer benchmarks, achieving 7‑8 % average gains in combined scores. Zero‑shot experiments demonstrate its versatility: the model successfully colorizes black‑and‑white photos and transfers presets from anime to real‑world images, maintaining natural colors and fine details where prior approaches fail.

In summary, SemiNFT’s contributions are threefold: (1) a two‑stage curriculum that isolates structural learning from aesthetic refinement, (2) a hybrid reward design that blends VLM‑derived online feedback with human‑rated offline anchors to prevent forgetting and reward exploitation, and (3) a publicly released diverse dataset and VLM‑driven evaluation suite. By integrating these innovations, SemiNFT sets a new benchmark for photorealistic preset transfer and opens pathways for broader image editing tasks that require both precise color control and nuanced aesthetic judgment.

Comments & Academic Discussion

Loading comments...

Leave a Comment