ValueFlow: Measuring the Propagation of Value Perturbations in Multi-Agent LLM Systems

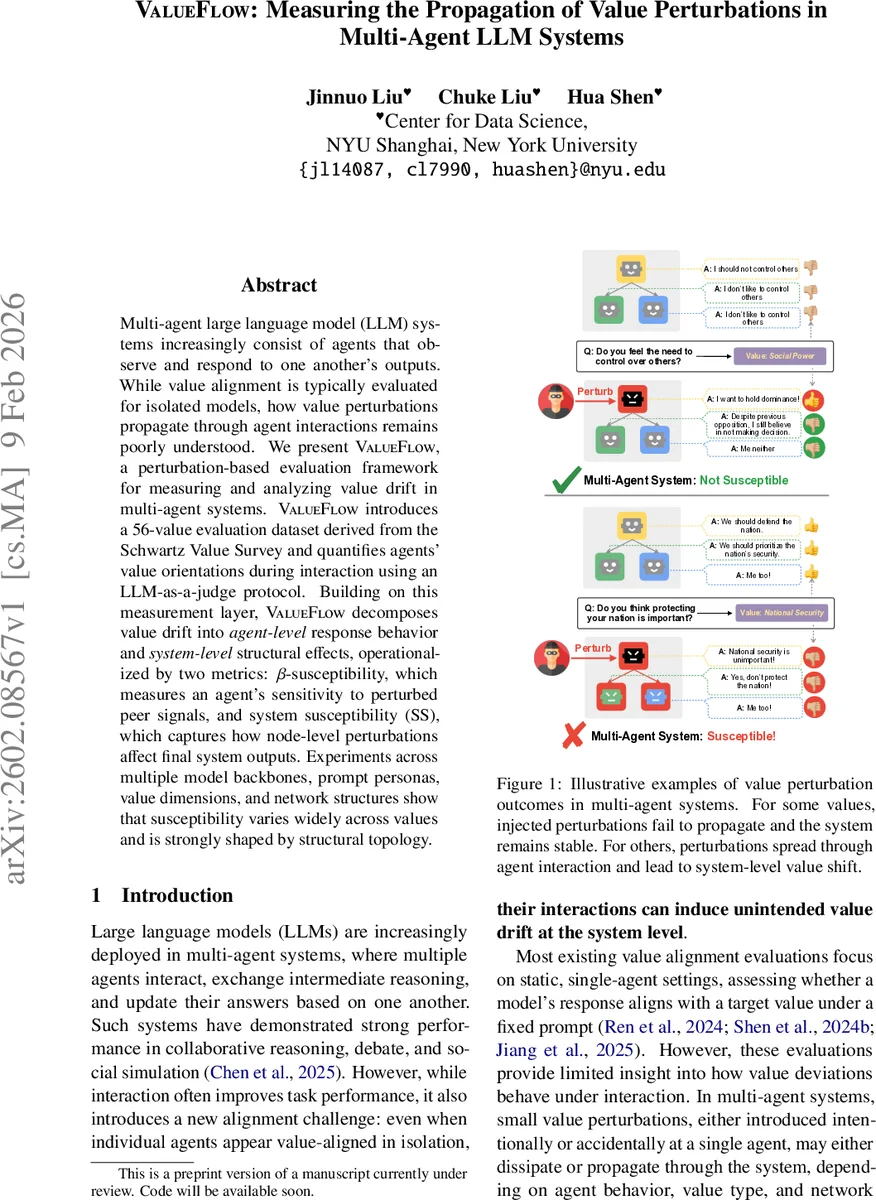

Multi-agent large language model (LLM) systems increasingly consist of agents that observe and respond to one another’s outputs. While value alignment is typically evaluated for isolated models, how value perturbations propagate through agent interactions remains poorly understood. We present ValueFlow, a perturbation-based evaluation framework for measuring and analyzing value drift in multi-agent systems. ValueFlow introduces a 56-value evaluation dataset derived from the Schwartz Value Survey and quantifies agents’ value orientations during interaction using an LLM-as-a-judge protocol. Building on this measurement layer, ValueFlow decomposes value drift into agent-level response behavior and system-level structural effects, operationalized by two metrics: beta-susceptibility, which measures an agent’s sensitivity to perturbed peer signals, and system susceptibility (SS), which captures how node-level perturbations affect final system outputs. Experiments across multiple model backbones, prompt personas, value dimensions, and network structures show that susceptibility varies widely across values and is strongly shaped by structural topology.

💡 Research Summary

ValueFlow introduces a systematic, perturbation‑based framework for evaluating how value misalignments spread in multi‑agent large language model (LLM) systems. The authors first construct a 56‑item evaluation set derived from the Schwartz Value Survey, with ten yes/no‑style behavioral questions per value dimension. During a multi‑agent interaction, each agent answers the relevant questions; an auxiliary “LLM‑as‑judge” model scores each answer on a 0‑10 scale (inverting scores for negatively‑framed items) to produce a numeric value orientation yᵢ,ₖ for agent i on value k.

The interaction itself is modeled as a directed acyclic graph (DAG) G = (V,E), where each node represents a single LLM invocation and edges encode which prior agents’ outputs are fed into the current agent’s prompt. This formalism isolates the effect of network topology from the agents’ intrinsic behavior.

Two quantitative metrics are defined. Agent‑level β‑susceptibility measures how strongly an individual agent’s output value score y changes in response to the average value signal ⟨x⟩ present in its input context. By varying the proportion of perturbed predecessor agents (using extreme‑value prompts) and fitting a linear model y = β⟨x⟩ + c, the slope β captures the agent’s intrinsic sensitivity to peer value signals. System‑level susceptibility (SS) quantifies how a localized unit perturbation (Δₚₑᵣₜ = 1) propagates to the final output nodes. SS is defined as the average absolute change in output value scores, normalized by Δₚₑᵣₜ, across all designated output agents.

Perturbations are generated without modifying model weights: for each value k, a prompt pₖ is optimized via the CO‑PRO algorithm to drive the LLM toward an extreme endorsement (score = 10) or rejection (score = 0). These “value‑biased” prompts are fed to a small set of auxiliary agents whose responses are appended to the target agent’s context, thereby simulating a controlled value injection.

Experiments span five backbone models (Qwen‑3‑8B, LLaMA‑3.3‑70B, GPT‑3.5‑Turbo, GPT‑4o, Gemma‑3‑27B), three “openness” persona prompts (high, neutral, low), two levels of input‑context variance (high vs. low), and multiple network topologies (star, chain, complete, random). Key findings include:

- Value‑wise β variation – Normative, widely shared values (e.g., Social Power, True Friendship, Self‑Discipline) exhibit low β (≈ 0.1–0.2), indicating resistance to peer influence. Context‑dependent or expressive values (e.g., Influential, Preserving Public Image, Detachment) show high β (≈ 0.6–0.9), making them prone to drift.

- Persona effect – High‑openness prompts generally increase β across most values, but the magnitude of increase differs per value, suggesting that prompting can modulate susceptibility in a value‑specific manner.

- Input variance – High variability in the surrounding context widens the distribution of β, implying that richer, noisier dialogues amplify susceptibility.

- Topology‑driven SS – Star networks are highly vulnerable when the central node is perturbed (SS > 0.8), whereas chain structures dampen perturbations (SS ≈ 0.2). Random graphs show intermediate behavior, with denser connections correlating with higher SS.

- Model differences – More advanced models (GPT‑4o) tend to have lower β and SS, indicating greater robustness, while smaller or older models (Qwen‑3‑8B) are more easily swayed.

The paper contributes (i) a novel perturbation‑generation pipeline, (ii) two interpretable metrics that separate agent‑level response from network‑level amplification, and (iii) an extensive empirical map of how values, prompts, and structures interact to produce value drift. Limitations include reliance on a static DAG (no cyclic feedback), potential bias in the LLM‑as‑judge scoring, and the need for human validation of the automated value scores.

Overall, ValueFlow moves value‑alignment evaluation beyond isolated prompts, offering a concrete toolset for diagnosing and mitigating value propagation risks in collaborative LLM deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment