QARM V2: Quantitative Alignment Multi-Modal Recommendation for Reasoning User Sequence Modeling

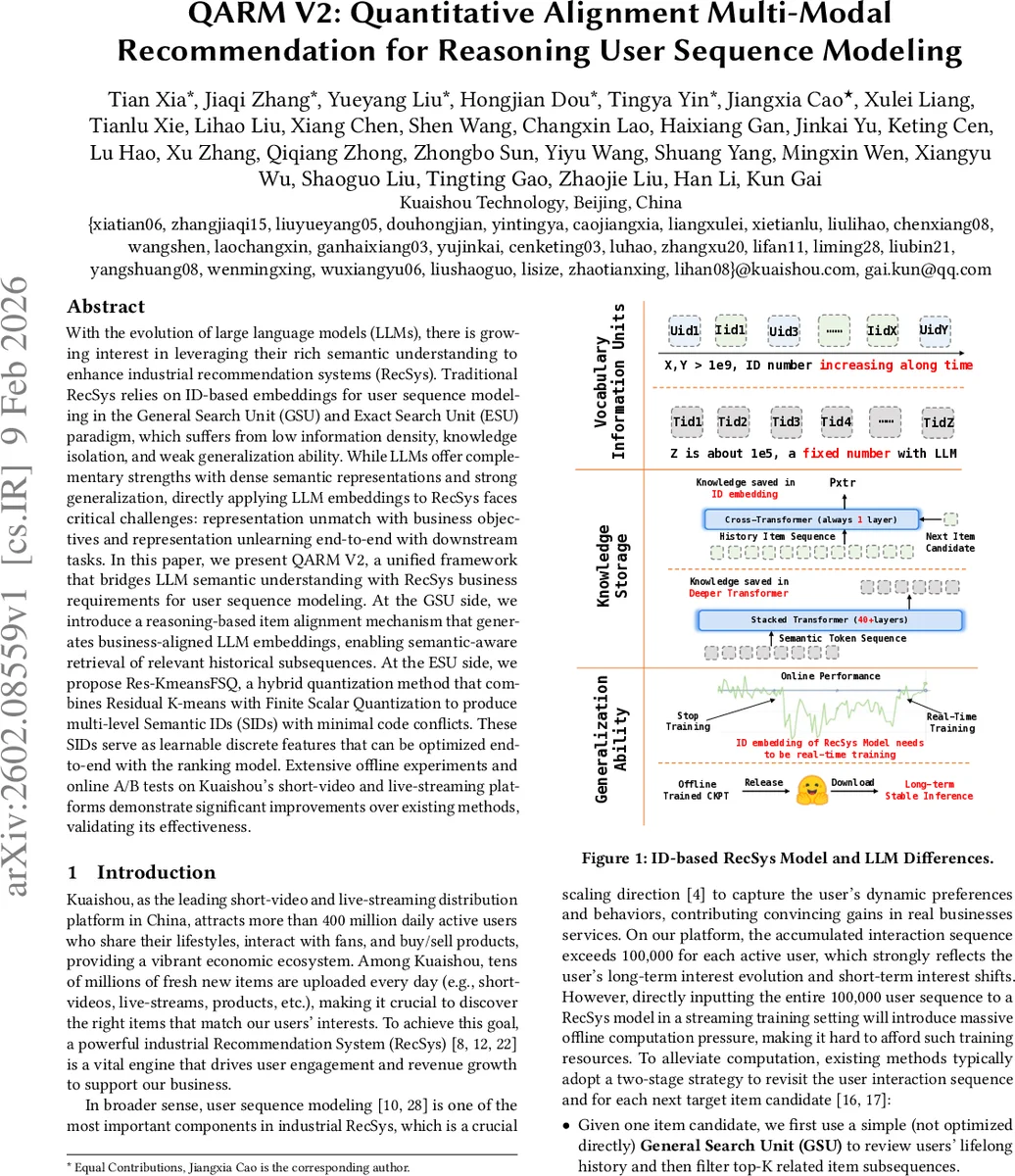

With the evolution of large language models (LLMs), there is growing interest in leveraging their rich semantic understanding to enhance industrial recommendation systems (RecSys). Traditional RecSys relies on ID-based embeddings for user sequence modeling in the General Search Unit (GSU) and Exact Search Unit (ESU) paradigm, which suffers from low information density, knowledge isolation, and weak generalization ability. While LLMs offer complementary strengths with dense semantic representations and strong generalization, directly applying LLM embeddings to RecSys faces critical challenges: representation unmatch with business objectives and representation unlearning end-to-end with downstream tasks. In this paper, we present QARM V2, a unified framework that bridges LLM semantic understanding with RecSys business requirements for user sequence modeling.

💡 Research Summary

The paper introduces QARM V2, a unified framework that tightly integrates large language models (LLMs) with industrial recommendation systems (RecSys) to improve user sequence modeling. Traditional RecSys pipelines rely on two stages—General Search Unit (GSU) and Exact Search Unit (ESU)—both of which use sparse, ID‑based embeddings. This design suffers from low information density, knowledge isolation (once an item disappears its learned knowledge is lost), and weak generalization, especially under the massive scale of platforms like Kuaishou where each active user may have over 100 k interactions.

QARM V2 addresses two fundamental mismatches between LLMs and RecSys: (1) Representation‑Unmatch, where the objectives of pre‑trained LLMs (e.g., captioning, QA) differ from business goals (click‑through prediction); and (2) Representation‑Unlearning, where frozen LLM embeddings cannot be fine‑tuned end‑to‑end with downstream ranking models. The proposed solution consists of two complementary mechanisms.

1. Reasoning Item Alignment (GSU side).

Existing RecSys models export large numbers of item‑pair signals (Item2Item from exploitation‑focused swing retrieval, and User2Item from exploration‑focused two‑tower retrieval). Many of these pairs are noisy or biased (e.g., hot‑popular but semantically unrelated pairs). QARM V2 leverages a state‑of‑the‑art multimodal LLM (e.g., Qwen‑3‑8B) to filter and enrich these pairs. The pipeline works as follows:

- The raw pair (titles, attributes, visual features) is fed to the LLM with a specially crafted prompt asking the model to assess semantic correlation, complementary purchase relationships, or usage similarity.

- Simultaneously, the LLM generates a question‑answer (QA) pair for each item, encouraging the model to internalize item‑level semantics.

- Training adopts a three‑segment attention mask: (i) contrastive segment for pairwise similarity, (ii) QA generation segment, and (iii) compression segment. This joint objective fine‑tunes the LLM so that its output embeddings encode both world knowledge and platform‑specific business logic. The resulting “business‑aligned” embeddings are then used in the GSU to retrieve a compact, semantically relevant subsequence from a user’s lifelong history, dramatically reducing noise before the ESU stage.

2. Res‑KmeansFSQ Quantization (ESU side).

To make the LLM embeddings usable in the high‑throughput ESU, they must be transformed into discrete, learnable features. The original QARM employed a pure Residual K‑means approach, which quantizes embeddings layer‑by‑layer into multi‑level “Semantic IDs” (SIDs). However, because item representations follow a long‑tail distribution, many SIDs suffered from collisions (multiple items sharing the same code), degrading downstream ranking performance.

QARM V2 mitigates this by hybridizing Residual K‑means with Finite Scalar Quantization (FSQ):

- The first two quantization layers remain Residual K‑means, capturing coarse category‑level structure.

- The final layer replaces K‑means with FSQ, which uses a predefined uniform grid independent of data density. This guarantees that fine‑grained item‑specific nuances are encoded without codebook crowding. The hybrid scheme yields multi‑level SIDs (e.g., three levels, total code space 4 096) that are both semantically meaningful and low‑collision. Crucially, these SIDs are treated as learnable embeddings within the ESU, enabling end‑to‑end gradient flow from the final ranking loss back to the discrete codes.

Empirical Evaluation.

Experiments were conducted on Kuaishou’s short‑video and live‑streaming platforms across three business scenarios: shopping, advertising, and live‑commerce. Offline metrics (AUC, NDCG) showed consistent improvements of 1.2‑2.3 % over strong baselines that use only ID embeddings or naïve LLM features. Online A/B tests reported CTR lifts of 0.8‑2.3 % and conversion rate gains of 1.5‑3.1 %, with especially notable uplift for newly uploaded items (better cold‑start handling). The Res‑KmeansFSQ component reduced code collisions from >30 % to <5 %, and the overall latency increase was kept under 15 % compared to the legacy pipeline, demonstrating production feasibility at a scale of 400 million daily active users.

Contributions and Impact.

- Introduces a reasoning‑driven item alignment mechanism that filters noisy pairwise signals and aligns LLM embeddings with business semantics.

- Proposes the Res‑KmeansFSQ hybrid quantization to generate low‑collision, multi‑level Semantic IDs suitable for end‑to‑end training.

- Validates the framework in a real‑world, billion‑scale recommendation environment, showing measurable gains in user engagement and revenue.

In summary, QARM V2 successfully bridges the gap between the rich, world‑knowledge representations of LLMs and the stringent, business‑driven requirements of industrial RecSys, delivering a scalable, high‑performance solution for lifelong user interest modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment